August 20, 2015 6.46pm EDT

The Earth seen from Apollo, a photo now known as the “Blue Marble”. NASA

It is often said that the first full image of the Earth, “Blue Marble”, taken by the Apollo 17 space mission in December 1972, revealed Earth to be precious, fragile and protected only by a wafer-thin atmospheric layer. It reinforced the imperative for better stewardship of our “only home”.

But there was another way of seeing the Earth revealed by those photographs. For some the image showed the Earth as a total object, a knowable system, and validated the belief that the planet is there to be used for our own ends.

In this way, the “Blue Marble” image was not a break from technological thinking but its affirmation. A few years earlier, reflecting on the spiritual consequences of space flight, the theologian Paul Tillich wrote of how the possibility of looking down at the Earth gives rise to “a kind of estrangement between man and earth” so that the Earth is seen as a totally calculable material body.

For some, by objectifying the planet this way the Apollo 17 photograph legitimised the Earth as a domain of technological manipulation, a domain from which any unknowable and unanalysable element has been banished. It prompts the idea that the Earth as a whole could be subject to regulation.

This metaphysical possibility is today a physical reality in work now being carried out on geoengineering – technologies aimed at deliberate, large-scale intervention in the climate system designed to counter global warming or offset some of its effects.

While some proposed schemes are modest and relatively benign, the more ambitious ones – each now with a substantial scientific-commercial constituency – would see humanity mobilising its technological power to seize control of the climate system. And because the climate system cannot be separated from the rest of the Earth System, that means regulating the planet, probably in perpetuity.

Dreams of escape

Geoengineering is often referred to as Plan B, one we should be ready to deploy because Plan A, cutting global greenhouse gas emissions, seems unlikely to be implemented in time. Others are now working on what might be called Plan C. It was announced last year in The Times:

British scientists and architects are working on plans for a “living spaceship” like an interstellar Noah’s Ark that will launch in 100 years’ time to carry humans away from a dying Earth.

This version of Plan C is known as Project Persephone, which is curious as Persephone in Greek mythology was the queen of the dead. The project’s goal is to build “prototype exovivaria – closed ecosystems inside satellites, to be maintained from Earth telebotically, and democratically governed by a global community.”

NASA and DARPA, the US Defense Department’s advanced technologies agency, are also developing a “worldship” designed to take a multi-generational community of humans beyond the solar system.

Paul Tillich noticed the intoxicating appeal that space travel holds for certain kinds of people. Those first space flights became symbols of a new ideal of human existence, “the image of the man who looks down at the earth, not from heaven, but from a cosmic sphere above the earth”. A more common reaction to Project Persephone is summed up by a reader of the Daily Mail: “Only the ‘elite’ will go. The rest of us will be left to die.”

Perhaps being left to die on the home planet would be a more welcome fate. Imagine being trapped on this “exovivarium”, a self-contained world in which exported nature becomes a tool for human survival; a world where there is no night and day; no seasons; no mountains, streams, oceans or bald eagles; no ice, storms or winds; no sky; no sunrise; a closed world whose occupants would work to keep alive by simulation the archetypal habits of life on Earth.

Into the endless void

What kind of person imagines himself or herself living in such a world? What kind of being, after some decades, would such a post-terrestrial realm create? What kind of children would be bred there?

According to Project Persephone’s sociologist, Steve Fuller: “If the Earth ends up a no-go zone for human beings [sic] due to climate change or nuclear or biological warfare, we have to preserve human civilisation.”

Why would we have to preserve human civilisation? What is the value of a civilisation if not to raise human beings to a higher level of intellectual sophistication and moral responsibility? What is a civilisation worth if it cannot protect the natural conditions that gave birth to it?

Those who blast off leaving behind a ruined Earth would carry into space a fallen civilisation. As the Earth receded into the all-consuming blackness those who looked back on it would be the beings who had shirked their most primordial responsibility, beings corroded by nostalgia and survivor guilt.

He’s now mostly forgotten, but in the 1950s and 1960s the Swedish poet Harry Martinson was famous for his haunting epic poem Aniara, which told the story of a spaceship carrying a community of several thousand humans out into space escaping an Earth devastated by nuclear conflagration. At the end of the epic the spaceship’s controller laments the failure to create a new Eden:

“I had meant to make them an Edenic place,

but since we left the one we had destroyed

our only home became the night of space

where no god heard us in the endless void.”

So from the cruel fantasy of Plan C we are obliged to return to Plan A, and do all we can to slow the geological clock that has ticked over into the Anthropocene. If, on this Earthen beast provoked, a return to the halcyon days of an undisturbed climate is no longer possible, at least we can resolve to calm the agitations of “the wakened giant” and so make this new and unwanted epoch one in which humans can survive.

Laymert Garcia dos Santos: ‘Today, shamanism is a high technology of access’ (O Globo)

Holder of a doctorate from the Sorbonne and scholar of the Yanomami, the São Paulo native who staged an opera on indigenous cosmology in Munich came to Rio to teach a class at EAV Parque Lage

“I was born in a town I barely know, Itápolis, a blend of stone (‘ita’), from Guarani, and city (‘polis’), from Greek: a little of the Brazilian essence. I studied in Rio and spent decades in France. I taught for many years in Brazil, working on the relations between technology, culture, environment and art. I am married and have a son who is a pathologist.”

Tell me something I don’t know.

Today, what we used to consider the indigenous supernatural, shamanism, is a high technology of access to virtual worlds, with logics that are not Western but that, in the end, increasingly arrive at a kind of intersection with the rational technoscientific perspective.

At what point does this intersection occur?

Science already knows that an apocalypse is foretold in the Amazon if the devastation continues. For a millennium the Yanomami have spoken of a mythic apocalypse: when there are no more shamans, the sky will fall… for it is they who hold up the sky, together with their “humanimal” auxiliary spirits.

The prophecies converge with cutting-edge ecology…

Yes. And in the opera we made, these two perspectives end up converging on a catastrophic ending. From the Yanomami perspective, the white man is inhuman, a vector of destruction, and produces the xawara, a kind of cannibal smoke that goes on devouring forests, spreading diseases and epidemics, and contaminating rivers.

It must be complex to transpose a cosmology like that to the stages of Munich…

We spent some time in the semi-isolated village of Demini and worked with the shamans, in partnership with the Goethe Institute, Sesc São Paulo, the ZKM (Europe’s largest centre for art and technology), the Munich Biennale of Music Theatre and people from the scientific community.

How do you bring the spirit of the village to an opera stage?

After all the conceptual work in the village, we arrived at a staging of the conflict between the xawara and the shaman. The audience watched while moving around the stage itself, a labyrinth. The shaman was played by the Swiss singer Christian Zehnder, who has worked in Africa and Asia and is one of the few in the world to use the voice over technique, a guttural device that allows two voices to be emitted at the same time. The xawara was played by a great singer, already advanced in years: the English jazz singer Phil Minton.

And what about the technology: was it merely a supporting player in the tragedy?

A sequence of screens was arranged along a long space, and images and lights were projected that translated the phenomena of the forest through algorithms. The audience was lost in the “forest”, with the shaman at one end and the xawara at the other, along with a politician, a missionary and a scientist.

Did the Yanomami see it?

Most of them, no. The opera was not made for them. Even so, the leader Davi Kopenawa and two shamans went to Munich.

And how did they react?

First, they were pleased that an audience was learning, in a serious way, what their cosmology and their thought are. That was the strategic aspect. But at the performance itself they laughed: they think art is a thing for children, not the deep meaning of the phenomenon. For them, the opera was child’s play next to shamanism. A philosophy professor saw a symmetry in this: whites find the Indians childish for their beliefs, and they find us childish for our representations of their reality.

What did the experience bring you as a person?

I went many times. In the early 2000s I chaired an NGO that fought for the defence and preservation of Yanomami territory. Being with them helps us understand not only what the other is, but what we are. It is a kind of privilege. It is a pity that so few people have had, or have, a contact of pure positivity with this world, which for us is almost always experienced in the sphere of the negative. That comes from the education we receive and from the historical tradition of how Brazilians treat the Indians. In Japan, they would be precious, sacred beings.

Stop burning fossil fuels now: there is no CO2 ‘technofix’, scientists warn (The Guardian)

Researchers have demonstrated that even if a geoengineering solution to CO2 emissions could be found, it wouldn’t be enough to save the oceans

“The chemical echo of this century’s CO2 pollution will reverberate for thousands of years,” said the report’s co-author, Hans Joachim Schellnhuber. Photograph: Doug Perrine/Design Pics/Corbis

German researchers have demonstrated once again that the best way to limit climate change is to stop burning fossil fuels now.

In a “thought experiment” they tried another option: the future dramatic removal of huge volumes of carbon dioxide from the atmosphere. This would, they concluded, return the atmosphere to the greenhouse gas concentrations that existed for most of human history – but it wouldn’t save the oceans.

That is, the oceans would stay warmer, and more acidic, for thousands of years, and the consequences for marine life could be catastrophic.

The research, published in Nature Climate Change today, delivers yet another demonstration that there is so far no feasible “technofix” that would allow humans to go on mining and drilling for coal, oil and gas (known as the “business as usual” scenario), and then geoengineer a solution when climate change becomes calamitous.

Sabine Mathesius (of the Helmholtz Centre for Ocean Research in Kiel and the Potsdam Institute for Climate Impact Research) and colleagues decided to model what could be done with an as-yet-unproven technology called carbon dioxide removal. One example would be to grow huge numbers of trees, burn them, trap the carbon dioxide, compress it and bury it somewhere. Nobody knows if this can be done, but Dr Mathesius and her fellow scientists didn’t worry about that.

They calculated that it might plausibly be possible to remove carbon dioxide from the atmosphere at the rate of 90 billion tons a year. This is twice what is spilled into the air from factory chimneys and motor exhausts right now.

The scientists hypothesised a world that went on burning fossil fuels at an accelerating rate – and then adopted an as-yet-unproven high technology carbon dioxide removal technique.

“Interestingly, it turns out that after ‘business as usual’ until 2150, even taking such enormous amounts of CO2 from the atmosphere wouldn’t help the deep ocean that much – after the acidified water has been transported by large-scale ocean circulation to great depths, it is out of reach for many centuries, no matter how much CO2 is removed from the atmosphere,” said a co-author, Ken Caldeira, who is normally based at the Carnegie Institution in the US.

The oceans cover 70% of the globe. By 2500, ocean surface temperatures would have increased by 5C (9F) and the chemistry of the ocean waters would have shifted towards levels of acidity that would make it difficult for fish and shellfish to flourish. Warmer waters hold less dissolved oxygen. Ocean currents, too, would probably change.

But while change happens in the atmosphere over tens of years, change in the ocean surface takes centuries, and in the deep oceans, millennia. So even if atmospheric temperatures were restored to pre-Industrial Revolution levels, the oceans would continue to experience climatic catastrophe.

“In the deep ocean, the chemical echo of this century’s CO2 pollution will reverberate for thousands of years,” said co-author Hans Joachim Schellnhuber, who directs the Potsdam Institute. “If we do not implement emissions reductions measures in line with the 2C (3.6F) target in time, we will not be able to preserve ocean life as we know it.”

New technique estimates crowds by analysing mobile phone activity (BBC Brasil)

3 June 2015

Researchers are looking for more efficient ways to measure crowd size without relying on images

A study at a British university has developed a new way of estimating crowds at protests or other mass events: by analysing geographic data from mobile phones and Twitter.

Researchers at Warwick University, in England, analysed the geolocation of mobile phones and Twitter messages over a two-month period in Milan, Italy.

At two sites with known visitor numbers, a football stadium and an airport, activity on social networks and mobile phones rose and fell in step with the flow of people.

The team said that, using this technique, it can take measurements at events such as protests.

Other researchers stressed that this kind of data has limitations: for example, only part of the population uses smartphones and Twitter, and not every area is well served by phone masts.

But the study’s authors say the results were “an excellent starting point” for more estimates of this kind, with greater precision, in the future.

“These numbers are calibration examples that we can build on,” said the study’s co-author, Tobias Preis.

“Obviously it would be better to have examples from other countries, other settings, other times. Human behaviour is not uniform across the world, but this is a very good basis for getting initial estimates.”

The study, published in the scientific journal Royal Society Open Science, is part of a growing field of research that explores what online activity can reveal about human behaviour and other real-world phenomena.

Scientists compared official visitor figures at an airport and a stadium with Twitter and mobile phone activity

Federico Botta, the PhD student who led the analysis, said the mobile phone-based methodology has important advantages over other methods of estimating crowd size, which usually rely on on-site observations or on images.

“This method is very fast and does not depend on human judgement. It depends only on the data coming from mobile phones or from Twitter activity,” he told the BBC.

Margin of error

With two months of mobile phone data provided by Telecom Italia, Botta and his colleagues focused on Linate airport and the San Siro football stadium, in Milan.

They compared the number of people known to be at those sites at each moment, based on flight schedules and ticket sales for football matches, with three types of mobile phone activity: the number of calls made and text messages sent, the amount of internet data used, and the volume of tweets posted.

“What we saw is that these activities really did behave very similarly to the number of people at the site,” says Botta.

That may not sound so surprising but, especially at the football stadium, the patterns the team observed were so reliable that they could even make predictions.

There were ten football matches during the period of the experiment. Based on the data from nine matches, it was possible to estimate how many people would be at the tenth using only the mobile phone data.

“Our mean absolute percentage error is about 13%. That means that our estimates and the real number of people differ, in absolute terms, by about 13%,” says Botta.
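The hold-one-match-out check and the error measure Botta describes can be sketched roughly as follows. This is only an illustration, not the researchers' code: the proportional model (attendance is approximately a scale factor times phone activity), the function names and every number in the example data are assumptions invented here.

```python
from statistics import mean


def mape(actual, estimated):
    """Mean absolute percentage error, expressed in percent."""
    if len(actual) != len(estimated) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    return 100.0 * mean(abs(a - e) / a for a, e in zip(actual, estimated))


def fit_scale(activity, attendance):
    """Least-squares slope through the origin: attendance ~ scale * activity.

    Assumed model for this sketch; the study may have used something else.
    """
    return sum(x * y for x, y in zip(activity, attendance)) / sum(x * x for x in activity)


def leave_one_out_estimates(activity, attendance):
    """Estimate each match's attendance from a model fitted on all other matches."""
    estimates = []
    for i, held_out_activity in enumerate(activity):
        train_x = activity[:i] + activity[i + 1:]
        train_y = attendance[:i] + attendance[i + 1:]
        estimates.append(fit_scale(train_x, train_y) * held_out_activity)
    return estimates


# Hypothetical data for ten matches: phone-activity units and ticket counts.
activity = [1200, 1500, 900, 2000, 1700, 1100, 1300, 1900, 1600, 1400]
attendance = [36000, 47000, 25000, 61000, 50000, 35000, 38000, 58000, 49000, 41000]

estimates = leave_one_out_estimates(activity, attendance)
print(f"MAPE over held-out matches: {mape(attendance, estimates):.1f}%")
```

Holding out each match in turn keeps the estimate honest: the match being predicted never contributes to the scale factor used to predict it.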

According to the researchers, this margin of error compares well with traditional techniques based on images and human judgement.

They gave the example of the 1995 demonstration in Washington, the US capital, known as the “Million Man March”, where even the most careful analyses produced estimates with 20% error, after initial measurements had ranged from 400,000 to two million people.

The accuracy of the data collected at the football stadium surprised even the research team

According to Ed Manley, of the Centre for Advanced Spatial Analysis at University College London, the technique has potential and people should feel “optimistic, but cautious” about using mobile phone data for these estimates.

“We have these enormous datasets and there is a lot that can be done with them… But we need to be careful about how much we demand of the data,” he said.

He also points out that such information does not reflect a population evenly.

“There are important biases here. Who exactly are we measuring with these datasets?” Twitter, for example, says Manley, has a relatively young and affluent user base.

Beyond these difficulties, the activities to be measured must be chosen with care, because people use their phones differently in different places: more calls at the airport and more tweets at the football, for example.

Another important caveat is that the whole analytical methodology advocated by Botta depends on phone and internet signal, which varies a great deal from place to place, when it is available at all.

“If we are relying on this data to know where people are, what happens when there is a problem with the way the data is collected?” asks Manley.

How Facebook’s Algorithm Suppresses Content Diversity (Modestly) and How the Newsfeed Rules Your Clicks (The Message)

Zeynep Tufekci on May 7, 2015

Today, three researchers at Facebook published an article in Science on how Facebook’s newsfeed algorithm suppresses the amount of “cross-cutting” (i.e. likely to cause disagreement) news articles a person sees. I read a lot of academic research, and usually, the researchers are at pains to highlight their findings. This one buries them as deep as it could, using a mix of convoluted language and irrelevant comparisons. So, first order of business is spelling out what they found. Also, for another important evaluation, with some overlap with this one, go read this post by University of Michigan professor Christian Sandvig.

The most important finding, if you ask me, is buried in an appendix. Here’s the chart showing that the higher an item is in the newsfeed, the more likely it is clicked on.

Notice how steep the curve is. The higher the link, the more (a lot more) likely it will be clicked on. You live and die by placement, determined by the newsfeed algorithm. (The effect, as Sean J. Taylor correctly notes, is a combination of placement and the fact that the algorithm is guessing what you would like). This was already known, mostly, but it’s great to have it confirmed by Facebook researchers (the study was solely authored by Facebook employees).

The most important caveat that is buried is that this study is not about all Facebook users, despite language at the end that’s quite misleading. The researchers end their paper with: “Finally, we conclusively establish that on average in the context of Facebook…” No. The research was conducted on a small, skewed subset of Facebook users who chose to self-identify their political affiliation on Facebook and regularly log on to Facebook, about 4% of the population available for the study. This is super important because this sampling confounds the dependent variable.

The gold standard of sampling is random, where every unit has an equal chance of selection, which allows us to do amazing things like predict elections with tiny samples of thousands. Sometimes, researchers use convenience samples (whomever they can find easily) and those can be okay, or not, depending on how typical the sample ends up being compared to the universe. Sometimes, in cases like this, the sampling affects behavior: people who self-identify their politics are almost certainly going to behave quite differently, on average, than people who do not, when it comes to the behavior in question, which is sharing and clicking through ideologically challenging content. So, everything in this study applies only to that small subsample of unusual people. (Here’s a post by the always excellent Eszter Hargittai unpacking the sampling issue further.) The study is still interesting, and important, but it is not a study that can generalize to Facebook users. Hopefully that can be a future study.

What does the study actually say?

- Here’s the key finding: Facebook researchers conclusively show that Facebook’s newsfeed algorithm decreases ideologically diverse, cross-cutting content people see from their social networks on Facebook by a measurable amount. The researchers report that exposure to diverse content is suppressed by Facebook’s algorithm by 8% for self-identified liberals and by 5% for self-identified conservatives. Or, as Christian Sandvig puts it, “the algorithm filters out 1 in 20 cross-cutting hard news stories that a self-identified conservative sees (or 5%) and 1 in 13 cross-cutting hard news stories that a self-identified liberal sees (8%).” You are seeing fewer news items that you’d disagree with which are shared by your friends because the algorithm is not showing them to you.

- Now, here’s the part which will likely confuse everyone, but it should not. The researchers also report a separate finding that individual choice to limit exposure through clicking behavior results in exposure to 6% less diverse content for liberals and 17% less diverse content for conservatives.

Are you with me? One novel finding is that the newsfeed algorithm (modestly) suppresses diverse content, and another crucial and also novel finding is that placement in the feed is (strongly) influential of click-through rates.

Researchers then replicate and confirm a well-known, uncontested and long-established finding which is that people have a tendency to avoid content that challenges their beliefs. Then, confusingly, the researchers compare whether algorithm suppression effect size is stronger than people choosing what to click, and have a lot of language that leads Christian Sandvig to call this the “it’s not our fault” study. I cannot remember a worse apples to oranges comparison I’ve seen recently, especially since these two dynamics, algorithmic suppression and individual choice, have cumulative effects.

Comparing the individual choice to algorithmic suppression is like asking about the amount of trans fatty acids in french fries, a newly-added ingredient to the menu, and being told that hamburgers, which have long been on the menu, also have trans-fatty acids — an undisputed, scientifically uncontested and non-controversial fact. Individual self-selection in news sources long predates the Internet, and is a well-known, long-identified and well-studied phenomenon. Its scientific standing has never been in question. However, the role of Facebook’s algorithm in this process is a new — and important — issue. Just as the medical profession would be concerned about the amount of trans-fatty acids in the new item, french fries, as well as in the existing hamburgers, researchers should obviously be interested in algorithmic effects in suppressing diversity, in addition to long-standing research on individual choice, since the effects are cumulative. An addition, not a comparison, is warranted.

Imagine this (imperfect) analogy, in which many people were complaining that, say, a washing machine has a faulty mechanism that sometimes destroys clothes. Now imagine a washing machine company research paper which finds this claim is correct for a small subsample of these washing machines, and quantifies that effect, but also looks into how many people throw out their clothes before they are totally worn out, a well-established, undisputed fact in the scientific literature. The correct headline would not be “people throwing out used clothes damages more dresses than the faulty washing machine mechanism.” And if this subsample was drawn from one small factory, located somewhere different from all the other factories that manufacture the same brand, which produced only 4% of the devices, the headline would not refer to all washing machines, and the paper would not (should not) conclude with a claim about the average washing machine.

Also, in passing, the paper’s conclusion appears misstated. Even though the comparison between personal choice and algorithmic effects is not very relevant, the result is mixed, rather than “conclusively establish[ing] that on average in the context of Facebook individual choices more than algorithms limit exposure to attitude-challenging content”. For self-identified liberals, the algorithm was a stronger suppressor of diversity (8% vs. 6%) while for self-identified conservatives, it was a weaker one (5% vs. 17%).
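To make the point about cumulative effects concrete, here is a rough sketch using the four percentages quoted above. It assumes the two stages compound multiplicatively, with individual choice acting on whatever the algorithm has already let through. That is an assumption made for illustration, not something the paper or this post establishes, and the function name is invented here.

```python
def combined_reduction(algorithm_cut, choice_cut):
    """Share of cross-cutting content lost after both stages.

    Assumes the stages compound: choice acts on what the algorithm lets through.
    """
    return 1.0 - (1.0 - algorithm_cut) * (1.0 - choice_cut)


# Figures quoted in the post: (algorithmic suppression, individual choice).
reported = {
    "self-identified liberals": (0.08, 0.06),
    "self-identified conservatives": (0.05, 0.17),
}

for group, (algorithm_cut, choice_cut) in reported.items():
    total = combined_reduction(algorithm_cut, choice_cut)
    print(f"{group}: algorithm {algorithm_cut:.0%}, "
          f"choice {choice_cut:.0%}, combined {total:.1%}")
```

Under that assumption the combined loss is larger than either effect alone for both groups, which is why adding the effects together is more informative than ranking them against each other.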

Also, as Christian Sandvig states in this post, and Nathan Jurgenson in this important post here, and David Lazer in the introduction to the piece in Science explore deeply, the Facebook researchers are not studying some neutral phenomenon that exists outside of Facebook’s control. The algorithm is designed by Facebook, and is occasionally re-arranged, sometimes to the devastation of groups who cannot pay-to-play for that all important positioning. I’m glad that Facebook is choosing to publish such findings, but I cannot but shake my head about how the real findings are buried, and irrelevant comparisons take up the conclusion. Overall, from all aspects, this study confirms that for this slice of politically-engaged sub-population, Facebook’s algorithm is a modest suppressor of diversity of content people see on Facebook, and that newsfeed placement is a profoundly powerful gatekeeper for click-through rates. This, not all the roundabout conversation about people’s choices, is the news.

Late Addition: Contrary to some people’s impressions, I am not arguing against all uses of algorithms in making choices in what we see online. The questions that concern me are how these algorithms work, what their effects are, who controls them, and what are the values that go into the design choices. At a personal level, I’d love to have the choice to set my newsfeed algorithm to “please show me more content I’d likely disagree with” — something the researchers prove that Facebook is able to do.

Software tool allows scientists to correct climate ‘misinformation’ from major media outlets (ClimateWire)

ClimateWire, April 13, 2015.

Manon Verchot, E&E reporter

Published: Monday, April 13, 2015

After years of misinformation about climate change and climate science in the media, more than two dozen climate scientists are developing a Web browser plugin to right the wrongs in climate reporting.

The plugin, called Climate Feedback and developed by Hypothes.is, a nonprofit software developer, allows researchers to annotate articles in major media publications and correct errors made by journalists.

“People’s views about climate science depend far too much on their politics and what their favorite politicians are saying,” said Aaron Huertas, science communications officer at the Union of Concerned Scientists. “Misinformation hurts our ability to make rational decisions. It’s up to journalists to tell the public what we really know, though it can be difficult to make time to do that, especially when covering breaking news.”

An analysis from the Union of Concerned Scientists found that levels of inaccuracy surrounding climate change vary dramatically depending on the news outlet. In 2013, 72 percent of climate-related coverage on Fox News contained misleading statements, compared to 30 percent on CNN and 8 percent on MSNBC.

Through Climate Feedback, researchers can comment on inaccurate statements and rate the credibility of articles. The group focuses on annotating articles from news outlets it considers influential, like The Wall Street Journal or The New York Times, rather than blogs.

“When you read an article it’s not just about it being wrong or right; it’s much more complicated than that,” said Emmanuel Vincent, a climate scientist at the University of California, Merced’s Center for Climate Communication, who developed the idea behind Climate Feedback. “People still get confused about the basics of climate change.”

‘It’s crucial in a democracy’

According to Vincent, one of the things journalists struggle with most is articulating the effect of climate change on extreme weather events. Though hurricanes or other major storms cannot be directly attributed to climate change, scientists expect warmer ocean temperatures and higher levels of water vapor in the atmosphere to make storms more intense. Factors like sea-level rise are expected to make hurricanes more devastating as higher sea levels allow storm surges to pass over existing infrastructure.

“Trying to connect a weather event with climate change is not the best approach,” Vincent said.

Climate Feedback hopes to clarify issues like these. The group’s first task was annotating an article published in The Wall Street Journal in September 2014. In the piece, the newspaper reported that sea-level rise experienced today is the same as sea-level rise experienced 70 years ago. But in the annotated version of the story, Vincent pointed to research from Columbia University that directly contradicted that idea.

“The rate of sea level rise has actually quadrupled since preindustrial times,” wrote Vincent in the margins.

Vincent hopes that tools like Climate Feedback can help journalists learn to better communicate climate research and can make members of the public confident that the information they are receiving is credible. Researchers who want to contribute to Climate Feedback are required to have published at least one climate-related article that passed a peer review.

Many say these tools are particularly important in the Internet era, when masses of information make it difficult for the public to wade through the vast quantities of articles and reports.

“There are big decisions that need to be made about climate change,” Vincent said. “It’s crucial in a democracy for people to know about these issues.”

Preternatural machines (AEON)

by E R Truitt

Robots came to Europe before the dawn of the mechanical age. To a medieval world, they were indistinguishable from magic

E R Truitt is a medieval historian at Bryn Mawr College in Pennsylvania. Her book, Medieval Robots: Mechanism, Magic, Nature, and Art, is out in June.

In 807 the Abbasid caliph in Baghdad, Harun al-Rashid, sent Charlemagne a gift the like of which had never been seen in the Christian empire: a brass water clock. It chimed the hours by dropping small metal balls into a bowl. Instead of a numbered dial, the clock displayed the time with 12 mechanical horsemen that popped out of small windows, rather like an Advent calendar. It was a thing of beauty and ingenuity, and the Frankish chronicler who recorded the gift marvelled how it had been ‘wondrously wrought by mechanical art’. But given the earliness of the date, what’s not clear is quite what he might have meant by that.

Certain technologies are so characteristic of their historical milieux that they serve as a kind of shorthand. The arresting title credit sequence to the TV series Game of Thrones (2011-) proclaims the show’s medieval setting with an assortment of clockpunk gears, waterwheels, winches and pulleys. In fact, despite the existence of working models such as Harun al-Rashid’s gift, it was another 500 years before similar contraptions started to emerge in Europe. That was at the turn of the 14th century, towards the end of the medieval period – the very era, in fact, whose political machinations reportedly inspired the plot of Game of Thrones.

When mechanical clockwork finally took off, it spread fast. In the first decades of the 14th century, it became so ubiquitous that, in 1324, the treasurer of Lincoln Cathedral offered a substantial donation to build a new clock, to address the embarrassing problem that ‘the cathedral was destitute of what other cathedrals, churches, and convents almost everywhere in the world are generally known to possess’. It’s tempting, then, to see the rise of the mechanical clock as a kind of overnight success.

But technological ages rarely have neat boundaries. Throughout the Latin Middle Ages we find references to many apparent anachronisms, many confounding examples of mechanical art. Musical fountains. Robotic servants. Mechanical beasts and artificial songbirds. Most were designed and built beyond the boundaries of Latin Christendom, in the cosmopolitan courts of Baghdad, Damascus, Constantinople and Karakorum. Such automata came to medieval Europe as gifts from foreign rulers, or were reported in texts by travellers to these faraway places.

In the mid-10th century, for instance, the Italian diplomat Liudprand of Cremona described the ceremonial throne room in the Byzantine emperor’s palace in Constantinople. In a building adjacent to the Great Palace complex, Emperor Constantine VII received foreign guests while seated on a throne flanked by golden lions that ‘gave a dreadful roar with open mouth and quivering tongue’ and switched their tails back and forth. Next to the throne stood a life-sized golden tree, on whose branches perched dozens of gilt birds, each singing the song of its particular species. When Liudprand performed the customary prostration before the emperor, the throne rose up to the ceiling, potentate still perched on top. At length, the emperor returned to earth in a different robe, having effected a costume change during his journey into the rafters.

The throne and its automata disappeared long ago, but Liudprand’s account echoes a description of the same marvel that appears in a Byzantine manual of courtly etiquette, written – by the Byzantine emperor himself, no less – at around the same time. The contrast between the two accounts is telling. The Byzantine one is preoccupied with how the special effects slotted into certain rigid courtly rituals. It was during the formal introduction of an ambassador, the manual explains, that ‘the lions begin to roar, and the birds on the throne and likewise those in the trees begin to sing harmoniously, and the animals on the throne stand upright on their bases’. A nice refinement of royal protocol. Liudprand, however, marvelled at the spectacle. He hazarded a guess that a machine similar to a winepress might account for the rising throne; as for the birds and lions, he admitted: ‘I could not imagine how it was done.’

Other Latin Christians, confronted with similarly exotic wonders, were more forthcoming with theories. Engineers in the West might have lacked the knowledge to copy these complex machines or invent new ones, but thanks to gifts such as Harun al-Rashid’s clock and travel accounts such as Liudprand’s, different kinds of automata became known throughout the Christian world. In time, scholars and philosophers used their own scientific ideas to account for them. Their framework did not rely on a thorough understanding of mechanics. How could it? The kind of mechanical knowledge that had flourished since antiquity in the East had been lost to Europe following the decline of the western Roman Empire.

Instead, they talked about what they knew: the hidden powers of Nature, the fundamental sympathies between celestial bodies and earthly things, and the certainty that demons existed and intervened in human affairs. Arthur C Clarke’s dictum that any sufficiently advanced technology is indistinguishable from magic was rarely more apposite. Yet the very blurriness of that boundary made it fertile territory for the medieval Christian mind. In time, the mechanical age might have disenchanted the world – but its eventual victory was much slower than the clock craze might suggest. And in the meantime, there were centuries of magical machines.

In the medieval Latin world, Nature could – and often did – act predictably. But some phenomena were sufficiently weird and rare that they could not be considered of a piece with the rest of the natural world. They therefore were classified as preternatural: literally, praeter naturalis or ‘beyond nature’.

What might fall into this category? Pretty much any freak occurrence or deviation from the ordinary course of things: a two-headed sheep, for example. Then again, some phenomena qualified as preternatural because their causes were not readily apparent and were thus difficult to know. Take certain hidden – but essential – characteristics of objects, such as the supposedly fire-retardant skin of the salamander, or the way that certain gems were thought to detect or counteract poison. Magnets were, of course, a clear case of the preternatural at work.

If the manifestations of the preternatural were various, so were its causes. Nature herself might be responsible – just because she often behaved predictably did not mean that she was required to do so – but so, equally, might demons and angels. People of great ability and learning could use their knowledge, acquired from ancient texts, to predict preternatural events such as eclipses. Or they might harness the secret properties of plants or natural laws to bring about certain desired outcomes. Magic was largely a matter of manipulating this preternatural domain: summoning demons, interpreting the stars, and preparing a physic could all fall under the same capacious heading.

All of which is to say, there were several possible explanations for the technological marvels that were arriving from the east and south. Robert of Clari, a French knight during the disastrous Fourth Crusade of 1204, described copper statues on the Hippodrome that moved ‘by enchantment’. Several decades later, Franciscan missionaries to the Mongol Empire reported on the lifelike artificial birds at the Khan’s palace and speculated that demons might be the cause (though they didn’t rule out superior engineering as an alternative theory).

Moving, speaking statues might also be the result of a particular alignment of planets. While he taught at the cathedral school in Reims, Gerbert of Aurillac, later Pope Sylvester II (999-1003), introduced tools for celestial observation (the armillary sphere and the star sphere) and calculation (the abacus and Arabic numerals) to the educated elites of northern Europe. His reputation for learning was so great that, more than 100 years after his death, he was also credited with making a talking head that foretold the future. According to some accounts, he accomplished this through demonic magic, which he had learnt alongside the legitimate subjects of science and mathematics; according to others, he used his superior knowledge of planetary motion to cast the head at the precise moment of celestial conjunction so that it would reveal the future. (No doubt he did his calculations with an armillary sphere.)

Because the category of the preternatural encompassed so many objects and phenomena, and because there were competing, rationalised explanations for preternatural things, it could be difficult to discern the correct cause. Does a talking statue owe its powers to celestial influence or demonic intervention? According to one legend, Albert the Great – a 13th-century German theologian, university professor, bishop, and saint – used his knowledge to make a prophetic robot. One of Albert’s brothers in the Dominican Order went to visit him in his cell, knocked on the door, and was told to enter. When the friar went inside he saw that it was not Brother Albert who had answered his knock, but a strange, life-like android. Thinking that the creature must be some kind of demon, the monk promptly destroyed it, only to be scolded for his rashness by a weary and frustrated Albert, who explained that he had been able to create his robot because of a very rare planetary conjunction that happened only once every 30,000 years.

In legend, fiction and philosophy, writers offered explanations for the moving statues, artificial animals and musical figures that they knew were part of the world beyond Latin Christendom. Like us, they used technology to evoke particular places or cultures. The golden tree with artificial singing birds that confounded Liudprand on his visit to Constantinople appears to have been a fairly common type of automaton: it appears in the palaces of Samarra and Baghdad and, later, in the courts of central India. In the early 13th century, the sultan of Damascus sent a metal tree with mechanical songbirds as a gift to the Holy Roman Emperor Frederick II. But this same object also took root in the Western imagination: we find writers of fiction in medieval Europe including golden trees with eerily lifelike artificial birds in many descriptions of courts in Babylon and India.

In one romance from the early 13th century, sorcerers use gemstones with hidden powers combined with necromancy to make the birds hop and chirp. In another, from the late 12th century, the king harnesses the winds to make the golden branches sway and the gilt birds sing. There were several different species of birds represented on the king’s fabulous tree, each with its own birdsong, so exact that real birds flocked to the tree in hopes of finding a mate. ‘Thus the blackbirds, skylarks, jaybirds, starlings, nightingales, finches, orioles and others which flocked to the park in high spirits on hearing the beautiful birdsong, were quite unhappy if they did not find their partner!’

Of course, the Latin West did not retain its innocence of mechanical explanations forever. Three centuries after Gerbert taught his students how to understand the heavens with an armillary sphere, the enthusiasm for mechanical clocks began to sweep northern Europe. These giant timepieces could model the cosmos, chime the hour, predict eclipses and represent the totality of human history, from the fall of humankind in the Garden of Eden to the birth and death of Jesus, and his promised return.

Astronomical instruments, like astrolabes and armillary spheres, oriented the viewer in the cosmos by showing the phases of the moon, the signs of the zodiac and the movements of the planets. Carillons, programmed with melodies, audibly marked the passage of time. Large moving figures of people, weighted with Christian symbolism, appear as monks, Jesus, the Virgin Mary. They offered a master narrative that fused past, present and future (including salvation). The monumental clocks of the late medieval period employed cutting-edge technology to represent secular and sacred chronology in one single timeline.

Secular powers were no slower to embrace the new technologies. Like their counterparts in distant capitals, European rulers incorporated mechanical marvels into their courtly pageantry. The day before his official coronation in Westminster Abbey in 1377, Richard II of England was ‘crowned’ by a golden mechanical angel – made by the goldsmiths’ guild – during his coronation pageant in Cheapside.

And yet, although medieval Europeans had figured out how to build the same kinds of complex automata that people in other places had been designing and constructing for centuries, they did not stop believing in preternatural causes. They merely added ‘mechanical’ to the list of possible explanations. Just as one person’s ecstatic visions might equally be attributed to divine inspiration or diabolical trickery, a talking or moving statue could be ascribed to artisanal or engineering know-how, the science of the stars, or demonic art. Certainly the London goldsmiths in 1377 were in no doubt about how the marvellous angel worked. But because a range of possible causes could animate automata, reactions to them in this late medieval period tended to depend heavily on the perspective of the individual.

At a coronation feast for the queen at the court of Ferdinand I of Aragon in 1414, theatrical machinery – of the kind used in religious Mystery Plays – was used for part of the entertainment. A mechanical device called a cloud, used for the arrival of any celestial being (gods, angels and the like), swept down from the ceiling. The figure of Death, probably also mechanical, appeared above the audience and claimed a courtier and jester named Borra for his own. Other guests at the feast had been forewarned, but nobody told Borra. A chronicler reported on this marvel with dry exactitude:

Death threw down a rope, they [fellow guests] tied it around Borra, and Death hanged him. You would not believe the racket that he made, weeping and expressing his terror, and he urinated into his underclothes, and urine fell on the heads of the people below. He was quite convinced he was being carried off to Hell. The king marvelled at this and was greatly amused.

Such theatrical tricks sound a little gimcrack to us, but if the very stage machinery might partake of uncanny forces, no wonder Borra was afraid.

Nevertheless, as mechanical technology spread throughout Europe, mechanical explanations of automata (and machines in general) gradually prevailed over magical alternatives. By the end of the 17th century, the realm of the preternatural had largely vanished. Technological marvels were understood to operate within the boundaries of natural laws rather than at the margins of them. Nature went from being a powerful, even capricious entity to an abstract noun denoted with a lower-case ‘n’: predictable, regular, and subject to unvarying law, like the movements of a mechanical clock.

This new mechanistic world-view prevailed for centuries. But the preternatural lingered, in hidden and surprising ways. In the 19th century, scientists and artists offered a vision of the natural world that was alive with hidden powers and sympathies. Machines such as the galvanometer – to measure electricity – placed scientists in communication with invisible forces. Perhaps the very spark of life was electrical.

Even today, we find traces of belief in the preternatural, though it is found more often in conjunction with natural, rather than artificial, phenomena: the idea that one can balance an egg on end more easily at the vernal equinox, for example, or a belief in ley lines and other Earth mysteries. Yet our ongoing fascination with machines that escape our control or bridge the human-machine divide, played out countless times in books and on screen, suggests that a touch of that old medieval wonder still adheres to the mechanical realm.

30 March 2015

Anthropocene: The human age (Nature)

Momentum is building to establish a new geological epoch that recognizes humanity’s impact on the planet. But there is fierce debate behind the scenes.

11 March 2015

Illustration by Jessica Fortner

Almost all the dinosaurs have vanished from the National Museum of Natural History in Washington DC. The fossil hall is now mostly empty and painted in deep shadows as palaeobiologist Scott Wing wanders through the cavernous room.

Wing is part of a team carrying out a radical, US$45-million redesign of the exhibition space, which is part of the Smithsonian Institution. And when it opens again in 2019, the hall will do more than revisit Earth’s distant past. Alongside the typical displays of Tyrannosaurus rex and Triceratops, there will be a new section that forces visitors to consider the species that is currently dominating the planet.

“We want to help people imagine their role in the world, which is maybe more important than many of them realize,” says Wing.

This provocative exhibit will focus on the Anthropocene — the slice of Earth’s history during which people have become a major geological force. Through mining activities alone, humans move more sediment than all the world’s rivers combined. Homo sapiens has also warmed the planet, raised sea levels, eroded the ozone layer and acidified the oceans.

Given the magnitude of these changes, many researchers propose that the Anthropocene represents a new division of geological time. The concept has gained traction, especially in the past few years — and not just among geoscientists. The word has been invoked by archaeologists, historians and even gender-studies researchers; several museums around the world have exhibited art inspired by the Anthropocene; and the media have heartily adopted the idea. “Welcome to the Anthropocene,” The Economist announced in 2011.

The greeting was a tad premature. Although the term is trending, the Anthropocene is still an amorphous notion — an unofficial name that has yet to be accepted as part of the geological timescale. That may change soon. A committee of researchers is currently hashing out whether to codify the Anthropocene as a formal geological unit, and when to define its starting point.

But critics worry that important arguments against the proposal have been drowned out by popular enthusiasm, driven in part by environmentally minded researchers who want to highlight how destructive humans have become. Some supporters of the Anthropocene idea have even been likened to zealots. “There’s a similarity to certain religious groups who are extremely keen on their religion — to the extent that they think everybody who doesn’t practise their religion is some kind of barbarian,” says one geologist who asked not to be named.

The debate has shone a spotlight on the typically unnoticed process by which geologists carve up Earth’s 4.5 billion years of history. Normally, decisions about the geological timescale are made solely on the basis of stratigraphy — the evidence contained in layers of rock, ocean sediments, ice cores and other geological deposits. But the issue of the Anthropocene “is an order of magnitude more complicated than the stratigraphy”, says Jan Zalasiewicz, a geologist at the University of Leicester, UK, and the chair of the Anthropocene Working Group that is evaluating the issue for the International Commission on Stratigraphy (ICS).

Written in stone

For geoscientists, the timescale of Earth’s history rivals the periodic table in terms of scientific importance. It has taken centuries of painstaking stratigraphic work — matching up major rock units around the world and placing them in order of formation — to provide an organizing scaffold that supports all studies of the planet’s past. “The geologic timescale, in my view, is one of the great achievements of humanity,” says Michael Walker, a Quaternary scientist at the University of Wales Trinity St David in Lampeter, UK.

Walker’s work sits at the top of the timescale. He led a group that helped to define the most recent unit of geological time, the Holocene epoch, which began about 11,700 years ago.

Sources: Dams/Water/Fertilizer, IGBP; Fallout, Ref. 5; Map, E. C. Ellis Phil. Trans. R. Soc. A 369, 1010–1035 (2011); Methane, Ref. 4

The decision to formalize the Holocene in 2008 was one of the most recent major actions by the ICS, which oversees the timescale. The commission has segmented Earth’s history into a series of nested blocks, much like the years, months and days of a calendar. In geological time, the 66 million years since the death of the dinosaurs is known as the Cenozoic era. Within that, the Quaternary period occupies the past 2.58 million years — during which Earth has cycled in and out of a few dozen ice ages. The vast bulk of the Quaternary consists of the Pleistocene epoch, with the Holocene occupying the thin sliver of time since the end of the last ice age.

When Walker and his group defined the beginning of the Holocene, they had to pick a spot on the planet that had a signal to mark that boundary. Most geological units are identified by a specific change recorded in rocks — often the first appearance of a ubiquitous fossil. But the Holocene is so young, geologically speaking, that it permits an unusual level of precision. Walker and his colleagues selected a climatic change — the end of the last ice age’s final cold snap — and identified a chemical signature of that warming at a depth of 1,492.45 metres in a core of ice drilled near the centre of Greenland¹. A similar fingerprint of warming can be seen in lake and marine sediments around the world, allowing geologists to precisely identify the start of the Holocene elsewhere.

Even as the ICS was finalizing its decision on the start of the Holocene, discussion was already building about whether it was time to end that epoch and replace it with the Anthropocene. This idea has a long history. In the mid-nineteenth century, several geologists sought to recognize the growing power of humankind by referring to the present as the ‘anthropozoic era’, and others have since made similar proposals, sometimes with different names. The idea has gained traction only in the past few years, however, in part because of rapid changes in the environment, as well as the influence of Paul Crutzen, a chemist at the Max Planck Institute for Chemistry in Mainz, Germany.

Crutzen has first-hand experience of how human actions are altering the planet. In the 1970s and 1980s, he made major discoveries about the ozone layer and how pollution from humans could damage it — work that eventually earned him a share of a Nobel prize. In 2000, he and Eugene Stoermer of the University of Michigan in Ann Arbor argued that the global population has gained so much influence over planetary processes that the current geological epoch should be called the Anthropocene². As an atmospheric chemist, Crutzen was not part of the community that adjudicates changes to the geological timescale. But the idea inspired many geologists, particularly Zalasiewicz and other members of the Geological Society of London. In 2008, they wrote a position paper urging their community to consider the idea³.

Those authors had the power to make things happen. Zalasiewicz happened to be a member of the Quaternary subcommission of the ICS, the body that would be responsible for officially considering the suggestion. One of his co-authors, geologist Phil Gibbard of the University of Cambridge, UK, chaired the subcommission at the time.

Although sceptical of the idea, Gibbard says, “I could see it was important, something we should not be turning our backs on.” The next year, he tasked Zalasiewicz with forming the Anthropocene Working Group to look into the matter.

A new beginning

Since then, the working group has been busy. It has published two large reports (“They would each hurt you if they dropped on your toe,” says Zalasiewicz) and dozens of other papers.

The group has several issues to tackle: whether it makes sense to establish the Anthropocene as a formal part of the geological timescale; when to start it; and, if it is adopted, what status it should have in the hierarchy of geological time.

When Crutzen proposed the term Anthropocene, he gave it the suffix appropriate for an epoch and argued for a starting date in the late eighteenth century, at the beginning of the Industrial Revolution. Between then and the start of the new millennium, he noted, humans had chewed a hole in the ozone layer over Antarctica, doubled the amount of methane in the atmosphere and driven up carbon dioxide concentrations by 30%, to a level not seen in 400,000 years.

When the Anthropocene Working Group started investigating, it compiled a much longer list of the changes wrought by humans. Agriculture, construction and the damming of rivers are stripping away sediment at least ten times as fast as the natural forces of erosion. Along some coastlines, the flood of nutrients from fertilizers has created oxygen-poor ‘dead zones’, and the extra CO2 from fossil-fuel burning has acidified the surface waters of the ocean by 0.1 pH units. The fingerprint of humans is clear in global temperatures, the rate of species extinctions and the loss of Arctic ice.

The group, which includes Crutzen, initially leaned towards his idea of choosing the Industrial Revolution as the beginning of the Anthropocene. But other options were on the table.

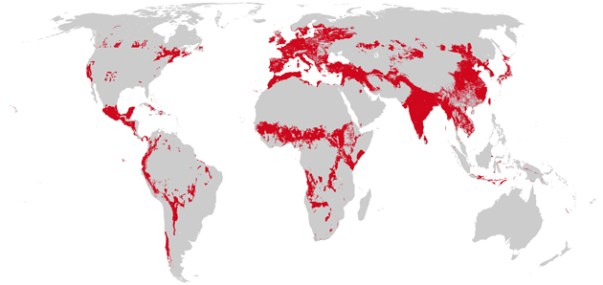

Some researchers have argued for a starting time that coincides with an expansion of agriculture and livestock cultivation more than 5,000 years ago⁴, or a surge in mining more than 3,000 years ago (see ‘Humans at the helm’). But neither the Industrial Revolution nor those earlier changes have left unambiguous geological signals of human activity that are synchronous around the globe (see ‘Landscape architecture’).

This week in Nature, two researchers propose that a potential marker for the start of the Anthropocene could be a noticeable drop in atmospheric CO2 concentrations between 1570 and 1620, which is recorded in ice cores (see page 171). They link this change to the deaths of some 50 million indigenous people in the Americas, triggered by the arrival of Europeans. In the aftermath, forests took over 65 million hectares of abandoned agricultural fields — a surge of regrowth that reduced global CO2.

In the working group, Zalasiewicz and others have been talking increasingly about another option — using the geological marks left by the atomic age. Between 1945 and 1963, when the Limited Nuclear Test Ban Treaty took effect, nations conducted some 500 above-ground nuclear blasts. Debris from those explosions circled the globe and created an identifiable layer of radioactive elements in sediments. At the same time, humans were making geological impressions in a number of other ways — all part of what has been called the Great Acceleration of the modern world. Plastics started flooding the environment, along with aluminium, artificial fertilizers, concrete and leaded petrol, all of which have left signals in the sedimentary record.

In January, the majority of the 37-person working group offered its first tentative conclusion. Zalasiewicz and 25 other members reported⁵ that the geological markers available from the mid-twentieth century make this time “stratigraphically optimal” for picking the start of the Anthropocene, whether or not it is formally defined. Zalasiewicz calls it “a candidate for the least-worst boundary”.

The group even proposed a precise date: 16 July 1945, the day of the first atomic-bomb blast. Geologists thousands of years in the future would be able to identify the boundary by looking in the sediments for the signature of long-lived plutonium from mid-century bomb blasts or many of the other global markers from that time.

A many-layered debate

The push to formalize the Anthropocene upsets some stratigraphers. In 2012, a commentary published by the Geological Society of America⁶ asked: “Is the Anthropocene an issue of stratigraphy or pop culture?” Some complain that the working group has generated a stream of publicity in support of the concept. “I’m frustrated because any time they do anything, there are newspaper articles,” says Stan Finney, a stratigraphic palaeontologist at California State University in Long Beach and the chair of the ICS, which would eventually vote on any proposal put forward by the working group. “What you see here is, it’s become a political statement. That’s what so many people want.”

Finney laid out some of his concerns in a paper⁷ published in 2013. One major question is whether there really are significant records of the Anthropocene in global stratigraphy. In the deep sea, he notes, the layer of sediments representing the past 70 years would be thinner than 1 millimetre. An even larger issue, he says, is whether it is appropriate to name something that exists mainly in the present and the future as part of the geological timescale.

Some researchers argue that it is too soon to make a decision — it will take centuries or longer to know what lasting impact humans are having on the planet. One member of the working group, Erle Ellis, a geographer at the University of Maryland, Baltimore County, says that he raised the idea of holding off with fellow members of the group. “We should set a time, perhaps 1,000 years from now, in which we would officially investigate this,” he says. “Making a decision before that would be premature.”

That does not seem likely, given that the working group plans to present initial recommendations by 2016.

Some members with different views from the majority have dropped out of the discussion. Walker and others contend that human activities have already been recognized in the geological timescale: the only difference between the current warm period, the Holocene, and all the interglacial times during the Pleistocene is the presence of human societies in the modern one. “You’ve played the human card in defining the Holocene. It’s very difficult to play the human card again,” he says.

Walker resigned from the group a year ago, when it became clear that he had little to add. He has nothing but respect for its members, he says, but he has heard concern that the Anthropocene movement is picking up speed. “There’s a sense in some quarters that this is something of a juggernaut,” he says. “Within the geologic community, particularly within the stratigraphic community, there is a sense of disquiet.”

Zalasiewicz takes pains to make it clear that the working group has not yet reached any firm conclusions. “We need to discuss the utility of the Anthropocene. If one is to formalize it, who would that help, and to whom it might be a nuisance?” he says. “There is lots of work still to do.”

Any proposal that the group did make would still need to pass a series of hurdles. First, it would need to receive a supermajority — 60% support — in a vote by members of the Quaternary subcommission. Then it would need to reach the same margin in a second vote by the leadership of the full ICS, which includes chairs from groups that study the major time blocks. Finally, the executive committee of the International Union of Geological Sciences must approve the request.

At each step, proposals are often sent back for revision, and they sometimes die altogether. It is an inherently conservative process, says Martin Head, a marine stratigrapher at Brock University in St Catharines, Canada, and the current head of the Quaternary subcommission. “You are messing around with a timescale that is used by millions of people around the world. So if you’re making changes, they have to be made on the basis of something for which there is overwhelming support.”

Some voting members of the Quaternary subcommission have told Nature that they have not been persuaded by the arguments raised so far in favour of the Anthropocene. Gibbard, a friend of Zalasiewicz’s, says that defining this new epoch will not help most Quaternary geologists, especially those working in the Holocene, because they tend not to study material from the past few decades or centuries. But, he adds: “I don’t want to be the person who ruins the party, because a lot of useful stuff is coming out as a consequence of people thinking about this in a systematic way.”

If a proposal does not pass, researchers could continue to use the name Anthropocene on an informal basis, in much the same way as archaeological terms such as the Neolithic era and the Bronze Age are used today. Regardless of the outcome, the Anthropocene has already taken on a life of its own. Three Anthropocene journals have started up in the past two years, and the number of papers on the topic is rising sharply, with more than 200 published in 2014.

By 2019, when the new fossil hall opens at the Smithsonian’s natural history museum, it will probably be clear whether the Anthropocene exhibition depicts an official time unit or not. Wing, a member of the working group, says that he does not want the stratigraphic debate to overshadow the bigger issues. “There is certainly a broader point about human effects on Earth systems, which is way more important and also more scientifically interesting.”

As he walks through the closed palaeontology hall, he points out how much work has yet to be done to refashion the exhibits and modernize the museum, which opened more than a century ago. A hundred years is a heartbeat to a geologist. But in that span, the human population has more than tripled. Wing wants museum visitors to think, however briefly, about the planetary power that people now wield, and how that fits into the context of Earth’s history. “If you look back from 10 million years in the future,” he says, “you’ll be able to see what we were doing today.”

Nature 519, 144–147 (12 March 2015), doi:10.1038/519144a

- See Editorial page 129

On Reverse Engineering (Anthropology and Algorithms)

Nick Seaver on Jan 27, 2014

Looking for the cultural work of engineers

The Atlantic welcomed 2014 with a major feature on web behemoth Netflix. If you didn’t know, Netflix has developed a system for tagging movies and for assembling those tags into phrases that look like hyper-specific genre names: Visually-striking Foreign Nostalgic Dramas, Critically-acclaimed Emotional Underdog Movies, Romantic Chinese Crime Movies, and so on. The sometimes absurd specificity of these names (or “altgenres,” as Netflix calls them) is one of the peculiar pleasures of the contemporary web, recalling the early days of website directories and Usenet newsgroups, when it seemed like the internet would be a grand hotel, providing a room for any conceivable niche.

Netflix’s weird genres piqued the interest of Atlantic editor Alexis Madrigal, who set about scraping the whole list. Working from the US in late 2013, his scraper bot turned up a startling 76,897 genre names — clearly the emanations of some unseen algorithmic force. How were they produced? What was their generative logic? What made them so good: plausible, specific, with some inexpressible touch of the human? Pursuing these mysteries brought Madrigal to the world of corpus analysis software and eventually to Netflix’s Silicon Valley offices.

The resulting article is an exemplary piece of contemporary web journalism — a collaboratively produced, tech-savvy 5,000-word “long read” that is both an exposé of one of the largest internet companies (by volume) and a reflection on what it is like to be human with machines. It is supported by a very entertaining altgenre-generating widget, built by professor and software carpenter Ian Bogost and illustrated by Twitter mystery darth. Madrigal pieces the story together with his signature curiosity and enthusiasm, and the result feels so now that future corpus analysts will be able to use it as a model to identify texts written in the United States from 2013–14. You really should read it.

As a cultural anthropologist in the middle of a long-term research project on algorithmic filtering systems, I am very interested in how people think about companies like Netflix, which take engineering practices and apply them to cultural materials. In the popular imagination, these do not go well together: engineering is about universalizable things like effectiveness, rationality, and algorithms, while culture is about subjective and particular things, like taste, creativity, and artistic expression. Technology and culture, we suppose, make an uneasy mix. When Felix Salmon, in his response to Madrigal’s feature, complains about “the systematization of the ineffable,” he is drawing on this common sense: engineers who try to wrangle with culture inevitably botch it up.

Yet, in spite of their reputations, we always seem to find technology and culture intertwined. The culturally-oriented engineering of companies like Netflix is a quite explicit case, but there are many others. Movies, for example, are a cultural form dependent on a complicated system of technical devices — cameras, editing equipment, distribution systems, and so on. Technologies that seem strictly practical, like the Māori eel trap pictured above, are influenced by ideas about effectiveness, desired outcomes, and interpretations of the natural world, all of which vary cross-culturally. We may talk about technology and culture as though they were independent domains, but in practice, they never stay where they belong. Technology’s straightforwardness and culture’s contingency bleed into each other.

This can make it hard to talk about what happens when engineers take on cultural objects. We might suppose that it is a kind of invasion: The rationalizers and quantifiers are over the ridge! They’re coming for our sensitive expressions of the human condition! But if technology and culture are already mixed up with each other, then this doesn’t make much sense. Aren’t the rationalizers expressing their own cultural ideas? Aren’t our sensitive expressions dependent on our tools? In the present moment, as companies like Netflix proliferate, stories trying to make sense of the relationship between culture and technology also proliferate. In my own research, I examine these stories, as told by people from a variety of positions relative to the technology in question. There are many such stories, and they can have far-reaching consequences for how technical systems are designed, built, evaluated, and understood.

The story Madrigal tells in The Atlantic is framed in terms of “reverse engineering.” The engineers of Netflix have not invaded cultural turf — they’ve reverse engineered it and figured out how it works. To report on this reverse engineering, Madrigal has done some of his own, trying to figure out the organizing principles behind the altgenre system. So, we have two uses of reverse engineering here: first, it is a way to describe what engineers do to cultural stuff; second, it is a way to figure out what engineers do.

So what does “reverse engineering” mean? What kind of things can be reverse engineered? What assumptions does reverse engineering make about its objects? Like any frame, reverse engineering constrains as well as enables the presentation of certain stories. I want to suggest here that, while reverse engineering might be a useful strategy for figuring out how an existing technology works, it is less useful for telling us how it came to work that way. Because reverse engineering starts from a finished technical object, it misses the accidents that happened along the way — the abandoned paths, the unusual stories behind features that made it to release, moments of interpretation, arbitrary choice, and failure. Decisions that seemed rather uncertain and subjective as they were being made come to appear necessary in retrospect. Engineering looks a lot different in reverse.

This is especially evident in the case of explicitly cultural technologies. Where “technology” brings to mind optimization, functionality, and necessity, “culture” seems to represent the opposite: variety, interpretation, and arbitrariness. Because it works from a narrowly technical view of what engineering entails, reverse engineering has a hard time telling us about the cultural work of engineers. It is telling that the word “culture” never appears in Madrigal’s piece about the contemporary state of the culture industry.

Inspired by Madrigal’s article, here are some notes on the consequences of reverse engineering for how we think about the cultural lives of engineers. As culture and technology continue to escape their designated places and intertwine, we need ways to talk about them that don’t assume they can be cleanly separated.

There is a terrible movie about reverse engineering, based on a short story by Philip K. Dick. It is called Paycheck, stars Ben Affleck, and is not currently available for streaming on Netflix. In it, Affleck plays a professional reverse engineer (the “best in the business”), who is hired by companies to figure out the secrets of their competitors. After doing this, his memory of the experience is wiped and in return, he is compensated very well. Affleck is a sort of intellectual property conduit: he extracts secrets from devices, and once he has moved those secrets from one company to another, they are extracted from him in turn. As you might expect, things go wrong: Affleck wakes up one day to find that he has forfeited his payment in exchange for an envelope of apparently worthless trinkets and, even worse, his erstwhile employer now wants to kill him. The trinkets turn out to be important in unexpected ways as Affleck tries to recover the facts that have been stricken from his memory. The movie’s tagline is “Remember the Future.” You get the idea.

Paycheck illustrates a very popular way of thinking about engineering knowledge. To know about something is to know the facts about how it works. These facts are like physical objects — they can be hidden (inside of technologies, corporations, envelopes, or brains), and they can be retrieved and moved around. In this way of thinking about knowledge, facts that we don’t yet know are typically hidden on the other side of some barrier. To know through reverse engineering is to know by trying to pull those pre-existing facts out.

This is why reverse engineering is sometimes used as a metaphor in the sciences to talk about revealing the secrets of Nature. When biologists “reverse engineer” a cell, for example, they are trying to uncover its hidden functional principles. This kind of work is often described as “pulling back the curtain” on nature (or, in older times, as undressing a sexualized, female Nature — the kind of thing we in academia like to call “problematic”). Nature, if she were a person, would hold the secrets her reverse engineers want.

In the more conventional sense of the term, reverse engineering is concerned with uncovering secrets held by engineers. Unlike its use in the natural sciences, here reverse engineering presupposes that someone already knows what we want to find out. Accessing this kind of information is often described as “pulling back the curtain” on a company. (This is likely the unfortunate naming logic behind Kimono, a new service for scraping websites and automatically generating APIs to access the scraped data.) Reverse engineering is not concerned with producing “new” knowledge, but with extracting facts from one place and relocating them to another.

Reverse engineering (and I guess this is obvious) is concerned with finished technologies, so it presumes that there is a straightforward fact of the matter to be worked out. Something happened to Ben Affleck before his memory was wiped, and eventually he will figure it out. This is not Rashomon, which suggests there might be multiple interpretations of the same event (although that isn’t available for streaming either). The problem is that this narrow scope doesn’t capture everything we might care about: why this technology and not another one? If a technology is constantly changing, like the algorithms and data structures under the hood at Netflix, then why is it changing as it does? Reverse engineering, at best, can only tell you the what, not the why or the how. But it even has some trouble with the what.

Netflix, like most companies today, is surrounded by a curtain of non-disclosure agreements and intellectual property protections. This curtain animates Madrigal’s piece, hiding the secrets that his reverse engineering is aimed at. For people inside the curtain, nothing in his article is news. What is newsworthy, Madrigal writes, is that “no one outside the company has ever assembled this data before.” The existence of the curtain shapes what we imagine knowledge about Netflix to be: something possessed by people on the inside and lacked by people on the outside.

So, when Madrigal’s reverse engineering runs out of steam, the climax of the story comes and the curtain is pulled back to reveal the “Wizard of Oz, the man who made the machine”: Netflix’s VP of Product Innovation Todd Yellin. Here is the guy who holds the secrets behind the altgenres, the guy with the knowledge about how Netflix has tried to bridge the world of engineering and the world of cultural production. According to the logic of reverse engineering, Yellin should be able to tell us everything we want to know.

From Yellin, Madrigal learns about the extensiveness of the tagging that happens behind the curtain. He learns some things that he can’t share publicly, and he learns of the existence of even more secrets — the contents of the training manual which dictate how movies are to be entered into the system. But when it comes to how that massive data and intelligence infrastructure was put together, he learns this:

“It’s a real combination: machine-learned, algorithms, algorithmic syntax,” Yellin said, “and also a bunch of geeks who love this stuff going deep.”

This sentence says little more than “we did it with computers,” and it illustrates a problem for the reverse engineer: there is always another curtain to get behind. Scraping altgenres will only get you so far, and even when you get “behind the curtain,” companies like Netflix are only willing to sketch out their technical infrastructure in broad strokes. In more technically oriented venues or the academic research community, you may learn more, but you will never get all the way to the bottom of things. The Wizard of Oz always holds on to his best secrets.