www1.folha.uol.com.br

Jun 25, 2024, 11:00 p.m.

All of us humans live in the same world and have similar experiences. For that reason, every language spoken on the planet has the same basic categories for expressing ideas and objects, reflecting this shared human experience.

This notion was defended for years by many linguists. But according to American linguist Caleb Everett, when we look at languages more closely, we find that many basic concepts are not universal and that speakers of different languages see and think about the world differently.

In a new book, based on many languages he researched in the Brazilian Amazon, Everett shows that many cultures do not think about time, space or numbers in the same way.

Some languages have many words to describe a concept such as time. Others, such as Tupi Kawahib, do not even have a definition of time.

Perhaps few people are better placed to think about this problem than Everett. Born in the United States, he had an unusual childhood in the 1980s, dividing his time between his home country, public schools in São Paulo and Porto Velho, and indigenous villages deep in the Amazon, in Rondônia.

Caleb is the son of the American Daniel Everett, who came to Brazil in the 1970s as a Christian missionary to translate the Bible into Pirahã, a language spoken today by about 300 indigenous Brazilians.

Daniel came to help convert the indigenous people but ended up being converted himself: he abandoned religion and devoted himself to studying Pirahã, earning a doctorate in linguistics at Unicamp.

From an early age, Caleb accompanied his father and mother (who was also a missionary) on missions in the Brazilian Amazon. He lived among the indigenous people, spending part of his childhood fishing and playing with them in the forest.

Back in the US, he graduated and went to work in the financial market. But one question always bothered him: interested in psychology, he read in scientific journals that the way humans learn and understand numbers is universal.

“Not all humans think that way. I have the great privilege of knowing some of the indigenous peoples of Brazil who do not think that way,” says Everett.

Increasingly interested in researching the indigenous people he had met in his childhood, he decided to change course. He left the financial world, earned a doctorate and returned to Rondônia, where he set out to investigate Amazonian languages.

That research led to his first book, published in 2017, “Numbers and the Making of Us: Counting and the Course of Human Cultures.” In it, Caleb Everett argues that numbers are not a natural or innate concept for human beings, and that they vary so widely across cultures and languages that it is impossible to say there is a universal, “natural” way for humans to learn quantities.

He recently released another book that returns to the theme. In “A Myriad of Tongues: How Languages Reveal Differences in How We Think,” Everett says we have grown used to believing that all the world's languages use universal categories to classify ideas and objects, since human experience is limited to a few aspects common to all cultures.

After all, every one of us, regardless of where we were born, counts quantities, remembers the past, plans for the future and uses geographic landmarks to find our way.

But according to Everett, not all languages reflect the world in this way. There are languages, such as Pirahã, which he learned in childhood, that do not even have precise numbers. Some languages have only two verb tenses (future and non-future), while others have seven.

These discrepancies are much more than mere cultural differences, Caleb argues. They determine, in a profound way, how each human being perceives and thinks about the world.

For one people, some notions of time may be not only irrelevant but almost incomprehensible. Other peoples, meanwhile, may have a more sophisticated understanding of time.

To understand this, linguists like Caleb are poring over many languages that were not properly studied in the past, especially in the Amazon. Technology and the ease of travel in today's world have accelerated linguists' work.

But they are racing against time, since modernity is “killing” languages at an ever faster pace, with indigenous peoples finding it increasingly difficult to support themselves without learning other languages.

The study of Amazonian languages is also challenging long-held scholarly notions about how humans speak. This debate brings to mind a famous academic dispute between his father, Daniel, and the American linguist Noam Chomsky over the Pirahã language, from Rondônia, the very one Caleb learned as a child.

Chomsky is famous for proposing the concept of “universal grammar,” the idea that all human languages share a common structure, regardless of where they develop.

But Daniel Everett says the Pirahã language disproves Chomsky's thesis. Pirahã, he argues, lacks recursion, something Chomsky says is inherent to all languages and therefore universal. Recursion is when one clause is embedded inside another, as in: “The police officer who arrested the thief who robbed a house is at the station.”

This is one of the most heated debates in linguistics. Chomsky went so far as to call Daniel Everett a charlatan and suggested that his research on the Pirahã was falsified, since for years Daniel was the only academic who spoke the language.

In an interview with BBC News Brasil, Caleb said he believes this debate is fading into the past, given the technological advances under way in linguistics. More than 7,000 languages are spoken in the world today, and thanks to advances such as data science and machine learning, linguists are expanding their understanding of these languages at unprecedented speed.

The result, according to Caleb, is that some classic notions from the linguistics of the 1970s can finally be put to the test, and many of them are not passing.

Below is the interview Caleb Everett gave to BBC News Brasil, in which he talks about his experiences in the Brazilian Amazon, the debate over how languages shape the world we experience, and advances in the study of languages today.

Your book suggests that we are gaining a better understanding of the more than 7,000 languages spoken in the world today. What are linguists learning from these lesser-known languages?

We are learning a lot. What is clear is that languages differ from one another far more than we thought. We used to assume that there was this diversity among languages, but that behind it there was some kind of universal component, something all languages shared.

And what we are finding, as we look at more and more languages, is that they differ in very profound ways that were not predicted by some of the theoretical models of linguistics from the 1960s and 1970s.

There are some things in common, of course. We all have the same ears, the same mouths and the same brains.

Those similarities between languages exist, but not because something is genetically programmed into language.

Much of your work is based on Amazonian languages that you have studied for a long time. What have you learned from them specifically?

The Amazon is truly fascinating because, although other regions of the world such as New Guinea or West Africa have more languages, the languages of the Amazon are often entirely unrelated to one another.

There are a few hundred languages, but there are dozens of language families, such as Tupi or Arawak, as well as some isolated languages that have no known “relatives.”

Some are totally distinct from each other and are only 100 kilometers apart.

The Amazon is a kind of fascinating microcosm of the linguistic diversity that exists in the world.

And we can learn a great deal about the many ways humans communicate just by looking at the people of the Amazon.

I think we in the West are often guilty of a kind of homogenization of these groups. We sort of lump them, their languages and their cultures, together.

In the Amazon, what did you find that supports this idea that people think differently because they speak differently?

One area where the languages of this region have produced insights is how people think about time.

In English and in many other languages, we tend, for example, to use metaphors in which the future is in front of us and the past is behind us.

But there are some groups in the Amazon that do not talk about time that way.

There is a famous case, the Tupi Kawahib language, in which speakers do not talk about time in terms of space at all.

While a language like English has three tenses, some languages have as many as seven. They divide time up in very different ways.

So this is not just about superficial things, such as “they talk about plants and animals differently.”

That is true to a certain extent. But what interests me most, and the focus of the book, is these fundamental aspects of human thought.

How we think about quantities, how we think about space, how we think about time, how humans develop these capacities, and how that seems to vary in some respects across cultures.

In your book, you give the example of an English sentence with many references to time: “Last Monday I ran for half an hour, as I do every week.” You say that some of the languages you study do not have all the resources needed to frame time this way, while others have seven verb tenses. Are these languages less or more sophisticated than the ones we are used to?

You see languages that perhaps pay attention to time in ways that we do not.

If your language has only past, present and future, then when you speak you just need to indicate which of those three tenses applies.

But if you have seven tenses, which may include something like a very distant past or a very distant future, then you have to pay attention to those temporal aspects, perhaps in more subtle ways.

Which language was that?

It is a language called Yagua [spoken in the Peruvian Amazon]. Although there are many languages that have five or six tenses, there are some that have no verb tense at all.

One of the languages I worked on in the Amazon, Karitiana, has two tenses: future and non-future. It is spoken in the state of Rondônia. That is a fairly common tense system. But take the example you recalled, about a 30-minute run I did yesterday or last week. Let's think about that sentence. What are 30 minutes? Minutes are something very culturally and linguistically defined. The minute comes from a base-60 number system that goes back to Mesopotamia, which is why we divide our hour into 60 parts and then divide again to get seconds. These are very arbitrary cultural things that we learn, and they seem natural to us as we learn to tell the time.

But they are really unnatural for many people.

So you can imagine talking to an Amazonian who has never come across the concept of hours, minutes or the week, which is also culturally constructed. There are so many very specific cultural traditions embedded in that one sentence, and they affect how we think.

Think about how much of your day is dictated by looking at clocks and thinking about where you have to be at a given hour and minute. All of that is arbitrary.

Many cultures do without these notions entirely. These things are encoded in the language children learn from an early age, and they shape how we think about the passage of time. It all seems totally natural to us until you are confronted with someone for whom these concepts are totally unnatural, and you realize: “This is an intelligent human, and they do not need these concepts.”

That is not to say the concepts are useless. I think they are very useful, but they are useful in our cultural context. They are just one way of thinking about the world. They are not “the” way of thinking about the world.

Let's take, for example, the language you mentioned that has seven tenses. What do you notice that is different in the way its speakers think or in how their society works?

Part of it, I would say, is arbitrary.

But what some researchers have tried to do is test this experimentally: do these linguistic differences affect how people think about time in general, even when they are not speaking?

And there is now a good amount of evidence that they do.

Take the example of the future being in front of you and the past behind you.

There is now a good amount of experimental evidence that, in languages where the past is in front of you and the future is behind you, people think about time differently, even when they are not speaking.

Basic experiments have shown that when people talk about the future in some of these languages they point backward, and when they talk about the past they point forward, whereas English speakers do the opposite.

We tend to think that we are walking toward the future, whereas for many of these cultures it is the other way around. And if you think about it, it makes sense, because you can see the past. You see what you ate for breakfast. You know what happened yesterday. But the future is more or less unknown to us, so this kind of basic metaphor of vision, of seeing the past and not seeing the future, is the basis of how people think about time. And in some of these cultures, this way of thinking about time emerges even in non-linguistic contexts.

You had a very interesting and unusual childhood, having spent much of it with indigenous people in Brazil. What was that experience like?

I have fond memories of my childhood and of Brazil. I spent much of my childhood in the Pirahã village with my two sisters and my parents.

But I also spent time in Brazilian public schools, going back and forth and occasionally visiting the US.

My childhood was a mix of being in the village in the middle of the jungle, being in Brazilian cities and then occasionally being in American cities.

The cities were Porto Velho and São Paulo, and also Campinas, because my father ended up doing his doctorate at Unicamp.

The memories of being in the jungle are generally very good. I look back now and think I would never do that with my son (laughs), when I think about the risks we ran. We all caught malaria. It is easy to look back fondly when everyone survived.

But because we all survived, I have fond memories of being in the village, swimming in the river with my indigenous friends and hunting or fishing with my sisters. I also remember some of the negative aspects, such as the exploitation of indigenous people by local traders.

Overall, it was a very positive childhood and I have great memories of being in the jungle.

You mentioned the Pirahã language, which has been at the center of a rather famous debate in linguistics between your father and the famous American linguist Noam Chomsky. The exchange of words in that intellectual debate became quite fierce. Your work seems closely related to this question, which is central to linguistics. How do you see such a controversial debate?

It is a very controversial debate. I like to think that, in a way, science has moved past some of these debates and the field has become more empirical. My father was certainly one of the people who contributed to that. Over the last few decades, many researchers have brought in data from different languages in Australia, the Amazon and Africa that do not seem to agree with the models that Chomsky and others promoted in the 1960s and 1970s. And those models became very influential.

In defense of those models, they seemed to work very well at first. But as more and more exceptions emerge, things just do not seem to fit. And you have to ask: what is the use of this model?

The model is based, to a large extent, on English.

The new crop of researchers, my generation and the next one, is not very satisfied with the models from the 1960s and 1970s. And that is not an insult.

This happens in many fields. Things evolve.

And I think we have now moved past it, to the point where it is no longer the central debate in linguistics.

But it still stirs up strong emotions. Do you think linguistics will end up leaving universal grammar behind?

The idea of universal grammar has changed a lot. If you go back and look at the studies from the 1960s and 1970s, they made very big predictions. Now the predictions are almost impossible to prove false.

They say: all humans have a language, so there must be a universal grammar.

It is so vague that you cannot disagree with it, but in my opinion it no longer helps anyone make any predictions.

But I also say this because the researchers I really respect now, who are perhaps 10 years younger than me and are doing cutting-edge research, do not seem to be taking this debate into account in their work.

Instead, they are focused on running really good experiments, using big data, data science and computer programming, which have become central to the work we do.

And that is true not only of linguistic research.

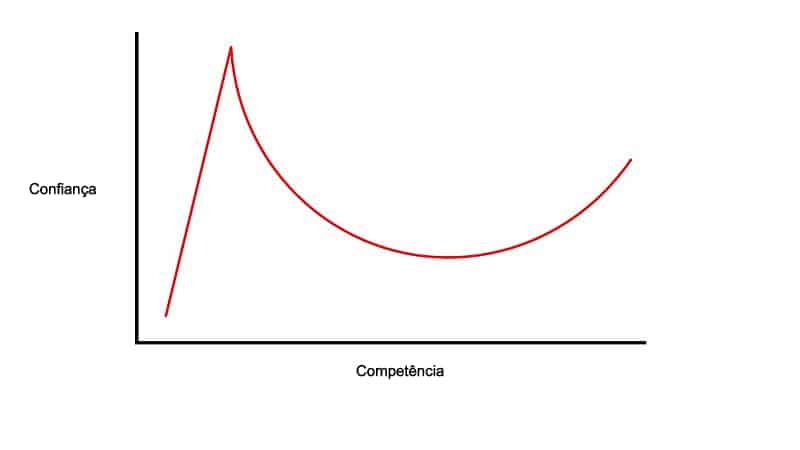

When people invest decades of their lives in any particular theoretical model in any discipline, they tend to be quite biased individuals.

And there is an old saying that science changes one retirement at a time. In a way, I think that is true. It takes time.

We like to think we are objective, but in fact we are not. After we have invested decades in a particular view and promoted it, it takes a truly big person to say: “You know, I have been wrong for the last 30 years and I need to acknowledge that in the face of so much evidence.”

I am not going to stand around waiting for that to happen. I think it is just a gradual social change within a discipline.

Recent technology has accelerated the study of languages. But many of these languages are also dying quickly. Is there a race against time to study them before they die?

Yes. I think there is a lot of language documentation going on around the world, and sometimes I think we researchers are actually a bit selfish: we want to get all this data before it disappears, or we want to keep people speaking their languages.

In the Amazon, for example, you see that there are some indigenous groups for whom keeping their language really matters a great deal, and others for whom it does not seem to matter much.

And who are we to tell them it should matter?

I think it sometimes matters to me because I have a selfish interest in wanting more languages, and looking at this data is fascinating for me and for my career.

But yes, unfortunately, some of these languages are dying.

They are dying today mainly for economic reasons. If groups in Brazil and elsewhere want their children to be economically viable, given shrinking reserves and the growing difficulty of surviving on hunting and fishing, those people have to speak Portuguese, Spanish or English.

Depending on the context they find themselves in, the economic pressures on some of these individual groups are so strong that most models suggest many of these languages will disappear within the next 100 years.

Over the course of your life, have you seen Amazonian languages die or come close to dying?

Yes. One example that comes to mind is the Suruí language, which is also spoken in Rondônia and still has speakers. The missionary who was one of the first to contact them in the 1960s used to say it was a vibrant language, but now many of these people speak mainly Portuguese.

And if you look at the proportion of children who are learning the language as their first language, you see that it is declining. That is usually the best indicator of whether a language will survive.

For many of these languages, there are simply not many children learning them.

There are other languages that we have seen die completely.

One that comes to mind is a language called Orouim, which was spoken on the Brazilian border with Bolivia.

But there are many examples of languages that ended up dying, or of languages that still have many speakers but where the proportion of Portuguese speakers has risen sharply among children. You see this in the Xingu park, for example. Many of the languages are still spoken, but the children often speak mainly Portuguese.

And with the death of languages, is humanity losing diversity in its ways of thinking about the world?

One of the things we have discovered, and which is mentioned in the book, is that several groups have been shown to have rich vocabularies for smells.

That is another thing we used to believe: “We humans do not have abstract words for smells.”

But it turns out that a number of languages documented in the last 10 years have rich, abstract words for smells.

As these languages die, and some of them are on the brink of extinction, we are losing something critical about how humans think about smells and how they can talk about them. If we lose that, we lose a bit of how humans think about the smells they perceive.

As languages die, we are losing something basic in our understanding of how humans think about the sensations they feel.

You compare widely spoken languages with little-known ones to illustrate how people can think in different ways. But does this difference in ways of thinking about the world exist even between widely spoken languages? For example, does a Chinese person think about the world differently from a German because of the language they speak?

It is always hard to know how much of this is culture and how much is language. But in the Chinese case, for example, there has been some fascinating research showing that Mandarin speakers seem to think about time differently from English speakers, because the metaphors they use for time are somewhat different.

Chinese speakers use vertical metaphors, in which time is falling, as opposed to the horizontal metaphor of the future being in front of you, as in English.

Another example involving Chinese speakers is quantitative cognition, that is, how people think about quantities.

English speakers, for example, tend to be a little slower than Chinese speakers at learning numbers, because of numbers such as 11 (“eleven”) and 12 (“twelve”).

In English, the teens from 13 onward follow a predictable pattern: “thirteen” (13) and “fourteen” (14) join the numbers three and four with the word for ten (“teen”). But that does not happen with the words “eleven” (11) and “twelve” (12).

In languages like Chinese this is more transparent. For the teens, you learn the combinations “one-ten,” “two-ten,” and so on.

That would help explain why Chinese children do slightly better, earlier, on some addition exercises than English-speaking children.

A commonly cited example in linguistics is that Eskimos have more than 50 words for snow, since it is something important in their culture. Is that a false example?

That has become a fun thing for linguists to mock.

It got to the point where the New York Times published an article saying that Eskimos have hundreds of words for snow, and that is simply not true.

However, the core idea behind that falsehood is not inaccurate: people live in very different environments. It is no surprise that some Amazonian groups have no words for snow.

There is now some evidence that the terms a language has for things in the environment can affect how people think about those external things.

You mention that WEIRD societies (Western, educated, industrialized, rich and democratic) are not a good population from which to generalize about humanity's capacities. Why is that?

It was psychology researchers, about 15 years ago, who coined the acronym WEIRD.

And I think it is a very clever way of putting it. People are aware these days that, if there are about 7,000 distinct languages and cultures in the world, it is problematic for us to keep looking again and again only at what Americans, Britons or even Japanese people think, and then generalize that this is how humans think.

We [in WEIRD countries] are a small sample of human diversity.

And on top of that, we are not representative.

One reason is that studies show that literacy, for example, changes the makeup of the brain.

This happens as people learn to read and write and become focused on two-dimensional images. Children do this over and over with books and screens, and it has certain cognitive effects.

But from the perspective of human history, if we think on longer timescales, humans left Africa roughly 100,000 years ago, in different waves.

They walked across the whole world and reached many places, including the south of South America, 20,000 years ago.

During that time, we developed very different ways of thinking.

In the European strand, agriculture is only about 8,000 years old and industrialization is only a few hundred years old.

And widespread literacy, in which everyone is expected to read and write, is a recent phenomenon.

When we use people from WEIRD countries to generalize about how humans think, we are looking at just one specific strand of humans that developed in one particular part of the world during only a few thousand years of that whole 100,000-year history.

From a historical perspective, it is a very small part of the story.

Obviously, it is incredibly influential today because these groups became colonizing powers and changed how the world works.

But from a historical and anthropological perspective, it is only part of the picture. And sometimes it is an unrepresentative part.

We have to seek a less biased sample of how humans speak and think.

You have spent years among indigenous Brazilians. In your view, has their life improved or worsened over the years?

That is a difficult question. It depends on the context. I think that in many respects their life has gotten worse. But it depends on who you talk to. And I do not like to project my own opinion about whether the situation has worsened.

Obviously, the biggest event in the history of indigenous populations in Brazil and elsewhere was the introduction of diseases that decimated many of them, and in many ways they have never recovered from that devastation.

One thing remains very important: having access to good medicine. When you meet any indigenous mother, regardless of her cultural background, she wants her child to be healthy.

And health care remains truly inadequate. Some have criticized the Brazilian government for this, and I think rightly so, for not giving enough priority to the health of indigenous peoples, despite the creation of various agencies that have tried to do so.

Do indigenous communities have the power to steer their own course? Or are they being steered by others?

My opinion is that they are mostly being steered. The forces acting on their lives are much greater than any kind of freedom they have.

That is the case, for example, with a people I know well, the Karitiana. They have a huge reserve. Some poor Brazilians even envy them and think: “Why do they have so much land and I do not?”

But if you take a reserve like that, it is surrounded by Brazilians. That means the hunting, the animals and the fishing are simply not what they used to be. Even though it is a large piece of land, there are not enough animals and fish to subsist on.

So now these people are forced to go to places like Porto Velho to try to make a living selling handicrafts. That creates all kinds of problems, and it puts people in contact with aspects of Brazilian culture they may not have been prepared for. Sometimes they may have no tolerance for alcohol, or they face things they did not face in the village, and now you have children who are out there. It is fundamentally an economic matter.

I know that some of them want to live on the reserve and have a more traditional life. That is true even of some of the younger ones. But it is simply not viable.

What is the day-to-day work of a linguist in Brazil like?

Much of the work involves me sitting in front of a computer, programming. As a result, I have sort of moved away from fieldwork, but that also happened because I had a son and we did not want to keep taking him to the village.

Going back to the early 2000s, when I was doing my doctorate, I spent a lot of time in the city of Porto Velho, doing research every day with friends who spoke the language, recording their voices and analyzing them. I focused mainly on sound patterns that I found quite interesting.

You can then analyze that with acoustic software, but my day-to-day was riding a motorcycle, walking through the jungle, talking to them, interviewing them. It was a lot of fun.

The research itself, going back to the computer and looking at these patterns, is about trying to figure some of them out, because the language is really complex in certain respects. Even though some people had studied the language before, it is really quite mentally draining.

Do you still keep in touch with friends there?

Yes. I do not go back as often, although I hope to return next year for a long stay.

I am in contact with some of these people by email, and many of them are on Facebook now.

The funny thing is that I am not on social media much, but they are. So if I want to follow them, I may have to log in to Facebook for the first time in years and see what is going on. But I keep in touch by email.

In Portuguese?

Yes, in Portuguese. Sometimes they write in their own language and I have to try to remember it, because I am out of practice. And that is not something you can run through Google Translate for help (laughs).

Artificial intelligence is helping in the study of new languages. But it is also changing the languages we speak. What dangers does artificial intelligence pose to our languages?

I think it is exacerbating a trend that has existed for a long time: the largest languages are becoming even more influential.

That happens, for example, with large language models, which underpin technologies such as ChatGPT.

These models are fed huge amounts of data, and that can only be done for the few widely spoken languages in the world.

The world has about 7,400 languages, and only a few dozen of them have enough data to inform these models.

Maybe one day there will be a way to collect enough data. That makes me optimistic that artificial intelligence could be used in place of field linguists to collect large amounts of data from these indigenous groups, assuming they agree to it, in order to record their languages and then analyze them in new ways.

That part is not yet possible, but part of me is optimistic that it will become possible in the coming decades and that it could really help preserve some of these languages.

But right now I would say that much of the technology based on large language models just creates a bigger “pool” for these very large languages.

This text was originally published here.
