Since the 2008 financial crisis, colleges and universities have faced increased pressure to identify essential disciplines, and cut the rest. In 2009, Washington State University announced it would eliminate the department of theatre and dance, the department of community and rural sociology, and the German major – the same year that the University of Louisiana at Lafayette ended its philosophy major. In 2012, Emory University in Atlanta did away with the visual arts department and its journalism programme. The cutbacks aren’t restricted to the humanities: in 2011, the state of Texas announced it would eliminate nearly half of its public undergraduate physics programmes. Even when there’s no downsizing, faculty salaries have been frozen and departmental budgets have shrunk.

But despite the funding crunch, it’s a bull market for academic economists. According to a 2015 sociological study in the Journal of Economic Perspectives, the median salary of economics teachers in 2012 increased to $103,000 – nearly $30,000 more than sociologists. For the top 10 per cent of economists, that figure jumps to $160,000, higher than the next most lucrative academic discipline – engineering. These figures, stress the study’s authors, do not include other sources of income such as consulting fees for banks and hedge funds, which, as many learned from the documentary Inside Job (2010), are often substantial. (Ben Bernanke, a former academic economist and ex-chairman of the Federal Reserve, earns $200,000-$400,000 for a single appearance.)

Unlike engineers and chemists, economists cannot point to concrete objects – cell phones, plastic – to justify the high valuation of their discipline. Nor, in the case of financial economics and macroeconomics, can they point to the predictive power of their theories. Hedge funds employ cutting-edge economists who command princely fees, but routinely underperform index funds. Eight years ago, Warren Buffett made a 10-year, $1 million bet that a portfolio of hedge funds would lose to the S&P 500, and it looks like he’s going to collect. In 1998, a fund that boasted two Nobel Laureates as advisors collapsed, nearly causing a global financial crisis.

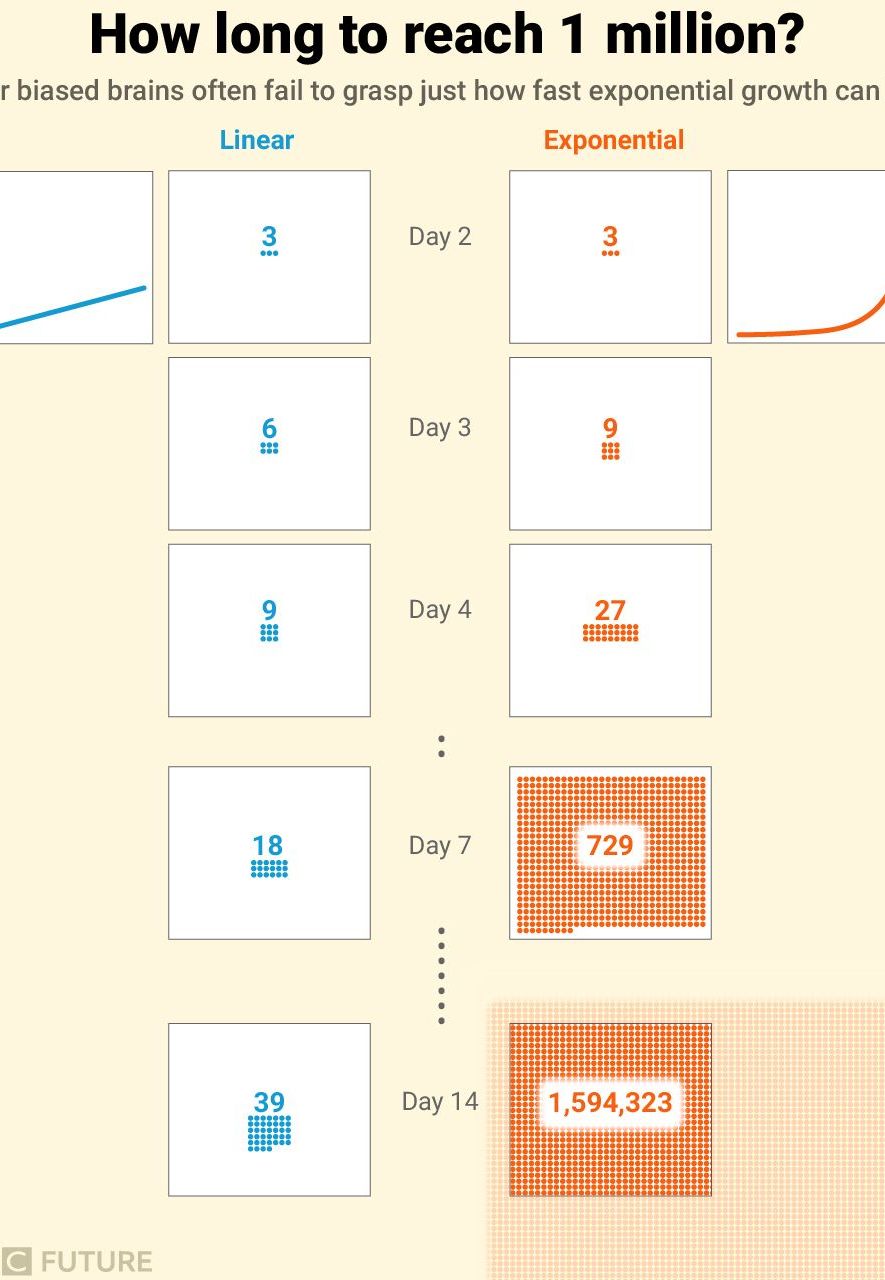

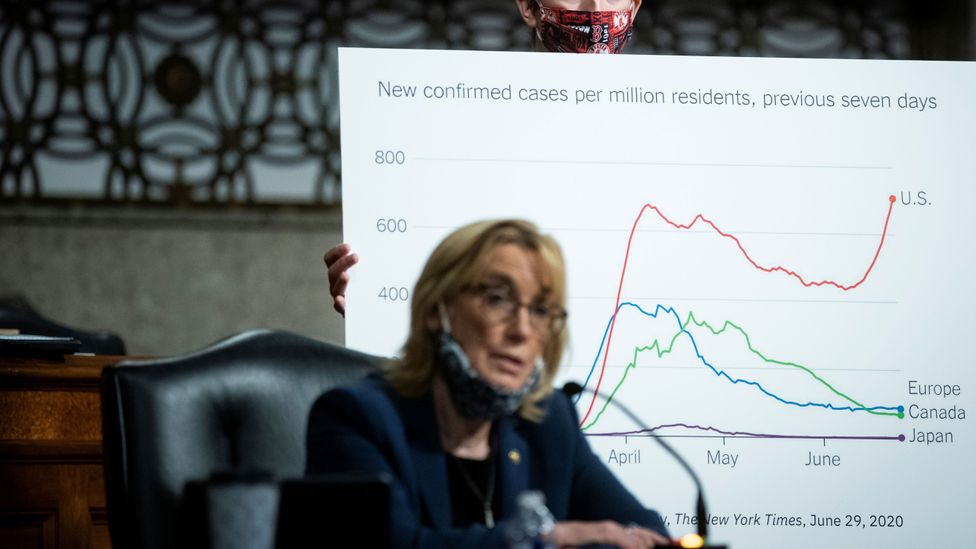

The failure of the field to predict the 2008 crisis has also been well-documented. In 2003, for example, only five years before the Great Recession, the Nobel Laureate Robert E Lucas Jr told the American Economic Association that ‘macroeconomics […] has succeeded: its central problem of depression prevention has been solved’. Short-term predictions fare little better – in April 2014, for instance, a survey of 67 economists yielded 100 per cent consensus: interest rates would rise over the next six months. Instead, they fell. A lot.

Nonetheless, surveys indicate that economists see their discipline as ‘the most scientific of the social sciences’. What is the basis of this collective faith, shared by universities, presidents and billionaires? Shouldn’t successful and powerful people be the first to spot the exaggerated worth of a discipline, and the least likely to pay for it?

In the hypothetical worlds of rational markets, where much of economic theory is set, perhaps. But real-world history tells a different story, of mathematical models masquerading as science and a public eager to buy them, mistaking elegant equations for empirical accuracy.

As an extreme example, take the extraordinary success of Evangeline Adams, a turn-of-the-20th-century astrologer whose clients included the president of Prudential Insurance, two presidents of the New York Stock Exchange, the steel magnate Charles M Schwab, and the banker J P Morgan. To understand why titans of finance would consult Adams about the market, it is essential to recall that astrology used to be a technical discipline, requiring reams of astronomical data and mastery of specialised mathematical formulas. ‘An astrologer’ is, in fact, the Oxford English Dictionary’s second definition of ‘mathematician’. For centuries, mapping stars was the job of mathematicians, a job motivated and funded by the widespread belief that star-maps were good guides to earthly affairs. The best astrology required the best astronomy, and the best astronomy was done by mathematicians – exactly the kind of person whose authority might appeal to bankers and financiers.

In fact, when Adams was arrested in 1914 for violating a New York law against astrology, it was mathematics that eventually exonerated her. During the trial, her lawyer Clark L Jordan emphasised mathematics in order to distinguish his client’s practice from superstition, calling astrology ‘a mathematical or exact science’. Adams herself demonstrated this ‘scientific’ method by reading the astrological chart of the judge’s son. The judge was impressed: the defendant, he observed, went through a ‘mathematical process to get at her conclusions… I am satisfied that the element of fraud… is absent here.’

The enchanting force of mathematics blinded the judge – and Adams’s prestigious clients – to the fact that astrology relies upon a highly unscientific premise, that the position of stars predicts personality traits and human affairs such as the economy. It is this enchanting force that explains the enduring popularity of financial astrology, even today. The historian Caley Horan at the Massachusetts Institute of Technology described to me how computing technology made financial astrology explode in the 1970s and ’80s. ‘Within the world of finance, there’s always a superstitious, quasi-spiritual trend to find meaning in markets,’ said Horan. ‘Technical analysts at big banks, they’re trying to find patterns in past market behaviour, so it’s not a leap for them to go to astrology.’ In 2000, USA Today quoted Robin Griffiths, the chief technical analyst at HSBC, the world’s third largest bank, saying that ‘most astrology stuff doesn’t check out, but some of it does’.

Ultimately, the problem isn’t with worshipping models of the stars, but rather with uncritical worship of the language used to model them, and nowhere is this more prevalent than in economics. The economist Paul Romer at New York University has recently begun calling attention to an issue he dubs ‘mathiness’ – first in the paper ‘Mathiness in the Theory of Economic Growth’ (2015) and then in a series of blog posts. Romer believes that macroeconomics, plagued by mathiness, is failing to progress as a true science should, and compares debates among economists to those between 16th-century advocates of heliocentrism and geocentrism. Mathematics, he acknowledges, can help economists to clarify their thinking and reasoning. But the ubiquity of mathematical theory in economics also has serious downsides: it creates a high barrier to entry for those who want to participate in the professional dialogue, and makes checking someone’s work excessively laborious. Worst of all, it imbues economic theory with unearned empirical authority.

‘I’ve come to the position that there should be a stronger bias against the use of math,’ Romer explained to me. ‘If somebody came and said: “Look, I have this Earth-changing insight about economics, but the only way I can express it is by making use of the quirks of the Latin language”, we’d say go to hell, unless they could convince us it was really essential. The burden of proof is on them.’

Right now, however, there is widespread bias in favour of using mathematics. The success of math-heavy disciplines such as physics and chemistry has endowed mathematical formulas with decisive authoritative force. Lord Kelvin, the 19th-century mathematical physicist, expressed this quantitative obsession:

When you can measure what you are speaking about and express it in numbers you know something about it; but when you cannot measure it… in numbers, your knowledge is of a meagre and unsatisfactory kind.

The trouble with Kelvin’s statement is that measurement and mathematics do not guarantee the status of science – they guarantee only the semblance of science. When the presumptions or conclusions of a scientific theory are absurd or simply false, the theory ought to be questioned and, eventually, rejected. The discipline of economics, however, is presently so blinkered by the talismanic authority of mathematics that theories go overvalued and unchecked.

Romer is not the first to elaborate the mathiness critique. In 1886, an article in Science accused economics of misusing the language of the physical sciences to conceal ‘emptiness behind a breastwork of mathematical formulas’. More recently, Deirdre N McCloskey’s The Rhetoric of Economics (1998) and Robert H Nelson’s Economics as Religion (2001) both argued that mathematics in economic theory serves, in McCloskey’s words, primarily to deliver the message ‘Look at how very scientific I am.’

After the Great Recession, the failure of economic science to protect our economy was once again impossible to ignore. In 2009, the Nobel Laureate Paul Krugman tried to explain it in The New York Times with a version of the mathiness diagnosis. ‘As I see it,’ he wrote, ‘the economics profession went astray because economists, as a group, mistook beauty, clad in impressive-looking mathematics, for truth.’ Krugman named economists’ ‘desire… to show off their mathematical prowess’ as the ‘central cause of the profession’s failure’.

The mathiness critique isn’t limited to macroeconomics. In 2014, the Stanford financial economist Paul Pfleiderer published the paper ‘Chameleons: The Misuse of Theoretical Models in Finance and Economics’, which helped to inspire Romer’s understanding of mathiness. Pfleiderer called attention to the prevalence of ‘chameleons’ – economic models ‘with dubious connections to the real world’ that substitute ‘mathematical elegance’ for empirical accuracy. Like Romer, Pfleiderer wants economists to be transparent about this sleight of hand. ‘Modelling,’ he told me, ‘is now elevated to the point where things have validity just because you can come up with a model.’

The notion that an entire culture – not just a few eccentric financiers – could be bewitched by empty, extravagant theories might seem absurd. How could all those people, all that math, be mistaken? This was my own feeling as I began investigating mathiness and the shaky foundations of modern economic science. Yet, as a scholar of Chinese religion, it struck me that I’d seen this kind of mistake before, in ancient Chinese attitudes towards the astral sciences. Back then, governments invested incredible amounts of money in mathematical models of the stars. To evaluate those models, government officials had to rely on a small cadre of experts who actually understood the mathematics – experts riven by ideological differences, who couldn’t even agree on how to test their models. And, of course, despite collective faith that these models would improve the fate of the Chinese people, they did not.

Astral Science in Early Imperial China, a forthcoming book by the historian Daniel P Morgan, shows that in ancient China, as in the Western world, the most valuable type of mathematics was devoted to the realm of divinity – to the sky, in their case (and to the market, in ours). Just as astrology and mathematics were once synonymous in the West, the Chinese spoke of li, the science of calendrics, which early dictionaries also glossed as ‘calculation’, ‘numbers’ and ‘order’. Li models, like macroeconomic theories, were considered essential to good governance. In the classic Book of Documents, the legendary sage king Yao transfers the throne to his successor with mention of a single duty: ‘Yao said: “Oh thou, Shun! The li numbers of heaven rest in thy person.”’

China’s oldest mathematical text invokes astronomy and divine kingship in its very title – The Arithmetical Classic of the Gnomon of the Zhou. The title’s inclusion of ‘Zhou’ recalls the mythic Eden of the Western Zhou dynasty (1045–771 BCE), implying that paradise on Earth can be realised through proper calculation. The book’s introduction to the Pythagorean theorem asserts that ‘the methods used by Yu the Great in governing the world were derived from these numbers’. It was an unquestioned article of faith: the mathematical patterns that govern the stars also govern the world. Faith in a divine, invisible hand, made visible by mathematics. No wonder that a newly discovered text fragment from 200 BCE extols the virtues of mathematics over the humanities. In it, a student asks his teacher whether he should spend more time learning speech or numbers. His teacher replies: ‘If my good sir cannot fathom both at once, then abandon speech and fathom numbers, [for] numbers can speak, [but] speech cannot number.’

Modern governments, universities and businesses underwrite the production of economic theory with huge amounts of capital. The same was true for li production in ancient China. The emperor – the ‘Son of Heaven’ – spent astronomical sums refining mathematical models of the stars. Take the armillary sphere, such as the two-metre cage of graduated bronze rings in Nanjing, made to represent the celestial sphere and used to visualise data in three dimensions. As Morgan emphasises, the sphere was literally made of money. Bronze being the basis of the currency, governments were smelting cash by the metric ton to pour it into li. A divine, mathematical world-engine, built of cash, sanctifying the powers that be.

The enormous investment in li depended on a huge assumption: that good government, successful rituals and agricultural productivity all depended upon the accuracy of li. But there were, in fact, no practical advantages to the continued refinement of li models. The calendar rounded off decimal points such that the difference between two models, hotly contested in theory, didn’t matter to the final product. The work of selecting auspicious days for imperial ceremonies thus benefited only in appearance from mathematical rigour. And of course the comets, plagues and earthquakes that these ceremonies promised to avert kept on coming. Farmers, for their part, went about business as usual. Occasional governmental efforts to scientifically micromanage farm life in different climes using li ended in famine and mass migration.

Like many economic models today, li models were less important to practical affairs than their creators (and consumers) thought them to be. And, like today, only a few people could understand them. In 101 BCE, Emperor Wudi tasked high-level bureaucrats – including the Great Director of the Stars – with creating a new li that would glorify the beginning of his path to immortality. The bureaucrats refused the task because ‘they couldn’t do the math’, and recommended the emperor outsource it to experts.

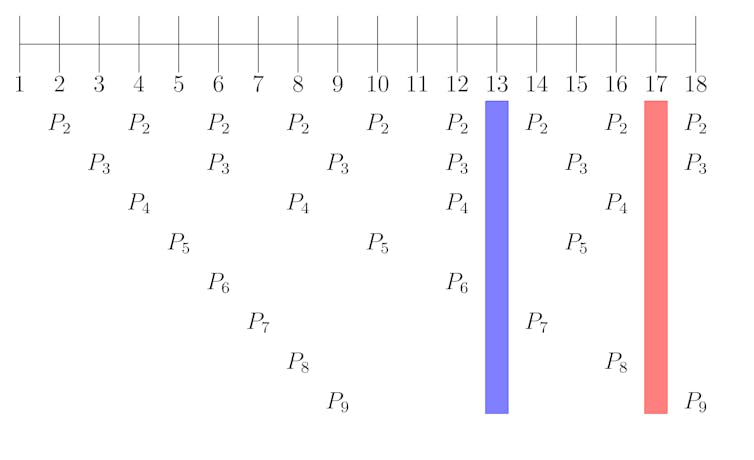

The debates of these ancient li experts bear a striking resemblance to those of present-day economists. In 223 CE, a petition was submitted to the emperor asking him to approve tests of a new li model developed by the assistant director of the astronomical office, a man named Han Yi.

At the time of the petition, Han Yi’s model, and its competitor, the so-called Supernal Icon, had already been subjected to three years of ‘reference’, ‘comparison’ and ‘exchange’. Still, no one could agree which one was better. Nor, for that matter, was there any agreement on how they should be tested.

In the end, a live trial involving the prediction of eclipses and heliacal risings was used to settle the debate. With the benefit of hindsight, we can see this trial was seriously flawed. The heliacal rising (first visibility) of planets depends on non-mathematical factors such as eyesight and atmospheric conditions. That’s not to mention the scoring of the trial, which was modelled on archery competitions. Archers scored points for proximity to the bullseye, with no consideration for overall accuracy. The equivalent in economic theory might be to grant a model high points for success in predicting short-term markets, while failing to deduct for missing the Great Recession.

None of this is to say that li models were useless or inherently unscientific. For the most part, li experts were genuine mathematical virtuosos who valued the integrity of their discipline. Despite being based on inaccurate assumptions – that the Earth was at the centre of the cosmos – their models really did work to predict celestial motions. Imperfect though the live trial might have been, it indicates that superior predictive power was a theory’s most important virtue. All of this is consistent with real science, and Chinese astronomy progressed as a science, until it reached the limits imposed by its assumptions.

However, there was no science to the belief that accurate li would improve the outcome of rituals, agriculture or government policy. No science to the Hall of Light, a temple for the emperor built on the model of a magic square. There, by numeric ritual gesture, the Son of Heaven was thought to channel the invisible order of heaven for the prosperity of man. This was quasi-theology, the belief that heavenly patterns – mathematical patterns – could be used to model every event in the natural world, in politics, even the body. Macro- and microcosm were scaled reflections of one another, yin and yang in a unifying, salvific mathematical vision. The expensive gadgets, the personnel, the bureaucracy, the debates, the competition – all of this testified to the divinely authoritative power of mathematics. The result, then as now, was overvaluation of mathematical models based on unscientific exaggerations of their utility.

In ancient China it would have been unfair to blame li experts for the pseudoscientific exploitation of their theories. These men had no way to evaluate the scientific merits of assumptions and theories – ‘science’, in a formalised, post-Enlightenment sense, didn’t really exist. But today it is possible to distinguish, albeit roughly, science from pseudoscience, astronomy from astrology. Hypothetical theories, whether those of economists or conspiracists, aren’t inherently pseudoscientific. Conspiracy theories can be diverting – even instructive – flights of fancy. They become pseudoscience only when promoted from fiction to fact without sufficient evidence.

Romer believes that fellow economists know the truth about their discipline, but don’t want to admit it. ‘If you get people to lower their shield, they’ll tell you it’s a big game they’re playing,’ he told me. ‘They’ll say: “Paul, you may be right, but this makes us look really bad, and it’s going to make it hard for us to recruit young people.”’

Demanding more honesty seems reasonable, but it presumes that economists understand the tenuous relationship between mathematical models and scientific legitimacy. In fact, many assume the connection is obvious – just as in ancient China, the connection between li and the world was taken for granted. When reflecting in 1999 on what makes economics more scientific than the other social sciences, the Harvard economist Richard B Freeman explained that economics ‘attracts stronger students than [political science or sociology], and our courses are more mathematically demanding’. In Lives of the Laureates (2004), Robert E Lucas Jr writes rhapsodically about the importance of mathematics: ‘Economic theory is mathematical analysis. Everything else is just pictures and talk.’ Lucas’s veneration of mathematics leads him to adopt a method that can only be described as a subversion of empirical science:

The construction of theoretical models is our way to bring order to the way we think about the world, but the process necessarily involves ignoring some evidence or alternative theories – setting them aside. That can be hard to do – facts are facts – and sometimes my unconscious mind carries out the abstraction for me: I simply fail to see some of the data or some alternative theory.

Even for those who agree with Romer, conflict of interest still poses a problem. Why would sceptical astronomers question the emperor’s faith in their models? In a phone conversation, Daniel Hausman, a philosopher of economics at the University of Wisconsin, put it bluntly: ‘If you reject the power of theory, you demote economists from their thrones. They don’t want to become like sociologists.’

George F DeMartino, an economist and an ethicist at the University of Denver, frames the issue in economic terms. ‘The interest of the profession is in pursuing its analysis in a language that’s inaccessible to laypeople and even some economists,’ he explained to me. ‘What we’ve done is monopolise this kind of expertise, and we of all people know how that gives us power.’

Every economist I interviewed agreed that conflicts of interest were highly problematic for the scientific integrity of their field – but only tenured ones were willing to go on the record. ‘In economics and finance, if I’m trying to decide whether I’m going to write something favourable or unfavourable to bankers, well, if it’s favourable that might get me a dinner in Manhattan with movers and shakers,’ Pfleiderer said to me. ‘I’ve written articles that wouldn’t curry favour with bankers but I did that when I had tenure.’

Then there’s the additional problem of sunk-cost bias. If you’ve invested in an armillary sphere, it’s painful to admit that it doesn’t perform as advertised. When confronted with their profession’s lack of predictive accuracy, some economists find it difficult to admit the truth. Easier, instead, to double down, like the economist John H Cochrane at the University of Chicago. The problem isn’t too much mathematics, he writes in response to Krugman’s 2009 post-Great-Recession mea culpa for the field, but rather ‘that we don’t have enough math’. Astrology doesn’t work, sure, but only because the armillary sphere isn’t big enough and the equations aren’t good enough.

If overhauling economics depended solely on economists, then mathiness, conflict of interest and sunk-cost bias could easily prove insurmountable. Fortunately, non-experts also participate in the market for economic theory. If people remain enchanted by PhDs and Nobel Prizes awarded for the production of complicated mathematical theories, those theories will remain valuable. If they become disenchanted, the value will drop.

Economists who rationalise their discipline’s value can be convincing, especially with prestige and mathiness on their side. But there’s no reason to keep believing them. The pejorative verb ‘rationalise’ itself warns of mathiness, reminding us that we often deceive each other by making prior convictions, biases and ideological positions look ‘rational’, a word that confuses truth with mathematical reasoning. To be rational is, simply, to think in ratios, like the ratios that govern the geometry of the stars. Yet when mathematical theory is the ultimate arbiter of truth, it becomes difficult to see the difference between science and pseudoscience. The result is people like the judge in Evangeline Adams’s trial, or the Son of Heaven in ancient China, who trust the mathematical exactitude of theories without considering their performance – that is, who confuse math with science, rationality with reality.

There is no longer any excuse for making the same mistake with economic theory. For more than a century, the public has been warned, and the way forward is clear. It’s time to stop wasting our money and recognise the high priests for what they really are: gifted social scientists who excel at producing mathematical explanations of economies, but who fail, like astrologers before them, at prophecy.
