Sue Halpern

NOVEMBER 20, 2014 ISSUE

The Zero Marginal Cost Society: The Internet of Things, the Collaborative Commons, and the Eclipse of Capitalism

by Jeremy Rifkin

Palgrave Macmillan, 356 pp., $28.00

Enchanted Objects: Design, Human Desire, and the Internet of Things

by David Rose

Scribner, 304 pp., $28.00

Age of Context: Mobile, Sensors, Data and the Future of Privacy

by Robert Scoble and Shel Israel, with a foreword by Marc Benioff

Patrick Brewster, 225 pp., $14.45 (paper)

More Awesome Than Money: Four Boys and Their Heroic Quest to Save Your Privacy from Facebook

by Jim Dwyer

Viking, 374 pp., $27.95

A detail of Penelope Umbrico’s Sunset Portraits from 11,827,282 Flickr Sunsets on 1/7/13, 2013. For the project, Umbrico searched the website Flickr for scenes of sunsets in which the sun, not the subject, predominated. The installation, consisting of two thousand 4 x 6 C-prints, explores the idea that ‘the individual assertion of “being here” is ultimately read as a lack of individuality when faced with so many assertions that are more or less all the same.’ A collection of her work, Penelope Umbrico (photographs), was published in 2011 by Aperture.

Every day a piece of computer code is sent to me by e-mail from a website to which I subscribe called IFTTT. Those letters stand for the phrase “if this then that,” and the code is in the form of a “recipe” that has the power to animate it. Recently, for instance, I chose to enable an IFTTT recipe that read, “if the temperature in my house falls below 45 degrees Fahrenheit, then send me a text message.” It’s a simple command that heralds a significant change in how we will be living our lives when much of the material world is connected—like my thermostat—to the Internet.

It is already possible to buy Internet-enabled light bulbs that turn on when your car signals your home that you are a certain distance away and coffeemakers that sync to the alarm on your phone, as well as WiFi washer-dryers that know you are away and periodically fluff your clothes until you return, and Internet-connected slow cookers, vacuums, and refrigerators. “Check the morning weather, browse the web for recipes, explore your social networks or leave notes for your family—all from the refrigerator door,” reads the ad for one.

Welcome to the beginning of what is being touted as the Internet’s next wave by technologists, investment bankers, research organizations, and the companies that stand to rake in some of an estimated $14.4 trillion by 2022—what they call the Internet of Things (IoT). Cisco Systems, which is one of those companies, and whose CEO came up with that multitrillion-dollar figure, takes it a step further and calls this wave “the Internet of Everything,” which is both aspirational and telling. The writer and social thinker Jeremy Rifkin, whose consulting firm is working with businesses and governments to hurry this new wave along, describes it like this:

The Internet of Things will connect every thing with everyone in an integrated global network. People, machines, natural resources, production lines, logistics networks, consumption habits, recycling flows, and virtually every other aspect of economic and social life will be linked via sensors and software to the IoT platform, continually feeding Big Data to every node—businesses, homes, vehicles—moment to moment, in real time. Big Data, in turn, will be processed with advanced analytics, transformed into predictive algorithms, and programmed into automated systems to improve thermodynamic efficiencies, dramatically increase productivity, and reduce the marginal cost of producing and delivering a full range of goods and services to near zero across the entire economy.

In Rifkin’s estimation, all this connectivity will bring on the “Third Industrial Revolution,” poised as he believes it is to not merely redefine our relationship to machines and their relationship to one another, but to overtake and overthrow capitalism once the efficiencies of the Internet of Things undermine the market system, dropping the cost of producing goods to, basically, nothing. His recent book, The Zero Marginal Cost Society: The Internet of Things, the Collaborative Commons, and the Eclipse of Capitalism, is a paean to this coming epoch.

It is also deeply wishful, as many prospective arguments are, even when they start from fact. And the fact is, the Internet of Things is happening, and happening quickly. Rifkin notes that in 2007 there were ten million sensors of all kinds connected to the Internet, a number he says will increase to 100 trillion by 2030. A lot of these are small radio-frequency identification (RFID) microchips attached to goods as they crisscross the globe, but there are also sensors on vending machines, delivery trucks, cattle and other farm animals, cell phones, cars, weather-monitoring equipment, NFL football helmets, jet engines, and running shoes, among other things, generating data meant to streamline, inform, and increase productivity, often by bypassing human intervention. Additionally, the number of autonomous Internet-connected devices such as cell phones—devices that communicate directly with one another—now doubles every five years, growing from 12.5 billion in 2010 to an estimated 25 billion next year and 50 billion by 2020.

For years, a cohort of technologists, most notably Ray Kurzweil, the writer, inventor, and director of engineering at Google, have been predicting the day when computer intelligence surpasses human intelligence and merges with it in what they call the Singularity. We are not there yet, but a kind of singularity is already upon us as we swallow pills embedded with microscopic computer chips, activated by stomach acids, that will be able to report compliance with our doctor’s orders (or not) directly to our electronic medical records. Then there is the singularity that occurs when we outfit our bodies with “wearable technology” that sends data about our physical activity, heart rate, respiration, and sleep patterns to a database in the cloud as well as to our mobile phones and computers (and to Facebook and our insurance company and our employer).

Cisco Systems, for instance, which is already deep into wearable technology, is working on a platform called “the Connected Athlete” that “turns the athlete’s body into a distributed system of sensors and network intelligence…[so] the athlete becomes more than just a competitor—he or she becomes a Wireless Body Area Network, or WBAN.” Wearable technology, which generated $800 million in 2013, is expected to make nearly twice that this year. These numbers represent not only sales but also the public’s acceptance of, and habituation to, becoming one of the things connected to and through the Internet.

One reason that it has been easy to miss the emergence of the Internet of Things, and therefore miss its significance, is that much of what is presented to the public as its avatars seems superfluous and beside the point. An alarm clock that emits the scent of bacon, a glow ball that signals if it is too windy to go out sailing, and an “egg minder” that tells you how many eggs are in your refrigerator no matter where you are in the (Internet-connected) world, revolutionary as they may be, hardly seem the stuff of revolutions; because they are novelties, they obscure what is novel about them.

And then there is the creepiness factor. In the weeks before the general release of Google Glass, Google’s $1,500 see-through eyeglass computer that lets the wearer record what she is seeing and hearing, the press reported a number of incidents in which early adopters were physically accosted by people offended by the product’s intrusiveness. Enough is enough, the Glass opponents were saying.

Why a small cohort of people encountering Google Glass for the first time found it disturbing is the same reason that David Rose, an instructor at MIT and the founder of a company that embeds Internet connectivity into everyday devices like umbrellas and medicine vials, celebrates it and waxes nearly poetic on the potential of “heads up displays.” As he writes in Enchanted Objects: Design, Human Desire, and the Internet of Things, such devices have the potential to radically transform human encounters. Rose imagines a party where

Wearing your fashionable [heads up] display, you will instruct the device to display the people’s names and key biographical info above their heads. In the business meeting, you will call up information about previous meetings and agenda items. The HUD display will call up useful websites, tap into social networks, and dig into massive info sources…. You will fact-check your friends and colleagues…. You will also engage in real-time messaging, including videoconferencing with friends or colleagues who will participate, coach, consult, or lurk.

Whether this scenario excites or repels you, it represents the vision of more than one of the players moving us in the direction of pervasive connectivity. Rose’s company, Ambient Devices, has been at the forefront of what he calls “enchanting” objects—that is, connecting them to the Internet to make them “extraordinary.” This is a task that Glenn Lurie, the CEO of AT&T Mobility, believes is “spot on.” Among these enchanted objects are the Google Latitude Doorbell that “lets you know where your family members are and when they are approaching home,” an umbrella that turns blue when it is about to rain so you might be inspired to take it with you, and a jacket that gives you a hug every time someone likes your Facebook post.

Rose envisions “an enchanted wall in your kitchen that could display, through lines of colored light, the trends and patterns of your loved ones’ moods,” because it will offer “a better understanding of [the] hidden thoughts and emotions that are relevant to us….” If his account of a mood wall seems unduly fanciful (and nutty), it should be noted that this summer, British Airways gave passengers flying from New York to London blankets embedded with neurosensors to track how they were feeling. Apparently this was more scientific than simply asking them. According to one report:

When the fiber optics woven into the blanket turned red, flight attendants knew that the passengers were feeling stressed and anxious. Blue blankets were a sign that the passenger was feeling calm and relaxed.

Thus the airline learned that passengers were happiest when eating and drinking, and most relaxed when sleeping.

While, arguably, this “finding” is as trivial as an umbrella that turns blue when it’s going to rain, there is nothing trivial about collecting personal data, as innocuous as that data may seem. It takes very little imagination to foresee how the kitchen mood wall could lead to advertisements for antidepressants that follow you around the Web, or trigger an alert to your employer, or show up on your Facebook page because, according to Robert Scoble and Shel Israel in Age of Context: Mobile, Sensors, Data and the Future of Privacy, Facebook “wants to build a system that anticipates your needs.”

It takes even less imagination to foresee how information about your comings and goings obtained from the Google Latitude Doorbell could be used in a court of law. Cars are now outfitted with scores of sensors, including ones in the seats that determine how many passengers are in them, as well as with an “event data recorder” (EDR), which is the automobile equivalent of an airplane’s black box. As Scoble and Israel report in Age of Context, “the general legal consensus is that police will be able to subpoena car logs the same way they now subpoena phone records.”

Meanwhile, cars themselves are becoming computers on wheels, with operating system updates coming wirelessly over the air, and with increasing capacity to “understand” their owners. As Scoble and Israel tell it:

They not only adjust seat positions and mirrors automatically, but soon they’ll also know your preferences in music, service stations, dining spots and hotels…. They know when you are headed home, and soon they’ll be able to remind you to stop at the market to get a dessert for dinner.

Recent revelations from the journalist Glenn Greenwald put the number of Americans under government surveillance at a colossal 1.2 million people. Once the Internet of Things is in place, that number might easily expand to include everyone else, because a system that can remind you to stop at the market for dessert is a system that knows who you are and where you are and what you’ve been doing and with whom you’ve been doing it. And this is information we give out freely, or unwittingly, and largely without question or complaint, trading it for convenience, or what passes for convenience.

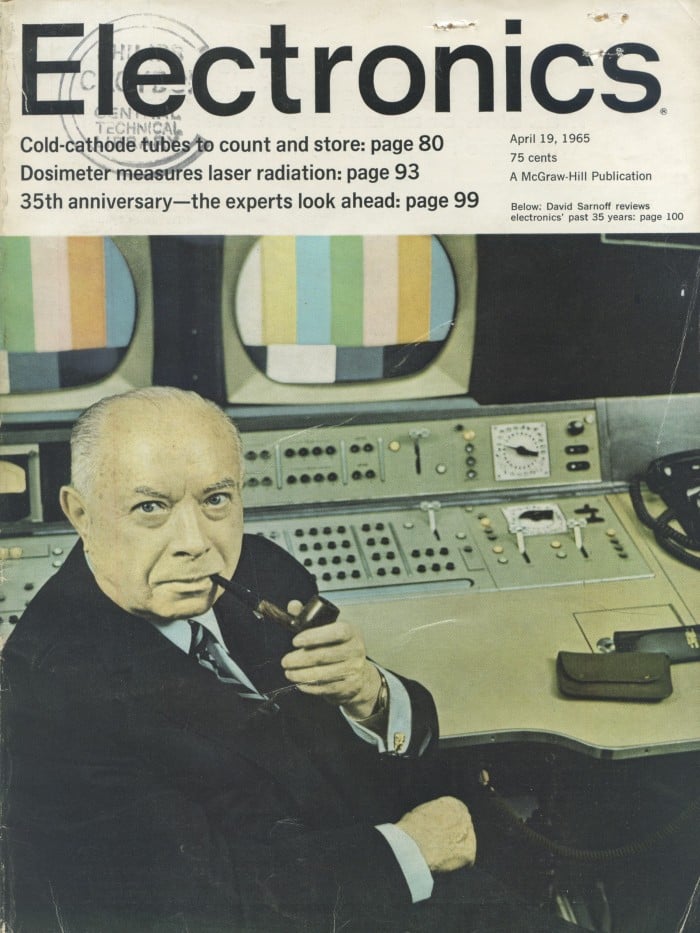

halpern_2-112014.jpg

Michael Cogliantry

The journalist A.J. Jacobs wearing data-collecting sensors to keep track of his health and fitness; from Rick Smolan and Jennifer Erwitt’s The Human Face of Big Data, published in 2012 by Against All Odds

In other words, as human behavior is tracked and merchandized on a massive scale, the Internet of Things creates the perfect conditions to bolster and expand the surveillance state. In the world of the Internet of Things, your car, your heating system, your refrigerator, your fitness apps, your credit card, your television set, your window shades, your scale, your medications, your camera, your heart rate monitor, your electric toothbrush, and your washing machine—to say nothing of your phone—generate a continuous stream of data that resides largely out of reach of the individual but not of those willing to pay for it or in other ways commandeer it.

That is the point: the Internet of Things is about the “dataization” of our bodies, ourselves, and our environment. As a post on the tech website Gigaom put it, “The Internet of Things isn’t about things. It’s about cheap data.” Lots and lots of it. “The more you tell the world about yourself, the more the world can give you what you want,” says Sam Lessin, the head of Facebook’s Identity Product Group. It’s a sentiment shared by Scoble and Israel, who write:

The more the technology knows about you, the more benefits you will receive. That can leave you with the chilling sensation that big data is watching you. In the vast majority of cases, we believe the coming benefits are worth that trade-off.

So, too, does Jeremy Rifkin, who dismisses our legal, social, and cultural affinity for privacy as, essentially, a bourgeois affectation—a remnant of the enclosure laws that spawned capitalism:

Connecting everyone and everything in a neural network brings the human race out of the age of privacy, a defining characteristic of modernity, and into the era of transparency. While privacy has long been considered a fundamental right, it has never been an inherent right. Indeed, for all of human history, until the modern era, life was lived more or less publicly….

In virtually every society that we know of before the modern era, people bathed together in public, often urinated and defecated in public, ate at communal tables, frequently engaged in sexual intimacy in public, and slept huddled together en masse. It wasn’t until the early capitalist era that people began to retreat behind locked doors.

As anyone who has spent any time on Facebook knows, transparency is a fiction—literally. Social media is about presenting a curated self; it is opacity masquerading as transparency. In a sense, then, it is about preserving privacy. So when Rifkin claims that for young people, “privacy has lost much of its appeal,” he is either confusing sharing (as in sharing pictures of a vacation in Spain) with openness, or he is acknowledging that young people, especially, have become inured to the trade-offs they are making to use services like Facebook. (But they are not completely inured to them, as demonstrated both by Jim Dwyer’s painstaking book More Awesome Than Money, about the failed race to build a noncommercial social media site called Diaspora in 2010, and by the overwhelming response—as many as 31,000 requests an hour for invitations—to the recent announcement that there soon will be a Facebook alternative, Ello, that does not collect or sell users’ data.)

These trade-offs will only increase as the quotidian becomes digitized, leaving fewer and fewer opportunities to opt out. It’s one thing to edit the self that is broadcast on Facebook and Twitter, but the Internet of Things, which knows our viewing habits, grooming rituals, medical histories, and more, allows no such interventions—unless it is our behaviors and curiosities and idiosyncrasies themselves that end up on the cutting room floor.

Even so, no matter what we do, the ubiquity of the Internet of Things is putting us squarely in the path of hackers, who will have almost unlimited portals into our digital lives. When, last winter, cybercriminals broke into more than 100,000 Internet-enabled appliances including refrigerators and sent out 750,000 spam e-mails to their users, they demonstrated just how vulnerable Internet-connected machines are.

Not long after that, Forbes reported that security researchers had come up with a $20 tool that was able to remotely control a car’s steering, brakes, acceleration, locks, and lights. It was an experiment that, again, showed how simple it is to manipulate and sabotage the smartest of machines, even though—but really because—a car is now, in the words of a Ford executive, a “cognitive device.”

More recently, a study of ten popular IoT devices by the computer company Hewlett-Packard uncovered a total of 250 security flaws among them. As Jerry Michalski, a former tech industry analyst and founder of the REX think tank, observed in a recent Pew study: “Most of the devices exposed on the internet will be vulnerable. They will also be prone to unintended consequences: they will do things nobody designed for beforehand, most of which will be undesirable.”

Breaking into a home system so that the refrigerator will send out spam that will flood your e-mail and hacking a car to trigger a crash are, of course, terrible and real possibilities, yet as bad as they may be, they are limited in scope. As IoT technology is adopted in manufacturing, logistics, and energy generation and distribution, the vulnerabilities do not have to scale up for the stakes to soar. In a New York Times article last year, Matthew Wald wrote:

If an adversary lands a knockout blow [to the energy grid]…it could black out vast areas of the continent for weeks; interrupt supplies of water, gasoline, diesel fuel and fresh food; shut down communications; and create disruptions of a scale that was only hinted at by Hurricane Sandy and the attacks of Sept. 11.

In that same article, Wald noted that though government officials, law enforcement personnel, National Guard members, and utility workers had been brought together to go through a worst-case scenario practice drill, they often seemed to be speaking different languages, which did not bode well for an effective response to what is recognized as a near inevitability. (Last year the Department of Homeland Security responded to 256 cyberattacks, half of them directed at the electrical grid. This was double the number for 2012.)

This Babel problem dogs the whole Internet of Things venture. After the “things” are connected to the Internet, they need to communicate with one another: your smart TV to your smart light bulbs to your smart door locks to your smart socks (yes, they exist). And if there is no lingua franca—which there isn’t so far—then when that television breaks or becomes obsolete (because soon enough there will be an even smarter one), your choices will be limited by what language is connecting all your stuff. Though there are industry groups trying to unify the platform, in September Apple offered a glimpse of how the Internet of Things actually might play out, when it introduced the company’s new smart watch, mobile payment system, health apps, and other, seemingly random, additions to its product line. As Mat Honan virtually shouted in Wired:

Apple is building a world in which there is a computer in your every interaction, waking and sleeping. A computer in your pocket. A computer on your body. A computer paying for all your purchases. A computer opening your hotel room door. A computer monitoring your movements as you walk through the mall. A computer watching you sleep. A computer controlling the devices in your home. A computer that tells you where you parked. A computer taking your pulse, telling you how many steps you took, how high you climbed and how many calories you burned—and sharing it all with your friends…. THIS IS THE NEW APPLE ECOSYSTEM. APPLE HAS TURNED OUR WORLD INTO ONE BIG UBIQUITOUS COMPUTER.

The ecosystem may be lush, but it will be, by design, limited. Call it the Internet of Proprietary Things.

For many of us, it is difficult to imagine smart watches and WiFi-enabled light bulbs leading to a new world order, whether that new world order is a surveillance state that knows more about us than we do about ourselves or the techno-utopia envisioned by Jeremy Rifkin, where people can make much of what they need on 3-D printers powered by solar panels and unleashed human creativity. Because home automation is likely to be expensive—it will take a lot of eggs before the egg minder pays for itself—it is unlikely that those watches and light bulbs will be the primary driver of the Internet of Things, though they will be its showcase.

Rather, the Internet’s third wave will be propelled by businesses that are able to rationalize their operations by replacing people with machines, using sensors to simplify distribution patterns and reduce inventories, deploying algorithms that eliminate human error, and so on. Those business savings are crucial to Rifkin’s vision of the Third Industrial Revolution, not simply because they have the potential to bring down the price of consumer goods, but because, for the first time, a central tenet of capitalism—that increased productivity requires increased human labor—will no longer hold. And once productivity is unmoored from labor, he argues, capitalism will not be able to support itself, either ideologically or practically.

What will rise in place of capitalism is what Rifkin calls the “collaborative commons,” where goods and property are shared, and the distinction between those who own the means of production and those who are beholden to those who own the means of production disappears. “The old paradigm of owners and workers, and of sellers and consumers, is beginning to break down,” he writes.

Consumers are becoming their own producers, eliminating the distinction. Prosumers will increasingly be able to produce, consume, and share their own goods…. The automation of work is already beginning to free up human labor to migrate to the evolving social economy…. The Internet of Things frees human beings from the market economy to pursue nonmaterial shared interests on the Collaborative Commons.

Rifkin’s vision that people will occupy themselves with more fulfilling activities like making music and self-publishing novels once they are freed from work, while machines do the heavy lifting, is offered at a moment when a new kind of structural unemployment born of robotics, big data, and artificial intelligence takes hold globally, and traditional ways of making a living disappear. Rifkin’s claims may be comforting, but they are illusory and misleading. (We’ve also heard this before, in 1845, when Marx wrote in The German Ideology that under communism people would be “free to hunt in the morning, fish in the afternoon, rear cattle in the evening, [and] criticize after dinner.”)

As an example, Rifkin points to Etsy, the online marketplace where thousands of “prosumers” sell their crafts, as a model for what he dubs the new creative economy. “Currently 900,000 small producers of goods advertise at no cost on the Etsy website,” he writes.

Nearly 60 million consumers per month from around the world browse the website, often interacting personally with suppliers…. This form of laterally scaled marketing puts the small enterprise on a level playing field with the big boys, allowing them to reach a worldwide user market at a fraction of the cost.

All that may be accurate and yet largely irrelevant if the goal is for those 900,000 small producers to make an actual living. As Amanda Hess wrote last year in Slate:

Etsy says its crafters are “thinking and acting like entrepreneurs,” but they’re not thinking or acting like very effective ones. Seventy-four percent of Etsy sellers consider their shop a “business,” including 65 percent of sellers who made less than $100 last year.

While it is true that a do-it-yourself subculture is thriving, and sharing cars, tools, houses, and other property is becoming more common, it is also true that much of this activity is happening under duress as steady employment disappears. As an article in The New York Times this past summer made clear, employment in the sharing economy, also known as the gig economy, where people piece together an income by driving for Uber and delivering groceries for Instacart, leaves them little time for hunting and fishing, unless it’s hunting for work and fishing under a shared couch for loose change.

So here comes the Internet’s Third Wave. In its wake jobs will disappear, work will morph, and a lot of money will be made by the companies, consultants, and investment banks that saw it coming. Privacy will disappear, too, and our intimate spaces will become advertising platforms—last December Google sent a letter to the SEC explaining how it might run ads on home appliances—and we may be too busy trying to get our toaster to communicate with our bathroom scale to notice. Technology, which allows us to augment and extend our native capabilities, tends to evolve haphazardly, and the future that is imagined for it—good or bad—is almost always historical, which is to say, naive.
