Getty Images

Stuart Hameroff has faced three decades of criticism for his quantum consciousness theory, but new studies show the idea may not be as fringe as once believed.

By Darren Orf – Published: Dec 18, 2024 5:13 PM EST

For nearly his entire life, Dr. Stuart Hameroff has been fascinated with the bedeviling question of consciousness. But instead of studying neurology or another field commonly associated with the inner workings of the brain, it was Hameroff’s familiarity with anesthetics, a family of drugs that famously induces the opposite of consciousness, that fueled his curiosity.

“I thought about neurology, psychology, and neurosurgery, but none of those . . . seemed to be dealing with the problem of consciousness,” says Hameroff, a now-retired professor of anesthesiology from the University of Arizona. Hameroff recalls a particularly eye-opening moment when he first arrived at the university and met the chairman of the anesthesia department. “He says ‘hey, if you want to understand consciousness, figure out how anesthesia works because we don’t have a clue.’”

Hameroff’s work in anesthesia showed that unconsciousness occurred due to some effect on microtubules, and he wondered whether these structures somehow played a role in forming consciousness. So instead of using the neuron, or the brain’s nerve cells, as the “base unit” of consciousness, Hameroff’s ideas delved deeper and looked at the billions of individual tubulins inside microtubules themselves. He quickly became obsessed.

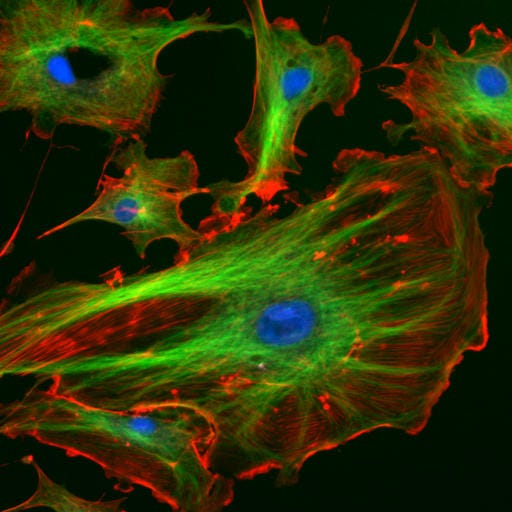

Found in a cell’s cytoskeleton (the structure that helps a cell keep its shape and undergo mitosis), microtubules are made up of tubulin proteins and can be found in cells throughout the body. Hameroff describes the overall shape of microtubules as a “hollow ear of corn” where the kernels represent the alpha- and beta-tubulin proteins. Hameroff first found out about these structures in medical school in the 1970s, learning how microtubules separate duplicated chromosomes during cell division. If the spindles of the microtubules don’t pull this dance off perfectly (a failure known as missegregation), you get cancerous cells or other forms of maldevelopment.

Wikimedia/National Institutes of Health. In a eukaryotic cell, the cytoskeleton provides structure and support. In this image, microtubules, which are part of the cytoskeleton, are shown in green. These narrow, tube-like structures help support the shape of the cell. Scientists like Stuart Hameroff also believe these polymers could hold the secrets to consciousness.

While Hameroff knew that anesthetics impacted these structures, he couldn’t explain how microtubules might produce consciousness. “How would all that information processing explain consciousness? How could it explain envy, greed, pain, love, joy, emotion, the color green?” Hameroff says. “I had no idea.”

That is, until he had a chance encounter with an influential book by Nobel Prize laureate Sir Roger Penrose, Ph.D.

Within the pages of 1989’s The Emperor’s New Mind, Penrose argued that consciousness is actually quantum in nature—not computational as many theories of the mind had so far put forth. However, the famous physicist didn’t have any biological mechanism for the possible collapse of the quantum wave function—when a multi-state quantum superposition collapses to a definitive classical state—that induces conscious experiences.

“Damn straight, Roger. It’s freaking microtubules,” Hameroff remembers saying. Soon after, Hameroff struck up a partnership with Penrose, and together they set off to create one of the most fascinating—and controversial—ideas in the field of consciousness study. This idea became known as Orchestrated Objective Reduction theory, or Orch OR, and it states that microtubules in neurons cause the quantum wave function to collapse, a process known as objective reduction, which gives rise to consciousness.

Hameroff readily admits that since the theory’s inception in the mid-90s, bashing his idea has become a popular pastime in the field. But in recent years, a growing body of research has reported some evidence of quantum processes being possible in the brain. And while this in itself isn’t confirmation of the Orch OR theory Hameroff and Penrose came up with, it’s leading some scientists to reconsider the possibility that consciousness could be quantum in nature. Not only would this be a huge breakthrough in the understanding of human consciousness, it would mean that purely algorithmic (or computer-based) artificial intelligence could never truly be conscious.

● ● ●

In 1989, Roger Penrose was already a superstar in the world of mathematics and physics. By then, he was years removed from his groundbreaking work describing black hole formations (which eventually earned him the Nobel Prize in Physics in 2020), as well as his discovery of mathematical tilings, known as Penrose tilings, that are crucial to the study of quasicrystals, structures that are ordered but not periodic. With the publication of The Emperor’s New Mind, Penrose dove headfirst into the theoretical realm of human consciousness.

In the book, Penrose leveraged Kurt Gödel’s incompleteness theorem to argue (in very simplified terms) that because the human mind can exceed existing formal systems to make new discoveries, consciousness must be non-algorithmic. Instead, Penrose argues that human consciousness is fundamentally quantum in nature, and in The Emperor’s New Mind, he lays out his case over hundreds of pages, detailing how the collapse of the wave function creates a moment of consciousness. However, similar to Hameroff’s dilemma, Penrose admits in the closing pages that profound pieces of this quantum consciousness puzzle were still unknown:

“I hold also to the hope that it is through science and mathematics that some profound advances in the understanding of mind must eventually come to light. There is an apparent dilemma here, but I have tried to show that there is a genuine way out.”

When Hameroff first read the book in 1991, he believed he knew what Penrose was missing.

Hameroff dashed off a letter that included some of his research and offered to visit Penrose at Oxford during one of his conferences in England. Penrose agreed, and the two soon began probing the non-algorithmic problem of human consciousness. While the duo developed their quantum consciousness theory, Hameroff also brought together minds from across disciplines—including philosophy, neuroscience, cognitive science, math, and physics—to explore ideas surrounding consciousness in the form of a biannual Science of Consciousness Conference.

The University of Arizona Center for Consciousness Studies. Dr. Stuart Hameroff (left) and Sir Roger Penrose (right) giving a lecture on consciousness and the physics of the brain at the Sanford Consortium for Regenerative Medicine in La Jolla, California, January 2020.

And from its very inception, the conference broke new ground. In 1994, philosopher David Chalmers described how neuroscience was well-suited for figuring out how the brain controlled physical processes, but the “hard problem” was figuring out why humans (and all other living things) had subjective experiences.

Roughly two years after Chalmers gave this famous talk in a hospital auditorium in Tucson, Penrose and Hameroff revealed their own possible answer to the hard problem.

It wasn’t well-received.

● ● ●

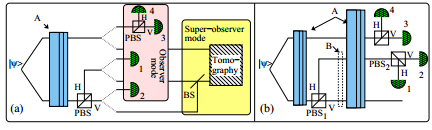

Penrose and Hameroff revealed their Orchestrated Objective Reduction theory in the April 1996 issue of Mathematics and Computers in Simulation. It detailed how microtubules orchestrate consciousness from “objective reduction,” which describes (with complicated physics) Penrose’s thoughts on quantum gravity interaction and how the collapse of the wave function produces consciousness.

The idea has since faced nearly 30 years of criticism.

Famous theoretical physicist Stephen Hawking once wrote that Penrose fell for a kind of Holmesian fallacy, stating that “his argument seemed to be that consciousness is a mystery and quantum gravity is another mystery so they must be related.” Another main criticism is that the brain’s warm and noisy environment is ill-suited for the existence of any kind of quantum interaction. Read any scientific literature about quantum computers, and lab conditions are always extra pristine and approaching-absolute-zero cold (−273.15 degrees Celsius).

“You know how long I’ve been hearing the brain is warm and noisy?” Hameroff says, dismissing the criticism of the brain as too warm and wet for quantum processes to flourish. “I think our theory is sound from the physics, biology, and anesthesia standpoint.”

In a 2022 interview with New Scientist, Penrose admitted that the original Orch OR theory was “rough around the edges,” but maintains all these decades later that consciousness lies beyond computation and perhaps even beyond our current understanding of quantum mechanics. “People used to say it is completely crazy,” Penrose told New Scientist, “but I think people take it seriously now.”

A lot of that slow acceptance comes from a steady tide of research showing that biological systems contain evidence of quantum interactions. Since the publication of Orch OR, scientists have found evidence of quantum mechanics at work during photosynthesis, for example, and just this year, a study from researchers at Howard University detailed quantum effects involving microtubules. This research doesn’t prove Orch OR directly; that’d be like discovering water on an exoplanet and declaring it’s home to intelligent life: not an impossibility, but very far from a certainty. The findings at least have some critics reconsidering the role quantum mechanics plays, if not in consciousness, then at least in the inner workings of the brain more broadly.

However, the rise of quantum biology in the past few decades also coincided with the explosion of AI and large language models (LLMs), which has brought new urgency to the question of consciousness—both human and artificial. Hameroff believes that an influx of money for consciousness research involving AI has only biased the field further into the “consciousness is a computation” camp.

“People have thrown in the towel on the ‘hard problem’ in my view and sold out to AI,” Hameroff says. “These LLMs . . . haven’t reached their limit yet but that doesn’t mean they’ll be conscious.”

● ● ●

As the years—and eventually decades—passed, Hameroff relentlessly defended Orch OR in scientific papers, at consciousness conferences, and perhaps most energetically on his X (formerly Twitter) feed, where he regularly participates in microtubule-related debates. But when asked if he likes the arguments, he answers pretty bluntly.

“Apparently I do because I keep doing it,” Hameroff says. “I’ve always been the contrarian but it’s not on purpose—I just follow my nose.”

And that scientific sense has led Hameroff to explore potentially profound implications when you consider that consciousness doesn’t necessarily rely on the brain or even neurons. Earlier this year, Hameroff, along with colleagues at the University of Arizona and Japan’s National Institute for Materials Science, co-authored a non-peer-reviewed article asking whether consciousness could possibly predate life itself.

“It never made sense to me that life started and evolved for millions of years without genes. Why would organisms develop cognitive machinery? What’s their motivation?” Hameroff says, admitting that the theory traipses beyond the typical confines of science. “It’s kind of spiritual; my spiritual friends like this a lot.”

Hameroff admits that some of his ideas are “out there,” and even stops himself short when describing some ideas involving UFOs, saying “I’m already out on enough limbs.” While most of his ideas may have taken up residence in the fringes of mainstream science, it’s a place where he seems comfortable—at least for now. “I don’t think everybody’s going to agree . . . but I think [Orch OR] is going to be considered seriously,” Hameroff says.

Hameroff retired from his decades-long career as an anesthesiologist at the University of Arizona, and now he has even more time to dedicate to his lifelong fascination.

“I had a great career, and now I have another great career,” he says. “Plus I don’t have to get up so damn early.”

By Darren Orf – Contributing Editor. Darren lives in Portland, has a cat, and writes/edits about sci-fi and how our world works. You can find his previous stuff at Gizmodo and Paste if you look hard enough.
