US president Donald Trump. Credit: Associated Press / Alamy Stock Photo

Multiple Authors

19.05.2026 | 5:22pm

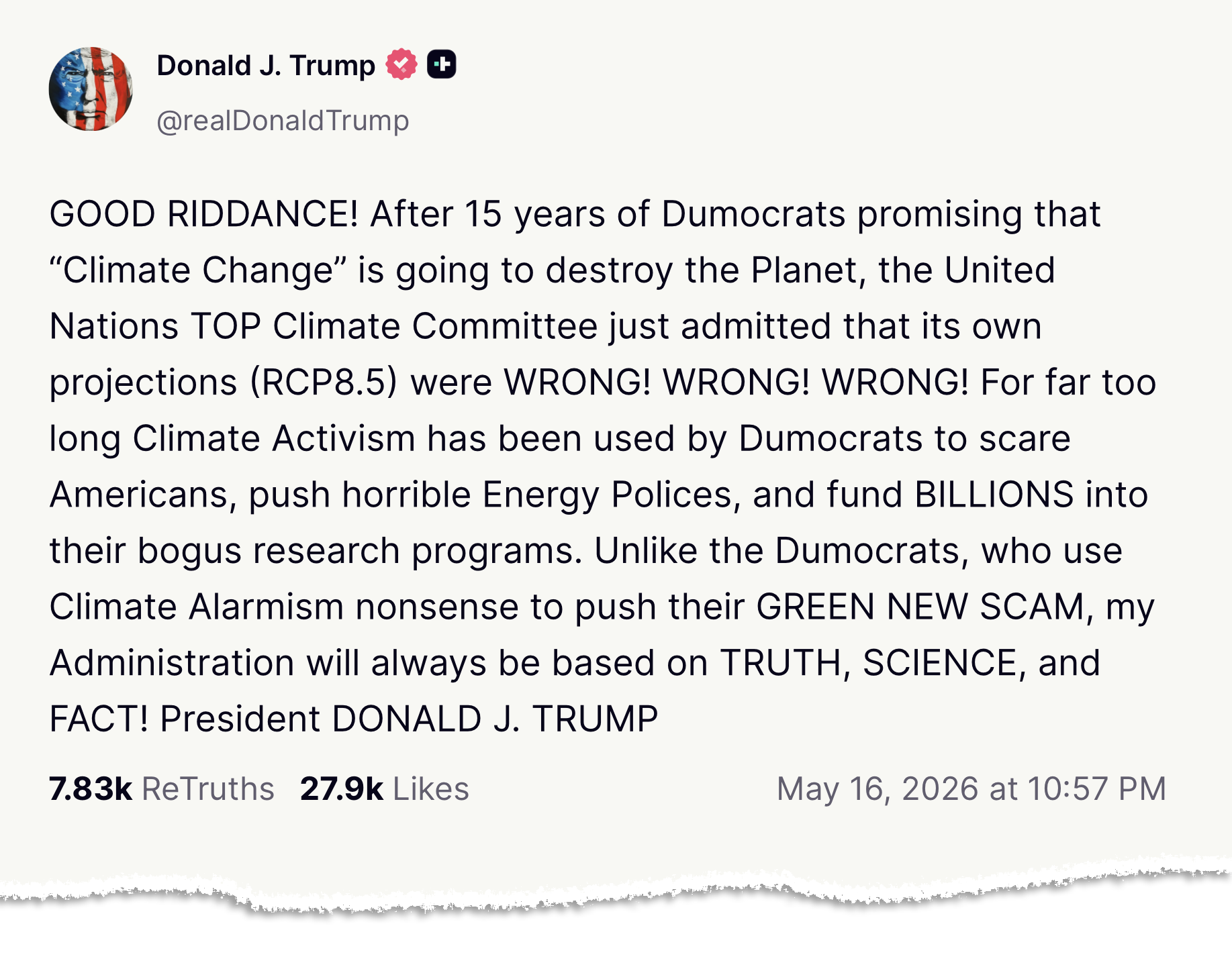

Among a flurry of posts on social media last weekend, US president Donald Trump declared “good riddance” to a specific emissions scenario used in global climate projections.

The “RCP8.5” scenario, which envisages a future of very high carbon emissions, was “wrong, wrong, wrong”, the president wrote in block capitals.

This was “just admitted” by the UN’s “top climate committee”, he falsely claimed, referring to the Intergovernmental Panel on Climate Change (IPCC).

The post was quickly picked up by right-leaning media, amplifying Trump’s misrepresentation of emissions scenarios and the role of the IPCC.

His claim follows the publication of a new set of emissions scenarios that will feed into the next IPCC reports.

While the new scenarios no longer include such high emissions as in RCP8.5, they also show it is “not possible” to limit global warming to 1.5C above pre-industrial levels without significant “overshoot”, one of the authors tells Carbon Brief.

Moreover, projections suggest that the world is still on course for between 2.5C and 3C of warming, another author says.

This level of warming was previously described as “catastrophic” by the UN.

In this factcheck, Carbon Brief looks at Trump’s comments, the debate around RCP8.5 and the “good” and “bad” news within the latest scenarios.

- What did Trump say?

- What is RCP8.5?

- Why is RCP8.5 so hotly debated?

- How has RCP8.5 been replaced?

- How is the IPCC involved?

What did Trump say?

In the late evening of Saturday 16 May, Trump posted the following message on his Truth Social social-media platform:

“Dumocrats” is a derogatory nickname for Democrat politicians, debuted by the president in a televised Fox News interview on Thursday 14 May, according to the Independent.

By “top climate committee”, the president was presumably referring to the IPCC, the UN body responsible for assessing science about human-caused climate change.

However, the IPCC does not develop, control or own climate scenarios. Moreover, it has not published anything stating that any climate scenario is “wrong”. (For more, see: How is the IPCC involved?)

Nevertheless, right-leaning media outlets have reported on Trump’s comments, in many instances repeating his false assertion that the RCP8.5 climate scenario had been developed by the IPCC.

The New York Post misleadingly claimed that the IPCC “had quietly adjusted” its framework of emission scenarios. The Daily Caller, a pro-Trump conspiratorial US outlet, added its own falsehoods, stating that “IPCC researchers revised their modelling approach last month, swapping the extreme pathway for seven alternative scenarios”. The climate-sceptic Australian claimed that scientists had “quietly scrapped the apocalyptic forecasts that have terrified policymakers and the public”.

With Fox News also covering Trump’s comments, along with an earlier article by the Times, much of the reporting around RCP8.5 in recent days has been driven by media controlled by the climate-sceptic mogul Rupert Murdoch.

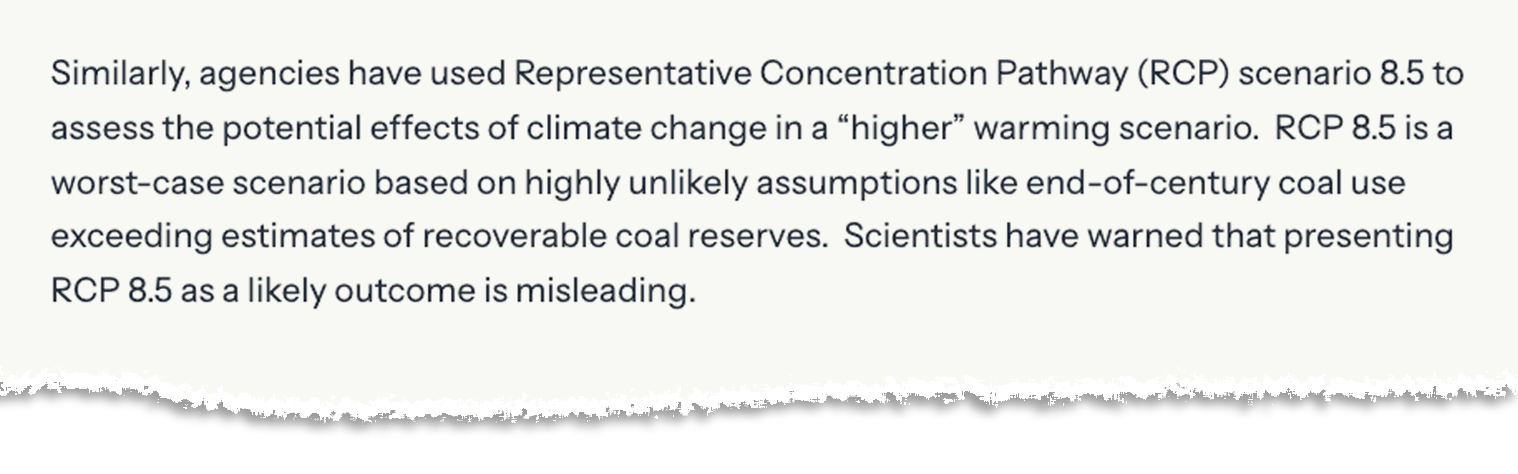

It is not the first time the Trump administration has attacked RCP8.5. In an executive order issued in May 2025 – entitled, “Restoring gold-standard science” – the White House included the climate scenario in a list of examples of how the previous government had “used or promoted scientific information in a highly misleading manner”.

Federal agencies, it claimed, had been using RCP8.5 to “assess the potential effects of climate change in a higher warming scenario”, despite scientists warning that “presenting RCP8.5 as a likely outcome is misleading”.

The executive order came after Project 2025 – a policy wishlist for Trump’s second term published in 2023 by the Heritage Foundation, an influential rightwing, climate-sceptic thinktank in the US – criticised the climate scenario.

The manifesto said a “day-one” priority for the new government should be to “eliminate” the US Environmental Protection Agency’s “use of unauthorised regulatory inputs”, such as “unrealistic climate scenarios, including those based on RCP8.5”.

What is RCP8.5?

Scientists use emissions scenarios to explore potential future climates, based on how global energy and land use could change in the decades to come.

These scenarios are not predictions or forecasts of what will happen in the future. Therefore, Trump’s declaration that projections under RCP8.5 were “wrong, wrong, wrong” misrepresents the purpose of emissions scenarios.

Different modelling groups have produced thousands of different scenarios over the years. RCP8.5 was developed by scientists back in the early 2010s as one of a set of four consistent “representative concentration pathways”, or RCPs, for climate modellers to use.

As their name suggests, the RCPs were representative of the vast array of scenarios in the scientific literature.

Their corresponding numbers – 2.6, 4.5, 6.0 and 8.5 – do not describe temperature rise (as some mistakenly assume), but the level of “radiative forcing” that each pathway reaches by 2100. This forcing level is a measure of the change in the Earth’s “energy balance” (in watts per square metre) resulting from human greenhouse gas emissions.
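To give a sense of scale for these units, the forcing from CO2 alone is often estimated with a widely used simplified approximation, where C is the atmospheric CO2 concentration and C₀ its pre-industrial level. This is an illustration only, not the method used to construct the RCPs, whose forcing totals also include other greenhouse gases and aerosols:

$$
\Delta F_{\mathrm{CO_2}} \approx 5.35 \,\ln\!\left(\frac{C}{C_0}\right)\ \mathrm{W\,m^{-2}},
\qquad
\Delta F_{2\times\mathrm{CO_2}} \approx 5.35 \times \ln 2 \approx 3.7\ \mathrm{W\,m^{-2}}
$$

On this rough measure, the 8.5 W/m² reached by RCP8.5 in 2100 is more than twice the forcing from a doubling of CO2 concentrations.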

As the highest forcing of the set, RCP8.5 was a scenario of very high emissions and extensive global warming.

When it was originally published in 2011, RCP8.5 was intended to reflect the high end – roughly the 90th percentile – of the baseline scenarios available in the scientific literature at the time.

A “baseline” scenario is one that assumes no climate mitigation, explains Dr Chris Smith, senior research scholar at the International Institute for Applied Systems Analysis (IIASA) in Austria. He tells Carbon Brief:

“RCP8.5 was developed as a no-climate-policy scenario, often called ‘reference’ or ‘baseline’ scenarios. These are used to benchmark the actions of climate policy.”

Under RCP8.5, the IPCC’s fifth assessment report (AR5) in 2013 projected a best estimate of 4.3C of temperature rise by 2081-2100, compared to the pre-industrial period, with a “likely” range of 3.2C to 5.4C.

The RCPs were succeeded in 2017 by the “shared socioeconomic pathways”, or SSPs. The SSPs included a set of five socioeconomic “narratives”, which described factors such as population change, economic growth and the rate of technological development.

The SSPs were then used in the IPCC’s sixth assessment (AR6) cycle, which ran over 2015-23. The upper end of the AR6 temperature projections was provided by the successor to RCP8.5, known as SSP5-8.5, which indicated warming of 4.4C by 2081-2100, with a “very likely” range of 3.3C to 5.7C.

Why is RCP8.5 so hotly debated?

Prof Detlef van Vuuren from Utrecht University, a leading figure in the development of emissions scenarios for many years, tells Carbon Brief that RCP8.5 is a “low-probability, high-risk scenario and it was always meant like that”.

The scenario assumed a world without climate policy and was designed to explore the consequences of high levels of greenhouse gases and global warming. It was not, van Vuuren says, a “best-guess scenario” of what the future held in store.

However, in some research papers, RCP8.5 was characterised as “business as usual”, suggesting that it was the likely outcome if society did not pursue climate action.

This was “incorrect”, says van Vuuren, noting that RCP8.5 “is not a likely outcome”. He adds: “It’s never been a likely outcome.”

Over time, RCP8.5 became hotly debated in academic circles, with some scientists arguing that such high emissions were becoming increasingly unlikely and others claiming that RCP8.5 was still consistent with historical cumulative carbon dioxide (CO2) emissions.

Carbon Brief unpacked the arguments in this debate in a detailed explainer in 2019.

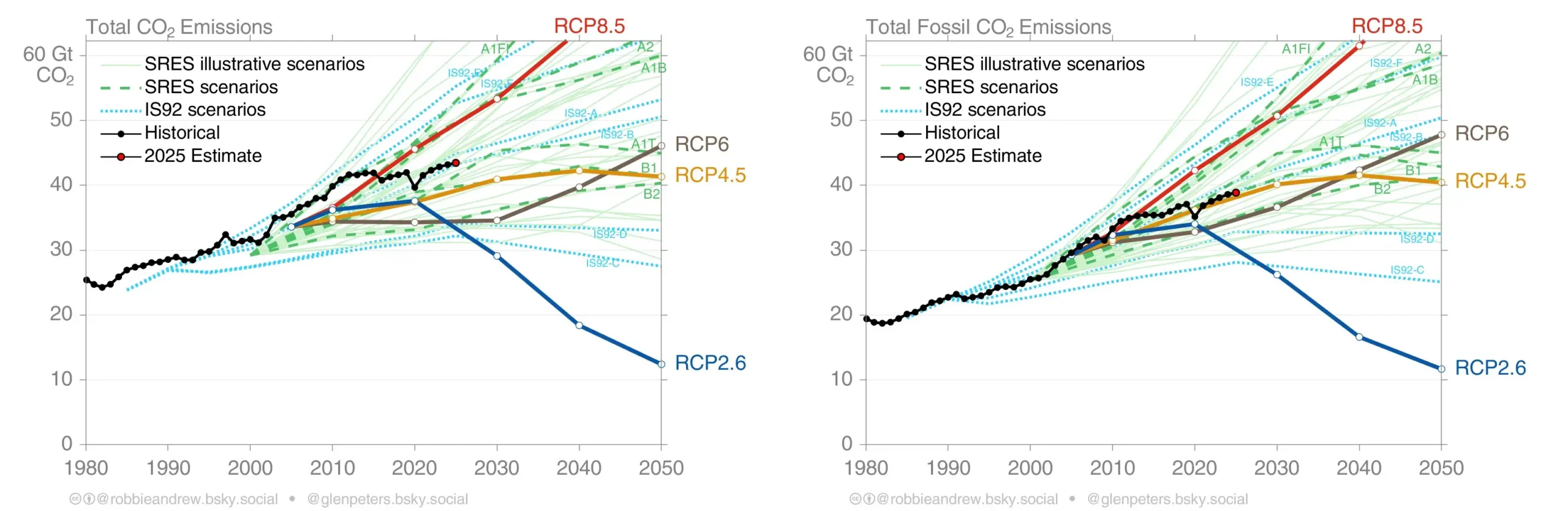

The charts below, originally included in a 2012 Nature commentary and then updated each year by the authors, show how projected CO2 emissions under RCP8.5 (red line) compare with the other RCPs (bold coloured lines) and observations (black line).

The left-hand chart shows total CO2 emissions, including land-use change, while the right-hand chart shows CO2 emissions from burning fossil fuels and producing cement – the dominant drivers of 21st century emissions.

While emission trends up to the early 2010s approximately tracked RCP8.5, a flattening of emissions growth in the years since has meant they have not kept pace with the sustained rises that were assumed in the scenario.

Over the past decade, global emissions have more closely tracked RCP4.5, one of the two “medium stabilisation scenarios” of the original four RCPs.

The debate around RCP8.5 has not just focused on current emissions, but also on the scenario’s underlying assumptions for the future.

When it was published in 2011, the world had just seen unprecedented growth in global CO2 emissions, which had increased by 30% over the previous decade. Global coal use had increased by nearly 50% over the same period. Cleaner alternatives remained expensive in most countries and the idea of continued rapid growth in coal use seemed realistic.

Critics of RCP8.5 point to its assumptions for a dramatic expansion of coal use in the future, as well as high growth in global population.

For example, in a 2017 paper, two scientists argued that the “return to coal” envisaged in RCP8.5 would require an unprecedented five-fold increase in global coal use by the end of the century. Such an outcome was “exceptionally unlikely”, the authors wrote.

However, others have argued that while high-emissions scenarios are becoming increasingly unlikely, they still have an important role to play. For example, they highlight risks that only emerge under higher levels of warming.

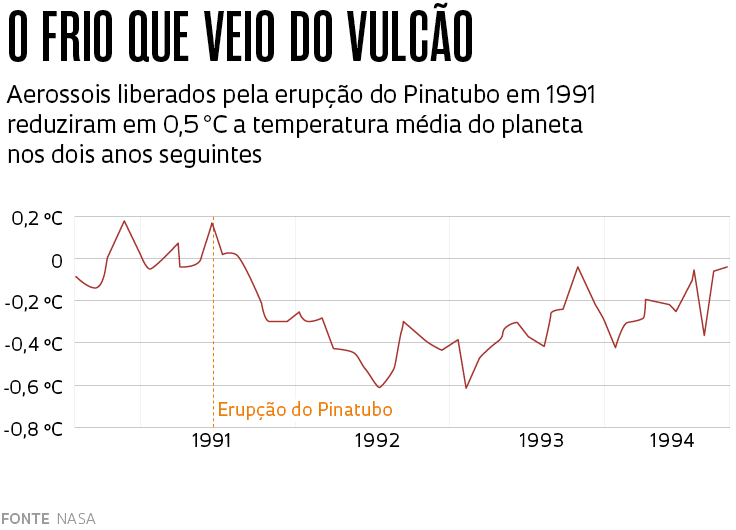

In addition, research has shown that feedbacks in the climate system – where warming triggers the release of more CO2 and methane, which warms the planet further – could mean that human-caused emissions lead to a higher radiative forcing and have a greater climate impact than initially assumed.

How has RCP8.5 been replaced?

As the IPCC heads into its seventh assessment cycle (AR7), scientists have been developing the emissions scenarios and climate model projections that will – eventually – feed into its reports.

For the emissions scenarios, that process – known as ScenarioMIP – started back in 2023 at a meeting in Reading, UK. This involved scientists representing “different climate research communities”, explains van Vuuren.

This “brainstorming” session devised the outlines for the new scenarios, he says. After further meetings, these outlines were developed into a proposal that, once reviewed, was turned into a journal paper. Following review by scientists and the public, the final paper was published in April.

The paper sets out seven all-new emissions scenarios, replacing the SSPs (and their predecessors, the RCPs). For simplicity, the new scenarios are named according to their levels of greenhouse gas emissions.

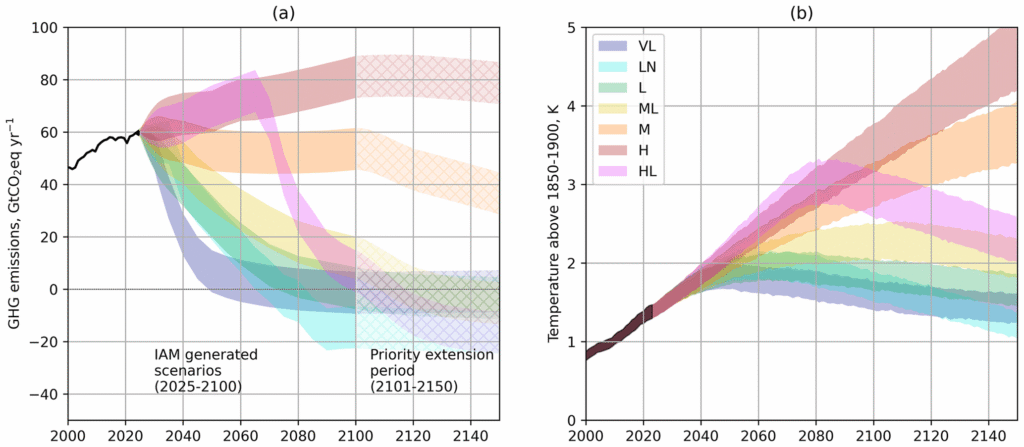

The figures below show the emissions (left) and the estimated global temperature changes (right) under the proposed scenarios, from the “low-to-negative” emissions scenario (turquoise) up to a “high-emissions” scenario (brown).

(It should be noted that, while the ScenarioMIP paper has been published, there remains an embargo on using the scenario data produced by integrated assessment models – often referred to as IAMs – to publish academic papers, analysis or even social media posts until 1 September this year. Carbon Brief will publish a detailed explainer on the new scenarios once the embargo lifts.)

When compared to the SSPs that came before, the range in future emissions in the new scenarios “will be smaller”, the authors say in the paper:

“On the high-end of the range, the…high emission levels (quantified by SSP5-8.5) have become implausible, based on trends in the costs of renewables, the emergence of climate policy and recent emission trends…At the low end, many…emission trajectories have become inconsistent with observed trends during the 2020-30 period.”

In other words, technological progress combined with climate action that, to date, remains insufficient means that scenarios of very high or very low emissions are no longer considered plausible.

Another way of looking at it is that the “range of potential futures has narrowed”, explains Smith, one of the authors on the paper.

If you “draw a fan or plume of potential future emissions that start in 2025”, it lies entirely within the spread of scenarios from a decade ago, he says:

“So you’ve ruled out futures at the high end. You’ve also ruled out futures at the low end – so it’s now not possible to limit warming to 1.5C, at least in the short term or the medium term.”

This is a mix of “good” and “bad” news, Smith adds.

“In the latest set of scenarios, the lowest [scenario sees] peaking at about 1.7C, so we’ve also lost that low end, but the good news is we’ve lost the high end…Back in 2010, RCP8.5 wasn’t an implausible future, we’ve now made it an implausible future, because we’ve actually bent the curve [on emissions] enough to eliminate that possibility.”

The new “high” scenario projects warming of around 3.2C in 2100 (with a range of 2.5C to 4.3C).

To be clear, this “high” scenario would still come with catastrophic climate impacts, even if the level of warming would remain slightly below what was set out in RCP8.5.

Van Vuuren adds that the world is “now on a trajectory to 2.5-3C of warming”. As a result, “we don’t have any scenario anymore that can reach 1.5C with limited overshoot – we will have a significant overshoot”.

How is the IPCC involved?

Contrary to Trump’s claims, the common set of future emissions scenarios used by climate scientists are not developed by the IPCC, the UN climate-science body that produces landmark reports about climate change.

Instead, the development process described above is driven by a group of Earth system modelling experts convened by the Coupled Model Intercomparison Project (CMIP).

CMIP – an initiative of another UN body, the World Climate Research Programme – coordinates the work of dozens of climate modelling centres around the world.

Working in six-to-eight-year cycles, CMIP asks these modelling centres to run a common set of climate-model experiments – simulations that use the same inputs and conditions – so that results can be collected together and more easily compared.

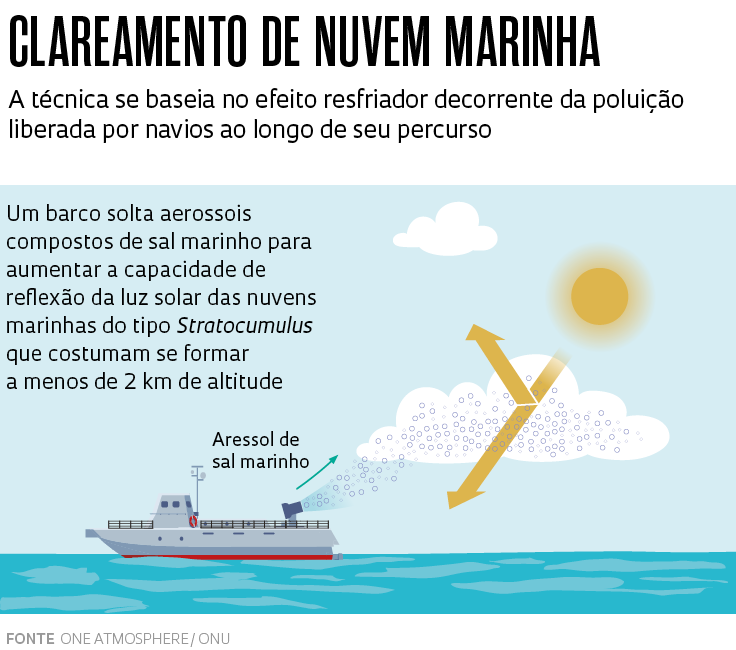

For experiments that explore how the climate might change in the future, modelling centres are instructed to run simulations against a fixed set of future climate scenarios, each with different concentrations of greenhouse gases, aerosols and other drivers of climate change.

These future emissions scenarios are revisited each time CMIP embarks on a new “phase” of climate-modelling coordination, to reflect advances in scientific understanding and the pace of real-world climate action.

The group tasked with producing the design of future scenarios, as well as the “input files” for climate models, is the “scenario model intercomparison project”, or ScenarioMIP.

CMIP aligns its work with the schedule of the IPCC, coordinating a new set of model runs for each IPCC assessment cycle.

For example, the IPCC’s AR5 in 2013 featured climate models from the fifth phase of CMIP (CMIP5), whereas AR6 in 2021 used climate models from CMIP’s sixth phase (CMIP6).

AR7 will feature models from CMIP’s ongoing seventh phase (CMIP7). The first results from CMIP7 model runs are expected later this year.

The IPCC is consulted during the CMIP process, van Vuuren tells Carbon Brief, but its input is “no different from any other review comment” that the ScenarioMIP team received.

Thus, while the IPCC relies on model runs coordinated by CMIP in its landmark reports, it does not play a role in designing future emissions scenarios, nor in deciding when they should be retired.

Dr Robert Vautard, co-chair of IPCC AR7 Working Group I, tells Carbon Brief that the IPCC does not “do or coordinate research”. Its role, he says, is to “synthesise existing knowledge” and produce “regular” reviews of climate-science literature.

He adds that ScenarioMIP is just one set of scenarios the climate-science body assesses in its reports:

“IPCC assesses all scenarios, or sets of scenarios, that the scientific community produces. IPCC does not produce scenarios. CMIP7 will be [one] set of scenarios assessed by IPCC [for AR7] – but there will be many others.”
