Niv Dror on Feb 21

“I used to say that this is the most important graph in all the technology business. I’m now of the opinion that this is the most important graph ever graphed.”

Moore’s Law

The expectation that your iPhone keeps getting thinner and faster every two years. Happy 50th anniversary.

Components get cheaper, computers get smaller, a lot of comparison tweets.

In 1965 Intel co-founder Gordon Moore made his original observation, noticing that the number of transistors in a dense integrated circuit was doubling at a steady pace, a rate he later revised (in 1975) to approximately every two years. The prediction was specific to semiconductors and stretched out for a decade. Its demise has long been predicted, and it will eventually come to an end, but it continues to hold to this day.
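To get a feel for what doubling every two years adds up to, here is a minimal Python sketch. It assumes a perfectly regular two-year doubling period, which real chips only approximate, and the function name `growth_factor` is ours, purely for illustration:

```python
def growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Multiplicative growth after `years` if a quantity doubles every period.

    Assumes an idealized, perfectly regular doubling period.
    """
    return 2 ** (years / doubling_period_years)


print(f"{growth_factor(10):,.0f}")  # 32
print(f"{growth_factor(50):,.0f}")  # 33,554,432
```

Under that assumption, a decade is worth a 32-fold increase, and fifty years is worth a factor of more than 33 million.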

Expanding beyond semiconductors, and reshaping all kinds of businesses, including those not traditionally thought of as tech.

Yes, Box co-founder Aaron Levie is the official spokesperson for Moore’s Law, and we’re all perfectly okay with that. His cloud computing company would not be around without it. He’s grateful. We’re all grateful. In conversations Moore’s Law constantly gets referenced.

It has become both a prediction and an abstraction.

Expanding far beyond its origin as a transistor-centric metric.

But Moore’s Law of integrated circuits is only the most recent paradigm in a much longer and even more profound technological trend.

Humanity’s capacity to compute has been compounding for as long as we could measure it.

The Law of Accelerating Returns

In his 1999 book The Age of Spiritual Machines, Google’s Director of Engineering, futurist, and author Ray Kurzweil proposed “The Law of Accelerating Returns”, according to which the rate of change in a wide variety of evolutionary systems tends to increase exponentially. A specific paradigm, a method or approach to solving a problem (e.g., shrinking transistors on an integrated circuit as an approach to making more powerful computers), provides exponential growth until the paradigm exhausts its potential. When this happens, a paradigm shift, a fundamental change in the technological approach, occurs, enabling the exponential growth to continue.

It is important to note that Moore’s Law of Integrated Circuits was not the first, but the fifth paradigm to provide accelerating price-performance. Computing devices have been consistently multiplying in power (per unit of time) from the mechanical calculating devices used in the 1890 U.S. Census, to Turing’s relay-based machine that cracked the Nazi Enigma code, to the vacuum tube computer that predicted Eisenhower’s win in 1952, to the transistor-based machines used in the first space launches, to the integrated-circuit-based personal computer.

This graph, which venture capitalist Steve Jurvetson describes as the most important concept ever to be graphed, is Kurzweil’s 110-year version of Moore’s Law. It spans five paradigm shifts that have contributed to the exponential growth in computing.

Each dot represents the best computational price-performance device of the day, and when plotted on a logarithmic scale, they fit on the same double exponential curve that spans over a century. This is a very long lasting and predictable trend. It enables us to plan for a time beyond Moore’s Law, without knowing the specifics of the paradigm shift that’s ahead. The next paradigm will advance our ability to compute to such a massive scale, it will be beyond our current ability to comprehend.

The Power of Exponential Growth

Human perception is linear, technological progress is exponential. Our brains are hardwired to have linear expectations because that has always been the case. Technology today progresses so fast that the past no longer looks like the present, and the present is nowhere near the future ahead. Then seemingly out of nowhere, we find ourselves in a reality quite different than what we would expect.

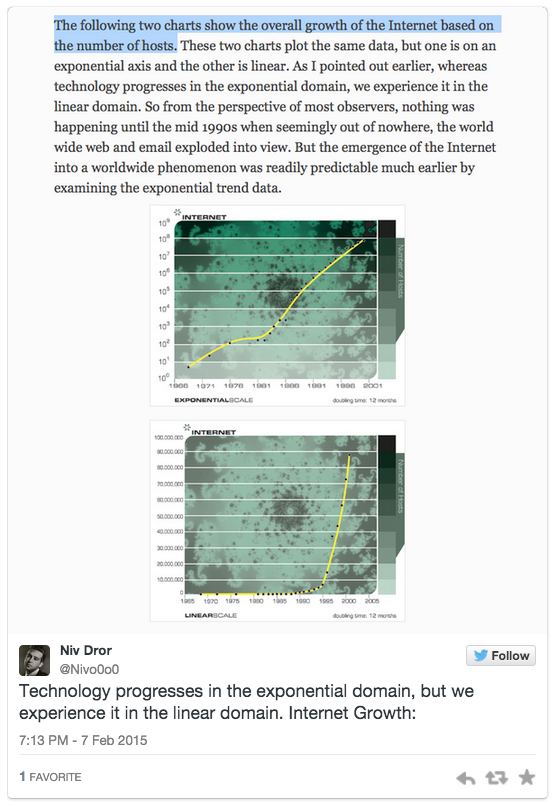

Kurzweil uses the overall growth of the internet as an example. The bottom chart is linear, which makes the internet’s growth seem sudden and unexpected, whereas the top chart, with the same data graphed on a logarithmic scale, tells a very predictable story. On the logarithmic graph internet growth doesn’t come out of nowhere; it’s just presented in a way that is more intuitive for us to comprehend.
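The difference between the two charts can be sketched in a few lines of Python. The series below is hypothetical (a quantity that simply doubles every year), not Kurzweil’s internet data, and the variable names are ours:

```python
import math

# Hypothetical series: a quantity that starts at 1 and doubles every year.
# These are illustrative values, not Kurzweil's internet data.
values = [2 ** year for year in range(11)]

# On a linear axis, the step between consecutive points keeps growing,
# so the curve looks flat for a long time and then seems to explode.
linear_steps = [b - a for a, b in zip(values, values[1:])]

# On a logarithmic axis, every step has the same height, log10(2),
# so the same data falls on a straight, predictable line.
log_steps = [math.log10(b) - math.log10(a) for a, b in zip(values, values[1:])]

print(linear_steps)  # [1, 2, 4, 8, 16, 32, 64, 128, 256, 512]
print([round(step, 3) for step in log_steps])  # every entry is 0.301
```

Same data, two views: the linear steps double each year, while the logarithmic steps never change.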

We are still prone to underestimate the progress that is coming because it’s difficult to internalize this reality that we’re living in a world of exponential technological change. It is a fairly recent development. And it’s important to get an understanding of the massive scale of advancements that the technologies of the future will enable. Particularly now, as we’ve reached what Kurzweil calls the “Second Half of the Chessboard.”

(In the end the emperor realizes that he’s been tricked, by exponents, and has the inventor beheaded. In another version of the story the inventor becomes the new emperor).

It’s important to note that as the emperor and inventor went through the first half of the chessboard things were fairly uneventful. The inventor was first given spoonfuls of rice, then bowls of rice, then barrels, and by the end of the first half of the chessboard the inventor had accumulated one large field’s worth (4 billion grains), which is when the emperor started to take notice. It was only as they progressed through the second half of the chessboard that the situation quickly deteriorated.

# of Grains on 1st half: 4,294,967,295

# of Grains on 2nd half: 18,446,744,069,414,584,320
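Both totals can be checked with a minimal Python sketch. It assumes the usual form of the story (one grain on the first square, doubled on every square after it), and the function name `grains_on_squares` is ours, purely for illustration. Python integers are arbitrary precision, so the second-half total comes out exact rather than rounded:

```python
def grains_on_squares(first_square: int, last_square: int) -> int:
    """Total grains on squares first_square..last_square (1-indexed, inclusive).

    Assumes square n holds 2**(n - 1) grains: one grain on square 1,
    doubling on every following square.
    """
    return sum(2 ** (n - 1) for n in range(first_square, last_square + 1))


first_half = grains_on_squares(1, 32)
second_half = grains_on_squares(33, 64)

print(f"{first_half:,}")                 # 4,294,967,295
print(f"{second_half:,}")                # 18,446,744,069,414,584,320
print(f"{second_half // first_half:,}")  # 4,294,967,296
```

The last line is the striking part: the second half of the board holds exactly 2^32 times as much rice as the first half.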

Mind-bending nonlinear gains in computing are about to get a lot more realistic in our lifetime, as there have been slightly more than 32 doublings of performance since the first programmable computers were invented.

Kurzweil’s Predictions

Kurzweil is known for making mind-boggling predictions about the future. And his track record is pretty good.

“…Ray is the best person I know at predicting the future of artificial intelligence.” —Bill Gates

Ray’s predictions for the future may sound crazy (they do sound crazy), but it’s important to note that it’s not about the specific prediction or the exact year. What’s important to focus on is what they represent. These predictions are based on an understanding of Moore’s Law and Ray’s Law of Accelerating Returns, an awareness of the power of exponential growth, and an appreciation that information technology follows an exponential trend. They may sound crazy, but they are not pulled out of thin air.

And with that being said…

Second Half of the Chessboard Predictions

“By the 2020s, most diseases will go away as nanobots become smarter than current medical technology. Normal human eating can be replaced by nanosystems. The Turing test begins to be passable. Self-driving cars begin to take over the roads, and people won’t be allowed to drive on highways.”

“By the 2030s, virtual reality will begin to feel 100% real. We will be able to upload our mind/consciousness by the end of the decade.”

Not quite there yet…

“By the 2040s, non-biological intelligence will be a billion times more capable than biological intelligence (a.k.a. us). Nanotech foglets will be able to make food out of thin air and create any object in physical world at a whim.”

“By 2045, we will multiply our intelligence a billionfold by linking wirelessly from our neocortex to a synthetic neocortex in the cloud.”

Multiplying our intelligence a billionfold by linking our neocortex to a synthetic neocortex in the cloud — what does that actually mean?

In March 2014 Kurzweil gave an excellent talk at the TED Conference. It was appropriately called: Get ready for hybrid thinking.

These are the highlights:

Nanobots will connect our neocortex to a synthetic neocortex in the cloud, providing an extension of our neocortex.

Our thinking then will be a hybrid of biological and non-biological thinking (the non-biological portion is subject to the Law of Accelerating Returns and it will grow exponentially).

The frontal cortex and neocortex are not really qualitatively different, so it’s a quantitative expansion of the neocortex (like adding processing power).

The last time we expanded our neocortex was about two million years ago. That additional quantity of thinking was the enabling factor for us to take a qualitative leap and advance language, science, art, technology, etc.

We’re going to again expand our neocortex, only this time it won’t be limited by a fixed architecture of enclosure. It will be expanded without limits, by connecting our brain directly to the cloud.

We already carry a supercomputer in our pocket. We have unlimited access to all the world’s knowledge at our fingertips. Keeping in mind that we are prone to underestimate technological advancements (and that 2045 is not a hard deadline), is it really that much of a stretch to imagine a future where we’re always connected directly from our brain?

Progress is underway. We’ll be able to reverse engineer the neural cortex within five years. Kurzweil predicts that by 2030 we’ll be able to reverse engineer the entire brain. His latest book is called How to Create a Mind… This is the reason Google hired Kurzweil.

Hybrid Human Machines

“We’re going to become increasingly non-biological…”

“We’ll also have non-biological bodies…”

“If the biological part went away it wouldn’t make any difference…”

Impact on Society

A technological singularity (“the hypothesis that accelerating progress in technologies will cause a runaway effect wherein artificial intelligence will exceed human intellectual capacity and control, thus radically changing civilization”) is beyond the scope of this article, but these advancements will absolutely have an impact on society. Which way is yet to be determined.

There may be some regret…

Politicians will not know who/what to regulate.

Evolution may take an unexpected twist.

The rich-poor gap will expand.

The unimaginable will become reality and society will change.
