By Philip Ball

16 February 2017

“I cannot define the real problem, therefore I suspect there’s no real problem, but I’m not sure there’s no real problem.”

The American physicist Richard Feynman said this about the notorious puzzles and paradoxes of quantum mechanics, the theory physicists use to describe the tiniest objects in the Universe. But he might as well have been talking about the equally knotty problem of consciousness.

Some scientists think we already understand what consciousness is, or that it is a mere illusion. But many others feel we have not grasped where consciousness comes from at all.

The perennial puzzle of consciousness has even led some researchers to invoke quantum physics to explain it. That notion has always been met with skepticism, which is not surprising: it does not sound wise to explain one mystery with another. But such ideas are not obviously absurd, and neither are they arbitrary.

For one thing, the mind seemed, to the great discomfort of physicists, to force its way into early quantum theory. What’s more, quantum computers are predicted to be capable of accomplishing things ordinary computers cannot, which reminds us of how our brains can achieve things that are still beyond artificial intelligence. “Quantum consciousness” is widely derided as mystical woo, but it just will not go away.

Quantum mechanics is the best theory we have for describing the world at the nuts-and-bolts level of atoms and subatomic particles. Perhaps the most renowned of its mysteries is the fact that the outcome of a quantum experiment can change depending on whether or not we choose to measure some property of the particles involved.

When this “observer effect” was first noticed by the early pioneers of quantum theory, they were deeply troubled. It seemed to undermine the basic assumption behind all science: that there is an objective world out there, irrespective of us. If the way the world behaves depends on how – or if – we look at it, what can “reality” really mean?

Some of those researchers felt forced to conclude that objectivity was an illusion, and that consciousness has to be allowed an active role in quantum theory. To others, that did not make sense. Surely, Albert Einstein once complained, the Moon does not exist only when we look at it!

Today some physicists suspect that, whether or not consciousness influences quantum mechanics, it might in fact arise because of it. They think that quantum theory might be needed to fully understand how the brain works.

Might it be that, just as quantum objects can apparently be in two places at once, so a quantum brain can hold on to two mutually exclusive ideas at the same time?

These ideas are speculative, and it may turn out that quantum physics has no fundamental role either for or in the workings of the mind. But if nothing else, these possibilities show just how strangely quantum theory forces us to think.

The most famous intrusion of the mind into quantum mechanics comes in the “double-slit experiment”. Imagine shining a beam of light at a screen that contains two closely-spaced parallel slits. Some of the light passes through the slits, whereupon it strikes another screen.

Light can be thought of as a kind of wave, and when waves emerge from two slits like this they can interfere with each other. If their peaks coincide, they reinforce each other, whereas if a peak and a trough coincide, they cancel out. This wave interference is called diffraction, and it produces a series of alternating bright and dark stripes on the back screen, where the light waves are either reinforced or cancelled out.
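The reinforcement and cancellation described above can be sketched numerically. This is a minimal illustration using the standard textbook formula for two equal waves, not anything taken from the experiments themselves; the function name and the wavelength, slit spacing and screen distance are assumed values chosen only to give a readable result.

```python
import math

def two_slit_intensity(x, wavelength, slit_separation, screen_distance):
    """Relative brightness (0 to 1) at position x on the back screen.

    Assumes two identical, very narrow slits and a distant screen
    (small-angle approximation), so the single-slit envelope is ignored.
    """
    # Extra distance one wave travels compared with the other.
    path_difference = slit_separation * x / screen_distance
    # Convert that distance into a phase difference between the waves.
    phase = 2 * math.pi * path_difference / wavelength
    # Two equal waves: brightness follows cos^2(phase / 2).
    return math.cos(phase / 2) ** 2

# Assumed illustrative values: green light, slits 0.1 mm apart, screen 1 m away.
wavelength = 500e-9        # metres
slit_separation = 0.1e-3   # metres
screen_distance = 1.0      # metres

# Distance between neighbouring bright stripes.
fringe_spacing = wavelength * screen_distance / slit_separation

# Step across the screen in half-fringe steps: bright, dark, bright, ...
for k in range(5):
    x = k * fringe_spacing / 2
    brightness = two_slit_intensity(x, wavelength, slit_separation, screen_distance)
    print(f"x = {x * 1e3:.2f} mm  brightness = {brightness:.2f}")
```

Peaks meeting peaks give a brightness of 1, while a peak meeting a trough gives 0, which is the pattern of alternating stripes.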

This effect was understood to be a characteristic of wave behaviour over 200 years ago, well before quantum theory existed.

The double-slit experiment can also be performed with quantum particles like electrons: tiny charged particles that are components of atoms. In a counter-intuitive twist, these particles can behave like waves. That means they can undergo diffraction when a stream of them passes through the two slits, producing an interference pattern.

Now suppose that the quantum particles are sent through the slits one by one, and their arrival at the screen is likewise seen one by one. Now there is apparently nothing for each particle to interfere with along its route – yet nevertheless the pattern of particle impacts that builds up over time reveals interference bands.

The implication seems to be that each particle passes simultaneously through both slits and interferes with itself. This combination of “both paths at once” is known as a superposition state.

But here is the really odd thing.

If we place a detector inside or just behind one slit, we can find out whether any given particle goes through it or not. In that case, however, the interference vanishes. Simply by observing a particle’s path – even if that observation should not disturb the particle’s motion – we change the outcome.
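One way to see why knowing the path matters is the standard textbook rule for combining the two routes. When the path is unknown, the two "amplitudes" (complex numbers, one per slit) are added first and then squared; when the path is known, each is squared first and the results are added. The sketch below is a simplified illustration under that assumption, with equal amplitudes for both slits and a hypothetical function name; it says nothing about why measurement has this effect.

```python
import cmath
import math

def relative_detection_rate(phase, path_known):
    """Relative rate of particle arrivals at a point on the screen.

    `phase` is the phase difference between the two routes at that point.
    Both slits are assumed to contribute equally (an illustrative choice).
    """
    amplitude_slit_1 = 1 / math.sqrt(2)
    amplitude_slit_2 = cmath.exp(1j * phase) / math.sqrt(2)
    if path_known:
        # Which-path information available: add the separate probabilities.
        return abs(amplitude_slit_1) ** 2 + abs(amplitude_slit_2) ** 2
    # No path information: add amplitudes, then square (interference).
    return abs(amplitude_slit_1 + amplitude_slit_2) ** 2

for phase in (0.0, math.pi / 2, math.pi):
    unknown = relative_detection_rate(phase, path_known=False)
    known = relative_detection_rate(phase, path_known=True)
    print(f"phase = {phase:.2f}  path unknown: {unknown:.2f}  path known: {known:.2f}")
```

With the path unknown the rate swings between high and zero as the phase changes, giving stripes. With the path known it is the same everywhere, so the stripes disappear.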

The physicist Pascual Jordan, who worked with quantum guru Niels Bohr in Copenhagen in the 1920s, put it like this: “observations not only disturb what has to be measured, they produce it… We compel [a quantum particle] to assume a definite position.” In other words, Jordan said, “we ourselves produce the results of measurements.”

If that is so, objective reality seems to go out of the window.

And it gets even stranger.

If nature seems to be changing its behaviour depending on whether we “look” or not, we could try to trick it into showing its hand. To do so, we could measure which path a particle took through the double slits, but only after it has passed through them. By then, it ought to have “decided” whether to take one path or both.

An experiment for doing this was proposed in the 1970s by the American physicist John Wheeler, and this “delayed choice” experiment was performed in the following decade. It uses clever techniques to make measurements on the paths of quantum particles (generally, particles of light, called photons) after they should have chosen whether to take one path or a superposition of two.

It turns out that, just as Bohr confidently predicted, it makes no difference whether we delay the measurement or not. As long as we measure the photon’s path before its arrival at a detector is finally registered, we lose all interference.

It is as if nature “knows” not just if we are looking, but if we are planning to look.

Whenever, in these experiments, we discover the path of a quantum particle, its cloud of possible routes “collapses” into a single well-defined state. What’s more, the delayed-choice experiment implies that the sheer act of noticing, rather than any physical disturbance caused by measuring, can cause the collapse. But does this mean that true collapse has only happened when the result of a measurement impinges on our consciousness?

That possibility was admitted in the 1930s by the Hungarian physicist Eugene Wigner. “It follows that the quantum description of objects is influenced by impressions entering my consciousness,” he wrote. “Solipsism may be logically consistent with present quantum mechanics.”

Wheeler even entertained the thought that the presence of living beings, which are capable of “noticing”, has transformed what was previously a multitude of possible quantum pasts into one concrete history. In this sense, Wheeler said, we become participants in the evolution of the Universe since its very beginning. In his words, we live in a “participatory universe.”

To this day, physicists do not agree on the best way to interpret these quantum experiments, and to some extent what you make of them is (at the moment) up to you. But one way or another, it is hard to avoid the implication that consciousness and quantum mechanics are somehow linked.

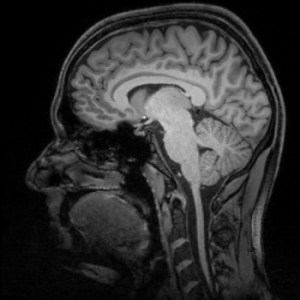

Beginning in the 1980s, the British physicist Roger Penrose suggested that the link might work in the other direction. Whether or not consciousness can affect quantum mechanics, he said, perhaps quantum mechanics is involved in consciousness.

What if, Penrose asked, there are molecular structures in our brains that are able to alter their state in response to a single quantum event? Could not these structures then adopt a superposition state, just like the particles in the double-slit experiment? And might those quantum superpositions then show up in the ways neurons are triggered to communicate via electrical signals?

Maybe, says Penrose, our ability to sustain seemingly incompatible mental states is no quirk of perception, but a real quantum effect.

After all, the human brain seems able to handle cognitive processes that still far exceed the capabilities of digital computers. Perhaps we can even carry out computational tasks that are impossible on ordinary computers, which use classical digital logic.

Penrose first proposed that quantum effects feature in human cognition in his 1989 book The Emperor’s New Mind. The idea is called Orch-OR, which is short for “orchestrated objective reduction”. The phrase “objective reduction” means that, as Penrose believes, the collapse of quantum interference and superposition is a real, physical process, like the bursting of a bubble.

Orch-OR draws on Penrose’s suggestion that gravity is responsible for the fact that everyday objects, such as chairs and planets, do not display quantum effects. Penrose believes that quantum superpositions become impossible for objects much larger than atoms, because their gravitational effects would then force two incompatible versions of space-time to coexist.

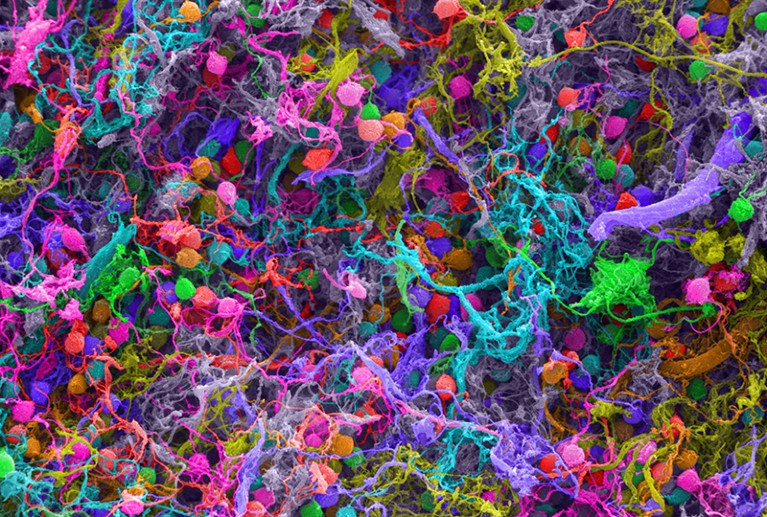

Penrose developed this idea further with American physician Stuart Hameroff. In his 1994 book Shadows of the Mind, Penrose suggested that the structures involved in this quantum cognition might be protein strands called microtubules. These are found in most of our cells, including the neurons in our brains. Penrose and Hameroff argue that vibrations of microtubules can adopt a quantum superposition.

But there is no evidence that such a thing is remotely feasible.

It has been suggested that the idea of quantum superpositions in microtubules is supported by experiments described in 2013, but in fact those studies made no mention of quantum effects.

Besides, most researchers think that the Orch-OR idea was ruled out by a study published in 2000. Physicist Max Tegmark calculated that quantum superpositions of the molecules involved in neural signaling could not survive for even a fraction of the time needed for such a signal to get anywhere.

Quantum effects such as superposition are easily destroyed, because of a process called decoherence. This is caused by the interactions of a quantum object with its surrounding environment, through which the “quantumness” leaks away.

Decoherence is expected to be extremely rapid in warm and wet environments like living cells.

Nerve signals are electrical pulses, caused by the passage of electrically charged atoms across the walls of nerve cells. Tegmark showed that if one of these atoms were in a superposition and then collided with a neuron, the superposition should decay in less than one billion billionth of a second. It takes at least ten thousand trillion times as long for a neuron to discharge a signal.
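To put those two figures side by side, the arithmetic can be written out. This sketch simply multiplies the numbers quoted above; since the text gives them as an upper bound ("less than") and a lower bound ("at least"), the result is only a rough order of magnitude, and the variable names are illustrative.

```python
from fractions import Fraction

# "One billion billionth of a second": 1 / (10^9 * 10^9) seconds.
decoherence_time = Fraction(1, 10**9 * 10**9)

# "Ten thousand trillion times as long": 10^4 * 10^12.
slowdown_factor = 10**4 * 10**12

# Implied time for a neuron to discharge a signal, in seconds.
neuron_signal_time = decoherence_time * slowdown_factor

print(f"decoherence: about {float(decoherence_time):.0e} s")
print(f"neuron signal: about {float(neuron_signal_time)} s")
```

On these figures the superposition is gone in about 10^-18 seconds, while the neuron needs something like a hundredth of a second: a gap of sixteen orders of magnitude.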

As a result, ideas about quantum effects in the brain are viewed with great skepticism.

However, Penrose is unmoved by those arguments and stands by the Orch-OR hypothesis. And despite Tegmark’s prediction of ultra-fast decoherence in cells, other researchers have found evidence for quantum effects in living beings. Some argue that quantum mechanics is harnessed by migratory birds that use magnetic navigation, and by green plants when they use sunlight to make sugars in photosynthesis.

Besides, the idea that the brain might employ quantum tricks shows no sign of going away. For there is now another, quite different argument for it.

In a study published in 2015, physicist Matthew Fisher of the University of California at Santa Barbara argued that the brain might contain molecules capable of sustaining more robust quantum superpositions. Specifically, he thinks that the nuclei of phosphorus atoms may have this ability.

Phosphorus atoms are everywhere in living cells. They often take the form of phosphate ions, in which one phosphorus atom joins up with four oxygen atoms.

Such ions are the basic unit of energy within cells. Much of the cell’s energy is stored in molecules called ATP, which contain a string of three phosphate groups joined to an organic molecule. When one of the phosphates is cut free, energy is released for the cell to use.

Cells have molecular machinery for assembling phosphate ions into groups and cleaving them off again. Fisher suggested a scheme in which two phosphate ions might be placed in a special kind of superposition called an “entangled state”.

The phosphorus nuclei have a quantum property called spin, which makes them rather like little magnets with poles pointing in particular directions. In an entangled state, the spin of one phosphorus nucleus depends on that of the other.

Put another way, entangled states are really superposition states involving more than one quantum particle.
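A small numerical sketch can make the distinction concrete. It uses a standard textbook test for two spins, each of which can point "up" or "down": the pair is entangled exactly when its joint state cannot be split into one independent state per spin. The example states below, including the so-called singlet, are generic textbook choices and are not the specific states in Fisher's proposal; the function name is hypothetical.

```python
import math

def is_entangled(state, tol=1e-12):
    """Check whether a two-spin state is entangled.

    `state` holds the four amplitudes for (up-up, up-down, down-up, down-down).
    The state factorises into two independent spins exactly when
    a_uu * a_dd - a_ud * a_du is zero, so a non-zero value signals entanglement.
    """
    a_uu, a_ud, a_du, a_dd = state
    return abs(a_uu * a_dd - a_ud * a_du) > tol

s = 1 / math.sqrt(2)

# Both spins definitely up: no superposition, no entanglement.
both_up = (1, 0, 0, 0)

# Each spin separately in an up/down superposition, but independent of the other.
independent = (0.5, 0.5, 0.5, 0.5)

# Singlet: "up-down minus down-up". Neither spin has a state of its own.
singlet = (0, s, -s, 0)

for name, state in [("both up", both_up), ("independent", independent), ("singlet", singlet)]:
    print(f"{name}: entangled = {is_entangled(state)}")
```

Only the singlet counts as entangled: a superposition of one spin alone is not enough, because the superposition has to span both particles at once.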

Fisher says that the quantum-mechanical behaviour of these nuclear spins could plausibly resist decoherence on human timescales. He agrees with Tegmark that quantum vibrations, like those postulated by Penrose and Hameroff, will be strongly affected by their surroundings “and will decohere almost immediately”. But nuclear spins do not interact very strongly with their surroundings.

All the same, quantum behaviour in the phosphorus nuclear spins would have to be “protected” from decoherence.

This might happen, Fisher says, if the phosphorus atoms are incorporated into larger objects called “Posner molecules”. These are clusters of six phosphate ions, combined with nine calcium ions. There is some evidence that they can exist in living cells, though this is currently far from conclusive.

In Posner molecules, Fisher argues, phosphorus spins could resist decoherence for a day or so, even in living cells. That means they could influence how the brain works.

The idea is that Posner molecules can be swallowed up by neurons. Once inside, the Posner molecules could trigger the firing of a signal to another neuron, by falling apart and releasing their calcium ions.

Because of entanglement in Posner molecules, two such signals might thus in turn become entangled: a kind of quantum superposition of a “thought”, you might say. “If quantum processing with nuclear spins is in fact present in the brain, it would be an extremely common occurrence, happening pretty much all the time,” Fisher says.

He first got this idea when he started thinking about mental illness.

“My entry into the biochemistry of the brain started when I decided three or four years ago to explore how on earth the lithium ion could have such a dramatic effect in treating mental conditions,” Fisher says.

Lithium drugs are widely used for treating bipolar disorder. They work, but nobody really knows how.

“I wasn’t looking for a quantum explanation,” Fisher says. But then he came across a paper reporting that lithium drugs had different effects on the behaviour of rats, depending on what form – or “isotope” – of lithium was used.

On the face of it, that was extremely puzzling. In chemical terms, different isotopes behave almost identically, so if the lithium worked like a conventional drug the isotopes should all have had the same effect.

But Fisher realised that the nuclei of the atoms of different lithium isotopes can have different spins. This quantum property might affect the way lithium drugs act. For example, if lithium substitutes for calcium in Posner molecules, the lithium spins might “feel” and influence those of phosphorus atoms, and so interfere with their entanglement.

If this is true, it would help to explain why lithium can treat bipolar disorder.

At this point, Fisher’s proposal is no more than an intriguing idea. But there are several ways in which its plausibility can be tested, starting with the idea that phosphorus spins in Posner molecules can keep their quantum coherence for long periods. That is what Fisher aims to do next.

All the same, he is wary of being associated with the earlier ideas about “quantum consciousness”, which he sees as highly speculative at best.

Physicists are not terribly comfortable with finding themselves inside their theories. Most hope that consciousness and the brain can be kept out of quantum theory, and perhaps vice versa. After all, we do not even know what consciousness is, let alone have a theory to describe it.

It does not help that there is now a New Age cottage industry devoted to notions of “quantum consciousness”, claiming that quantum mechanics offers plausible rationales for such things as telepathy and telekinesis.

As a result, physicists are often embarrassed to even mention the words “quantum” and “consciousness” in the same sentence.

But setting that aside, the idea has a long history. Ever since the “observer effect” and the mind first insinuated themselves into quantum theory in the early days, it has been devilishly hard to kick them out. A few researchers think we might never manage to do so.

In 2016, Adrian Kent of the University of Cambridge in the UK, one of the most respected “quantum philosophers”, speculated that consciousness might alter the behaviour of quantum systems in subtle but detectable ways.

Kent is very cautious about this idea. “There is no compelling reason of principle to believe that quantum theory is the right theory in which to try to formulate a theory of consciousness, or that the problems of quantum theory must have anything to do with the problem of consciousness,” he admits.

But he says that it is hard to see how a description of consciousness based purely on pre-quantum physics can account for all the features it seems to have.

One particularly puzzling question is how our conscious minds can experience unique sensations, such as the colour red or the smell of frying bacon. With the exception of people with visual impairments, we all know what red is like, but we have no way to communicate the sensation and there is nothing in physics that tells us what it should be like.

Sensations like this are called “qualia”. We perceive them as unified properties of the outside world, but in fact they are products of our consciousness – and that is hard to explain. Indeed, in 1995 philosopher David Chalmers dubbed it “the hard problem” of consciousness.

“Every line of thought on the relationship of consciousness to physics runs into deep trouble,” says Kent.

This has prompted him to suggest that “we could make some progress on understanding the problem of the evolution of consciousness if we supposed that consciousness alters (albeit perhaps very slightly and subtly) quantum probabilities.”

In other words, the mind could genuinely affect the outcomes of measurements.

It does not, in this view, exactly determine “what is real”. But it might affect the chance that each of the possible actualities permitted by quantum mechanics is the one we do in fact observe, in a way that quantum theory itself cannot predict. Kent says that we might look for such effects experimentally.

He even bravely estimates the chances of finding them. “I would give credence of perhaps 15% that something specifically to do with consciousness causes deviations from quantum theory, with perhaps 3% credence that this will be experimentally detectable within the next 50 years,” he says.

If that happens, it would transform our ideas about both physics and the mind. That seems a chance worth exploring.
