August 27, 2020

By Bruno Latour

Translation: Igor Rolemberg

Translated from the original French version.

I will try to answer the question “Where is power?”. As always, when we are philosophers, we tend to shift the object a little. It is not enough to find this power; we still have to do something with it. That is why I will ask three questions: How do we investigate in order to find power? How do we distrust power, in every sense of the word “distrust”? And finally, how do we exercise it once we have found it?

Allow me first of all to set out a first rule of research ethics: how can we equip ourselves with the means to prove the legitimate or illegitimate presence of power, so as to avoid any suspicion? Critique is a strange thing; once difficult, it has now become an automatism, almost a reflex: as soon as any authority states a certainty, public opinion, social networks and common sense immediately conclude that it is necessarily false, or at least that some manipulation lies behind it. We face here a research problem straight out of the Lucky Luke comics. Critique now obeys the rule “shoot first, talk later”. To be able to investigate, we must learn to slow down and suspend the accusation of manipulation.

Second rule: if we speak of power, if we trace it, designate it, point to it, that alone is not enough. Denunciation, as Luc Boltanski has shown so well, would in that case be empty of meaning. The rule is therefore the following: if we can detect a legitimate source of power, we must also offer the means of exercising it to those for whom it is intended; if the source of power is illegitimate, then we must strive to offer the means to strike back, to establish a counter-power. In short, power should be denounced only if the denunciation gives power to our interlocutors. It is useless to denounce it if the result is a lesson in powerlessness.

That we must in every case be wary of power, that we should seek to uncover its sources and curb its effects, is what I will demonstrate in five steps.

WITHOUT POWER, THINGS WOULD GO STRAIGHT

The first step will allow us to learn to identify the exercise of power even as it becomes harder to detect. Let us begin with a simple case. If you read in Le Monde the headline “Servier laboratory suspected of having influenced a Senate report”, you will have no trouble noticing that something abnormal is going on. The journalist has done the work for you. Indeed, it is not normal that a professor of medicine should apparently have modified a Senate report in an inquiry into the sorry Mediator affair, the drug from the Servier laboratory that is today the object of a series of lawsuits with wide repercussions. We face here an inquiry interrupted or altered by an undue intervention. You will be right to suspect, without any difficulty, that this is an illegitimate exercise of power.

The next case is a little more delicate: “Opposition to relay antennas is growing, in the countryside as well as in the city”. This time, the article has not made the work easier. Is it that the telephone companies impose the antennas without prior discussion? Is it the State that turns a blind eye to their installation? Are those who believe themselves ill exaggerating their claims, or, on the contrary, is it unjust not to recognize a real illness that should give the right to compensation? We find ourselves in the middle of a controversy. There is uncertainty about which scandal should be denounced. You understand very well that, in this case, automatic denunciation would lead nowhere. We must continue to investigate carefully in order to identify who exercises power illegitimately and who struggles to oppose it.

Third example, more uncertain still. You read in Le Monde an article headlined “The policy of budget cuts rests on a wrong diagnosis”. The article states that all the States of Europe are currently suffering from an idea that many economists consider absurd, according to which budgets must be reduced instead of investing massively at a time when money is cheap. This is the argument that Paul Krugman, Nobel laureate in economics, repeats to the whole world almost every day in the New York Times, without being heard. Here, then, is a case in which power seems to be exercised by experts, through opaque networks, since they influence what we call the “spheres of power”, here in the classic sense of the term: the political class. Economic ideas, the article says, thus have an undue influence on public life and force us to tighten our belts in the name of a doctrine whose origin does not seem secure. Who holds power in this case? Is it economic doctrine? Is it the economists? Those who listen too much to economists? We see that detecting power is beginning to get harder.

In these three examples, the principle of analysis is the same: there is a straight path that has been diverted. We should have an honest Senate report. We do not. We should have clear information about the danger of relay antennas. We do not. We should have a credible economic policy. We do not. Thus, in these three cases, power is identified by the gap between the straight path and the detour, by the distance that has been opened up. It is this distance that justifies denunciation. But you will already have understood that this obviously presupposes that there is a straight path, a normal, upright, let us say rational state, which power has come to deform. In this view of things, power is always irrational. It should not be exercised. The denouncer, deep down, dreams of a world free of power.

IN SEARCH OF INVISIBLE POWER

Figure 1

The second step is more difficult: where, indeed, does the straight path come from? Can we speak of power in this case? If so, how should we investigate? To go straight, a power must be exercised. But it would then be somehow latent, and would not have the same sense as in the previous section. Here we meet the well-worn theme of the “naturalization” of conduct. We no longer see power, yet it was exercised earlier; we have simply lost its trace. Here, of course, it is fitting to turn to Michel Foucault.

Take for example the architecture of the prison, a very particular architecture, since from the central cabin the guard can directly observe the interior of all the cells around him. It might not occur to you to regard this arrangement as proof of an illegitimate exercise of power. On the contrary, it appears to you as a normal way of organizing a prison. And you are right. Before the work of historians, it was indeed a legitimate exercise: the architects were paid in the ordinary way, and the State intervened in the regular way. And yet, as Michel Foucault shows so well in a famous book, “Discipline and Punish”, this is for the State a mode of government, a way of exercising total power over prisoners. Since the nineteenth century (I will not go over Foucault's whole argument) penal architecture has become a normal means through which an extremely violent power is exercised calmly and quietly, in a regular, everyday manner. What Foucault calls “governmentality” is a form of power that we can no longer denounce because it has become the norm, reason, knowledge: in short, the straight path. Power has been naturalized. It is exercised as indisputably as the laws of nature. And for that reason, it remains undenounceable.

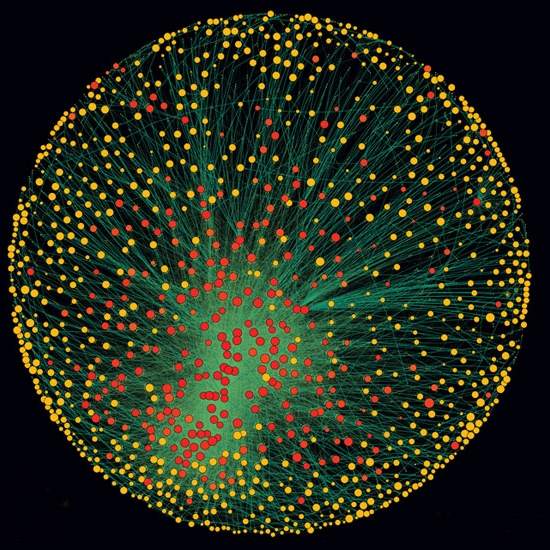

Figure 2

The second example will perhaps surprise you more: the metre. I photographed, opposite the Senate in Paris, one of the two examples of the standard metre still on display in situ. In this symbolic space, opposite the Senate laden with laws, before metres were distributed everywhere, the Parisians of old could go and check whether their metre matched the correct one. Hence the name standard metre. Nothing is more objective than the metre. None of you would think that you are exercising power by taking measurements in centimetres rather than in inches, as the English do. And yet the metric system has a long history: it took eighty years for it to impose itself across much of the world, in the course of a worldwide political battle whose traces we have of course forgotten. The metre is thus a fine case of naturalization. But one has to make a great effort to remember the polemics first aroused by this revolutionary seizure of power over the habits of so many craftsmen and merchants. This is a very interesting case, because we no longer see the origin of power. In such cases, to detect power we must resort to what Foucault calls archaeology, that is, an immersion, thanks to the archives, in the controversial, violent history behind the application of habits that arouse little suspicion.

POWER ENABLES AS WELL AS FORBIDS

But then, you will say, if measuring with a metre or building a prison is exercising power, power is everywhere. I agree, and that is why we may need either to extend the notion of power or to do without it. Indeed, and this will be the third step of our brief excursion, the word “power”, in its verbal sense of “to be able to”, is not, as you well know, a synonym of “to forbid”. Power also means enabling.

Take the apparently very simple example of the remote control. It is this device that authorizes you not to move, that gives you the power to stay seated while changing television channels. Without it, as the older ones among us, like me, will remember, you would be obliged to get up all the time in order to zap at will. It is thanks to the remote control that you can become what Americans call a “couch potato”.

Figure 3

Here you have a truly interesting occasion to ask the question “Where is power?”. Because, after all, this young man who no longer needs to get up from his sofa is the most autonomous, free and contented being in the world. In other words, he holds the remote control in his left hand and, in his right, ultra-salty chips and ultra-sugary soft drinks. Nothing prevents him from gaining as much weight as he likes… The question “Where is power?” can be posed here very concretely. This young man is at once the freest being in history, the one with the fewest constraints, and the one most tied, wrapped up, bound to a set of goods, each of which allows him to do something. You can see that, in a case like this, it is difficult to denounce an illegitimate exercise of power (will his parents throw the remote control out of the window to force him to move at last?), just as it is difficult to pinpoint the origin of all these habits so consistently put into practice (whom will the parents sue? Coca-Cola? The television channel? Unless it is the manufacturer of the potato chips?).

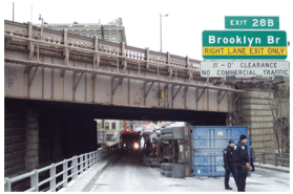

If we refine the analysis, the notion of power dissolves completely, or simply becomes a synonym for the concrete description of a situation. Consider this fine example: a truck overturned in New York because the driver did not see that the clearance of the overpass was lower than the height of his vehicle. Is this an exercise of power? Of course not. The driver followed his GPS, and the database had not yet integrated the height of bridges into its pre-programmed itineraries. Without meaning to, the driver drove into a trap. Now, it so happens that the height of New York's bridges was, in the last century, the object of a fierce dispute: Robert Moses, the American Haussmann, deliberately limited it on the avenues used by passenger cars so that they could not be used by trucks, which were supposed to travel on wider roads reserved for freight (he was even accused of having done so for racial reasons[1]). There is no doubt that the steel mass of the too-low bridge exerts a power of extreme violence on the unlucky truck. But there is also no doubt that Robert Moses, at a century's distance, exerts a power over the whole situation as well: modifying the size of all New York's bridges so that cars and trucks could circulate alike would mean spending astronomical sums. By working his way first into a regulation, then into concrete and steel, and into a definition of urban mobility, Moses made his decisions irreversible and ensured that his Tables of the Law are still obeyed today; those who break them, like this distracted truck driver, are severely punished.

Figure 4

LEARNING TO DO WITHOUT THE NOTION OF POWER

The example of the remote control, like that of New York's bridges, brings me to the fourth step: by opposing the notion of power to something else (the normal, straight exercise of reason), we deprive ourselves of the capacity, in the end, to identify the sources of what shapes our environment. If I have always been wary of the notion of power, it is because I spent many years working to extend it to where nobody saw it: in the sciences and in technology. I have often compared the search for the sources of power to that of physicists trying to identify the “dark matter of the universe”[2]. For human collectives, this dark matter is to be found, of course, in laboratories, in the broad sense of the term.

Look at this fine portrait of Louis Pasteur presenting his swan-neck flasks. It is a famous experiment that I have studied at length[3]. This invention allowed him, for the first time, to preserve highly putrescible liquids, once heated, safe from all contamination. They remained intact for years. And yet the mouth of the flasks remained open, and thus accessible to the ambient air. It is enough to shake the liquid so that it comes into contact with the airborne microbes trapped in the curve of the neck, and, a few days later, the flasks will have become completely opaque from the proliferation of micro-organisms. Where is power? Everywhere! Here is Pasteur inventing a series of gestures that make it possible to keep the medium sterile (what would soon be called asepsis) or, conversely, to make that medium ideal for culturing the countless microbes suspended in the air (what would become the beginning of culture media).

Figure 5

If there is one case in which all the relations we are used to maintaining were modified by practices invented in the laboratory, this is undoubtedly it. Industry, hygiene and medicine were utterly transformed by the gradual introduction of innovations like this one. There is no need to look very far to grasp the lesson. Just think of the Ebola epidemic last year or, this year, of the terrible effects of the Zika virus[4].

More generally, if you look around you, you will notice that every time power relations have been modified, it is because new sciences, techniques or ideas were inserted there. And, every time, we depend on specialized knowledge that depends in turn on an infrastructure that is expensive, complex and so on, and on solid institutions. Now, we can see clearly that in this case it would be absurd to try to distinguish what belongs to an illegitimate power that should be denounced from what relates to a power of control over the conditions of existence. We will have to trust specialized knowledge, most of the time extraordinarily complex, which punctuates the course of our most ordinary actions through long series of what I have called “black boxes”.

If I am wary of the notion of a “power to be denounced”, it is because it does not allow us to weigh at its fair value the production of this knowledge. That is why many so often prefer to resort to conspiracy theory. It is characterized by a strange division between what we accept without any critique (generally the undue exercise of an illegitimate, hidden power that quietly manipulates society without our being able to prove it) and what we criticize meticulously, demanding a level of proof so high that no other source of information (the press, specialized journals, expert reports) could ever reach it[5]. This strange pathology originates in the very notion of a power that conceals equally well the scarcity of evidence and its robustness. A demand for the absolute is transferred onto what necessarily belongs to the order of the relative. Because of this division, conspiracy theorists swallow a camel while straining at a gnat. And the situation is all the more complicated because, as Luc Boltanski shows in an astute book, there is no shortage of conspiracies![6] So that conspiracy theorists arrive at the strange result of doubting all official evidence (which reinforces the reflex, automatic exercise of critique with which I began) without managing, however, to identify the real conspiracies…

Thus suspicion can arise and grow independently of evidence, in which case the person becomes paranoid; conspiracy theories are not far from that. But, conversely, the absence of evidence can lower our guard: we begin to believe that nothing is abnormal (“it has to be so, that is how things are”). Then comes complacency, and with it inertia. The consequence is a lasting corruption of public space.

AN EXCESS OF POWER WE DO NOT KNOW WHAT TO DO WITH

I am aware of having so far circled around the same point. “Where is power?”: the opening question was obviously aimed at the public sphere, that of the political class. It was probably not meant to be about remote controls, relay antennas, bridges, microbes and economic theory… So in this last step I would like to take a case that is dear to me, that bears squarely on the public sphere, and that illustrates once again the impotence of the usual notions of power when it comes to interpreting concrete situations. The example is the Climate Conference, known as “COP 21”, which closed on December 12, 2015, amid enthusiasm. Yet from the morning of December 13 nobody was talking about this “world event” any more! Here is a truly extraordinary case: a power, or rather a capacity to act, completely original, with which nobody knows what to do.

Figure 6

To get an idea of the situation, one would have to speak, playing on words, of an enormous excess of power. Judge for yourselves: the term used by geologists to describe this new potency is the Anthropocene, which I prefer to call the New Climatic Regime[7]. Geologists attribute to humanity (that is the meaning of the term anthropos), taken as a whole, a capacity, a power, to modify the state of the planet more rapidly, more lastingly and more irreversibly than at any other time in its history. We thus clearly have an excess of power given to humans, that is, to each of us, without our having any idea, of course, how we will be able to come together politically to take on such a capacity for damage and action, such a responsibility[8].

In this case, what we are given is a power that we do not feel capable of assuming: that of collectively becoming a geological force. Now, I am sure that this does not interest you at all, that it is precisely something you would like to avoid. Who would wish to become a force capable of influencing the climate? That, moreover, is why so many people prefer to ignore or even deny the scientific findings. The climate is like Ionesco's play Amédée ou comment s’en débarrasser.

Here is a case that fits perfectly what the great American philosopher John Dewey, who is incidentally very little read in France, calls “the public and its problems”[9]. Dewey defines the public not as the object of the political class's concern, but as that which must be constituted every time a new problem arises: “The public consists of all those who are affected by the indirect consequences of transactions to such an extent that it is deemed necessary to have those consequences systematically cared for”. The public must therefore be created every time we observe unexpected consequences of our actions. The ecological mutation we are living through is a problem of this kind. Except that, in this case, we have the problem but not the public that corresponds to it!

Dewey's fundamental point is that politicians, men or women, are not those who know, but simply those to whom is delegated the task of exploring, groping in a certain darkness, with the tools of inquiry, the consequences of our actions. Since these consequences are by definition unforeseen, the public is always re-forming and the State is always one problem behind. The problems of time t-1 are perhaps more or less taken into account, but those of the present time are not. This is obviously the case with the climate. Nobody, twenty years ago, would have imagined that doing politics would, for Mr. Hollande [former president of France], consist in solemnly closing a diplomatic operation on the climate question by proclaiming, as he did on December 12, 2015, “Long live the planet!”.

You can see that we do not know how to exercise this new geological power. There is something crushing, something overwhelming, in this planetary power given to each of us, when at the same time each of us counts for almost nothing in humanity's overall carbon balance. This is where we must recall the rule set out at the beginning. The simple denunciation of an illegitimate power, whether identified by ourselves or with the help of others, is not enough: it must be accompanied by the acquisition of the means to fight, on pain of our falling into despair. You must be able to strike back, resist, modify, fix, accommodate, perhaps accept, in any case react (which is what the English term empowerment designates). Without that, you will feel bound hand and foot. Neither inquiry nor suspicion suffices. It falls to politics to take over.

We still need to agree on what politics can do: if it denounces without indicating how we can fight, politics is reduced to a lesson in frustration and powerlessness. Nothing is more discouraging than crying out against a scandal while feeling that one can do nothing. From actor we become spectator, at first indignant, then passive, and soon complicit. To the investigation of what is unjust must be added the search for new means of reacting.

Figure 7

Hence the interest of this last image, which I took in September 2014 during a large demonstration for (or rather against) the climate in the streets of Manhattan. The proud slogan on the banner proclaims: “We know who is responsible”. Here we are no longer in mere denunciation: through a major effort of accumulating evidence, the activists managed to transform the crushing burden of “we are all responsible and do not know how to react” into another political form: the emitters of CO2 are not just anyone, but a handful of private and public industrial actors whose names, actions and capital are known[10]. If power is being exercised, a new and original counter-power has been constituted. A precise answer, obviously open to revision and adjustment, has been found to the initial question: “Where is power?”

Notes:

[1] JOERGES, Bernward. “Do Politics Have Artifacts”. Social Studies of Science, n.29, v.3, 1999, pp. 411-31. GARUTTI, Francesco. Can design be devious ? 2015. Film.

[2] LATOUR, Bruno. Cogitamus. Six lettres sur les humanités scientifiques. Paris: La Découverte, 2010.

[3] LATOUR, Bruno. Pasteur: guerre et paix des microbes. Paris: La Découverte, 2001.

[4] Translator's note: it should be remembered that the original text was published in 2016.

[5] PADIS, Marc Olivier. “La passion du complot”. Esprit, n. 419, 2015.

[6] BOLTANSKI, Luc. Enigmes et complots. Une enquête à propos d’enquêtes. Paris: Gallimard, 2012.

[7] LATOUR, Bruno. Face à Gaïa. Huit conférences sur le Nouveau Régime Climatique. Paris: La Découverte, 2015.

[8] BONNEUIL, Christophe; FRESSOZ, Jean-Baptiste. L’évènement anthropocène. La Terre, l’histoire et nous. Paris: Le Seuil, 2013.

[9] DEWEY, John. Le public et ses problèmes (Traduit de l’anglais et préfacé par Joelle Zask). Pau: Gallimard-Folio, 2010.

[10] HEEDE, Richard. “Tracing anthropogenic carbon dioxide and methane emissions to fossil fuel and cement producers, 1854–2010.” Climate Accountability Institute (2013); CHANCEL, Lucas; PIKETTY, Thomas. Carbon and inequality: from Kyoto to Paris, 2015.

To cite this text:

LATOUR, Bruno. Onde está o poder? E quando o tivermos encontrado, o que fazer com ele? (Translated by Igor Rolemberg) Blog do Labemus, 2020. [published August 27, 2020]. Available at: https://blogdolabemus.com/2020/08/27/onde-esta-o-poder-e-quando-o-tivermos-encontrado-o-que-fazer-com-ele/

References:

BOLTANSKI, Luc. Enigmes et complots. Une enquête à propos d’enquêtes. Paris: Gallimard, 2012.

BONNEUIL, Christophe; FRESSOZ, Jean-Baptiste. L’évènement anthropocène. La Terre, l’histoire et nous. Paris: Le Seuil, 2013.

CHANCEL, Lucas; PIKETTY, Thomas. Carbon and inequality: from Kyoto to Paris, 2015.

DEWEY, John. Le public et ses problèmes (Traduit de l’anglais et préfacé par Joelle Zask). Pau: Gallimard-Folio, 2010.

GARUTTI, Francesco. Can design be devious ? 2015. Film.

HEEDE, Richard. “Tracing anthropogenic carbon dioxide and methane emissions to fossil fuel and cement producers, 1854–2010”. Climate Accountability Institute, 2013.

JOERGES, Bernward. “Do Politics Have Artifacts”. Social Studies of Science, n.29, v.3, 1999, pp. 411-31.

LATOUR, Bruno. Pasteur: guerre et paix des microbes. Paris: La Découverte, 2001.

LATOUR, Bruno. Cogitamus. Six lettres sur les humanités scientifiques. Paris: La Découverte, 2010.

LATOUR, Bruno. Face à Gaïa. Huit conférences sur le Nouveau Régime Climatique. Paris: La Découverte, 2015.

PADIS, Marc Olivier. “La passion du complot”. Esprit, n. 419, 2015.
