
How the Science of Global Warming Was Compromised

By Axel Bojanowski

14 May 2010 – Spiegel Online

To what extent is climate change actually occurring? Late last year, climate researchers were accused of exaggerating study results. SPIEGEL ONLINE has since analyzed the hacked “Climategate” e-mails and gained insights into one of the most extraordinary disputes in recent scientific history.

Is our planet warming by 1 degree Celsius, 2 degrees, or more? Is climate change entirely man-made? And what can be done to counteract it? There are myriad possible answers to these questions, as well as scientific studies, measurements, debates and plans of action. Even most skeptics now concede that mankind, with its factories, heating systems and cars, contributes to the warming of our atmosphere.

But the consequences of climate change are still hotly contested. It was therefore something of a political bombshell when unknown hackers stole more than 1,000 e-mails written by British climate researchers, and published some of them on the Internet. A scandal of gigantic proportions seemed about to break, and the media dubbed the affair “Climategate” in reference to the Watergate scandal that led to the resignation of US President Richard Nixon. Critics claimed the e-mails would show that climate change predictions were based on unsound calculations.

Although a British parliamentary inquiry soon confirmed that this was definitely not a conspiracy, the leaked correspondence provided in-depth insight into the mechanisms, fronts and battles within the climate-research community. SPIEGEL ONLINE has analyzed the more than 1,000 Climategate e-mails spanning a period of 15 years, e-mails that are freely available over the Internet and which, when printed out, fill five thick files. What emerges is that leading researchers have been subjected to sometimes brutal attacks by outsiders and have become bogged down in a bitter, far-reaching trench war that has also drawn in the media, environmental groups and politicians.

SPIEGEL ONLINE reveals how the war between climate researchers and climate skeptics broke out, the tricks the two sides used to outmaneuver each other and how the conflict could be resolved.

Part 2: From Staged Scandal to the Kyoto Triumph

The fronts in the climate debate have long been etched in the sand. On the one side there is a handful of highly influential climate researchers, on the other a powerful lobby of industrial associations determined to trivialize the dangers of global warming. This latter group is supported by the conservative wing of the American political spectrum, by conspiracy theorists and by critical scientists.

But that alone would not suffice to divide the roles so neatly into good and evil. Most climate researchers were somewhere between the two extremes. They often had difficulty drawing clear conclusions from their findings. After all, scientific facts are often ambiguous. Although it is generally accepted that there is good evidence to back forecasts of coming global warming, there is still considerable uncertainty about the consequences it will have.

Both sides — the leading climate researchers on the one hand and their opponents in industry and smaller groups of naysayers on the other — played hardball from the very beginning. It all started in 1986, when German physicists issued a dramatic public appeal, the first of its kind. They warned about what they saw as a “climatic disaster.” However, their avowed goal was to promote nuclear power over carbon dioxide-belching coal-fired power stations.

The First Scandal

At the time, there was certainly clear scientific evidence of a dangerous increase in temperatures, prompting the United Nations to form the Intergovernmental Panel on Climate Change (IPCC) in 1988 to look into the matter. However, the idea didn’t take hold in the United States until the country was hit by an unusually severe drought in the summer of 1988. Members of Congress took the dry spell as an occasion to hear from NASA scientist James Hansen, who had for years been publishing articles in specialist journals warning about the threat of man-made climate change.

When Washington instructed Hansen to put more emphasis on the uncertainties in his theory, Senator and later Vice President Al Gore cried foul. Gore notified the media about the government’s alleged attempted cover-up, forcing the government’s hand on the matter.

The oil companies reacted with alarm and forged alliances with companies in other sectors who were worried about a possible rise in the price of fossil fuels. They even managed to rope in a few shrewd climate researchers like Patrick Michaels of the University of Virginia.

The aim of the industrial lobby was to focus as much as possible on the doubts about the scientific findings. According to a strategy paper by the Global Climate Science Team, a crude-oil lobby group, “Victory will be achieved when average citizens recognize uncertainties in climate science.” In the meantime, scientists found themselves on the defensive, having to convince the public time and again that their warnings were indeed well-founded.

Industrial Propaganda for the ‘Less Educated’

A dangerous dynamic had been set in motion: Any climate researcher who expressed doubts about findings risked playing into the hands of the industrial lobby. The leaked e-mails show how leading scientists reacted to the PR barrage by the so-called “skeptics lobby.” Out of fear that their opponents could take advantage of ambiguous findings, many researchers tried to simply hide the weaknesses of their findings from the public.

The lobby spent millions on propaganda campaigns. In 1991, the Information Council on the Environment (ICE) issued a strategy paper aimed at what it called “less-educated people.” This proposed a campaign that would “reposition global warming as a theory (not fact).” However, the skeptics also wanted to reach better-educated sectors of society. The Global Climate Coalition, for example, an alliance of energy companies, specifically tried to influence UN delegates. The advice of skeptical scientists was also given considerable credence in the US Congress.

Nonetheless, the lobbyists had less success on the international stage. In 1997, the international community agreed on the first-ever climate protection treaty: the Kyoto Protocol. “Scientists had issued a warning, the media amplified it and the politicians reacted,” recalls Peter Weingart, a science sociologist at Bielefeld University in Germany, who researched the climate debate.

But just as numerous industrial firms began to acknowledge the need for climate protection and left the Global Climate Coalition, some scientists began getting too cozy with environmental organizations.

Even before the UN climate conference in Kyoto in 1997, environmentalist groups and leading climate researchers began joining forces to put pressure on industry and politicians. In August 1997, Greenpeace drafted a letter to The Times newspaper in London to be sent in the name of British researchers. All the climatologists had to do was sign on the dotted line. In October of that year, other climate researchers, ostensibly acting on behalf of the World Wildlife Fund, or WWF, e-mailed hundreds of colleagues calling on them to sign an appeal to politicians in connection with the Kyoto conference.

The tactic was controversial. Whereas German scientists immediately put their names on the list, others had their doubts. In a leaked e-mail dated Nov. 25, 1997, renowned American paleoclimatologist Tom Wigley told a colleague he was worried that such appeals were almost as “dishonest” as the propaganda employed by the skeptics’ lobby. Personal views, Wigley said, should not be confused with scientific facts.

Researchers ‘Beef Up’ Appeals by Environmental Groups

Wigley’s warnings fell on deaf ears, and many of his colleagues unthinkingly fell in line with the environmental lobby. Asked to comment by WWF, climate researchers in Australia and Britain, for example, made particularly pessimistic predictions. What’s more, the experts said they had been fully aware that the WWF wanted to have the warnings “beefed up,” as it had stated in an e-mail dated July 1999. One Australian climatologist wrote to colleagues on July 28, 1999, that he would be “very concerned” if environmental protection literature contained data that might suggest “large areas of the world will have negligible climate change.”

Two years later, German climate researchers at the Potsdam Institute for Climate Impact Research (PIK) and from the Hamburg-based Max Planck Institute for Meteorology also drew up a position paper together with WWF. Germany’s Wuppertal Institute for Climate, Environment and Energy had been a pioneer in this respect. It was very open about working together with the environmental group BUND, the German chapter of Friends of the Earth, in developing climate protection strategy recommendations in the mid-1990s.

From then on, the battle was all about dominating the media. The media are often accused of giving climate-change skeptics too much attention. Indeed, theories that cast doubt on global warming with little scientific backing regularly appeared in the press. These included so-called “information brochures” sent to journalists by oil industry lobbyists.

This is partly because the US media, in particular, are extremely keen to ensure what they see as balanced reporting, in other words, giving both sides in a debate a chance to air their views. As a result, even the more outlandish theories of climate-change skeptics have been given as much airtime as the findings of established experts.

Media researchers believe the phenomenon of newsworthiness is another reason why anti-climate-change theories are reported so widely. The more unambiguous the warnings about an impending disaster, the more interesting critical viewpoints become. The media debate about the issue also focused on the potentially scandalous question of whether climatologists had speculated about nightmare scenarios simply in order to obtain access to research grants.

Renowned climate researcher Klaus Hasselmann of the Max Planck Institute for Meteorology rebuffed these accusations in a much-quoted article in the German newspaper Die Zeit in 1997. Hasselmann pointed out that scientific findings suggest that there is an extremely high likelihood that man was indeed responsible for climate change. “If we wait until the very last doubts have been overcome, it will be too late to do anything about it,” he wrote.

‘Climatologists Tend Not to Mention their More Extreme Suspicions’

Hasselmann blamed the media for all the hype. In fact, sociologists have identified “one-up debates” in the media in which darker and darker pictures were painted of the possible consequences of global warming. “Many journalists don’t want to hear about uncertainty in the research findings,” Max Planck Institute researcher Martin Claussen complains. Sociologist Peter Weingart criticizes not just journalists but also scientists. “Climatologists tend not to mention their more extreme suspicions,” he bemoans.

Whereas the debate flared up time and again in the US, “the skeptics in Germany were quickly marginalized again,” recalls sociologist Hans Peter Peters of the Forschungszentrum Jülich research center, who analyzed climate-related reporting in Germany. Peters believes that the communication strategy of leading researchers has proven successful in the long run. “The announced climate problem has been taken seriously by the media,” he says. He even sees signs of a “strong alignment of scientists and journalists in reporting about climate change.”

Nonetheless, scientists have tried to apply pressure on the media when they disagreed with the way stories were reported. Editorial offices have been inundated with protest letters whenever news stories said that the dangers of runaway climate change appeared to be diminishing. E-mails show that climate researchers coordinated their protests, targeting specific journalists to vent their fury on. For instance, when an article entitled “What Happened to Global Warming?” appeared on the BBC website in October 2009, British scientists first discussed the matter among themselves by e-mail before demanding that an editor regarded as balanced explain what was going on.

Social scientists are well aware that good press can do wonders for a person’s career. David Phillips, a sociologist at the University of California, San Diego, suggests that the battle for supremacy in the mass media is not only a means to mobilize public support, but also a great way to gain kudos within the scientific community.

The leaked e-mails show that some researchers used tactics that are every bit as ruthless as those employed by critics outside the scientific community. Under attack from global-warming skeptics, the climatologists took to the barricades. Indeed, the criticism only seemed to increase the scientists’ resolve. And worried that any uncertainties in their findings might be pounced upon, the scientists desperately tried to conceal them.

“Don’t leave anything for the skeptics to cling on to,” wrote renowned British climatologist Phil Jones of the University of East Anglia (UEA) in a leaked e-mail dated Oct. 4, 2000. Jones, who heads UEA’s Climatic Research Unit (CRU), is at the heart of the e-mail scandal. But there have always been plenty of studies that critics could quote, because the research findings continue to be ambiguous.

At times scientists have been warned by their own colleagues that they may be playing into the enemy’s hands. Kevin Trenberth from the National Center for Atmospheric Research in the US, for example, came under enormous pressure from oil-producing nations while he was drawing up the IPCC’s second report in 1995. In January 2001, he wrote an e-mail to his colleague John Christy at the University of Alabama complaining that representatives from Saudi Arabia had quoted from one of Christy’s studies during the negotiations over the third IPCC climate report. “We are under no gag rule to keep our thoughts to ourselves,” Christy replied.

‘Effective Long-Term Strategies’

Paleoclimatologist Michael Mann from Pennsylvania State University also tried to rein in his colleagues. In an e-mail dated Sept. 17, 1998, he urged them to form a “united front” in order to be able to develop “effective long-term strategies.” Paleoclimatologists try to reconstruct the climate of the past. Their primary source of data is old trees, whose annual rings give clues about the weather in years gone by.

No one knows better than the researchers themselves that tree data can be very unreliable, and an exchange of e-mails shows that they discussed the problems at length. Even so, meaningful climate reconstructions can be made if the data are analyzed carefully. The only problem is that you get different climate change graphs depending on which data you use.

Mann and his colleagues were pioneers in this field. They were the first to draw up a graph of average temperatures in the Northern Hemisphere over the past 1,000 years. That is indisputably an impressive achievement. Because of its shape, their diagram was dubbed the “hockey stick graph.” According to the graph, the climate changed little for about 850 years, then temperatures rose dramatically (the blade of the stick). However, a few years later, it turned out that the graph was not as accurate as first assumed.

‘I’d Hate to Give It Fodder’

In 1999, CRU chief Phil Jones and fellow British researcher Keith Briffa drew up a second climate graph. Perhaps not surprisingly, this led to a row between the two groups about which graph should be published in the summary for politicians at the front of the IPCC report.

The hockey stick graph was appealing on account of its convincing shape. After all, the unique temperature rise of the last 150 years appeared to provide clear proof of man’s influence on our climate. But Briffa cautioned about overestimating the significance of the hockey stick. In an e-mail to his colleagues in September 1999, Briffa said that Mann’s graph “should not be taken as read,” even though it presented “a nice tidy story.”

In contrast to Mann et al.’s hockey stick, Briffa’s graph contained a warm period in the High Middle Ages. “I believe that the recent warmth was probably matched about 1,000 years ago,” he wrote. Fortunately for the researchers, the hefty dispute that followed was quickly defused when they realized they were better served by joining forces against the common enemy. Climate-change skeptics use Briffa’s graph to cast doubt on the assertion that man’s activities have affected our climate. They claim that if our atmosphere is as warm now as it was in the Middle Ages, when there was no man-made pollution, carbon dioxide emissions can’t possibly be responsible for the rise in temperatures.

“I don’t think that doubt is scientifically justified, and I’d hate to be the one to have to give it fodder,” Mann wrote to his colleagues. The tactic proved a successful one. Mann’s hockey stick graph ended up at the front of the UN climate report of 2001. In fact it became the report’s defining element.

An Innocent Phrase Seized by Republicans

In order to get unambiguous graphs, the researchers had to tweak their data slightly. In probably the most infamous of the Climategate e-mails, Phil Jones wrote that he had used Mann’s “trick” to “hide the decline” in temperatures. Following the leaking of the e-mails, the expression “hide the decline” was turned into a song about the alleged scandal and seized upon by Republican politicians in the US, who quoted it endlessly in an attempt to discredit the climate experts.

But what appeared at first glance to be fraud was actually merely a face-saving fudge: Tree-ring data indicate no global warming since the mid-20th century and therefore contradict the temperature measurements. The clearly erroneous tree data were thus corrected using the so-called “trick” with the temperature graphs.

The row grew more and more bitter as the years passed, as the leaked e-mails between researchers show. Since the late 1990s, several climate-change skeptics have repeatedly asked Jones and Mann for their tree-ring data and calculation models, citing the legal right to access scientific data.

‘I Think I’ll Delete the File’

In 2003, mineralogist Stephen McIntyre and economist Ross McKitrick published a paper that highlighted systematic errors in the statistics underlying the hockey stick graph. However, Michael Mann dismissed the paper, which he saw as part of a “highly orchestrated, heavily funded corporate attack campaign,” as he wrote in September 2009.

More and more, Mann and his colleagues refused to hand out their data to “the contrarians,” as skeptical researchers were referred to in a number of e-mails. On Feb. 2, 2005, Jones went so far as to write, “I think I’ll delete the file rather than send it to anyone.”

Today, Mann defends himself by saying his university has looked into the e-mails and decided that he had not suppressed data at any time. However, an inquiry conducted by the British parliament came to a very different conclusion. “The leaked e-mails appear to show a culture of non-disclosure at CRU and instances where information may have been deleted to avoid disclosure,” the House of Commons’ Science and Technology Committee announced in its findings on March 31.

Sociologist Peter Weingart believes that the damage could be irreparable. “A loss of credibility is the biggest risk inherent in scientific communication,” he said, adding that trust can only be regained through complete transparency.

The two sides became increasingly hostile toward one another. They debated about whom they could trust, who was a part of their “team” — and who among them might secretly be a skeptic. All those who were between the two extremes or even tried to maintain links with both sides soon found themselves under suspicion.

This distrust helped foster a system of favoritism, as the hacked e-mails show. According to these, Jones and Mann had a huge influence over what was published in the specialist journals. Those who controlled the journals also controlled what entered the public arena, and therefore what was perceived as scientific reality.

All journal articles are checked anonymously by colleagues before publication as part of what is known as the “peer review” process. Behind closed doors, researchers complained for years that Mann, who is a sought-after reviewer, acted as a kind of “gatekeeper” for journal articles on paleoclimatology. It is well known that renowned scientists can gain influence within journals. But it’s a risky business. “The danger that deserved reputations become illegitimate power is the greatest risk that science faces,” Weingart says.

From Peer Review to Connivance

In an e-mail to SPIEGEL ONLINE, Mann rejected the claims that he exercised undue influence. He said the editors of scientific journals, not he, chose the reviewers. However, as Weingart points out, in specialist areas like paleoclimatology, which have only a handful of experts, certain scientists can gain considerable power, provided they have good connections to the editors of the relevant journals.

The “hockey team,” as the group around Mann and Jones liked to call itself, undoubtedly had good connections to the journals. The colleagues coordinated and discussed their reviews among themselves. “Rejected two papers from people saying CRU has it wrong over Siberia,” CRU head Jones wrote to Mann in March 2004. The articles he was referring to were about tree data from Siberia, a basis of the climate graphs. In fact, it later turned out that Jones’ CRU group probably misinterpreted the Siberian data, and the findings of the study rejected by Jones in March 2004 were actually correct.

However, Jones and Mann had the backing of the majority of the scientific community in another case. A study published in Climate Research in 2003 looked into findings on the current warm period and the medieval one, concluding that the 20th century was “probably not the warmest nor a uniquely extreme climatic period of the last millennium.” Although climate skeptics were thrilled, most experts thought the study was methodologically flawed. But if the pro-climate-change camp controlled the peer review process, then why was it ever published?

Plugging the Leak

In an e-mail dated March 11, 2003, Michael Mann said there was only one possibility: Skeptics had taken over the journal. He therefore demanded that the enemy be stopped in its tracks. The “hockey team” launched a powerful counterattack that shook the journal Climate Research to its foundations. Several of its editors resigned. Vociferous as they were, though, the skeptics did not have that much influence. If alarmist climate studies turned out to be flawed (and this was the case on several occasions), the consequences of the predicted climate catastrophe would not be as dire as forecast.

Yet there were also limits to the influence had by Mann and Jones, as became apparent in 2005, when relentless hockey stick critics Ross McKitrick and Stephen McIntyre were able to publish studies in the most important geophysical journal, Geophysical Research Letters (GRL). “Apparently, the contrarians now have an ‘in’ with GRL,” Mann wrote to his colleagues in a leaked e-mail. “We can’t afford to lose GRL.”

Mann discovered that one of the editors of GRL had once worked at the same university as the feared climate skeptic Patrick Michaels. He therefore put two and two together: “I think we now know how various papers have gotten published in GRL,” he wrote on January 20, 2005. At the same time, the scientists discussed how to get rid of GRL editor James Saiers, himself a climate researcher. Saiers quit his post a year later — allegedly of his own accord. “The GRL leak may have been plugged up now,” a relieved Mann wrote in an e-mail to the “hockey team.”

Internal Conflict and the External Façade

Climategate appears to confirm the criticism that the scientific system invariably encourages the formation of cartels. However, sociologist Hans Peter Peters cautions against over-interpreting the affair. He says alliances are commonplace in every area of the scientific world. “Internal communication within all groups differs from the facade,” Peters says.

Weingart also believes the inner workings of a group should not be judged by the criteria of the outside world. After all, controversy is the very basis of science, and “demarcation and personal conflict are inevitable.” Even so, he says the extent to which camps have built up in climate research is certainly unusual.

Weingart says the political ramifications only fuelled the battle between the two sides in the global warming debate. He believes that the more an issue is politicized, the deeper the rifts between opposing stances.

Immense public scrutiny made life extremely difficult for the scientists. On May 2, 2001, paleoclimatologist Edward Cook of the Lamont-Doherty Earth Observatory complained in an e-mail: “This global change stuff is so politicized by both sides of the issue that it is difficult to do the science in a dispassionate environment.” The need to summarize complex findings for a UN report appears only to have exacerbated the problem. “I tried hard to balance the needs of the science and the IPCC, which were not always the same,” Keith Briffa wrote in 2007. Max Planck researcher Martin Claussen says too much emphasis was put on consensus in an attempt to satisfy politicians’ demands.

And even scientists are not always interested solely in the actual truth of the matter. Weingart notes that public debate is mostly “only superficially about enlightenment.” Rather, it is more about “deciding on and resolving conflicts through general social agreement.” That’s why it helps to present unambiguous findings.

The Time for Clear Answers Is Over

However, it seems all but impossible to provide conclusive proof in climate research. Philosopher of science Silvio Funtowicz foresaw this dilemma as early as 1990. He described climate research as a “post-normal science.” Because of its high complexity, he said, it was subject to great uncertainty while, at the same time, harboring huge risks.

The experts therefore face a dilemma: They have little chance of giving the right advice. If they don’t sound the alarm, they are accused of not fulfilling their moral obligations. However, alarmist predictions are criticized if the predicted changes fail to materialize quickly.

Climatological findings will probably remain ambiguous even if further progress is made. Weingart says it’s now up to scientists and society to learn to come to terms with this. In particular, he warns, politicians must understand that there is no such thing as clear results. “Politicians should stop listening to scientists who promise simple answers,” Weingart says.

Translated from the German by Jan Liebelt

A colorful oracle: A visitor watches an animation demonstrating oceanic acidity levels at the UN Climate Change Conference in Copenhagen in December.

Red colors equal a warmer future: Climate prognoses forecast a noticeable warming of the planet if greenhouse-gas emissions are not curtailed.

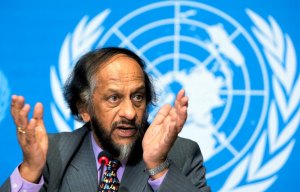

Several climate researchers are calling for the resignation of Rajendra Pachauri, a Nobel Peace Prize winner and chairman of the UN’s Intergovernmental Panel on Climate Change, because he took too long to acknowledge that the panel had published inaccurate research on climate change.

The German Climate Computing Center (DKRZ) in Hamburg uses supercomputers to predict future climates.
