
The Berkeley Earth project say they are about to reveal the definitive truth about global warming

Ian Sample

guardian.co.uk

Sunday 27 February 2011 20.29 GMT

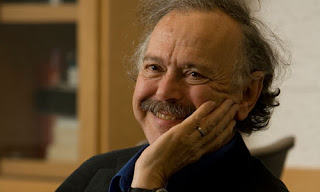

Richard Muller of the Berkeley Earth project is convinced his approach will lead to a better assessment of how much the world is warming. Photograph: Dan Tuffs for the Guardian

In 1964, Richard Muller, a 20-year-old graduate student with neat-cropped hair, walked into Sproul Hall at the University of California, Berkeley, and joined a mass protest of unprecedented scale. The activists, a few thousand strong, demanded that the university lift a ban on free speech and ease restrictions on academic freedom, while outside on the steps a young folk-singer called Joan Baez led supporters in a chorus of We Shall Overcome. The sit-in ended two days later when police stormed the building in the early hours and arrested hundreds of students. Muller was thrown into Oakland jail. The heavy-handedness sparked further unrest and, a month later, the university administration backed down. The protest was a pivotal moment for the civil liberties movement and marked Berkeley as a haven of free thinking and fierce independence.

Today, Muller is still on the Berkeley campus, probably the only member of the free speech movement arrested that night to end up with a faculty position there – as a professor of physics. His list of publications is testament to the free rein of tenure: he worked on the first light from the big bang, proposed a new theory of ice ages, and found evidence for an upturn in impact craters on the moon. His expertise is highly sought after. For more than 30 years, he was a member of the independent Jason group that advises the US government on defence; his college lecture series, Physics for Future Presidents, was voted best class on campus, went stratospheric on YouTube and, in 2009, was turned into a bestseller.

For the past year, Muller has kept a low profile, working quietly on a new project with a team of academics hand-picked for their skills. They meet on campus regularly, to check progress, thrash out problems and hunt for oversights that might undermine their work. And for good reason. When Muller and his team go public with their findings in a few weeks, they will be muscling in on the ugliest and most hard-fought debate of modern times.

Muller calls his latest obsession the Berkeley Earth project. The aim is so simple that the complexity and magnitude of the undertaking are easy to miss. Starting from scratch, with new computer tools and more data than has ever been used, they will arrive at an independent assessment of global warming. The team will also make every piece of data it uses – 1.6bn data points – freely available on a website. It will post its workings alongside, including full information on how more than 100 years of data from thousands of instruments around the world are stitched together to give a historic record of the planet’s temperature.

Muller is fed up with the politicised row that all too often engulfs climate science. By laying all its data and workings out in the open, where they can be checked and challenged by anyone, the Berkeley team hopes to achieve something remarkable: a broader consensus on global warming. In no other field would Muller’s dream seem so ambitious, or perhaps, so naive.

“We are bringing the spirit of science back to a subject that has become too argumentative and too contentious,” Muller says, over a cup of tea. “We are an independent, non-political, non-partisan group. We will gather the data, do the analysis, present the results and make all of it available. There will be no spin, whatever we find.” Why does Muller feel compelled to shake up the world of climate change? “We are doing this because it is the most important project in the world today. Nothing else comes close,” he says.

Muller is moving into crowded territory with sharp elbows. There are already three heavyweight groups that could be considered the official keepers of the world’s climate data. Each publishes its own figures that feed into the UN’s Intergovernmental Panel on Climate Change. Nasa’s Goddard Institute for Space Studies in New York City produces a rolling estimate of the world’s warming. A separate assessment comes from another US agency, the National Oceanic and Atmospheric Administration (Noaa). The third group is based in the UK and led by the Met Office. They all take readings from instruments around the world to come up with a rolling record of the Earth’s mean surface temperature. The numbers differ because each group uses its own dataset and does its own analysis, but they show a similar trend. Since pre-industrial times, all point to a warming of around 0.75C.

You might think three groups was enough, but Muller rolls out a list of shortcomings, some real, some perceived, that he suspects might undermine public confidence in global warming records. For a start, he says, warming trends are not based on all the available temperature records. The data that is used is filtered and might not be as representative as it could be. He also cites a poor history of transparency in climate science, though others argue many climate records and the tools to analyse them have been public for years.

Then there is the fiasco of 2009 that saw roughly 1,000 emails from a server at the University of East Anglia’s Climatic Research Unit (CRU) find their way on to the internet. The fuss over the messages, inevitably dubbed Climategate, gave Muller’s nascent project added impetus. Climate sceptics had already attacked James Hansen, head of the Nasa group, for making political statements on climate change while maintaining his role as an objective scientist. The Climategate emails fuelled their protests. “With CRU’s credibility undergoing a severe test, it was all the more important to have a new team jump in, do the analysis fresh and address all of the legitimate issues raised by sceptics,” says Muller.

This latest point is where Muller faces his most delicate challenge. To concede that climate sceptics raise fair criticisms means acknowledging that scientists and government agencies have got things wrong, or at least could do better. But the debate around global warming is so highly charged that open discussion, which science requires, can be difficult to hold in public. At worst, criticising poor climate science can be taken as an attack on science itself, a knee-jerk reaction that has unhealthy consequences. “Scientists will jump to the defence of alarmists because they don’t recognise that the alarmists are exaggerating,” Muller says.

The Berkeley Earth project came together more than a year ago, when Muller rang David Brillinger, a statistics professor at Berkeley and the man Nasa called when it wanted someone to check its risk estimates of space debris smashing into the International Space Station. He wanted Brillinger to oversee every stage of the project. Brillinger accepted straight away. Since the first meeting he has advised the scientists on how best to analyse their data and what pitfalls to avoid. “You can think of statisticians as the keepers of the scientific method,” Brillinger told me. “Can scientists and doctors reasonably draw the conclusions they are setting down? That’s what we’re here for.”

For the rest of the team, Muller says he picked scientists known for original thinking. One is Saul Perlmutter, the Berkeley physicist who found evidence that the universe is expanding at an ever faster rate, courtesy of mysterious “dark energy” that pushes against gravity. Another is Art Rosenfeld, the last student of the legendary Manhattan Project physicist Enrico Fermi, and something of a legend himself in energy research. Then there is Robert Jacobsen, a Berkeley physicist who is an expert on giant datasets; and Judith Curry, a climatologist at Georgia Institute of Technology, who has raised concerns over tribalism and hubris in climate science.

Robert Rohde, a young physicist who left Berkeley with a PhD last year, does most of the hard work. He has written software that trawls public databases, themselves the product of years of painstaking work, for global temperature records. These are compiled, de-duplicated and merged into one huge historical temperature record. The data, by all accounts, are a mess. There are 16 separate datasets in 14 different formats and they overlap, but not completely. Muller likens Rohde’s achievement to Hercules’s enormous task of cleaning the Augean stables.
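To give a feel for the compile, de-duplicate and merge step, here is a minimal sketch in Python. It is not Rohde's software: the function name `merge_datasets`, the tuple layout and the station identifiers are all hypothetical, and it assumes the sources have already been converted to one format with matching station identifiers, which is the hard part in practice.

```python
def merge_datasets(datasets):
    """Merge several overlapping datasets into one record.

    Each dataset is a list of (station_id, month, temp_c) tuples.
    ASSUMPTION: station ids and months are already harmonised across
    sources, so an identical (station_id, month) pair is a duplicate.
    The first value seen for a pair is kept; later copies are dropped.
    """
    merged = {}
    for dataset in datasets:
        for station_id, month, temp_c in dataset:
            # setdefault only stores the value if the key is new,
            # so repeated readings are de-duplicated.
            merged.setdefault((station_id, month), temp_c)
    return merged


# Two hypothetical sources that overlap, but not completely.
source_a = [("S1", "1950-01", 3.2), ("S2", "1950-01", 7.0)]
source_b = [("S1", "1950-01", 3.2), ("S1", "1950-02", 4.1)]

record = merge_datasets([source_a, source_b])
print(len(record))
```

Keeping the first value seen is only one possible rule; a real pipeline would also have to decide what to do when two sources disagree about the same reading.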

The wealth of data Rohde has collected so far – and some dates back to the 1700s – makes for what Muller believes is the most complete historical record of land temperatures ever compiled. It will, of itself, Muller claims, be a priceless resource for anyone who wishes to study climate change. So far, Rohde has gathered records from 39,340 individual stations worldwide.

Publishing an extensive set of temperature records is the first goal of Muller’s project. The second is to turn this vast haul of data into an assessment on global warming. Here, the Berkeley team is going its own way again. The big three groups – Nasa, Noaa and the Met Office – work out global warming trends by placing an imaginary grid over the planet and averaging temperature records in each square. So for a given month, all the records in England and Wales might be averaged out to give one number. Muller’s team will take temperature records from individual stations and weight them according to how reliable they are.
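The difference between the two approaches can be shown with a toy calculation in Python. This is a sketch under stated assumptions, not the method of any of the groups named: the function names, the 5-degree cell size, the sample readings and the reliability scores are invented for illustration, and the grid version ignores the fact that cells near the poles cover less area.

```python
from collections import defaultdict
from math import floor


def grid_average(records, cell_deg=5.0):
    """Average readings inside each lat/lon cell, then average the cells.

    records: list of (lat, lon, temp_c, reliability) tuples.
    ASSUMPTION: every cell counts equally (no area weighting).
    """
    cells = defaultdict(list)
    for lat, lon, temp_c, _reliability in records:
        key = (floor(lat / cell_deg), floor(lon / cell_deg))
        cells[key].append(temp_c)
    cell_means = [sum(temps) / len(temps) for temps in cells.values()]
    return sum(cell_means) / len(cell_means)


def reliability_weighted_average(records):
    """Average individual stations, weighting each by a reliability score.

    ASSUMPTION: reliability is a positive number supplied with each
    record; how such a score would be derived is not covered here.
    """
    total_weight = sum(r[3] for r in records)
    return sum(r[2] * r[3] for r in records) / total_weight


# Hypothetical readings: two nearby stations and one distant, less trusted one.
readings = [
    (51.5, -0.1, 10.0, 1.0),
    (52.0, -1.0, 12.0, 1.0),
    (40.0, -100.0, 20.0, 0.5),
]

print(grid_average(readings))
print(reliability_weighted_average(readings))
```

In the grid version the two nearby stations collapse into one number before the final average, whereas in the weighted version every station contributes in proportion to how much it is trusted.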

This is where the Berkeley group faces its toughest task by far and it will be judged on how well it deals with it. There are errors running through global warming data that arise from the simple fact that the global network of temperature stations was never designed or maintained to monitor climate change. The network grew in a piecemeal fashion, starting with temperature stations installed here and there, usually to record local weather.

Among the trickiest errors to deal with are so-called systematic biases, which skew temperature measurements in fiendishly complex ways. Stations get moved around, replaced with newer models, or swapped for instruments that record in celsius instead of fahrenheit. The times at which measurements are taken vary, from say 6am to 9pm. The accuracy of individual stations drifts over time and even changes in the surroundings, such as growing trees, can shield a station more from wind and sun one year to the next. Each of these interferes with a station’s temperature measurements, perhaps making it read too cold, or too hot. And these errors combine and build up.

This is the real mess that will take a Herculean effort to clean up. The Berkeley Earth team is using algorithms that automatically correct for some of the errors, a strategy Muller favours because it doesn’t rely on human interference. When the team publishes its results, this is where the scrutiny will be most intense.

Despite the scale of the task, and the fact that world-class scientific organisations have been wrestling with it for decades, Muller is convinced his approach will lead to a better assessment of how much the world is warming. “I’ve told the team I don’t know if global warming is more or less than we hear, but I do believe we can get a more precise number, and we can do it in a way that will cool the arguments over climate change, if nothing else,” says Muller. “Science has its weaknesses and it doesn’t have a stranglehold on the truth, but it has a way of approaching technical issues that is a closer approximation of truth than any other method we have.”

He will find out soon enough if his hopes to forge a true consensus on climate change are misplaced. It might not be a good sign that one prominent climate sceptic contacted by the Guardian, Canadian economist Ross McKitrick, had never heard of the project. Another, Stephen McIntyre, whom Muller has defended on some issues, hasn’t followed the project either, but said “anything that [Muller] does will be well done”. Phil Jones at the University of East Anglia was unclear on the details of the Berkeley project and didn’t comment.

Elsewhere, Muller has qualified support from some of the biggest names in the business. At Nasa, Hansen welcomed the project, but warned against over-emphasising what he expects to be the minor differences between Berkeley’s global warming assessment and those from the other groups. “We have enough trouble communicating with the public already,” Hansen says. At the Met Office, Peter Stott, head of climate monitoring and attribution, was in favour of the project if it was open and peer-reviewed.

Peter Thorne, who left the Met Office’s Hadley Centre last year to join the Co-operative Institute for Climate and Satellites in North Carolina, is enthusiastic about the Berkeley project but raises an eyebrow at some of Muller’s claims. The Berkeley group will not be the first to put its data and tools online, he says. Teams at Nasa and Noaa have been doing this for many years. And while Muller may have more data, they add little real value, Thorne says. Most are records from stations installed from the 1950s onwards, and then only in a few regions, such as North America. “Do you really need 20 stations in one region to get a monthly temperature figure? The answer is no. Supersaturating your coverage doesn’t give you much more bang for your buck,” he says. They will, however, help researchers spot short-term regional variations in climate change, something that is likely to be valuable as climate change takes hold.

Despite his reservations, Thorne says climate science stands to benefit from Muller’s project. “We need groups like Berkeley stepping up to the plate and taking this challenge on, because it’s the only way we’re going to move forwards. I wish there were 10 other groups doing this,” he says.

For the time being, Muller’s project is organised under the auspices of Novim, a Santa Barbara-based non-profit organisation that uses science to find answers to the most pressing issues facing society and to publish them “without advocacy or agenda”. Funding has come from a variety of places, including the Fund for Innovative Climate and Energy Research (funded by Bill Gates), and the Department of Energy’s Lawrence Berkeley Lab. One donor has had some climate bloggers up in arms: the man behind the Charles G Koch Charitable Foundation owns, with his brother David, Koch Industries, a company Greenpeace called a “kingpin of climate science denial”. On this point, Muller says the project has taken money from right and left alike.

No one who spoke to the Guardian about the Berkeley Earth project believed it would shake the faith of the minority who have set their minds against global warming. “As new kids on the block, I think they will be given a favourable view by people, but I don’t think it will fundamentally change people’s minds,” says Thorne. Brillinger has reservations too. “There are people you are never going to change. They have their beliefs and they’re not going to back away from them.”

Walking across the Berkeley campus, Muller stops outside Sproul Hall, where he was arrested more than 40 years ago. Today, the adjoining plaza is a designated protest spot, where student activists gather to wave banners, set up tables and make speeches on any cause they choose. Does Muller think his latest project will make any difference? “Maybe we’ll find out that what the other groups do is absolutely right, but we’re doing this in a new way. If the only thing we do is allow a consensus to be reached as to what is going on with global warming, a true consensus, not one based on politics, then it will be an enormously valuable achievement.”