After Thomas Sargent learned on Monday morning that he and colleague Christopher Sims had been awarded the Nobel Prize in Economics for 2011, the 68-year-old New York University professor struck an aw-shucks tone with an interviewer from the official Nobel website: “We’re just bookish types that look at numbers and try to figure out what’s going on.”

But no one who’d followed Prof. Sargent’s long, distinguished career would have been fooled by his attempt at modesty. He’d won for his part in developing one of economists’ main models of cause and effect: How can we expect people to respond to changes in prices, for example, or interest rates? According to the laureates’ theories, they’ll do whatever’s most beneficial to them, and they’ll do it every time. They don’t need governments to instruct them; they figure it out for themselves. Economists call this the “rational expectations” model. And it’s not just an abstraction: Bankers and policy-makers apply these formulae in the real world, so bad models lead to bad policy.

Which is perhaps why, by the end of that interview on Monday, Prof. Sargent was adopting a more realistic tone: “We experiment with our models,” he explained, “before we wreck the world.”

Rational-expectations theory and its corollary, the efficient-market hypothesis, have been central to mainstream economics for more than 40 years. And while they may not have “wrecked the world,” some critics argue these models have blinded economists to reality: Certain the universe was unfolding as it should, they failed both to anticipate the financial crisis of 2008 and to chart an effective path to recovery.

The economic crisis has produced a crisis in the study of economics – a growing realization that if the field is going to offer meaningful solutions, greater attention must be paid to what is happening in university lecture halls and seminar rooms.

While the protesters occupying Wall Street are not carrying signs denouncing rational-expectations and efficient-market modelling, perhaps they should be.

They wouldn’t be the first young dissenters to call economics to account. In June of 2000, a small group of elite graduate students at some of France’s most prestigious universities declared war on the economic establishment. This was an unlikely group of student radicals, whose degrees could be expected to lead them to lucrative careers in finance, business or government if they didn’t rock the boat. Instead, they protested – not about tuition or workloads, but that too much of what they studied bore no relation to what was happening outside the classroom walls.

They launched an online petition demanding greater realism in economics teaching, less reliance on mathematics “as an end in itself” and more space for approaches beyond the dominant neoclassical model, including input from other disciplines, such as psychology, history and sociology. Their conclusion was that economics had become an “autistic science,” lost in “imaginary worlds.” They called their movement Autisme-economie.

The students’ timing is notable: It was the spring of 2000, when the world was still basking in the glow of “the Great Moderation,” when for most of a decade Western economies had been enjoying a prolonged period of moderate but fairly steady growth.

Some economists were daring to think the unthinkable – that their understanding of how advanced capitalist economies worked had become so sophisticated that they might finally have succeeded in smoothing out the destructive gyrations of capitalism’s boom-and-bust cycle. (“The central problem of depression prevention has been solved,” declared another Nobel laureate, Robert Lucas of the University of Chicago, in 2003 – five years before the greatest economic collapse in more than half a century.)

The students’ petition sparked a lively debate. The French minister of education established a committee on economic education. Economics students across Europe and North America began meeting and circulating petitions of their own, even as defenders of the status quo denounced the movement as a Trotskyite conspiracy. By September, the first issue of the Post-Autistic Economic Newsletter was published in Britain.

As The Independent summarized the students’ message: “If there is a daily prayer for the global economy, it should be, ‘Deliver us from abstraction.’”

It seems that entreaty went unheard through most of the discipline before the economic crisis, not to mention in the offices of hedge funds and the Stockholm Nobel selection committee. But is it ringing louder now? And how did economics become so abstract in the first place?

The great classical economists of the late 18th and early 19th centuries had no problem connecting to the real world – the Industrial Revolution had unleashed profound social and economic changes, and they were trying to make sense of what they were seeing. Yet Adam Smith, who is considered the founding father of modern economics, would have had trouble understanding the meaning of the word “economist.”

What is today known as economics arose out of two larger intellectual traditions that have since been largely abandoned. One is political economy, which is based on the simple idea that economic outcomes are often determined largely by political factors (as well as vice versa). But when political-economy courses first started appearing in Canadian universities in the 1870s, the subject was still viewed as a small offshoot of a far more important topic: moral philosophy.

In The Wealth of Nations (1776), Adam Smith famously argued that the pursuit of enlightened self-interest by individuals and companies could benefit society as a whole. His notion of the market’s “invisible hand” laid the groundwork for much of modern neoclassical and neo-liberal, laissez-faire economics. But unlike today’s free marketers, Smith didn’t believe that the morality of the market was appropriate for society at large. Honesty, discipline, thrift and co-operation, not consumption and unbridled self-interest, were the keys to happiness and social cohesion. Smith’s vision was a capitalist economy in a society governed by non-capitalist morality.

But by the end of the 19th century, the new field of economics no longer concerned itself with moral philosophy, and less and less with political economy. What was coming to dominate was a conviction that markets could be trusted to produce the most efficient allocation of scarce resources, that individuals would always seek to maximize their utility in an economically rational way, and that all of this would ultimately lead to some kind of overall equilibrium of prices, wages, supply and demand.

Political economy was less vital because government intervention disrupted the path to equilibrium and should therefore be avoided except in exceptional circumstances. And as for morality, economics would concern itself with the behaviour of rational, self-interested, utility-maximizing Homo economicus. What he did outside the confines of the marketplace would be someone else’s field of study.

As those notions took hold, a new idea emerged that would have surprised and probably horrified Adam Smith – that economics, divorced from the study of morality and politics, could be considered a science. By the beginning of the 20th century, economists were looking for theorems and models that could help to explain the universe. One historian described them as suffering from “physics envy.” Although they were dealing with the behaviour of humans, not atoms and particles, they came to believe they could accurately predict the trajectory of human decision-making in the marketplace.

In their desire to have their field be recognized as a science, economists increasingly decided to speak the language of science. From Smith’s innovations through John Maynard Keynes’s work in the 1930s, economics was argued in words. Now, it would go by the numbers.

The turning point came in 1947, when Paul Samuelson’s classic book Foundations of Economic Analysis for the first time presented economics as a branch of applied mathematics. Without “the invigorating kiss of mathematical method,” Samuelson maintained, economists had been practising “mental gymnastics of a particularly depraved type,” like “highly trained athletes who never run a race.” After Samuelson, no economist could ever afford to make that mistake.

And that may have been the greatest mistake of all: In a post-crisis, 2009 essay in The New York Times Magazine, Princeton economist and Nobel laureate Paul Krugman wrote, “The central cause of the profession’s failure was the desire for an all-encompassing, intellectually elegant approach that gave economists a chance to show off their mathematical prowess.”

Of course, nothing says science like a Nobel Prize. Prizes in chemistry, physics and medicine were first awarded in 1901, long before anyone would have thought that economics could or should be included. But by the late 1960s, the central bank of Sweden was determined to change that, and when the Nobel family objected, the bank agreed to put up the money itself, making it the only one of the prizes to be funded by taxpayers.

Officially, then, it is known as the Sveriges Riksbank Prize in Economic Sciences in Memory of Alfred Nobel – but that title is rarely used. On Monday morning, Prof. Sargent and Princeton University Prof. Sims were widely reported to have won the Nobel Prize in Economics.

The confusion is understandable, and deliberate, according to Philip Mirowski, an economic historian at the University of Notre Dame. “It’s part of the PR trick,” Prof. Mirowski argues. Awarding the economics prize immediately after the prizes for physics, chemistry and medicine helps to place economics on the same level as those other natural sciences.

The prize also has helped to transform one particular ideology into economic orthodoxy. Prof. Mirowski, who is co-writing a book on the history of the economics prize, notes that throughout the 1970s and 1980s, economists whose work supported neoclassical, pro-market, laissez-faire ideas won a disproportionate number of those honours, as well as support from the increasing numbers of well-funded think tanks and foundations that cleaved to the same lines. People who rejected those ideas, or were skeptical of the natural sciences model, were quickly marginalized, and their road to academic advancement often blocked.

The result was a homogenization of economic thought that Prof. Mirowski believes “has been pretty deleterious for economics on the whole.”

The road to hell is paved with good intentions, rational expectations and efficient markets

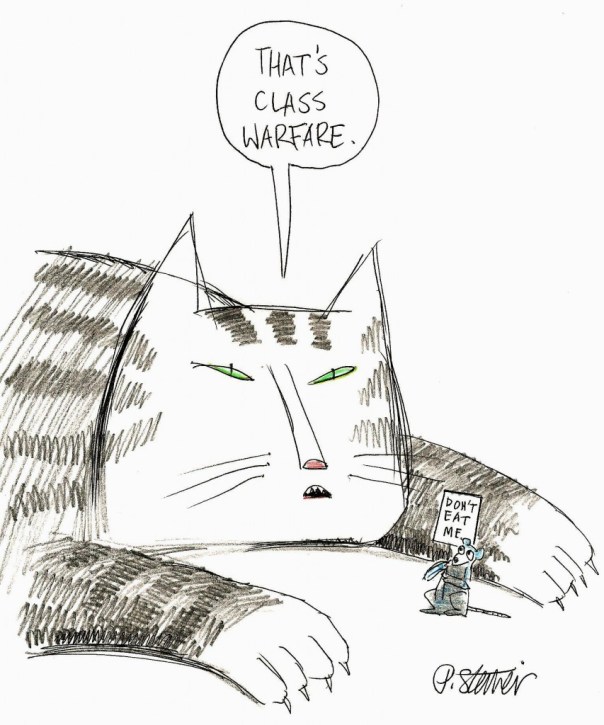

Many critics of neo-classical economics argue that it has a powerful pro-market bias that’s provided an intellectual justification for politicians ideologically disposed to reduce government involvement in the economy.

The rational-expectations model, for example, assumes that consumers and producers all inform themselves with all available data, understand how the world around them operates and will therefore respond to the same stimulus in essentially the same way. That allows economists to mathematically forecast how these “representative” consumers and producers would behave.

During a recession, say, a well-meaning government might want to enhance benefits for the unemployed. Prof. Sargent, for one, would caution against that, because a “rational” unemployed worker might then calculate that it’s better to reject a lower-paying job. He’s blamed much of the chronically high unemployment in some European countries on the presence of an army of voluntarily unemployed workers, and spoken out against the Obama administration’s recent efforts to extend unemployment benefits.

Indeed, under the rational-expectations model, most market interventions by governments and central banks wind up looking counterproductive.

Meanwhile, the efficient-markets hypothesis, developed by University of Chicago economist Eugene Fama in the 1970s, has dominated thinking about financial markets. It posits that the prices of stocks and other financial assets are always “efficient” because they accurately reflect all the available information about economic fundamentals.

By this reasoning, there can be no speculative price bubbles or busts in the stock or housing markets, and speculators with evil intentions cannot successfully manipulate markets. Conveniently, since markets are self-stabilizing, there’s no need for government regulation of them.

Critics point out that both these theories tend to ignore what John Maynard Keynes called the “animal spirits” – playing down human irrationality, inefficiency, venality and ignorance. Those are qualities that are hard to plug into a mathematical equation that purports to model human behaviour.

These models also have failed to take into account the profound changes wrought by globalization, and the growing importance of banks, hedge funds and other financial institutions. Yet they have successfully provided a “scientific” cover for an anti-regulatory political agenda that is popular on Wall Street and in some Washington political circles.

Inside jobs: Pay no attention to that banker behind the curtain

The Great Depression of the 1930s led many economists of the day to question some of their discipline’s most fundamental assumptions and produced a decades-long heyday for Keynesian economics. So far, the Great Recession has led to less of a fundamental shift.

Notre Dame’s Prof. Mirowski believes that more rethinking is necessary. “Everyone thought the banks would have to change their behaviour, but they got bailed out and nothing changed. The economics profession has also been bailed out because it is so highly interlinked with the financial profession, so of course they don’t change. Why would they change?”

Indeed, economics may be the dismal science, but there is nothing dismal about the payoffs for those at the top of the heap serving as advisers and consultants and sitting on various boards. Unlike some disciplines, economics has no guidelines governing conflict of interest and disclosure.

In 2010, the Academy Award-winning documentary Inside Job exposed several disturbing examples of academic economists calling for deregulation while working for financial-services companies. And in a study of 19 prominent financial economists, published last year by the Political Economy Research Institute at the University of Massachusetts Amherst, 13 were found to own stock or sit on the boards of private financial institutions, but in only four cases were those affiliations revealed when they testified or wrote op-eds concerning financial regulation.

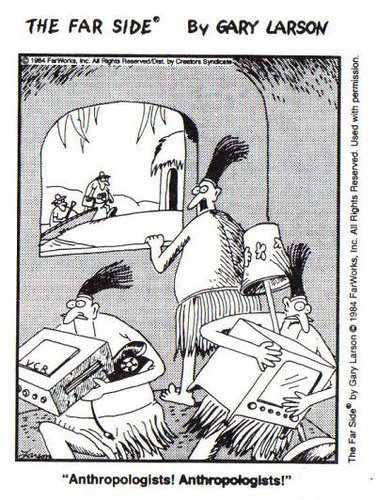

This year, the American Economic Association agreed to set up a committee to investigate whether economists should develop ethical guidelines similar to those already in place for sociologists, psychologists, statisticians and anthropologists.

But there appears to be little enthusiasm for the idea among mainstream economists. Prof. Lucas of the University of Chicago, in an interview with The New York Times, objected: “What disciplines economics, like any science, is whether your work can be replicated. It either stands up or it doesn’t. Your motivations and whatnot are secondary.”

Several billion pennies for their thoughts

The critics, however, are more numerous and considerably better financed than the French students a decade ago. In October, 2009, billionaire financier George Soros said that “the current paradigm has failed.” He resolved to help save economics from itself. He pledged $50-million toward the establishment of the New York-based Institute for New Economic Thinking (INET), with a mandate to promote changes in economic theory and practice through conferences, grants and campaigns for graduate and undergraduate education reforms.

Perry Mehrling, a professor of economics at New York’s Columbia University, is the chair of the curriculum task force at INET. He says his graduate students at Columbia are growing increasingly frustrated by the tendency to define the discipline by its tools instead of its subject matter – like the students in Paris a decade ago, they find little relationship between the mathematical models in class and the world outside the door.

Prof. Mehrling believes that economics education has become far too insular. Never mind cross-disciplinary study – even courses in economic history and the history of economic thought have all but disappeared, so students spend almost no time reading Smith, Keynes or other past masters.

“It’s not just that we’re not listening to sociologists,” Prof. Mehrling laments. “We’re not even listening to economists.”

He says he has no problem with teaching efficient-markets and rational-expectations theories, but as hypothesis, not catechism. “I object to the idea that these are articles of faith and if you don’t accept them, you are not a member of the tribe. These things need to be questioned and we need a broader conversation.”

The challenge, as Columbia University economist Joseph Stiglitz said at the opening conference of INET, is that “we need better theories of persistent deviations from rationality.”

Some of those theories are coming from the rapidly growing field of behavioural economics, which borrows insights about human motivation from cognitive psychology: A paper titled The Hubris Hypothesis of Corporate Takeovers, for example, examines how the egos of ambitious chief executive officers can lead them to pursue takeovers, even when all available evidence suggests that the move could be a disaster.

It is not yet clear how such new approaches can evolve into workable models, but they hint at what a post-autistic economics might look like.

Prof. Mehrling is cautiously optimistic. “There’s a recognition that things we thought were true aren’t necessarily true,” he argues, “and the world is more complicated and interesting than we thought – so all bets are off, and that’s exciting intellectually.”

Change comes slowly in academia. The few jobs that are available don’t generally go to people who challenge orthodoxy. But over the next decade, as the post-crash crop of economics students make their impact felt in government, business and schools, the lessons learned may well seep into the mainstream.

Theories based on assumptions of rationality, efficiency and equilibrium in the marketplace are likely to be treated with a great deal more skepticism. Homo economicus is a lot more anxious, irrational, unpredictable and complex than most economists believed. And, as Adam Smith recognized, he has a moral and ethical dimension that should not be ignored.

Today, the Post-Autistic Economic Network continues to publish its newsletter, now known as the Real-World Economic Review. It remains a thorn in the side of mainstream economics. In an editorial in January, 2010, the editors called for major economics organizations to censure those economists who “through their teachings, pronouncements and policy recommendations facilitated the global financial collapse” and pointed to the “continuing moral crisis within the economics profession.”

It is unlikely that Prof. Sargent will acknowledge any of this when he travels to Stockholm to accept his (sort of) Nobel Prize in December. Nor is he likely to speak about what role, if any, his models really might have played in “wrecking the world.”

But he did make one concession in his interview with the Nobel website this week: “Many of the practical problems are ahead of where the models are,” he admitted. “That’s life.”

Ira Basen is a radio producer, journalist and educator based in Toronto.


Lucas Jackson/Reuters: A protest march through the financial district of New York on October 12.

