Published: July 12th, 2011, Last Updated: July 13th, 2011

By Andrew Freedman

In Monday’s New York Times, Kim Severson and Kirk Johnson wrote an eloquent story on the intense drought that is maintaining a tight grip on a broad swath of America’s southern tier, from Arizona to Florida. Reporting from Georgia, Severson and Johnson detailed the plight of farmers struggling to make ends meet as the parched soil makes it nearly impossible for them to grow crops and feed livestock.

Monday’s story from the New York Times on drought.

The piece is a great example of how emotionally moving storytelling from a local perspective can convey the consequences of broad issues and trends, in this case, a major drought that has enveloped 14 states. In that sense, it served Times readers extraordinarily well.

However, when it came to giving readers a thorough understanding of the drought’s causes and aggravating factors, Severson and Johnson left out any mention of the elephant in the room: global climate change. Instead, they pinned the entire drought on a single factor, La Niña. In that respect, the story was overly simplistic, and even downright inaccurate.

Here’s how the story framed the drought’s causes:

From a meteorological standpoint, the answer is fairly simple. “A strong La Niña shut off the southern pipeline of moisture,” said David Miskus, who monitors drought for the National Oceanic and Atmospheric Administration.

The La Niña “lone gunman” theory is problematic from a scientific standpoint. Just last week, Marty Hoerling, the federal government’s top researcher tasked with examining how climate change may be influencing extreme weather and climate events, told reporters that “we cannot reconcile it [the drought] with just the La Niña impact alone, at least not at this time.”

Instead, the causal factors are more nuanced than that, and they do include global warming, since it is changing the background conditions in which such extreme events occur.

During a press conference last week from a drought management meeting in the parched city of Austin, Texas, Hoerling made clear that climate change is already increasing average temperatures across the drought region, and is expected to lead to more frequent and intense droughts in the Southwest. Other research indicates the trend towards a drier Southwest is already taking place. “There are recent regional tendencies toward more severe droughts in the southwestern United States, parts of Canada and Alaska, and Mexico,” stated a 2008 report from the U.S. Global Change Research Program.

As is the case with any extreme weather or climate event now, one cannot truly separate climate change from the mix, considering that droughts, floods, and other extreme events now occur in an environment that has been profoundly altered by human emissions of greenhouse gases, such as carbon dioxide. This doesn’t mean that climate change is causing all of these extreme events, but it does mean that climate change may be increasing the likelihood that some types of events will occur, and may be changing the characteristics of some extreme events, such as by making heat waves more intense.

The fact that the Times story detailed both the drought and the record heat accompanying it, yet left out any mention of climate change, was a particularly puzzling error of omission. Hoerling, for one, pointed to the extreme heat seen during this drought as a possible sign of things to come, as climate change helps produce dangerous combinations of heat and drought.

“We haven’t necessarily dealt with drought and heat at the same time in such a persistent way, and that’s a new condition,” Hoerling said, noting that higher temperatures only hasten the drying of soils.

Many ponds in Texas, such as this one in Rusk County, were nearly dry by late June 2011. Credit: agrilifetoday/flickr.

Texas had its warmest June on record, for example, and on June 26th, Amarillo, Texas, recorded its highest temperature on record for any month, at 111°F. According to the Weather Channel, parts of Oklahoma and Texas, including Oklahoma City, Dallas, and Austin, have already exceeded their yearly average number of days at or above 100°F. The heat is related to the drought: when soil moisture is so low, little of the sun’s energy goes into evaporating water, so more of it goes toward heating the air directly.

It’s unfortunate that the Times story, a searing portrayal of how a drought can hit communities already down on their luck because of economic troubles, did not include at least some discussion of climate change. As I’ve shown here, and as climate blogger Joe Romm has also pointed out, there was sufficient evidence to justify raising the topic of climate change in that story, and in many others like it. After all, if the media doesn’t make an effort to evaluate the evidence linking extreme weather and climate change, how can we expect the public to understand how global warming may affect their lives?

At Climate Central, our scientists are working to better understand whether and how climate change is increasing the likelihood of certain extreme weather events, such as heat waves, while at the same time, our journalists are covering the Southern drought and wildfire situation with the goal of making sure our readers understand what scientific studies show about global warming and extreme events.

This is not an easy task, but it need not be such a lonely one.

Update, July 13: The Times published an editorial on the drought today, which also blames the drought squarely on La Niña-related weather patterns, and makes no mention of climate change impacts or projections.

* * *

EDITORIAL (New York Times)

Suffering in the Parched South

Published: July 12, 2011

Right now, the official drought map of the United States looks as if it has been set on fire and scorched at the bottom edge. Scorched is how much of the Southeast and Southwest feel, in the midst of a drought that is the most extreme since the 1950s and possibly since the Dust Bowl of the 1930s. The government has classified much of this drought as D4, which means exceptional. The outlook through late September shows possible improvement in some places, but in most of Texas, Oklahoma, southern Arkansas, and northern Louisiana and Mississippi the drought is expected to worsen.

Dry conditions began last year and have only intensified as temperatures rose above 100 in many areas. Rain gauges have been empty for months, causing a region-wide search for new underground sources of water as streams and lakes dry up. The drought is produced by a pattern of cooling in the Pacific called La Niña. A cooler ocean means less moisture in the atmosphere, which shuts down the storms shuttling east across the region.

Droughts are measured in dollars as well as degrees. The prospects for cattle and wheat, corn and cotton crops across the South are dire. There is no way yet to estimate the ultimate cost of this drought because there is no realistic estimate of when it will end. Farmers have been using crop insurance payments, and federal relief is available in disaster areas, including much of Texas. But the only real relief will be the end of the dry, hot winds and the beginning of long, settled rains.

* * *

Drought Spreads Pain From Florida to Arizona

Grant Blankenship for The New York Times. Buster Haddock, an agricultural scientist at the University of Georgia, in a field where cotton never had the chance to grow.

By KIM SEVERSON and KIRK JOHNSON

Published: July 11, 2011

COLQUITT, Ga. — The heat and the drought are so bad in this southwest corner of Georgia that hogs can barely eat. Corn, a lucrative crop with a notorious thirst, is burning up in fields. Cotton plants are too weak to punch through soil so dry it might as well be pavement.

Waiting for Rain

Dangerously Dry – Nearly a fifth of the contiguous United States has been faced with the worst drought in recent years.

The Dry Season

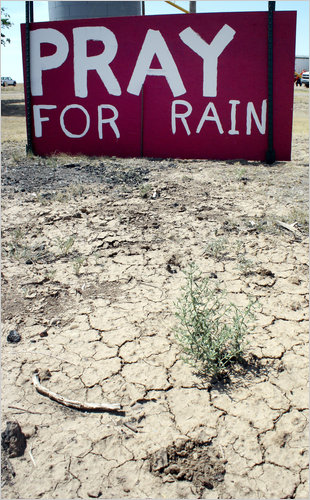

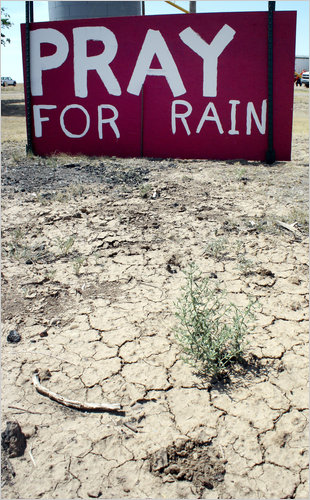

OKLAHOMA A simple, if plaintive, message from the residents of Hough, in the panhandle, late last month. Shawn Yorks/The Guymon Daily Herald, via Associated Press

Farmers with the money and equipment to irrigate are running wells dry in the unseasonably early and particularly brutal national drought that some say could rival the Dust Bowl days.

“It’s horrible so far,” said Mike Newberry, a Georgia farmer who is trying to grow cotton, corn and peanuts on a thousand acres. “There is no description for what we’ve been through since we started planting corn in March.”

The pain has spread across 14 states, from Florida, where severe water restrictions are in place, to Arizona, where ranchers could be forced to sell off entire herds of cattle because they simply cannot feed them.

In Texas, where the drought is the worst, virtually no part of the state has been untouched. City dwellers and ranchers have been tormented by excessive heat and high winds. In the Southwest, wildfires are chewing through millions of acres.

Last month, the United States Department of Agriculture designated all 254 counties in Texas natural disaster areas, qualifying them for varying levels of federal relief. More than 30 percent of the state’s wheat fields might be lost, adding pressure to a crop in short supply globally.

Even if weather patterns shift and relief-giving rain comes, losses will surely head past $3 billion in Texas alone, state agricultural officials said.

Most troubling is that the drought, which could go down as one of the nation’s worst, has come on extra hot and extra early. It has its roots in 2010 and continued through the winter. The five months from this February to June, for example, were so dry that they shattered a Texas record set in 1917, said Don Conlee, the acting state climatologist.

Oklahoma has had only 28 percent of its normal summer rainfall, and the heat has blasted past 90 degrees for a month.

“We’ve had a two- or three-week start on what is likely to be a disastrous summer,” said Kevin Kloesel, director of the Oklahoma Climatological Survey.

The question, of course, becomes why. In a spring and summer in which weather news has been dominated by epic floods and tornadoes, it is hard to imagine that more than a quarter of the country is facing an equally daunting but very different kind of natural disaster.

From a meteorological standpoint, the answer is fairly simple. “A strong La Niña shut off the southern pipeline of moisture,” said David Miskus, who monitors drought for the National Oceanic and Atmospheric Administration.

The weather pattern called La Niña is an abnormal cooling of Pacific waters. It usually follows El Niño, which is an abnormal warming of those same waters.

Although a new forecast from the National Weather Service’s Climate Prediction Center suggests that this dangerous weather pattern could revive in the fall, many in the parched regions find themselves in the unlikely position of hoping for a season of heavy tropical storms in the Southeast and drenching monsoons in the Southwest.

Climatologists say the great drought of 2011 is starting to look a lot like the one that hit the nation in the early to mid-1950s. That, too, dried a broad part of the southern tier of states into leather and remains a record breaker.

But this time, things are different in the drought belt. With states and towns short on cash and unemployment still high, the stress on the land and the people who rely on it for a living is being amplified by political and economic forces, state and local officials say. As a result, this drought is likely to have the cultural impact of the great 1930s drought, which hammered an already weakened nation.

“In the ’30s, you had the Depression and everything that happened with that, and drought on top,” said Donald A. Wilhite, director of the school of natural resources at the University of Nebraska in Lincoln and former director of the National Drought Mitigation Center. “The combination of those two things was devastating.”

Although today’s economy is not as bad, many Americans ground down by prolonged economic insecurity have little wiggle room to handle the effects of a prolonged drought. Government agencies are in the same boat.

“Because we overspent, the Legislature overspent, we’ve been cut back and then the drought comes along and we don’t have the resources and federal government doesn’t, and so we just tighten our belt and go on,” said Donald Butler, the director of the Arizona Department of Agriculture.

The drought is having some odd effects, economically and otherwise.

“One of the biggest impacts of the drought is going to be the shrinking of the cattle herd in the United States,” said Bruce A. Babcock, an agricultural economist at Iowa State University in Ames. And that will have a paradoxical but profound impact on the price of a steak.

Ranchers whose grass was killed by drought cannot afford to sustain cattle with hay or other feed, which is also climbing in price. Their response will most likely be to send animals to slaughter early. That glut of beef would lower prices temporarily.

But America’s cattle supply will ultimately be lower at a time when the global supply is already low, potentially resulting in much higher prices in the future.

There are other problems. Fishing tournaments have been canceled in Florida and Mississippi, just two of the states where low water levels have kept recreational users from lakes and rivers. In Texas, some cities are experiencing blackouts because airborne deposits of salt and chemicals are building up on power lines, triggering surges that shut down the system. In times of normal weather, rain usually washes away the environmental buildup. Instead, power company crews in cities like Houston are being dispatched to spray electrical lines.

In this corner of Georgia, where temperatures have been over 100 and rainfall has been off by more than half, fish and wildlife officials are worried over the health of the shinyrayed pocketbook and the oval pigtoe mussels, both freshwater species on the endangered species list.

The mussels live in Spring Creek, which is dangerously low and borders Terry Pickle’s 2,000-acre farm here. He pulls his irrigation from wells that tie into the water system of which Spring Creek is a part.

Whether nature or agriculture is to blame remains a debate in a state that for 20 years has been embroiled in a water war with Alabama and Florida. Meanwhile, Colquitt has allowed the state to drill a special well to pump water back into the creek to save the mussels from extinction.

Most farmers here are much more worried about the crops than the mussels. With cotton and corn prices high, they had high hopes for the season. But many have had to replant fields several times to get even one crop to survive. Others, like Mr. Pickle, have relied on irrigation so expensive that it threatens to eat into any profits.

The water is free, but the system used to get it from the ground runs on diesel fuel. His bill for May and June was an unheard-of $88,442.

Thousands of small stories like that will all contribute to the ultimate financial impact of the drought, which will not be known until it is over. And no one knows when that will be.

The United States Department of Agriculture’s Farm Service Agency has already provided over $75 million in assistance to ranchers nationwide, with most of it going to Florida, New Mexico and Texas. An additional $62 million in crop insurance indemnities has already been provided to help other producers.

Economists say that adding up the effects of drought is far more complicated than, say, those of a hurricane or tornado, which destroy structures that have set values. With drought, a shattered wheat or corn crop is a loss to one farmer, and it has a specific price tag. But all those individual losses punch a hole in the food supply and drive prices up. That is good news for a farmer who manages to get a crop in. The final net costs down the line are thus dispersed, and mostly passed along.

That means grocery shoppers will feel the effects of the drought at the dinner table, where the cost of staples like meat and bread will most likely rise, said Michael J. Roberts, an associate professor of agricultural and resource economics at North Carolina State University in Raleigh, N.C. “The biggest losers are consumers,” he said.

Kim Severson reported from Colquitt, Ga., and Kirk Johnson from Denver. Dan Frosch contributed reporting from Denver.
