In the Salinas Valley, America’s “Salad Bowl,” startups selling machine learning and remote sensing are finding customers.

Rowan Moore Gerety – Dec. 18, 2020

As a machine operator for the robotics startup FarmWise, Diego Alcántar spends each day walking behind a hulking robot that resembles a driverless Zamboni, helping it learn to do the work of a 30-person weeding crew.

On a Tuesday morning in September, I met Alcántar in a gigantic cauliflower field in the hills outside Santa Maria, at the southern end of the vast checkerboard of vegetable farms that line California’s central coast, running from Oxnard north to Salinas and Watsonville. Cooled by coastal mists rolling off the Pacific, the Salinas Valley is sometimes called America’s Salad Bowl. Together with two adjacent counties to the south, the area around Salinas produces the vast majority of lettuce grown in the US during the summer months, along with most of the cauliflower, celery, and broccoli, and a good share of the berries.

It was the kind of Goldilocks weather that the central coast is known for—warm but not hot, dry but not parched, with a gentle breeze gliding in from the coast. Nearby, a harvest crew in straw hats and long sleeves was making quick work of an inconceivable quantity of iceberg lettuce, stacking boxes 10 high on the backs of tractor-trailers lining a dirt road.

In another three months, the same scene would unfold in the cauliflower field where Alcántar now stood, surrounded by tens of thousands of two- and three-leaf seedlings. First, though, it had to be weeded.

The robot straddled a planted bed three rows wide with its wheels in adjacent furrows. Alcántar followed a few paces back, holding an iPad with touch-screen controls like a joystick’s. Under the hood, the robot’s cameras flashed constantly. Bursts of air, like the pistons in a whack-a-mole arcade game, guided sets of L-shaped blades in precise, short strokes between the cauliflower seedlings, scraping the soil to uproot tiny weeds and then parting every 12 inches so that only the cauliflower remained, unscathed.

Periodically, Alcántar stopped the machine and knelt in the furrow to examine a “kill,” a spot where the robot’s array of cameras and blades had gone ever so slightly out of alignment and uprooted the seedling itself. Alcántar was averaging about an acre an hour, with only about one kill for every thousand plants. The kills often came in twos and threes, marking spots where one wheel had crept out of the furrow and onto the bed itself, or where the blades had parted a fraction of a second too late.

Taking an iPhone out of his pocket, Alcántar pulled up a Slack channel called #field-de-bugging and sent a note to a colleague 150 miles away about five kills in a row, with a hypothesis about the cause (latency between camera and blade) and a time stamp so he could find the images and see what had gone wrong.
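Alcántar’s hypothesis, latency between camera and blade, rests on simple arithmetic: whatever delay separates seeing a seedling from moving the blade, the machine keeps rolling forward, so the cut lands `speed × delay` farther along the bed. The sketch below converts “about an acre an hour” into a ground speed and then into a blade offset. The 80-inch bed width and the 50-millisecond delay are illustrative assumptions, not FarmWise figures.

```python
# Assumptions (not from FarmWise): an 80-inch bed treated one pass at a time,
# and a hypothetical 50 ms delay between image capture and blade action.
SQ_M_PER_ACRE = 4046.8564224   # one international acre in square meters
INCH_M = 0.0254                # one inch in meters


def ground_speed_m_per_s(acres_per_hour: float, bed_width_m: float) -> float:
    """Forward speed needed to cover the given area while treating one bed."""
    meters_per_hour = acres_per_hour * SQ_M_PER_ACRE / bed_width_m
    return meters_per_hour / 3600.0


def blade_offset_cm(speed_m_per_s: float, latency_s: float) -> float:
    """Distance (cm) the machine travels between seeing a plant and cutting."""
    return speed_m_per_s * latency_s * 100.0


speed = ground_speed_m_per_s(acres_per_hour=1.0, bed_width_m=80 * INCH_M)
print(f"ground speed ~ {speed:.2f} m/s")
print(f"50 ms of latency ~ {blade_offset_cm(speed, 0.050):.1f} cm of blade offset")
```

Under these assumptions the robot moves a little over half a meter per second, so every 50 milliseconds of delay shifts the blades almost 3 centimeters, which is enough to clip a two-leaf seedling.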

In this field, and many others like it, the ground had been prepared by a machine, the seedlings transplanted by a machine, and the pesticides and fertilizers applied by a machine. Irrigation crews still laid sprinkler pipe manually, and farmworkers would harvest this cauliflower crop when the time came, but it isn’t a stretch to think that one day, no person will ever lay a hand to the ground around these seedlings.

Technology’s race to disrupt one of the planet’s oldest and largest occupations centers on the effort to imitate, and ultimately outdo, the extraordinary powers of two human body parts: the hand, able to use tweezers or hold a baby, catch or throw a football, cut lettuce or pluck a ripe strawberry with its calyx intact; and the eye, which is increasingly being challenged by a potent combination of cloud computing, digital imagery, and machine learning.

The term “ag tech” was coined at a conference in Salinas almost 15 years ago; boosters have been promising a surge of gadgets and software that would remake the farming industry for at least that long. And although ag tech startups have tended to have an easier time finding investors than customers, the boosters may finally be on to something.

Silicon Valley is just over the hill from Salinas. But by the standards of the Grain Belt, the Salad Bowl is a relative backwater—worth about $10 billion a year, versus nearly $100 billion for commodity crops in the Midwest. Nobody trades lettuce futures like soybean futures; behemoths like Cargill and Conagra mostly stay away. But that’s why the “specialty crop” industry seemed to me like the best place to chart the evolution of precision farming: if tech’s tools can work along California’s central coast, on small plots with short growing cycles, then perhaps they really are ready to stage a broader takeover.

Alcántar, who is 28, was born in Mexico and came to the US as a five-year-old in 1997, walking across the Sonoran Desert into Arizona with his uncle and his younger sister. His parents, who are from the central Mexican state of Michoacán, were busy laying the groundwork for a new life as farmworkers in Salinas, sleeping in a relative’s walk-in closet before renting a converted garage apartment. Alcántar spent the first year at home, watching TV and looking after his sister while his parents worked: there was a woman living in the main house who checked on them and kept them fed during the day, but no one who could drive them to elementary school.

Workers harvest broccoli as part of a joint project between NASA and the University of California.

In high school, Alcántar often worked as a field hand on the farm where his father had become a foreman. He cut and weeded lettuce, stacked strawberry boxes after the harvest, drove a forklift in the warehouse. But when he turned 22 and saw friends he’d grown up with getting their first jobs after college, he decided he needed a plan to move on from manual labor. He got a commercial driver’s license and went to work for a robotics startup.

During this first stint, Alcántar recalls, relatives sometimes chided him for helping to accelerate a machine takeover in the fields, where stooped, sweaty work had cleared a path for his family’s upward mobility. “You’re taking our jobs away!” they’d say.

Five years later, Alcántar says, the conversation has shifted completely. Even FarmWise has struggled to find people willing to “walk behind the machine,” he says. “People would rather work at a fast food restaurant. In-N-Out is paying $17.50 an hour.”

II

Even up close, all kinds of things can foul the “vision” of the computers that power automated systems like the ones FarmWise uses. It’s hard for a computer to tell, for instance, whether a contiguous splotch of green lettuce leaves represents a single healthy seedling or a “double,” where two seeds germinated next to one another and will therefore stunt each other’s growth. Agricultural fields are bright, hot, and dusty: hardly ideal conditions for keeping computers running smoothly. A wheel gets stuck in the mud and temporarily upends the algorithm’s sense of distance: the left tires have now spun a quarter-turn more than the right tires.
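Why a quarter-turn matters can be seen in the textbook model of differential-drive dead reckoning, in which a machine infers distance and heading from how far each side’s wheels have turned. The article does not describe FarmWise’s navigation, so this is a generic sketch; the wheel radius and track width are hypothetical values chosen for illustration.

```python
import math

# Hypothetical geometry, not the specification of any real weeding robot.
WHEEL_RADIUS_M = 0.40   # radius of each wheel
TRACK_WIDTH_M = 2.0     # distance between left and right wheels


def odometry_update(left_turns: float, right_turns: float,
                    radius: float = WHEEL_RADIUS_M,
                    track: float = TRACK_WIDTH_M) -> tuple[float, float]:
    """Differential-drive dead reckoning from wheel revolutions.

    Returns (distance traveled by the machine's center in meters,
             estimated heading change in radians, positive = left turn).
    """
    left_m = 2 * math.pi * radius * left_turns
    right_m = 2 * math.pi * radius * right_turns
    distance = (left_m + right_m) / 2
    heading_change = (right_m - left_m) / track
    return distance, heading_change


# The left wheels slip in the mud and spin a quarter-turn more than the right,
# even though the machine actually went straight.
dist, dtheta = odometry_update(left_turns=10.25, right_turns=10.0)
print(f"phantom turn: {math.degrees(dtheta):.1f} degrees")  # phantom turn: -18.0 degrees
```

With these dimensions, the slip alone makes the odometry believe the machine has veered 18 degrees to the right, which is why a system like this needs cameras or GPS to re-anchor its sense of position.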

Other ways of digital seeing have their own challenges. For satellites, there’s cloud cover to contend with; for drones and planes, wind and vibration from the engines that keep them aloft. For all three, image-recognition software must take into account the shifting appearance of the same fields at different times of day as the sun moves across the sky. And there’s always a trade-off between resolution and price. Drones, planes, and field machinery all cost farmers money, while satellite imagery, which has historically been produced, paid for, and shared freely by public space agencies, has been limited to infrequent images with coarse resolution.

NASA launched the first satellite for agricultural imagery, known as Landsat, in 1972. Clouds and slow download speeds conspired to limit coverage of most of the world’s farmland to a handful of images a year of any given site, with pixels from 30 to 120 meters per side.

A half-dozen more iterations of Landsat followed over the next quarter-century, but it was only in 1999, with the launch of the Moderate Resolution Imaging Spectroradiometer, or MODIS, aboard NASA’s Terra satellite, that farmers could get daily observations over most of the world’s land surface, albeit with a 250-meter pixel. As cameras and computing have improved side by side over the past 20 years, a parade of tech companies has become convinced there’s money to be made in providing insights derived from satellite and aircraft imagery, says Andy French, an expert in water conservation at the USDA’s Arid-Land Agricultural Research Center in Arizona. “They haven’t been successful,” he says. But as the frequency and resolution of satellite images both continue to increase, that could now change very quickly, he believes: “We’ve gone from Landsat going over our head every 16 days to having near-daily, one- to four-meter resolution.”
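To make those pixel sizes concrete, it helps to ask how many pixels land on a single acre. This small sketch uses only the resolutions quoted in the text and the standard size of an acre; it ignores real-world complications such as pixels straddling field edges.

```python
SQ_M_PER_ACRE = 4046.8564224   # one international acre in square meters


def pixels_per_acre(pixel_side_m: float) -> float:
    """How many square pixels of the given side length fit in one acre."""
    return SQ_M_PER_ACRE / pixel_side_m ** 2


# Pixel sizes mentioned in the text: Landsat (30 to 120 m), MODIS (250 m),
# and the newer imagery French describes (1 to 4 m).
for label, side in [("Landsat, 30 m", 30), ("Landsat, 120 m", 120),
                    ("MODIS, 250 m", 250), ("newer, 4 m", 4),
                    ("newer, 1 m", 1)]:
    print(f"{label:>15}: {pixels_per_acre(side):10.2f} pixels per acre")
```

At MODIS resolution, one pixel covers roughly 15 acres, so an entire lettuce block may blur into a single number; at 1 meter, the same acre is sampled about 4,000 times.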

In 2013, Monsanto acquired a startup called the Climate Corporation, which billed itself as a “digital farming” company, for nearly a billion dollars. “It was a bunch of Google guys who were experts in satellite imagery, saying ‘Can we make this useful to farmers?’” says Thad Simons, a longtime commodities executive who cofounded a venture capital firm called the Yield Lab. “That got everybody’s attention.”

In the years since, Silicon Valley has sent forth a burst of venture-funded startups whose analytic and forecasting services rely on tools that can gather and process information autonomously or at a distance: not only imagery, but also things like soil sensors and moisture probes. “Once you see the conferences making more money than people actually doing work,” Simons says with a chuckle, “you know it’s a hot area.”

A subset of these companies, like FarmWise, are working on something akin to hand-eye coordination, chasing the perennial goal of automating the most labor-intensive stages of fruit and vegetable farming—weeding and, above all, harvesting—against a backdrop of chronic farm labor shortages. But many others are focused exclusively on giving farmers better information.

One way to understand farming is as a never-ending hedge against the uncertainties that affect the bottom line: weather, disease, the optimal dose and timing of fertilizer, pesticides, and irrigation, and huge fluctuations in price. Each one of these factors drives thousands of incremental decisions over the course of a season, decisions based on long years of trial and error, intuition, and hard-won expertise. So the tech question on farmers’ lips everywhere, as Andy French told me, is: “What are you telling us that we didn’t already know?”

III

Josh Ruiz, the vice president of ag operations for Church Brothers, which grows greens for the food service industry, manages more than a thousand separate blocks of farmland covering more than 20,000 acres. Affable, heavy-set, and easy to talk to, Ruiz is known across the industry as an early adopter who’s not afraid to experiment with new technology. Over the last few years, he has become a regular stop on the circuit that brings curious tech executives in Teslas down from San Francisco and Mountain View to stand in a lettuce field and ask questions about the farming business. “Trimble, Bosch, Amazon, Microsoft, Google—you name it, they’re all calling me,” Ruiz says. “You can get my attention real fast if you solve a problem for me, but what happens nine times out of 10 is the tech companies come to me and they solve a problem that wasn’t a problem.”

What everyone wants, in a word, is foresight. For more than a generation, the federal government has sheltered growers of corn, wheat, soybeans, and other commodities from the financial impact of pests and bad weather by offering subsidies to offset the cost of crop insurance and, in times of bountiful harvests, setting an artificial “floor” price at which the government steps in as a buyer of last resort. Fruits and vegetables do not enjoy the same protection: they account for less than 1% of the $25 billion the federal government spends on farm subsidies. As a result, the vegetable market is subject to wild variations based on weather and other only vaguely predictable factors.

Josh Ruiz, the vice president of ag operations at Church Brothers, a greens-growing concern, with “Big Red,” an automated broccoli harvester of his design.

When I visited Salinas, in September, the lettuce industry was in the midst of a banner week price-wise, with whole heads of iceberg and romaine earning shippers as much as $30 a box, or roughly $30,000 an acre. “Right now, you have the chance to lose a fortune and make it back,” Ruiz said as we stood at the edge of a field. The swings can be dramatic: a few weeks earlier, he explained, iceberg was selling for a fraction of that amount—$5 a box, about half what it costs to produce and harvest.
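The figures Ruiz gives imply a rough per-acre ledger: $30 a box at about $30,000 an acre works out to roughly 1,000 boxes an acre, and if $5 a box is “about half” the cost, producing and harvesting a box costs around $10. The sketch below derives those two numbers from the text; they are back-of-envelope estimates, not figures Ruiz provided.

```python
# Derived from the text, not reported directly: ~1,000 boxes an acre
# ($30,000 / $30) and ~$10 a box to produce and harvest ($5 is "about half").
BOXES_PER_ACRE = 30_000 / 30
COST_PER_BOX = 5 * 2


def margin_per_acre(price_per_box: float,
                    boxes: float = BOXES_PER_ACRE,
                    cost_per_box: float = COST_PER_BOX) -> float:
    """Gross margin per acre at a given box price."""
    return (price_per_box - cost_per_box) * boxes


for price in (30, 10, 5):
    print(f"${price}/box -> {margin_per_acre(price):+,.0f} dollars per acre")
```

Treating cost as a flat amount per box is a simplification: land and planting costs are spent per acre regardless of price, while harvest costs scale with the number of boxes cut. Even so, the swing from roughly $20,000 of margin to a $5,000 loss per acre shows why timing matters so much.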

In the next field over, rows of young iceberg lettuce seedlings were ribbed with streaks of tawny brown, the mark of the impatiens necrotic spot virus, or INSV, which has been wreaking havoc on Salinas lettuce since the mid-aughts. These were the early signs. Come back after a couple more weeks, Ruiz said, and half the plants would be dead: it wouldn’t be worthwhile to harvest at all. Abandoned now, the field would represent a $5,000 loss, based on the costs of land, plowing, planting, and inputs. If they decided to weed and harvest, that loss could easily double. Ruiz said he wouldn’t have known he was wasting $5,000 if he hadn’t decided to take me on a drive that day. Multiply that across more than 20,000 acres. If a firm could reliably deliver that kind of advance knowledge about INSV, how much would it be worth to him?

One firm trying to find out is an imagery and analytics startup called GeoVisual Analytics, based in Colorado, which is working to refine algorithms that can project likely yields a few weeks ahead of time. It’s a hard thing to model well. A head of lettuce typically sees more than half its growth in the last three weeks before harvest; if it stays in the field just a couple of days longer, it could be too tough or spindly to sell. Any model the company builds has to account for factors like that and more. A ball of iceberg watered at the wrong time swells to a loose bouquet. Supermarket carrots are starved of water to make them longer.

When GeoVisual first got to Salinas, in 2017, “we came in promising the future, and then we didn’t deliver,” says Charles McGregor, its 27-year-old general manager. Ruiz, less charitably, calls their first season an “epic fail.” But he gives McGregor credit for sticking around. “They listened and they fixed it,” he says. He’s just not sure what he’s willing to pay for it.

As it stands, the way field men arrive at yield forecasts is decidedly analog. Some pace off a stretch of bed, using the length of their boots as a ruler, count the heads of lettuce along it, and extrapolate to the whole field. Others measure against a 30-foot section of sprinkler pipe. There’s no way methods like these can match the scale of what a drone or an airplane might capture, but the results have the virtue of a format growers can easily process, and they’re usually off by no more than 25 to 50 boxes an acre, or about 3% to 5%. They’re also part of a farming operation’s baseline expenses: if the same employee spots a broken irrigation valve or an empty fertilizer tank and makes sure the weeding crew starts on time, then asking him to deliver a decent harvest forecast isn’t necessarily an extra cost. By contrast, the pricing of tech-driven forecasts tends to be uneven. Tech salespeople lowball the cost of service in order to get new customers and then, eventually, have to figure out how to make money on what they sell.
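The sprinkler-pipe method amounts to scaling a sample up to an acre: count the heads along a known length of bed, convert to heads per acre, then divide by the number of heads packed in a box. In this sketch, the 80-inch bed width, the count of 110 heads, and the 24 heads per carton are hypothetical inputs chosen for illustration, not figures from the growers quoted here.

```python
SQ_FT_PER_ACRE = 43_560   # square feet in one acre


def boxes_per_acre(heads_counted: int, sample_length_ft: float,
                   bed_width_in: float, heads_per_box: int) -> float:
    """Extrapolate a head count along one bed to boxes per acre."""
    sample_area_sqft = sample_length_ft * bed_width_in / 12
    heads_per_acre = heads_counted * SQ_FT_PER_ACRE / sample_area_sqft
    return heads_per_acre / heads_per_box


# Hypothetical sample: 110 heads along a 30-foot pipe on an 80-inch bed.
estimate = boxes_per_acre(heads_counted=110, sample_length_ft=30,
                          bed_width_in=80, heads_per_box=24)
print(f"{estimate:.0f} boxes per acre")
```

Under these assumptions, each head miscounted in the 30-foot sample shifts the estimate by about nine boxes an acre, so the 25-to-50-box error the text describes corresponds to only a few heads.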

“At 10 bucks an acre, I’ll tell [GeoVisual] to fly the whole thing, but at $50 an acre, I have to worry about it,” Ruiz told me. “If it costs me a hundred thousand dollars a year for two years, and then I have that aha! moment, am I gonna get my two hundred thousand dollars back?”
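Ruiz’s question can be framed as a break-even count: how many problems like the $5,000 INSV field would a service have to catch to pay for itself? This sketch combines the acreage and prices quoted in the text. It assumes, for illustration only, that every early warning saves exactly $5,000 and that the per-acre price is charged once a year; the text does not say how often fields would be flown.

```python
import math

# From the text: "more than 20,000 acres", $10 vs. $50 an acre, and a $5,000
# loss on one INSV-infected field. The flat $5,000 value per warning and the
# once-a-year billing are illustrative assumptions.
ACRES = 20_000
VALUE_PER_WARNING = 5_000


def annual_cost(price_per_acre: float, acres: int = ACRES) -> float:
    """Cost of covering every acre once a year at a flat per-acre price."""
    return price_per_acre * acres


def warnings_to_break_even(cost: float,
                           value_per_warning: float = VALUE_PER_WARNING) -> int:
    """Smallest number of avoided losses that pays for the service."""
    return math.ceil(cost / value_per_warning)


for price in (10, 50):
    cost = annual_cost(price)
    print(f"${price}/acre: ${cost:,.0f} a year, "
          f"{warnings_to_break_even(cost)} avoided losses to break even")

# Ruiz's own hypothetical: $100,000 a year for two years.
print(warnings_to_break_even(2 * 100_000), "avoided losses over two years")
```

Under these assumptions, the $10 price needs about 40 caught problems a year, while the $50 price needs 200, which is the gap Ruiz is weighing.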

IV

All digital sensing for agriculture is a form of measurement by proxy: a way to translate slices of the electromagnetic spectrum into an understanding of biological processes that affect plants. Thermal infrared emission correlates with land surface temperature, which correlates with soil moisture and, therefore, the amount of water available to plants’ roots. Measuring reflected green, red, and near-infrared light is one way to estimate canopy cover, which helps researchers track evapotranspiration, the combined loss of water evaporating from the soil and transpiring through a plant’s leaves, a process with clear links to plant health.
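One widely used proxy of this kind is the Normalized Difference Vegetation Index (NDVI), which compares red and near-infrared reflectance: healthy leaves absorb red light and reflect near-infrared strongly. The sketch below computes NDVI and then maps it to a canopy-cover fraction by linear scaling between a bare-soil value and a full-canopy value. The article does not say which index or method the researchers use, and the 0.15 and 0.90 endpoints are assumptions; real calibrations vary by crop and sensor.

```python
def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index from surface reflectances (0 to 1)."""
    if nir + red == 0:
        raise ValueError("reflectances sum to zero")
    return (nir - red) / (nir + red)


def canopy_cover(ndvi_value: float, ndvi_soil: float = 0.15,
                 ndvi_full: float = 0.90) -> float:
    """Fraction of ground covered by canopy, scaled linearly between assumed
    bare-soil and full-canopy NDVI values and clipped to [0, 1]."""
    fraction = (ndvi_value - ndvi_soil) / (ndvi_full - ndvi_soil)
    return min(1.0, max(0.0, fraction))


# Hypothetical pixel: 45% near-infrared reflectance, 8% red reflectance.
value = ndvi(nir=0.45, red=0.08)
print(f"NDVI {value:.2f}, canopy cover ~ {canopy_cover(value):.0%}")
```

Canopy cover is only one link in the chain; turning it into an estimate of water use requires yet another model, which is the kind of extrapolation the next paragraph describes.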

Improving these chains of extrapolation is a call and response between data generated by new generations of sensors and the software models that help us understand them. Before the launch of the EU’s first Sentinel satellite in 2014, for instance, researchers had some understanding of what synthetic aperture radar, which builds high-resolution images by simulating large antennas, could reveal about plant biomass, but they lacked enough real-world data to validate their models. In the American West, there’s abundant imagery to track the movement of water over irrigated fields, but no crop model sufficiently advanced to reliably help farmers decide when to “order” irrigation water from the Colorado River, which is usually done days ahead of time.

As with any Big Data frontier, part of what’s driving the explosion of interest in ag tech is simply the availability of unprecedented quantities of data. For the first time, technology can deliver snapshots of every individual broccoli crown on a 1,000-acre parcel and show which fields are most likely to see incursions from the deer and wild boars that live in the hills above the Salinas Valley.

The problem is that turning such a firehose of 1s and 0s into any kind of useful insight—producing, say, a text alert about the top five fields with signs of drought stress—requires a more sophisticated understanding of the farming business than many startups seem to have. As Paul Fleming, a longtime farming consultant in Salinas, put it, “We only want to know about the things that didn’t go the way they’re supposed to.”
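Fleming’s rule suggests what a useful alert looks like: compare each field against what it should look like at its age, and report only the worst shortfalls. In this minimal sketch, NDVI falling below a model’s expectation stands in for drought stress; that proxy, the 0.05 tolerance, and the field names are all hypothetical, and a real shortfall could just as easily signal disease or pests.

```python
from dataclasses import dataclass


@dataclass
class Field:
    name: str
    ndvi: float           # observed this week
    expected_ndvi: float  # what a crop model predicts for a field of this age


def stress_alert(fields: list[Field], tolerance: float = 0.05,
                 top_n: int = 5) -> str:
    """Report only fields falling short of expectations, worst first."""
    shortfalls = [(f.expected_ndvi - f.ndvi, f) for f in fields]
    flagged = sorted((s for s in shortfalls if s[0] > tolerance),
                     key=lambda s: s[0], reverse=True)[:top_n]
    if not flagged:
        return "All fields on track."
    lines = [f"{f.name}: NDVI {f.ndvi:.2f} vs expected {f.expected_ndvi:.2f}"
             for _, f in flagged]
    return "Possible drought stress:\n" + "\n".join(lines)


# Hypothetical fields; only the two falling well short of expectations appear.
print(stress_alert([Field("Ranch 4, lot 12", 0.70, 0.72),
                    Field("Ranch 7, lot 3", 0.50, 0.70),
                    Field("Ranch 2, lot 9", 0.60, 0.68)]))
```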

And that’s just the beginning. Retail shippers get paid for each head of cauliflower or bundle of kale they produce; processors, who sell pre-cut broccoli crowns or bags of salad mix, are typically paid by weight. Contract farmers, hired to grow a crop for someone else for a per-acre fee, might never learn whether a given harvest was a “good” or a “bad” one, representing a profit or a loss for the shipper that hired them. It’s often in a shipper’s interest to keep individual farmers in the dark about where they stand relative to their nearby competitors.

In Salinas, the challenge of making big data relevant to farm managers is also about consolidating the universe of information farms already collect—or, perhaps, don’t. Aaron Magenheim, who grew up in his family’s irrigation business and now runs a consultancy focused on farm technology, says the particulars of irrigation, fertilizer, crop rotations, or any number of variables that can influence harvest tend to get lost in the hubbub of the season, if they’re ever captured at all. “Everyone thinks farmers know how they grow, but the reality is they’re pulling it out of the air. They don’t track that down to the lot level,” he told me, using an industry term for an individual tract of farmland. As many as 40 or 50 lots might share the same well and fertilizer tank, with no precise way of accounting for the details. “When you’re applying fertilizer, the reality is it’s a guy opening a valve on a tank and running it for 10 minutes, and saying, ‘Well that looks okay.’ Did Juan block number 6 or number 2 because of a broken pipe? Did they write it down?” Magenheim says. “No! Because they have too many things to do.”
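The bookkeeping Magenheim describes is trivial once the record exists; the hard part is getting someone to write it down. This sketch assumes a hypothetical log of which block’s valve was opened and for how long, plus a constant injection rate of 2 gallons a minute (also hypothetical), and totals the fertilizer applied to each lot.

```python
from collections import defaultdict

# Hypothetical injection rate; a real tank's rate depends on its pump and setup.
GALLONS_PER_MINUTE = 2.0


def allocate(valve_log: list[tuple[str, float]],
             rate_gpm: float = GALLONS_PER_MINUTE) -> dict[str, float]:
    """Sum fertilizer gallons per lot from (lot, minutes the valve ran) records."""
    totals: dict[str, float] = defaultdict(float)
    for lot, minutes in valve_log:
        totals[lot] += minutes * rate_gpm
    return dict(totals)


# Hypothetical log from one tank serving several blocks.
log = [("block 6", 10), ("block 2", 10), ("block 6", 5)]
print(allocate(log))
```

Without the log, the tank’s total drawdown can only be split evenly, or by guesswork, across the 40 or 50 lots it serves.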

Then there are the maps. Compared with corn and soybean operations, where the same crops get planted year after year, or vineyards and orchards, where plantings may not change for more than a generation, growers of specialty crops deal with a never-ending jigsaw puzzle of romaine following celery following broccoli, with plantings that change size and shape according to the market, and cycles as short as 30 days from seed to harvest.

For many companies in Salinas, the man standing astride the gap between what happens in the field and the record-keeping needs of a modern farming business is a 50-year-old technology consultant named Paul Mariottini. Mariottini—who planned to become a general contractor until he got a computer at age 18 and, as he puts it, “immediately stopped sleeping”—runs a one-man operation out of his home in Hollister, with a flip phone and a suite of bespoke templates and plug-ins he writes for Microsoft Access and Excel. When I asked the growers I met how they handled this part of the business, the reply, to a person, was: “Oh, we use Paul.”

Mariottini’s clients include some of the largest produce companies in the world, but only one uses tablets so that field supervisors can record the acreage and variety of each planting, the type and date of fertilizer and pesticide applications, and other basic facts about the work they supervise while it’s taking place. The rest take notes on paper, or enter the information from memory at the end of the day.

When I asked Mariottini whether anyone used software to link paper maps to the spreadsheets showing what got planted where, he chuckled and said, “I’ve been doing this for 20 years trying to make that happen.” He once programmed a PalmPilot; he calls one of his plug-ins “Close-Enough GPS.” “The tech industry would probably laugh at it, but the thing that the tech industry doesn’t understand is the people you’re working with,” he said.

V

The goal of automation in farming is best understood as all-encompassing. The brief weeks of harvest consume a disproportionate share of the overall budget, as much as half the cost of growing some crops. But there are also efforts to optimize and minimize labor throughout the growing cycle. Strawberries are being grown with spray-on, biodegradable weed barriers that could eliminate the need to spread plastic sheeting over every bed. Automated tractors will soon be able to plow vegetable fields to a smoother surface than a human driver could, improving germination rates. Even as analytics companies race to deliver platforms that can track the health of an individual head of lettuce from seed to supermarket and optimize the order in which fields get harvested, other startups are developing new “tapered” varieties of lettuce, similar to romaine, with a compact silhouette and leaves that rest higher off the ground, so that they can be more easily “seen” and cut by a robot.

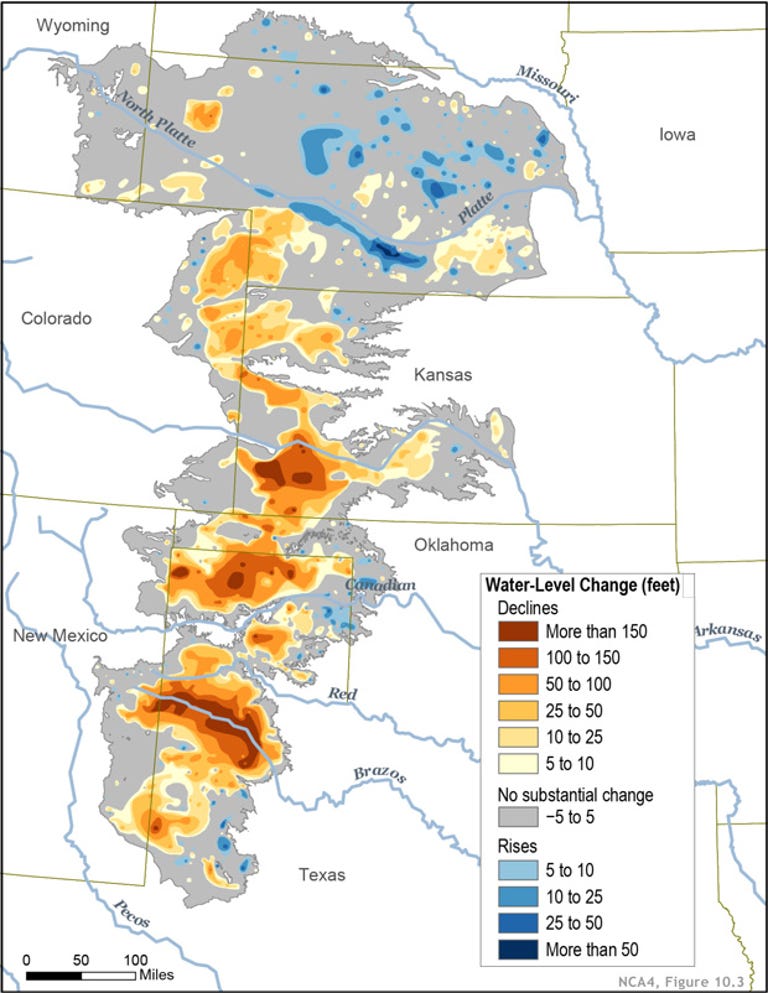

Overall, though, the problems with the American food system aren’t about technology so much as law and politics. Concerns that the herbicide Roundup raises cancer risk have circulated for years, yet it remains widely used. We’ve known for more than 100 years that the West is short on water, yet we continue to grow alfalfa in the desert, and use increasingly sophisticated drilling techniques in a kind of water arms race. These are not problems caused by a lack of technology.

On my last day in Salinas, I met a grower named Mark Mason just off Highway 101, which cuts the valley in two, and followed him to a nine-acre block of celery featuring a tidy tower of meteorological equipment in the center. The equipment is owned by NASA, part of a joint project with the University of California’s Agriculture and Natural Resources cooperative extension office, or UCANR.

Eight years ago, amid news of droughts and forest fires across the West, Mason felt a gnawing sense that he ought to be a more careful steward of the groundwater he uses to irrigate, even if the economics suggested otherwise. That led him to contact Michael Cahn, a researcher at UCANR.

Water in Salinas has historically been cheap and abundant, and the downside of under-irrigating, or of using too little fertilizer, has always been far larger than the potential savings. “Growers want to sell product; efficient use is secondary. They won’t cut it close and risk quality,” Cahn said. The risk might even extend to losing a crop.

Of late, though, nitrate contamination of drinking water, caused by heavy fertilizer use and linked to thyroid disease and some types of cancer, has become a major political issue in Salinas. The local water quality control board is currently developing a new standard that will limit the amount of nitrogen fertilizer growers can apply to their fields, and it’s expected to be finalized in 2021. As Cahn explained, “You can’t control nitrogen without controlling your irrigation water.” In the meantime, Mason and a handful of other growers are working with UCANR on a software platform called CropManage, designed to ingest weather and soil data and deliver customized recommendations on irrigation and fertilizer use for each crop.
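A standard way to turn weather data into an irrigation recommendation is the crop-coefficient method: a weather station’s reference evapotranspiration (ETo) is multiplied by a crop coefficient (Kc) to estimate how much water the crop used, and the total is divided by the irrigation system’s efficiency. This is a sketch of that general technique, not the platform’s actual code, and the ETo values, coefficient, and efficiency below are hypothetical.

```python
def irrigation_inches(daily_eto_in: list[float], crop_coefficient: float,
                      application_efficiency: float) -> float:
    """Water to apply (inches) to replace crop water use since the last irrigation.

    Crop evapotranspiration for each day is ETc = Kc * ETo; the total is divided
    by the system's application efficiency (0 to 1) to account for water that
    never reaches the root zone.
    """
    if not 0 < application_efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    etc_total = sum(crop_coefficient * eto for eto in daily_eto_in)
    return etc_total / application_efficiency


# Hypothetical inputs: four days of station ETo, a mid-season lettuce Kc of 0.9,
# and a sprinkler system that delivers 80% of applied water to the roots.
print(f"{irrigation_inches([0.18, 0.20, 0.19, 0.17], 0.9, 0.8):.2f} inches")
```

Because nitrogen moves with water, a recommendation like this also limits how much fertilizer leaches below the roots, which is the link Cahn draws.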

Michael Cahn, a researcher at the University of California who’s developing software to optimize water and fertilizer use, at a water trial for artichokes.

Cahn says he expects technological advances in water management to follow a course similar to the one being set by the threat of tighter regulations on nitrogen fertilizer. In both cases, the business argument for a fix and the technology required to get there lie somewhere downstream of politics. Outrage over lack of access to clean groundwater brought forth a new regulatory mechanism, which unlocked the funding to figure out how to measure nitrogen use; those measurements will, in turn, shape how farmers manage their fields.

In the end, then, it’s political pressure that has created the conditions for science and technology to advance. For now, venture capital and federal research grants continue to provide an artificial boost for ag tech while its potential buyers—such as lettuce growers—continue to treat it with a degree of caution.

But just as new regulations can reshape the cost-benefit analysis around nitrogen or water use from one day to the next, so too can a product that brings clear returns on investment. All the growers I spoke to spend precious time keeping tabs on the startup world: taking phone calls, buying and testing tech-powered services on a sliver of their farms, making suggestions on how to target analytics or tweak a farm-facing app. Why? To have a say in how the future unfolds, or at least to get close enough to see it coming. One day soon, someone will make a lot of money following a computer’s advice about how high to price lettuce, or when to spray for a novel pest, or which fields to harvest and which ones to abandon. When that happens, these farmers want to be the first to know.