Tag archive: Climate

Weathering Fights – Science: What’s It Up To? (The Daily Show with Jon Stewart)

http://media.mtvnservices.com/mgid:cms:video:thedailyshow.com:400760

Science claims it’s working to cure disease, save the planet and solve the greatest human mysteries, but Aasif Mandvi finds out what it’s really up to. (05:47) – Comedy Central

Global Warming May Worsen Effects of El Niño, La Niña Events (Climate Central)

Published: October 12th, 2011

By Michael D. Lemonick

Does this mean Texas is toast?

As just about everyone knows, El Niño is a periodic unusual warming of the surface water in the eastern and central tropical Pacific Ocean. Actually, that’s pretty much a lie. Most people don’t know the definition of El Niño or its mirror image, La Niña, and truthfully, most people don’t much care.

What you do care about if you’re a Texan suffering through the worst one-year drought on record, or a New Yorker who had to dig out from massive snowstorms last winter (tied in part to La Niña), or a Californian who has ever had to deal with the torrential rains that trigger catastrophic mudslides (linked to El Niño), is that these natural climate cycles can elevate the odds of natural disasters where you live.

We’re now entering the second year of the La Niña part of the cycle. La Niña is one key reason why the Southwest was so dry last winter and through the spring and summer, and since La Niña is projected to continue through the coming winter, Texas and nearby states aren’t likely to get much relief.

Precipitation outlook for winter 2011-12, showing the likelihood of below average precipitation in Texas and other drought-stricken states.

But Niñas and Niños (the broader cycle, for you weather/climate geeks, is known as the “El Niño-Southern Oscillation,” or “ENSO”) don’t just operate in isolation. They’re part of the broader climate system, which means that climate change could theoretically change how they operate — make them develop more frequently, for example, or less frequently, or be more or less pronounced. Climate change could also intensify the effects of El Niño and La Niña events.

Climate scientists have been wrestling with the first question for a while now, and they still don’t really have a definitive answer. Some climate models have suggested that global warming has already begun to cause subtle changes in ENSO cycles, and that the changes will become more pronounced later this century. But a new study, published in the Journal of Climate, doesn’t find much evidence for that.

But on the second question, the new study is a lot more definitive. “Due to a warmer and moister atmosphere,” said co-author Baylor Fox-Kemper, of the University of Colorado, in a press release, “the impacts of El Niño are changing even though El Niño itself doesn’t change.”

That’s because global warming has begun to change the playing field on which El Niño and La Niña operate, just as it’s changing the background conditions that give rise to our everyday weather. The Texas drought is a prime example. Its most likely cause is reduced rainfall from La Niña-related weather patterns. But however dry Texas and Oklahoma might have been otherwise, the killer heat wave that plagued the region this past summer — the sort of heat wave global warming is already making more commonplace — baked much of the remaining moisture out of both the soil and vegetation. No wonder large parts of the Lone Star State have gone up in smoke.

A map of sea surface temperature anomalies, showing a swath of cooler than average waters in the central and eastern tropical Pacific Ocean – a telltale sign of La Niña conditions.

When the next El Niño occurs in a year or two, it will probably bring heavy rains to places like Southern California, whose unstable hillsides tend to slide when soggy. Except now, thanks to global warming, the typical El Niño-related storms that roll in off the Pacific may well be turbocharged, since a warmer atmosphere can hold more water. This is the reason, say many climate scientists, that downpours have become heavier in recent decades across broad geographical areas.

La Niña, plus the added moisture in the air from global warming, has also been partially implicated in the massive snowstorms that struck the Northeast and Mid-Atlantic states during the last two winters. Those could get worse as well, suggests the new analysis. “What we see,” says Fox-Kemper, “is that certain atmospheric patterns, such as the blocking high pressure south of Alaska typical of La Niña winters, strengthen…so, the cooling of North America expected in a La Niña winter would be stronger in future climates.” So to pre-answer the question that will inevitably be asked next winter: no, more snow does NOT contradict the idea that the planet is warming. Quite the contrary.

Finally, for those who really do want to know what El Niño and La Niña actually are, as opposed to what they do, you can go to NOAA’s El Niño page. But be warned: there will be a quiz, and the word “thermocline” will appear.

Comments

By Kirk Petersen (Maplewood, NJ 07040)

on October 13th, 2011

Seventh paragraph, third sentence should begin “Its most likely cause”—not “it’s”.

Vital Details of Global Warming Are Eluding Forecasters (Science)

Vol. 334 no. 6053 pp. 173-174

DOI: 10.1126/science.334.6053.173

PREDICTING CLIMATE CHANGE

Richard A. Kerr

Decision-makers need to know how to prepare for inevitable climate change, but climate researchers are still struggling to sharpen their fuzzy picture of what the future holds.

Seattle Public Utilities officials had a question for meteorologist Clifford Mass. They were planning to install a quarter-billion dollars’ worth of storm-drain pipes that would serve the city for up to 75 years. “Their question was, what diameter should the pipe be? How will the intensity of extreme precipitation change?” Mass says. If global warming means that the past century’s rain records are no guide to how heavy future rains will be, he was asked, what could climate modeling say about adapting to future climate change? “I told them I couldn’t give them an answer,” says the University of Washington (UW), Seattle, researcher.

Climate researchers are quite comfortable with their projections for the world under a strengthening greenhouse, at least on the broadest scales. Relying heavily on climate modeling, they find that on average the globe will continue warming, more at high northern latitudes than elsewhere. Precipitation will tend to increase at high latitudes and decrease at low latitudes.

But ask researchers what’s in store for the Seattle area, the Pacific Northwest, or even the western half of the United States, and they’ll often demur. As Mass notes, “there’s tremendous uncertainty here,” and he’s not just talking about the Pacific Northwest. Switching from global models to models focusing on a single region creates a more detailed forecast, but it also “piles uncertainty on top of uncertainty,” says meteorologist David Battisti of UW Seattle.

First of all, there are the uncertainties inherent in the regional model itself. Then there are the global model’s uncertainties at the regional scale, which it feeds into the regional model. As the saying goes, if the global model gives you garbage, regional modeling will only give you more detailed garbage. And still more uncertainties are created as data are transferred from the global to the regional model.

Although uncertainties abound, “uncertainty tends to be downplayed in a lot of [regional] modeling for adaptation,” says global modeler Christopher Bretherton of UW Seattle. But help is on the way. Regional modelers are well into their first extensive comparison of global-regional model combinations to sort out the uncertainties, although that won’t help Seattle’s storm-drain builders.

Most humble origins

Policymakers have long asked for regional forecasts to help them adapt to climate change, some of which is now unavoidable. Even immediate, rather drastic action to curb emissions of greenhouse gases would not likely limit warming globally to 2°C, generally considered the threshold above which “dangerous” effects set in. And nothing at all can be done to reduce the global warming effects expected in the next several decades. They are already locked into climate change.

So scientists have been doing what they can for decision-makers. Early on, it wasn’t much. A U.S. government assessment released in 2000, Climate Change Impacts on the United States, relied on the most rudimentary regional forecasting technique (Science, 23 June 2000, p. 2113). Expert committee members divided the country into eight regions and then considered what two of their best global climate models had to say about each region over the next century. The two models were somewhat consistent in the far southwest, where the report’s authors found it was likely that warmer and drier conditions would eliminate alpine ecosystems and shorten the ski season.

But elsewhere, there was far less consistency. Over the eastern two-thirds of the contiguous 48 states, for example, the two models couldn’t agree on how much moisture soils would hold in the summer. Kansas corn would either suffer severe droughts more frequently, as one model had it, or enjoy even more moisture than it currently does, as the other indicated. But at least the uncertainties were plain for all to see.

The uncertainties of regional projections nearly faded from view in the next U.S. effort, Global Climate Change Impacts in the United States. The 2009 study drew on not two but 15 global models melded into single projections. In a technique called statistical downscaling, its authors assumed that local changes would be proportional to changes on the larger scales. And they adjusted regional projections of future climate according to how well model simulations of past climate matched actual climate.
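The two steps described above can be made concrete with a minimal sketch. It is not the 2009 report's actual procedure: the function names, the inverse-error weighting rule, the fixed local-to-large-scale ratio, and every number below are assumptions chosen only to illustrate the idea of skill-weighted, proportional downscaling.

```python
def skill_weights(hindcast_errors):
    """Weight each model by the inverse of its error against observed past climate.

    Assumption: a simple inverse-error rule; real assessments may weight differently.
    """
    inverse = [1.0 / e for e in hindcast_errors]
    total = sum(inverse)
    return [w / total for w in inverse]


def downscale_change(large_scale_changes, weights, local_ratio):
    """Blend the models' large-scale changes, then scale proportionally to a local site.

    local_ratio is a hypothetical constant relating local change to large-scale change.
    """
    blended = sum(c * w for c, w in zip(large_scale_changes, weights))
    return local_ratio * blended


# Made-up inputs for three hypothetical global models.
errors = [0.5, 1.0, 2.0]    # mismatch with observed past climate (smaller is better)
changes = [2.0, 3.0, 4.0]   # projected large-scale warming, in degrees C

weights = skill_weights(errors)
local_change = downscale_change(changes, weights, local_ratio=1.2)
print(weights, local_change)
```

Note what the sketch leaves out: the result is a single number per location, so the disagreement among the input models disappears from view unless it is reported separately.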

Statistical downscaling yielded a broad warming across the lower 48 states with less warming across the southeast and up the West Coast. Precipitation was mostly down, especially in the southwest. But discussion of uncertainties in the modeling fell largely to a footnote (number 110), in which the authors cite a half-dozen papers to support their assertion that statistical downscaling techniques are “well-documented” and thoroughly corroborated.

The other sort of downscaling, known as dynamical downscaling or regional modeling, has yet to be fully incorporated into a U.S. national assessment. But an example of state-of-the-art regional modeling appeared 30 June in Environmental Research Letters. To investigate what will happen in the U.S. wine industry, regional modeler Noah Diffenbaugh of Purdue University in West Lafayette, Indiana, and his colleagues embedded a detailed model that spanned the lower 48 states in a climate model that spanned the globe. The global model’s relatively fuzzy simulation of evolving climate from 1950 to 2039—calculated at points about 150 kilometers apart—then fed into the embedded regional model, which calculated a sharper picture of climate change at points only 25 kilometers apart.
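To put the two grid spacings in perspective, here is a back-of-the-envelope calculation. It assumes uniform square grids, which is a simplification; the variable names are illustrative and nothing here comes from the study itself beyond the 150- and 25-kilometer figures.

```python
# Grid spacings quoted in the text, in kilometers.
global_spacing_km = 150
regional_spacing_km = 25

# On a uniform square grid, the number of points per unit area
# scales with the inverse square of the spacing.
refinement_per_axis = global_spacing_km / regional_spacing_km
points_per_area_ratio = refinement_per_axis ** 2

print(refinement_per_axis, points_per_area_ratio)
```

Under that assumption the regional model resolves each horizontal direction six times more finely, which works out to 36 times as many grid points over the same area.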

Closely analyzing the regional model’s temperature projections on the West Coast, the group found that the projected warming would decrease the area suitable for production of premium wine grapes by 30% to 50% in parts of central and northern California. The loss in Washington state’s Columbia Valley would be more than 30%. But adaptation to the warming, such as the introduction of heat-tolerant varieties of grapes, could sharply reduce the losses in California and turn the Washington loss into a 150% gain.

Not so fast

A rapidly growing community of regional modelers is turning out increasingly detailed projections of future climate, but many researchers, mostly outside the downscaling community, have serious reservations. “Many regional modelers don’t do an adequate job of quantifying issues of uncertainty,” says Bretherton, who is chairing a National Academy of Sciences study committee on a national strategy for advancing climate modeling. “We’re not confident predicting the very things people are most interested in being predicted,” such as changes in precipitation.

Regional models produce strikingly detailed maps of changed climate, but they might be far off base. “The problem is that precision is often mistaken for accuracy,” Bretherton says. Battisti just doesn’t see the point of downscaling. “I would never use one of these products,” he says.

The problems start with the global models, as critics see it. Regional models must fill in the detail in the fuzzy picture of climate provided by global models, notes atmospheric scientist Edward Sarachik, professor emeritus at UW Seattle. But if the fuzzy picture of the region is wrong, the details will be wrong as well. And global models aren’t very good at painting regional pictures, he says. A glaring example, according to Sarachik, is the way global models place the cooler waters of the tropical Pacific farther west than they are in reality. Such ocean temperature differences drive weather and climate shifts in specific regions halfway around the world, but with the cold water in the wrong place, the global models drive climate change in the wrong regions.

Gregory Tripoli’s complaint about the global models is that they can’t create the medium-size weather systems that they should be sending into any embedded regional model. Tripoli, a meteorologist and modeler at the University of Wisconsin, Madison, cites the case of summertime weather disturbances that churn down off the Rocky Mountains and account for 80% of the Midwest’s summer rainfall. If a regional model forecasting for Wisconsin doesn’t extend to the Rockies, Wisconsin won’t get the major weather events that add up to be climate. And some atmospheric disturbances travel from as far away as Thailand to wreak havoc in the Midwest, he says, so they could never be included in the regional model.

Even the things the global models get right have a hard time getting into regional models, critics say. “There are a lot of problems matching regional and global models,” Tripoli says. In one problem area, global and regional models usually have different ways of accounting for atmospheric processes such as individual cloud development that neither model can simulate directly, creating further clashes. Even the different philosophies involved in building global models and regional models can lead to mismatches that create phantom atmospheric circulations, Tripoli says. “It’s not straightforward you’re going to get anything realistic,” he says.

Redeeming regional modeling

“You could say all the global and regional models are wrong; some people do say that,” notes regional modeler Filippo Giorgi of the Abdus Salam International Centre for Theoretical Physics in Trieste, Italy. “My personal opinion is we do know something now. A few reports ago, it was really very, very difficult to say anything about regional climate change.”

But Giorgi says that in recent years he has been seeing increasingly consistent regional projections coming from combinations of many different models and from successive generations of models. “This means the projections are more and more reliable,” he says. “I would be confident saying the Mediterranean area will see a general decrease in precipitation in the next decades. I’ve seen this in several generations of models, and we understand the processes underlying this phenomenon. This is fairly reliable information, qualitatively. Saying whether the decrease will be 10% or 50% is a different issue.”

The skill of regional climate forecasting also varies from region to region and with what is being forecast. “Temperature is much, much easier” than precipitation, Giorgi notes. Precipitation depends on processes like atmospheric convection that operate on scales too small for any model to render in detail. Trouble simulating convection also means that higher-latitude climate is easier to project than that of the tropics, where convection dominates.

Regional modeling does have a clear advantage in areas with complex terrain such as mountainous regions, notes UW’s Mass, who does regional forecasting of both weather and climate. In the Pacific Northwest, the mountains running parallel to the coast direct onshore winds upward, predictably wringing rain and snow from the air without much difficult-to-simulate convection.

The downscaling of climate projections should be getting a boost as the Coordinated Regional Climate Downscaling Experiment (CORDEX) gets up to speed. Begun in 2009, CORDEX “is really the first time we’ll get a handle on all these uncertainties,” Giorgi says. Various groups will take on each of the world’s continent-size regions. Multiple global models will be matched with multiple regional models and run multiple times to tease out the uncertainties in each. “It’s a landmark for the regional climate modeling community,” Giorgi says.
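The matrix idea behind such an experiment can be sketched in a few lines. This is only an illustration of crossing global models, regional models and repeated runs, then asking which axis contributes the most spread. The model labels, the stand-in projection function and all numbers are invented for the example, and nothing here reflects CORDEX's actual protocol.

```python
from itertools import product
from statistics import mean, pstdev

# Hypothetical labels; real experiments use named modeling systems.
global_models = ["G1", "G2", "G3"]
regional_models = ["R1", "R2"]
runs = [0, 1]


def fake_projection(g, r, run):
    """Stand-in for a real model run: returns a made-up precipitation change (%)."""
    g_effect = {"G1": -10.0, "G2": -5.0, "G3": 5.0}[g]
    r_effect = {"R1": -2.0, "R2": 2.0}[r]
    noise = 0.5 if run else -0.5
    return g_effect + r_effect + noise


# Run every global-regional-run combination once.
results = {
    (g, r, k): fake_projection(g, r, k)
    for g, r, k in product(global_models, regional_models, runs)
}


def spread_by(axis):
    """Standard deviation of the group means along one axis of the matrix.

    axis 0 = global model, axis 1 = regional model, axis 2 = run.
    """
    groups = {}
    for key, value in results.items():
        groups.setdefault(key[axis], []).append(value)
    return pstdev([mean(values) for values in groups.values()])


print(spread_by(0), spread_by(1), spread_by(2))
```

With these invented inputs the choice of global model dominates the spread, followed by the regional model and then run-to-run noise. Whether real model combinations behave that way is exactly what a coordinated comparison is meant to find out.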

Vol. 288 no. 5474 p. 2113

DOI: 10.1126/science.288.5474.2113

GREENHOUSE WARMING

Dueling Models: Future U.S. Climate Uncertain

Richard A. Kerr

When Congress started funding a global climate change research program in 1990, it wanted to know what all this talk about greenhouse warming would mean for United States voters. Ten years later, a U.S. national assessment, drawing on the best available climate model predictions, concludes that the United States will indeed warm, affecting everything from the western snowpacks that supply California with water to New England’s fall foliage. But on a more detailed level, the assessment often draws a blank. Whether the cornfields of Kansas will be gripped by frequent, severe droughts, as one climate model has it, or blessed with more moisture than they now enjoy, as another predicts, the report can’t say. As much as policy-makers would like to know exactly what’s in store for Americans, the rudimentary state of regional climate science will not soon allow it, and the results of this 3-year effort brought the point home.

“This is the first time we’ve tried to take the physical [climate] system and see what effect it might have on ecosystems and socioeconomic systems,” says Thomas Karl, director of the National Oceanic and Atmospheric Administration’s (NOAA’s) National Climatic Data Center in Asheville, North Carolina, and a co-chair of the committee of experts that pulled together the assessment report “Climate Change Impacts on the United States” (available at http://www.nacc.usgcrp.gov/). “We don’t say we know there’s going to be catastrophic drought in Kansas,” he says. “What we do say is, ‘Here’s the range of our uncertainties.’ This document should get people to think.” If anything is certain, Karl says, it’s that “the past isn’t going to be a very good guide to future climate.”

By chance, the assessment had a handy way to convey the range of uncertainty that regional modeling serves up. The report, which divides the country into eight regions, is based on a pair of state-of-the-art climate models—one from the Canadian Climate Center and one from the U.K. Hadley Center for Climate Research and Prediction—that couple a simulated atmosphere and ocean. The two models solved the problems of simplifying a complex world in different ways, leading to very different predicted U.S. climates. “In terms of temperature, the Canadian model is at the upper end of the warming by 2100” predicted by a range of models, says modeler Eric Barron of Pennsylvania State University, University Park, and a member of the assessment team. “The Hadley model is toward the lower end. The Canadian model is on the dry side, and the Hadley model is on the wet side. We’re capturing a substantial portion of the range of simulations. We tried hard to convey that uncertainty.”

On a broad scale, the report can conclude: “Overall productivity of American agriculture will likely remain high, and is projected to increase throughout the 21st century,” although there will be winners and losers from place to place, and adapting agricultural practice to climate change will be key. Where the models are somewhat consistent, as in the far southwest, the report ventures what could be construed as predictions: “It is likely that some ecosystems, such as alpine ecosystems, will disappear entirely from the region,” or “Higher temperatures are likely to mean … a shorter season for winter activities, such as skiing.” Where the models clash, as on summer soil moisture over the eastern two-thirds of the lower 48 states, it explains the alternatives and suggests ways to adapt, such as switching crops.

The range of possible climate impacts laid out by the models “fairly reflects where we are in the science,” says Karl. But he notes that the effort did lack one important input: Congress mandated the assessment without funding it. “You get what you pay for,” says climatologist Kevin Trenberth of the National Center for Atmospheric Research in Boulder, Colorado. “A lot of it was done hastily.” Karl concedes that everyone involved would have liked to have had more funding delivered more reliably.

Even given more time and money, however, the assessment may not have come up with much better small-scale predictions, given the inherent limitations of the science. Even the best models today can say little that’s reliable about climate change at the regional level, never mind at the scale of a congressional district. Their picture of future climate is fuzzy—they might lump together San Francisco and Los Angeles because the models have such coarse geographic resolution—and the realism of such meteorological phenomena as clouds and precipitation is compromised by the inevitable simplifications of simulating the world in a computer.

“For the most part, these sorts of models give a warming,” says modeler Filippo Giorgi, “but they tend to give very different predictions, especially at the regional level, and there’s no way to say one should be believed over another.” Giorgi and his colleague Raquel Francisco of the Abdus Salam International Center for Theoretical Physics in Trieste, Italy, recently evaluated the uncertainties in five coupled climate models—including the two used in the national assessment—within 23 regions, the continental United States comprising roughly three regions. Giorgi concludes that as the scale of prediction shrinks, reliability drops until for small regions “the model data are not believable at all.”

Add in uncertainties external to the models, such as population and economic growth rates, says modeler Jerry D. Mahlman, director of NOAA’s Geophysical Fluid Dynamics Laboratory in Princeton, New Jersey, and the details of future climate recede toward unintelligibility. Some people in Congress and the policy community had “almost silly expectations there would be enormously useful, small-scale specifics, if you just got the right model. But the right model doesn’t exist,” says Mahlman.

Still, even though the national assessment does not offer the list of region-by-region impacts that Congress might have hoped for, it does show “where we are adaptable and where we are vulnerable,” says global change researcher Stephen Schneider of Stanford University. In 10 years, modelers say, they’ll do better.

The Post-Normal Seduction of Climate Science (Forbes)

William Pentland, 10/14/2011 @ 12:22AM

In early 2002, during a televised press conference, former U.S. Defense Secretary Donald Rumsfeld explained why the lack of evidence linking Saddam Hussein with terrorist groups did not mean there was no connection.

“[T]here are known ‘knowns’ – there are things we know we know,” said Rumsfeld. “We also know there are known ‘unknowns’ – that is to say we know there are some things we do not know. But there are also unknown ‘unknowns’ – the ones we don’t know we don’t know . . . it is the latter category that tend to be the difficult ones.”

Rumsfeld turned out to be wrong about Hussein, but what if he had been talking about global warming? Well, he probably would have been on to something there. Unknowns of any ilk are a real pickle in climate science.

Indeed, uncertainty in climate science has induced a state of severe political paralysis. The trouble is that nobody really knows why. A rash of recent surveys and studies have exonerated most of the usual suspects – scientific illiteracy, industry distortions, skewed media coverage.

Now, the climate-science community is scrambling to crack the code on the “uncertainty” conundrum. Exhibit A: the October 2011 issue of the journal Climatic Change, the closest thing in climate science to gospel truth, which is devoted entirely to the subject of uncertainty.

While I have yet to digest all of the dozen or so essays, I suspect they are only the opening salvo in what will soon become a robust debate about the significance of uncertainty in climate-change science. The first item up on the chopping block is called post-normal science (PNS).

PNS is a model of the scientific process pioneered by Jerome Ravetz and Silvio Funtowicz, which describes the peculiar challenges science encounters where “facts are uncertain, values in dispute, stakes high and decisions urgent.” Unlike “normal” science in the sense described by the philosopher of science Thomas Kuhn, post-normal science commonly crosses disciplinary lines and involves new methods, instruments and experimental systems.

Judith Curry, a professor at Georgia Tech, weighs the wisdom of taking the plunge on PNS in an excellent piece called “Reasoning about climate uncertainty.” Drawing on the work of Dutch wunderkind, Jeroen van der Sluijs, Curry calls on the Intergovernmental Panel on Climate Change to stop marginalizing uncertainty and get real about bias in the consensus building process. Curry writes:

The consensus approach being used by the IPCC has failed to produce a thorough portrayal of the complexities of the problem and the associated uncertainties in our understanding . . . Better characterization of uncertainty and ignorance and a more realistic portrayal of confidence levels could go a long way towards reducing the “noise” and animosity portrayed in the media that fuels the public distrust of climate science and acts to stymie the policy process.

PNS is especially seductive in the context of uncertainty. Not surprisingly, Curry suggests that instituting PNS-like strategies at the IPCC “could go a long way towards reducing the ‘noise’ and animosity” surrounding climate-change science.

While I personally believe PNS is persuasive, the PNS model provokes something closer to revulsion in many people. Last year, members of the U.S. House of Representatives who filed a petition challenging the U.S. Environmental Protection Agency’s Greenhouse Gas Endangerment Finding seemed less sanguine about post-normal science:

. . . the conclusions of organizing bodies, especially the IPCC, cannot be said to reflect scientific “consensus” in any meaningful sense of that word. Instead, they reflect a political movement that has commandeered science to the service of its agenda. This is “post-normal science”: the long-dreaded arrival of deconstructionism to the natural sciences, according to which scientific quality is determined not by its fidelity to truth, but by its fidelity to the political agenda.

It seems unlikely that taking the PNS plunge would appreciably improve the U.S. public’s perception of the credibility, legitimacy and salience of climate-change assessments. This probably says more about Americans than it does about the analytic force of the PNS model.

Let’s face it. Americans do not agree on a whole hell of a lot. And they never have. Many U.S. institutions were deliberately designed to tolerate the coexistence of free states and slave-owning states. Ironically, Americans appear to agree more on climate-change science than other high-profile scientific controversies like the safety of genetically-modified organisms.

While it pains me to admit this, I am increasingly convinced that the IPCC’s role in assessing the science of climate change needs to be scaled back. The IPCC was an overly optimistic experiment in international governance designed for a world that never materialized. The U.N. General Assembly established the IPCC in the months immediately preceding the fall of the Berlin Wall. Only a few years later, the IPCC’s first assessment report and the creation of the U.N. Framework Convention on Climate Change coincided with the collapse of the Soviet Union and the end of the Cold War.

A new world order seemed to be dawning in those days, which is probably why it seemed like a good idea to ask scientists to tell us what constitutes “dangerous climate change.” Two decades and two world trade towers later, the world is a decidedly less hospitable place for institutions like the IPCC.

The proof is in the pudding – or, in this case, the atmosphere.

Climate Change Tumbles Down Europe’s Political Agenda as Economic Worries Take the Stage (N.Y. Times)

By JEREMY LOVELL of ClimateWire. Published: October 13, 2011

LONDON — Climate change has all but fallen off the political agenda across Europe as the resurging economic crisis empties national coffers and shakes economic confidence, and the public and the press turn their attention to more immediate issues of rising fuel bills and joblessness, analysts say.

Sputtering economies, a shift of attention to looming elections and the prospect of little or no movement in the December climate talks in Durban, South Africa, have combined to take the political momentum out of an issue that was a major cause in Europe.

“It is way down the agenda and will not feature in elections,” said Edward Cameron, director of the World Resources Institute think tank’s international climate initiative, on the sidelines of a meeting on climate change at London’s Chatham House think tank. “At a time of joblessness and fiscal crises, it is very difficult to advance the climate change issue.”

That is as true for next year’s presidential elections in the United States as it will be in France, despite the fact that there has been a series of environmental disasters, from the Texas drought this year to Russia’s heat wave and consequent steep rise in wheat prices last year.

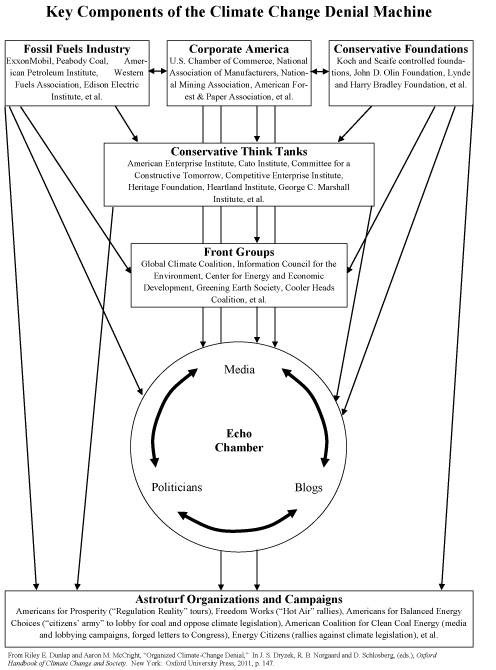

According to acclaimed NASA scientist James Hansen, who has been warning of impending climatic doom for decades, the lack of focus on these events is in no small part due to the fact that scientists are poor communicators while the climate change skeptics have mounted a smoothly run campaign to capitalize on any mistakes and admissions of uncertainty.

“There is a strong campaign by those people who want to continue the fossil fuel business as usual. Climate contrarians … have managed in the public’s eye to muddy the waters enough that there is uncertainty why should we do anything yet,” he said on a visit to London’s Royal Society for a meeting on lessons to be learned from past climate change battles.

“They have been winning the argument in the last several years, even though the science has become clearer,” he added.

Nuclear power issue distracts Berlin

In Germany, where a generous feed-in tariff scheme has produced some 28 gigawatts of wind power capacity and more than 18 GW of solar photovoltaic capacity, Chancellor Angela Merkel’s coalition government was forced into an abrupt U-turn on a controversial move to extend the lives of the country’s fleet of nuclear power plants. There was a political revolt after the March 11 nuclear disaster at Fukushima in Japan.

The oldest seven of Germany’s nuclear plants were closed immediately after Fukushima and will now never reopen, while the remainder will close by 2022.

This has had the perverse effect, in a country proud of its renewable energy efforts, of increasing the use of coal-fired power plants and making it more likely that new coal- or gas-fired plants will be built. The price tag will include higher carbon emissions at exactly the time that Germany, along with the rest of the European Union, is pledged to cut emissions.

While political observers believe the climate change issue will come back to the fore at some point in Germany — a country where the Greens have played a pivotal political role — the nuclear power issue is so politically charged that it is off the agenda for now.

Even in the United Kingdom, which has a huge wind energy program and where the Conservative-Liberal Democrat coalition came to power 15 months ago pledging to be the “greenest government ever,” there are major signs of backsliding. A long-awaited energy bill has been shelved, and renewable energy support costs and carbon emission reduction targets are either under review or about to be.

At the Conservative Party’s annual conference earlier this month, climate change was consigned to a brief debate on the opening Sunday, when delegates were mostly just arriving and finding their way around or still traveling to get there.

Damned by faint praise in London

Prime Minister David Cameron did not mention the issue in his speech to the conference — a performance that usually sets the broad agenda for the following year — and Chancellor of the Exchequer George Osborne caused environmental outrage but satisfaction to the party’s right wing by pledging that the United Kingdom would not go any faster than its E.U. neighbors on emission cuts.

This is despite the fact that the United Kingdom has a legal target to cut its carbon emissions by at least 80 percent below 1990 levels by 2050, with cuts of 35 percent by 2022 and 50 percent by 2025, whereas the European Union’s goal is 20 percent by 2020.

It was widely reported that the 2022 target was only agreed to after a major battle in the Cabinet between supporters of Conservative Osborne and those of Liberal Democrat Energy and Climate Change Minister Chris Huhne. It has since been announced that the carbon targets will be reviewed in 2014.

Even in London, where charismatic Conservative Mayor Boris Johnson came to power in 2008 in part on a green ticket, the issue has largely been parked and replaced by transport in the run-up to next year’s mayoral elections. The city’s aging transport system is feared likely to come under massive strain during the 2012 Olympic Games.

Then there is the strange case of a strategic plan on adapting London to climate change, the draft of which was launched with great fanfare and declarations of urgency in February 2010. It was on the brink of publication in September 2010, but after that, it appeared to have vanished without trace.

At the same time, most members of City Hall’s climate change team, set up under the previous Labour administration, have been moved to other jobs.

‘Too difficult — and not a vote winner’

“Political leaders get it, but the treasuries don’t. The men with the money don’t want to be first movers,” said Nick Mabey, co-founder of environmental think tank E3G. “But the political froth has gone. It has become too difficult — and not a vote winner.”

Compounding that problem, at least in the United Kingdom, has been a series of reports underscoring the likely high cost to households of green energy policies at a time when the prices of domestic electricity and gas are already rising sharply.

A recent opinion poll found that the climate change issue has been replaced by concerns over rising fuel bills and energy security.

But Mabey is not too concerned. While the subject may be off the immediate political agenda, behind the scenes, the more enlightened corporate leaders and investment fund managers have been making their own calculations. They are moving their money into the low-carbon economic transformation that in some cases is already profitable and in many eyes essential and inevitable.

The main danger, they say, is that if climate change as a driver of action is allowed to languish too long and become too invisible while energy becomes the main motivator, it will become far harder to resurrect climate change.

For Mabey and WRI’s Cameron, while the deep and seemingly returning global economic crisis has proved a serious distraction internationally as well as domestically, all is not lost.

For a number of reasons, including the rise of a new and major climate player — China — and a series of new scientific reports on climate change due over the next two or three years, 2015 will be the next pivotal moment for the world to take collective action, they say.

“Climate change doesn’t keep people awake at night. Our task for the next few years is to move it back up the political agenda again,” said WRI’s Cameron.

Copyright 2011 E&E Publishing. All Rights Reserved.

Group Urges Research Into Aggressive Efforts to Fight Climate Change (N.Y. Times)

By CORNELIA DEAN, Published: October 4, 2011

With political action on curbing greenhouse gases stalled, a bipartisan panel of scientists, former government officials and national security experts is recommending that the government begin researching a radical fix: directly manipulating the Earth’s climate to lower the temperature.

Members said they hoped that such extreme engineering techniques, which include scattering particles in the air to mimic the cooling effect of volcanoes or stationing orbiting mirrors in space to reflect sunlight, would never be needed. But in its report, to be released on Tuesday, the panel said it is time to begin researching and testing such ideas in case “the climate system reaches a ‘tipping point’ and swift remedial action is required.”

The 18-member panel was convened by the Bipartisan Policy Center, a research organization based in Washington founded by four senators — Democrats and Republicans — to offer policy advice to the government. In interviews, some of the panel members said they hoped that the mere discussion of such drastic steps would jolt the public and policy makers into meaningful action in reducing greenhouse gas emissions, which they called the highest priority.

The idea of engineering the planet is “fundamentally shocking,” David Keith, an energy expert at Harvard and the University of Calgary and a member of the panel, said. “It should be shocking.”

In fact, it is an idea that many environmental groups have rejected as misguided and potentially dangerous.

Jane Long, an associate director of the Lawrence Livermore National Laboratory and the panel’s co-chairwoman, said that by spewing greenhouse gases into the atmosphere, human activity was already engaged in climate modification. “We are doing it accidentally, but the Earth doesn’t know that,” she said, adding, “Going forward in ignorance is not an option.”

The panel, the Task Force on Climate Remediation Research, suggests that the White House Office of Science and Technology Policy begin coordinating research and estimates that a valuable effort could begin with a few million dollars in financing over the next few years.

One reason that the United States should embrace such research, the report suggests, is the threat of unilateral action by another country. Members say research is already under way in Britain, Germany and possibly other countries, as well as in the private sector.

“A conversation about this is going to go on with us or without us,” said David Goldston, a panel member who directs government affairs at the Natural Resources Defense Council and is a former chief of staff of the House Committee on Science. “We have to understand what is at stake.”

In interviews, panelists said again and again that the continuing focus of policy makers and experts should be on reducing emissions of carbon dioxide and other greenhouse gases. But several acknowledged that significant action remained a political nonstarter. Last month, for example, the Obama administration told the federal Environmental Protection Agency to hold off on tightening ozone standards, citing complications related to the weak economy.

According to the United Nations Intergovernmental Panel on Climate Change, greenhouse gas emissions have contributed to raising the global average surface temperatures by about 1.3 degrees Fahrenheit in the past 100 years.

It is impossible to predict how much impact the report will have. But given the panelists’ varied political and professional backgrounds, they seem likely to achieve one major goal: starting a broader conversation on the issue. Some climate experts have been working on it for years, but they have largely kept their discussions to themselves, saying they feared giving the impression that there might be quick fixes for climate change.

“Climate adaptation went through the same period of concern,” Mr. Goldston said, referring to the onetime reluctance of some researchers to discuss ways in which people, plants and animals might adjust to climate change. Now, he said, similar reluctance to discuss geoengineering is giving way, at least in part because “it’s possible we may have to do this no matter what.”

Although the techniques, which fall into two broad groups, are more widely known as geoengineering, the panel prefers “climate remediation.”

The first is carbon dioxide removal, in which the gas is absorbed by plants, trapped and stored underground or otherwise removed from the atmosphere. The methods are “generally uncontroversial and don’t introduce new global risks,” said Ken Caldeira, a climate expert at Stanford University and a panel member. “It’s mostly a question of how much do these things cost.”

Controversy arises more with the second group of techniques, solar radiation management, which involves increasing the amount of solar energy that bounces back into space before it can be absorbed by the Earth. They include seeding the atmosphere with reflective particles, launching giant mirrors above the earth or spewing ocean water into the air to form clouds.

These techniques are thought to pose a risk of upsetting earth’s natural rhythms. With them, Dr. Caldeira said, “the real question is what are the unknown unknowns: Are you creating more risk than you are alleviating?”

At the influential blog Climate Progress, Joe Romm, a fellow at the Center for American Progress, has made a similar point, likening geo-engineering to a dangerous course of chemotherapy and radiation to treat a condition curable through diet and exercise — or, in this case, emissions reduction.

The panel rejected any immediate application of climate remediation techniques, saying too little is known about them. In 2009, the Royal Society in Britain said much the same, assessing geoengineering technologies as “technically feasible” but adding that their potential costs, effectiveness and risks were unknown.

Similarly, in a 2010 review of federal research that might be relevant to climate remediation, the federal Government Accountability Office noted that “major uncertainties remain on the efficacy and potential consequences” of the approach. Its report also recommended that the White House Office of Science and Technology Policy “establish a clear strategy for geoengineering research.”

John P. Holdren, who heads that office, declined interview requests. He issued a statement reiterating the Obama administration’s focus on “taking steps to sensibly reduce pollution that is contributing to climate change.”

Yet in an interview with The Associated Press in 2009, Dr. Holdren said the possible risks and benefits of geoengineering should be studied very carefully because “we might get desperate enough to want to use it.”

In a draft plan made public on Friday, the U.S. Global Change Research Program, a coordinating effort administered by his office, outlined its own climate change research agenda, including studies of the impacts of rapid climate change.

The plan said that climate-related projections would be crucial to future studies of the “feasibility, effectiveness and unintended consequences of strategies for deliberate, large-scale manipulations of Earth’s environment,” including carbon dioxide removal and solar radiation management.

Many countries fault the United States for government inaction on climate change, especially given its longtime role as a chief contributor to the problem.

Frank Loy, a panelist and former chief climate negotiator for the United States, suggested that people around the world would see past those issues if the United States embraced geoengineering studies, provided that it was “very clear about what kind of research is undertaken and what the safeguards are.”

This article has been revised to reflect the following correction:

Correction: October 4, 2011

An earlier version of this article mistakenly referred to Frank Loy as the nation’s chief climate negotiator; he is a former chief climate negotiator. It also misstated the name of a federal agency that reported on the potential effectiveness of climate remediation. It is the Government Accountability Office, not the General Accountability Office.

The scientific finding that settles the climate-change debate (Washington Post)

By Eugene Robinson, Published: October 24

For the clueless or cynical diehards who deny global warming, it’s getting awfully cold out there.

The latest icy blast of reality comes from an eminent scientist whom the climate-change skeptics once lauded as one of their own. Richard Muller, a respected physicist at the University of California, Berkeley, used to dismiss alarmist climate research as being “polluted by political and activist frenzy.” Frustrated at what he considered shoddy science, Muller launched his own comprehensive study to set the record straight. Instead, the record set him straight.

“Global warming is real,” Muller wrote last week in The Wall Street Journal.

Rick Perry, Herman Cain, Michele Bachmann and the rest of the neo-Luddites who are turning the GOP into the anti-science party should pay attention.

“When we began our study, we felt that skeptics had raised legitimate issues, and we didn’t know what we’d find,” Muller wrote. “Our results turned out to be close to those published by prior groups. We think that means that those groups had truly been careful in their work, despite their inability to convince some skeptics of that.”

In other words, the deniers’ claims about the alleged sloppiness or fraudulence of climate science are wrong. Muller’s team, the Berkeley Earth Surface Temperature project, rigorously explored the specific objections raised by skeptics — and found them groundless.

Muller and his fellow researchers examined an enormous data set of observed temperatures from monitoring stations around the world and concluded that the average land temperature has risen 1 degree Celsius — or about 1.8 degrees Fahrenheit — since the mid-1950s.
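The Celsius-to-Fahrenheit figure quoted above is a conversion of a temperature change, not of a thermometer reading, so only the 9/5 scale factor applies and the 32-degree offset does not. A minimal sketch of that arithmetic follows; the function names are illustrative and do not come from the Berkeley Earth project.

```python
def delta_c_to_f(delta_c: float) -> float:
    """Convert a temperature CHANGE from Celsius degrees to Fahrenheit degrees.

    Only the scale factor applies to a difference; the +32 offset cancels out.
    """
    return delta_c * 9.0 / 5.0


def absolute_c_to_f(temp_c: float) -> float:
    """Convert an absolute thermometer reading from Celsius to Fahrenheit."""
    return temp_c * 9.0 / 5.0 + 32.0


# The 1 degree Celsius rise in average land temperature reported in the article.
rise_f = delta_c_to_f(1.0)
print(f"A rise of 1.0 C is a rise of about {rise_f:.1f} F")
```

Using the absolute-reading formula on a change would wrongly add 32 degrees, which is a common slip when restating warming figures.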

This agrees with the increase estimated by the United Nations-sponsored Intergovernmental Panel on Climate Change. Muller’s figures also conform with the estimates of those British and American researchers whose catty e-mails were the basis for the alleged “Climategate” scandal, which was never a scandal in the first place.

The Berkeley group’s research even confirms the infamous “hockey stick” graph — showing a sharp recent temperature rise — that Muller once snarkily called “the poster child of the global warming community.” Muller’s new graph isn’t just similar, it’s identical.

Muller found that skeptics are wrong when they claim that a “heat island” effect from urbanization is skewing average temperature readings; monitoring instruments in rural areas show rapid warming, too. He found that skeptics are wrong to base their arguments on the fact that records from some sites seem to indicate a cooling trend, since records from at least twice as many sites clearly indicate warming. And he found that skeptics are wrong to accuse climate scientists of cherry-picking the data, since the readings that are often omitted — because they are judged unreliable — show the same warming trend.

Muller and his colleagues examined five times as many temperature readings as did other researchers — a total of 1.6 billion records — and now have put that merged database online. The results have not yet been subjected to peer review, so technically they are still preliminary. But Muller’s plain-spoken admonition that “you should not be a skeptic, at least not any longer” has reduced many deniers to incoherent grumbling or stunned silence.

Not so, I predict, with the blowhards such as Perry, Cain and Bachmann, who, out of ignorance or perceived self-interest, are willing to play politics with the Earth’s future. They may concede that warming is taking place, but they call it a natural phenomenon and deny that human activity is the cause.

It is true that Muller made no attempt to ascertain “how much of the warming is due to humans.” Still, the Berkeley group’s work should help lead all but the dimmest policymakers to the overwhelmingly probable answer.

We know that the rise in temperatures over the past five decades is abrupt and very large. We know it is consistent with models developed by other climate researchers that posit greenhouse gas emissions — the burning of fossil fuels by humans — as the cause. And now we know, thanks to Muller, that those other scientists have been both careful and honorable in their work.

Nobody’s fudging the numbers. Nobody’s manipulating data to win research grants, as Perry claims, or making an undue fuss over a “naturally occurring” warm-up, as Bachmann alleges. Contrary to what Cain says, the science is real.

It is the know-nothing politicians — not scientists — who are committing an unforgivable fraud.

Bleak Prospects for Avoiding Dangerous Global Warming (Science)

by Richard A. Kerr on 23 October 2011, 1:00 PM

The bad news just got worse: A new study finds that reining in greenhouse gas emissions in time to avert serious changes to Earth’s climate will be at best extremely difficult. Current goals for reducing emissions fall far short of what would be needed to keep warming below dangerous levels, the study suggests. To succeed, we would most likely have to reverse the rise in emissions immediately and follow through with steep reductions through the century. Starting later would be far more expensive and require unproven technology.

Published online today in Nature Climate Change, the new study merges model estimates of how much greenhouse gas society might put into the atmosphere by the end of the century with calculations of how climate might respond to those human emissions. Climate scientist Joeri Rogelj of ETH Zurich and his colleagues combed the published literature for model simulations that keep global warming below 2°C at the lowest cost. They found 193 examples. Modelers running such optimal-cost simulations tried to include every factor that might influence the amount of greenhouse gases society will produce, including the rate of technological progress in burning fuels efficiently, the amount of fossil fuels available, and the development of renewable fuels. The researchers then fed the full range of emissions from the scenarios into a simple climate model to estimate the odds of avoiding a dangerous warming.

The results suggest challenging times ahead for decision makers hoping to curb the greenhouse. Strategies that are both plausible and likely to succeed call for emissions to peak this decade and start dropping right away. They should be well into decline by 2020 and far less than half of current emissions by 2050. Only three of the 193 scenarios examined would be very likely to keep the warming below the danger level, and all of those require heavy use of energy systems that actually remove greenhouse gases from the atmosphere. That would require, for example, both creating biofuels and storing the carbon dioxide from their combustion in the ground.

“The alarming thing is very few scenarios give the kind of future we want,” says climate scientist Neil Edwards of The Open University in Milton Keynes, U.K. Both he and Rogelj emphasize the uncertainties inherent in the modeling, especially on the social and technological side, but the message seems clear to Edwards: “What we need is at the cutting edge. We need to be as innovative as we can be in every way.” And even then, success is far from guaranteed.

A skeptical physicist ends up confirming climate data (Washington Post)

Posted by Brad Plumer at 04:18 PM ET, 10/20/2011

Back in 2010, Richard Muller, a Berkeley physicist and self-proclaimed climate skeptic, decided to launch the Berkeley Earth Surface Temperature (BEST) project to review the temperature data that underpinned global-warming claims. Remember, this was not long after the Climategate affair had erupted, at a time when skeptics were griping that climatologists had based their claims on faulty temperature data.

Muller’s stated aims were simple. He and his team would scour and re-analyze the climate data, putting all their calculations and methods online. Skeptics cheered the effort. “I’m prepared to accept whatever result they produce, even if it proves my premise wrong,” wrote Anthony Watts, a blogger who has criticized the quality of the weather stations in the United States that provide temperature data. The Charles G. Koch Foundation even gave Muller’s project $150,000, and the Koch brothers, recall, are hardly fans of mainstream climate science.

So what are the end results? Muller’s team appears to have confirmed the basic tenets of climate science. Back in March, Muller told the House Science and Technology Committee that, contrary to what he expected, the existing temperature data was “excellent.” He went on: “We see a global warming trend that is very similar to that previously reported by the other groups.” And, today, the BEST team has released a flurry of new papers that confirm that the planet is getting hotter. As the team’s two-page summary flatly concludes, “Global warming is real.”

[Chart comparing the BEST findings with existing data]

The BEST team tried to take a number of skeptic claims seriously, to see if they panned out. Take, for instance, their paper on the “urban heat island effect.” Watts has long argued that many weather stations collecting temperature data could be biased by being located in cities. Since cities are naturally warmer than rural areas (because building materials retain more heat), the uptick in recorded temperatures might be exaggerated, an illusion spawned by increased urbanization. So Muller’s team decided to compare overall temperature trends with only those weather stations based in rural areas. And, as it turns out, the trends match up well. “Urban warming does not unduly bias estimates of recent global temperature change,” Muller’s group concluded.
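The comparison described above, fitting one trend to all stations and another to rural stations only, can be sketched in a few lines. This is a rough illustration on synthetic data, not the BEST team’s actual method or results: the station series, the per-year trends (0.016 and 0.017 degrees), the noise level and the function names are all invented for the example.

```python
import random


def linear_trend(years, temps):
    """Ordinary least-squares slope of temps against years (degrees per year)."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(temps) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, temps))
    sxx = sum((x - mean_x) ** 2 for x in years)
    return sxy / sxx


def mean_series(stations):
    """Average several station series year by year."""
    return [sum(vals) / len(vals) for vals in zip(*stations)]


# Synthetic stations (hypothetical numbers, NOT real measurements).
rng = random.Random(0)
years = list(range(1950, 2011))


def make_station(trend_per_year):
    """Linear warming plus random year-to-year noise."""
    return [trend_per_year * (y - 1950) + rng.gauss(0.0, 0.2) for y in years]


rural = [make_station(0.016) for _ in range(30)]
urban = [make_station(0.017) for _ in range(10)]  # assumed slight urban excess

all_trend = linear_trend(years, mean_series(rural + urban))
rural_trend = linear_trend(years, mean_series(rural))
print(f"all stations: {all_trend * 10:.3f} degrees per decade")
print(f"rural only:   {rural_trend * 10:.3f} degrees per decade")
```

If the two slopes come out close, urban siting is not driving the overall trend, which is the shape of the argument the BEST paper makes with real station records.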

That shouldn’t be so jaw-dropping. Previous analyses — like this one from the National Oceanic and Atmospheric Administration — have responded to Watts’ concerns by showing that a few flawed stations don’t warp the overall trend. But maybe Muller’s team can finally put this controversy to rest, right? Well, not yet. As Watts responds over at his site, the BEST papers still haven’t been peer-reviewed (an important caveat, to be sure). And Watts isn’t pleased with how much pre-publication hype the studies are getting. But so far, what we have is a prominent skeptic casting a critical eye at the data and finding, much to his own surprise, that the data holds up.

US study confirms warming of the Earth’s surface (BBC)

Richard Black

BBC News

Group says weather stations give accurate data on warming

A new analysis by a group of scientists in the United States has concluded that the Earth’s surface is getting warmer.

Since 1950, the average temperature over land has risen by one degree Celsius, according to the findings of the Berkeley Earth Project.

The Berkeley Earth Project used new methods and new data, but its findings follow the same climate trend seen by NASA and the UK Met Office, among others.

“Our biggest surprise was that the new results agree with the warming values published previously by other teams in the United States and Britain,” said Professor Richard Muller, who set up the Berkeley Earth Project at the University of California, bringing together ten renowned scientists.

“This confirms that these studies were done carefully and that the potential biases identified by global warming sceptics did not seriously affect their conclusions,” he added.

The group also reports that although the warming effect near cities, known as the urban heat island effect, is real and well established, it is not responsible for the warming recorded by most weather stations around the world.

Scepticism

The group examined the claims of “sceptic” bloggers who say that weather station data do not show a true global warming trend.

They say that many weather stations have registered warming because they are located near cities, and cities grow, increasing the heat.

However, the group found about 40,000 weather stations around the world whose records have been stored in digital form.

The researchers then developed a new way of analysing the data to detect the trend in global land temperatures since 1800.

The result was a graph very similar to those produced by the world’s three most important groups, whose work had been criticised by sceptics.

Two of these three records are maintained in the United States, by the National Oceanic and Atmospheric Administration (NOAA) and NASA. The third is a collaboration between the UK Met Office and the Climatic Research Unit at the University of East Anglia (UEA).

Professor Phil Jones of the UEA’s Climatic Research Unit greeted the group’s work with caution and said he looked forward to reading “the final report” when it is published.

“These initial findings are very encouraging and echo our own results and our conclusion that the impact of urban heat islands on the global average temperature is minimal,” he said.

Sceptics say proximity to cities distorts station data

Phil Jones was one of the British scientists accused of manipulating data to exaggerate human influence on global warming. The scientists were cleared in 2010.

The case began in 2009 with the leak of Jones’s e-mails, in which the scientist appeared to suggest that some global warming research data be left out of presentations to be given at the UN climate change conference.

The episode gave ammunition to those sceptical of the role of humans in climate change. But the University of East Anglia’s inquiry concluded that there was no doubt about the scientists’ rigour and honesty.

Not yet published

Bob Ward, policy and communications director at the Grantham Research Institute on Climate Change and the Environment in London, said that global warming is clear.

“So-called sceptics should drop their claims that the rise in global average temperature can be attributed to the impact of growing cities,” he said.

The Berkeley Earth Project team decided to release its research data initially on its own website rather than in a specialist journal.

The researchers are asking readers to comment and offer their views before the manuscripts are prepared for formal scientific publication.

Richard Muller, who set up the research group, said that this free circulation of information marks a return to the way science ought to be done, rather than simply publishing the study in scientific journals.

Rick Perry officials spark revolt after doctoring environment report (The Guardian)

Scientists ask for names to be removed after mentions of climate change and sea-level rise taken out by Texas officials

Suzanne Goldenberg, US environment correspondent

guardian.co.uk, Friday 14 October 2011 13.05 BST

Rick Perry’s administration deleted references to climate change and sea-level rise from the report. Photograph: Evan Vucci/AP

Officials in Rick Perry’s home state of Texas have set off a scientists’ revolt after purging mentions of climate change and sea-level rise from what was supposed to be a landmark environmental report. The scientists said they were disowning the report on the state of Galveston Bay because of political interference and censorship from Perry appointees at the state’s environmental agency.

By academic standards, the protest amounts to the beginnings of a rebellion: every single scientist associated with the 200-page report has demanded their names be struck from the document. “None of us can be party to scientific censorship so we would all have our names removed,” said Jim Lester, a co-author of the report and vice-president of the Houston Advanced Research Centre.

“To me it is simply a question of maintaining scientific credibility. This is simply antithetical to what a scientist does,” Lester said. “We can’t be censored.” Scientists see Texas as at high risk because of climate change, from the increased exposure to hurricanes and extreme weather on its long coastline to this summer’s season of wildfires and drought.

However, Perry, in his run for the Republican nomination, has elevated denial of science, from climate change to evolution, to an art form. He opposes any regulation of industry, and has repeatedly challenged the authority of the Environmental Protection Agency.

Texas is the only state to refuse to sign on to the federal government’s new regulations on greenhouse gas emissions. “I like to tell people we live in a state of denial in the state of Texas,” said John Anderson, an oceanographer at Rice University, and author of the chapter targeted by the government censors.

That state of denial percolated down to the leadership of the Texas Commission on Environmental Quality. The agency chief, who was appointed by Perry, is known to doubt the science of climate change. “The current chair of the commission, Bryan Shaw, commonly talks about how human-induced climate change is a hoax,” said Anderson.

But scientists said they still hoped to avoid a clash by simply avoiding direct reference to human causes of climate change and by sticking to materials from peer-reviewed journals. However, that plan began to unravel when officials from the agency made numerous unauthorised changes to Anderson’s chapter, deleting references to climate change, sea-level rise and wetlands destruction.

“It is basically saying that the state of Texas doesn’t accept science results published in Science magazine,” Anderson said. “That’s going pretty far.”

Officials even deleted a reference to the sea level at Galveston Bay rising five times faster than the long-term average – 3mm a year compared to 0.5mm a year – which Anderson noted was a scientific fact. “They just simply went through and summarily struck out any reference to climate change, any reference to sea level rise, any reference to human influence – it was edited or eliminated,” said Anderson. “That’s not scientific review that’s just straight forward censorship.”

Mother Jones has tracked the changes. The agency has defended its actions. “It would be irresponsible to take whatever is sent to us and publish it,” Andrea Morrow, a spokeswoman, said in an emailed statement. “Information was included in a report that we disagree with.”

She said Anderson’s report had been “inconsistent with current agency policy”, and that he had refused to change it. She refused to answer any questions. Campaigners said the censorship by the Texas state authorities was a throwback to the George Bush era when White House officials also interfered with scientific reports on climate change.

In the last few years, however, such politicisation of science has spread to the states. In the most notorious case, Virginia’s attorney general Ken Cuccinelli, who is a professed doubter of climate science, has spent a year investigating grants made to a prominent climate scientist, Michael Mann, when he was at a state university in Virginia.

Several courts have rejected Cuccinelli’s demands for a subpoena for the emails. In Utah, meanwhile, Mike Noel, a Republican member of the Utah state legislature, called on the state university to sack a physicist who had criticised climate science doubters.

The university rejected Noel’s demand, but the physicist, Robert Davies, said such actions had had a chilling effect on the state of climate science. “We do have very accomplished scientists in this state who are quite fearful of retribution from lawmakers, and who consequently refuse to speak up on this very important topic. And the loser is the public,” Davies said in an email.

“By employing these intimidation tactics, these policymakers are, in fact, successful in censoring the message coming from the very institutions whose expertise we need.”

Expedition in Amazonas to promote indigenous astronomy during National Science and Technology Week (Jornal A Crítica, Manaus)

JC e-mail 4365, 17 October 2011.

The indigenous calendar of the Dessana people links constellations to changes in the weather and to the Amazonian ecosystem.

The surucucu (bushmaster) is not only the most dangerous snake in the Amazon. For the indigenous Dessana people, it is also one of the many constellations that help them identify the cycle of the rivers, the piracema season (when fish migrate upstream to spawn) and the onset of the rains, and that signal the ideal moment for holding rituals.

In indigenous astronomy, October is the month when the surucucu constellation (añá in the Dessana language), the equivalent of Scorpius in Western astronomy, disappears on the western horizon. The disappearance of the snake figure is associated with the end of the low-water period. The Dessana have 13 other constellations, all associated with climatic changes.

To raise awareness of the little-known indigenous astronomy, a group of scholars will lead a two-day expedition on the 19th to a Dessana village in the Reserva de Desenvolvimento Sustentável Tupé (Tupé Sustainable Development Reserve), in Manaus.

Expedition – The community is made up of Dessana families who moved from the upper Rio Negro region, in the north of Amazonas, and gave new meaning to their traditions, cosmologies and rituals in the community where they settled, in the rural zone of Manaus. The astronomer Germano Afonso, of the Museu da Amazônia (Musa), who has studied indigenous constellations in Brazil for 20 years, will coordinate the expedition. He has been working with the Dessana for two years.

He describes the program as a “dialogue” between indigenous astronomy and scientific knowledge. “It will be a dialogue between the two kinds of knowledge. We will listen to the indigenous people and at the same time bring a small weather station that measures temperature and speed. Science observes with instruments; the indigenous person sees this empirically,” he explained.

A boat from the Municipal Department of Education (Semed) will carry those interested in taking part in the experience. “We will do astronomy, meteorology and chemistry activities with the indigenous people. It will be an activity integrated into Science and Technology Week,” Afonso explains.

The figure the indigenous people identify as the surucucu is most visible at around 7pm, in the west. After the surucucu comes the turn of the tatu (armadillo), another species common in Amazonian fauna.

Disasters – Germano Afonso says that indigenous peoples observe the sky, the moon and the constellations and know exactly the right time to clear and plant their fields, and to prepare for a flood or a drought. They also know the ideal moment to hold a ritual.

The difference from Western scientific knowledge is that they do not use equipment and technology to predict changes in the weather and the climate. But there is a more significant difference: indigenous people do not fall victim to landslides, major floods or extraordinarily low waters.

“Who takes better care of the environment and avoids environmental disasters? The Indians know exactly when heavy rain is going to fall and we will have a big flood. But they don’t die because of it,” stresses Afonso, who is of Guarani indigenous descent.

Secitece hosts the first “Ceará Faz Ciência” (“Ceará Does Science”) Forum (Funcap)

BY ADMIN, 13/10/2011

With the Secitece Communications Office

The event will be held on October 17 and 18 in the auditorium of the Planetarium at the Centro Dragão do Mar de Arte e Cultura.

On October 17 and 18, the Secretariat of Science, Technology and Higher Education (Secitece) will hold the “I Fórum Ceará Faz Ciência”, on the theme “Climate change, natural disasters and risk prevention”. The initiative is part of the state program for National Science and Technology Week.

The Secretary of Science and Technology, René Barreira, will open the event on the 17th at 5pm in the auditorium of the Planetário Rubens de Azevedo. On that occasion, tribute will be paid to the Ceará researcher Expedito Parente, known as the father of biodiesel, who died in September.

On the 18th, from 9am, activities will resume with the following talks: “A giant wave on the Brazilian coast. Is it possible?”, with Prof. Francisco Brandão, head of the Seismology Laboratory of the Coordenadoria Estadual de Defesa Civil (State Civil Defense Coordination Office), and “The four seasons of the year in Ceará: notice their effects on physiology and the environment”, given by Dermeval Carneiro, professor of Physics and Astronomy, president of the Sociedade Brasileira dos Amigos da Astronomia and director of the Planetário Rubens de Azevedo – Dragão do Mar.

In the afternoon, from 2:30pm, it will be the turn of the talk “Natural Disasters: how to prevent and act in risk situations”, with Lieutenant Colonel Leandro Silva Nogueira, executive secretary of the Coordenadoria Estadual de Defesa Civil. To close the Forum, Sonia Barreto Perdigão, an agronomist at the Department of Water Resources and Environment of the Fundação Cearense de Meteorologia e Recursos Hídricos (Funceme), will give a talk on “Climate Change and Desertification in Ceará” at 4:30pm.

Those interested in taking part in the I Fórum “Ceará Faz Ciência”, to be held on October 17 and 18 at Dragão do Mar, in Fortaleza, should pre-register. The form is available on the Secitece website. Participation is free.

Event details

I Fórum Ceará Faz Ciência

Date: 17 and 18 October 2011

Venue: Auditorium of the Planetário Rubens de Azevedo

Information: (85) 3101-6466

Registration is free.

Tim Ingold: Designing environments for life – a sketch (Blog Noquetange)

Designing environments for life – a sketch*

By Maycon Lopes

10/10/2011

Intent on thinking through an anthropology of coming-into-being, an anthropology of becoming, that is, one that is not about things but moves along with them, Ingold sketched out critiques and proposals for how we might trail our way into the future, in what the organizers had called, since the start of the lecture series at UFMG, his “big lecture”. To trail is not to follow a predefined path; it is to leave footprints as one goes, to mark a track, to trace. The trace is like a drawing, a design, and the act of making it already shifts us out of the condition of “mere users” of design. For Ingold, designs have to fail so that the future can appropriate them and destroy them. They could be thought of as predictions, and every prediction is wrong. Or, following a Deleuzian line of analysis, design could be understood as an attempt to control becoming.

Tim Ingold proposes that design be conceived as one aspect of a life process whose essence is openness and improvisation: less a predetermined goal than the carrying on of a movement already under way. In this sense, design would be the production of futures rather than their definition. This idea contrasts, however (and this, I believe, is the point both of Ingold and of this post), with the way nature has predominantly been understood in technoscientific discourse: given precise objectives, the environment would be nothing more than a means, a manipulable thing, life sequestered for the sake of reaching particular ends. The nature of scientists and policymakers is known through calculations, graphs and images independent of those of the world we know (the phenomenal world), with which we are familiar through the very act of dwelling in it. This artificial dissociation, which appears to us in the figure of the “globe”, a space we do not feel we belong to, as opposed to the earth we actually inhabit, is a wholly inadequate way of addressing the constant threats nature faces. The same dissociation opens a gap between the everyday world and the world projected by the instruments of knowledge mentioned above, setting the inhabitant’s knowledge against scientific knowledge, as if scientists did not inhabit a world.

A fashionable expression such as “sustainable development”, generally used by politicians and large corporations alike with the aim of protecting profit, rests on accounting records or, according to Tim Ingold, on the perspective of the ex-habitant. We others, the inhabitants, have no access to this language of accounting, and so we are robbed of the responsibility of caring for the environment and (vertically) expelled from it, instead of making it a common project by way of what Ingold called “designing environments for life”. This ontological bond would therefore rest on the unity of life, a unity that neither the taxonomic catalogue called “biodiversity” nor the Kantian conception of the surface, the stage for our skills, can account for. In the name of a social life always indivisible from ecological life (if it is even possible to polarize them this way, Ingold stresses), Tim Ingold strives for a genealogy of the unity of life, a historical sharing between society and nature, the latter generally being conceived as facticity, the brute stuff of the world.

For Ingold, concepts are inherently political, and so it suits some to distinguish humans from non-humans: although both are in a single world, only the former, by way of “human action”, are deemed capable of building. Humans would thus be “less natural”, yet mutually involved throughout the organic world. What are we to think of the wind, the sun, the trees and their roots (where would their walking lie)? In order to avoid, and not aggravate, this unfortunate dichotomy, he proposes conceiving of the environment as a zone of mutual involvement, in which relations among beings take place precisely through bundles of lines, such as light, air and paths. Against coercive attempts to suppress the environment by covering it with hard, impermeable surfaces, Ingold offers rolling over the world and not through it. According to him, rolling over means our involvement with the environment, our own experience, which differs from the global view of technoscience. This is where design is situated: not design that innovates, but design that improvises. Innovation would come from reading “backwards”, while improvisation is a reading forwards, along the way the world unfolds. For the anthropologist, all improvisation consists in creativity, and creativity already implies growth. Design does not predict; design anticipates.