USP archaeologist and anthropologist explains how he formulated a theory on the arrival of humans in the Americas

© LEO RAMOS

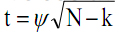

He is the father of Luzia, an 11,000-year-old human skull, the oldest found so far in the Americas, which belonged to an extinct people of hunter-gatherers from the Lagoa Santa region, on the outskirts of Belo Horizonte. The archaeologist and anthropologist Walter Neves, coordinator of the Laboratory for Human Evolutionary Studies at the Institute of Biosciences of the University of São Paulo (USP), was not the one who recovered this ancient skeleton from a prehistoric site, but it was thanks to his studies that Luzia, as he named her, became the symbol of his controversial theory of the peopling of the Americas: the two biological components model.

Formulated more than two decades ago, the theory holds that our continent was colonized by two waves of Homo sapiens coming from Asia. The first migratory wave is thought to have occurred some 14,000 years ago and to have been made up of individuals who resembled Luzia, with a non-Mongoloid morphology similar to that of present-day Australians and Africans, but who left no descendants. The second wave is thought to have arrived some 12,000 years ago, and its members had the physical type characteristic of Asians, from whom modern Amerindians derive.

In this interview, Neves, a scientist as combative as he is popular, who enjoys a good academic fight, talks about Luzia and his career.

How did your interest in science begin?

I come from a poor family from Três Pontas, Minas Gerais. For some reason, at age 8 I already knew I wanted to be a scientist. At 12, I knew I wanted to work on human evolution. I have no explanation for it.

When did you come to São Paulo?

It was in 1970, after the World Cup. We migrated to São Bernardo, where I lived for much of my life.

What was your life like?

Everyone at home had to work. The family was small: my father, my mother, me and my brother, who is three years older. When we arrived in São Paulo, my father was a bricklayer and my mother sold Yakult on the street. I was 12 going on 13. A year after arriving here, I started working. Once a week I sold pasta at a stall run by market vendors in my neighborhood. My first steady job was as a general helper at Malas Primicia, making suitcase locks. I hated it. It was boring and required no skill. It did not last long. A month later I was hired at the Rolls-Royce aircraft turbine plant in São Bernardo. I benefited a great deal from that environment, which was refined, full of rules and respectful of hierarchy. I think I developed my excellent administrative skills in the years I spent at Rolls-Royce. I got first-rate bureaucratic training. Every day, when we arrived at the factory, there was a portrait of the Queen of England and we had to bow to it. I thought it was the greatest thing. For someone who had lived in the backwoods, it was an upgrade in glamour. I was 13 going on 14.

What did you do there?

I started as an office boy and by the time I left I was assistant to the technical director's office. Rolls-Royce in Brazil received turbines for repair and general overhaul. My boss was the director of that area and I helped him with everything. I worked eight hours a day and studied at night. I went to public school and entered USP, in biology, in 1976. We had public secondary education of excellent quality.

Why did you choose biology?

I had always thought that the way to study human evolution was to study history. On a visit to USP during high school, I came across the Institute of Prehistory, which no longer exists. The institute had been founded by Paulo Duarte and was housed in the Zoology building, where Ecology is today. On that visit, I went to the History building looking for information about the program, and they told me that if I studied history I would learn nothing about human evolution. Walking down Rua do Matão, I saw a small sign that read Institute of Prehistory, and there I met the archaeologist Dorath Uchôa. There I saw replicas of fossil hominids and prehistoric skeletons excavated from the sambaquis (shell mounds) on the Brazilian coast. So I told Dorath: “I want to do archaeology and study skeletons.” And she said: “Don't study history. Do either biology or medicine.” Medicine was out because it was a full-time program. I chose biology. It was a good deal. In 1978, while still an undergraduate, I was hired by the Institute of Prehistory as a technician.

What year of college were you in?

Between the second and third, I think. When I completed my teaching degree (licenciatura) in 1980, I was hired as a researcher and professor. There was no competitive hiring exam. It was by appointment.

Was it an independent institute?

Yes. It was later annexed to the Museum of Archaeology and Ethnology, the MAE. At the time archaeology was done in three places at USP: at the Institute of Prehistory, the oldest; at the MAE; and in the archaeology section of the Museu Paulista, in Ipiranga. In the late 1980s, the three were merged into one. I worked at the Institute of Prehistory as a researcher from 1980 to 1985. In 1982 I went to Stanford University on a split-site (“sandwich”) doctoral fellowship. I was self-taught, because there was no specialist in this field in Brazil. At the Institute of Prehistory, the material was there and the library was there, but there was no one to supervise me.

Didn't they work on human evolution?

The institute was very small. It had two researchers, who thought they owned the place. When I was hired, another archaeologist, Solange Caldarelli, was hired too. We made a very productive pair. We worked in the interior of São Paulo state on hunter-gatherer groups, in the chronological range of 3,000 to 5,000 years ago. It was with her that I became an archaeologist. My transformation from biologist to physical anthropologist was self-taught. The growth of our research group began to expose the mediocrity of the work done at the Institute of Prehistory and in Brazil. That led to a war between us and the establishment. In 1985 we were expelled from the university.

What do you mean?

Expelled. Summarily dismissed.

What grounds did they give?

None. We had no tenure. Most of the faculty were hired on a temporary basis, and we were kicked out of the Institute of Prehistory by the older staff.

What is the difference between a physical anthropologist and an archaeologist? What do you consider yourself today?

I consider myself an anthropologist and an archaeologist. Actually I consider myself part of a category that exists in the United States and is called evolutionary anthropologist. Even among evolutionary anthropologists, few have a background in physical anthropology, archaeology and sociocultural anthropology. In that sense I have a unique career, one that my colleagues abroad did not understand. I did physical anthropology and biological anthropology and had archaeology projects. When I went to the Amazon, I worked in ecological anthropology. I am one of the few people in the world who has been through every possible kind of anthropology. If on the one hand I am not good at any of them, on the other I have a far more multifaceted understanding of the human being than my colleagues.

Does the archaeologist do the fieldwork while the physical anthropologist waits for the material?

The physical anthropologist can go into the field, but doesn't. He waits for the archaeologists to hand over the material for him to study. I rebelled against that in Brazil. I said: I want to be an archaeologist too. In the United States, in the late 1980s, a field called bioarchaeology took shape, made up of physical anthropologists who could no longer stand depending on archaeologists. Here, independently, I rebelled against that situation. And the dismissal from the institute in 1985 was traumatic because we had seven years of field research and lost it all. Overnight my career was reset to zero. Luckily, by then I had defended my doctorate.

Here?

Here in Biology, but on paleogenetics. I went to Stanford on a six-month sandwich fellowship from CNPq. To support myself at Stanford and Berkeley, I relied on my salary from here, which at the time came to US$ 250, and [Luigi Luca] Cavalli-Sforza, with whom I worked, paid me another US$ 250 at the laboratory.

He is a great researcher, but in population genetics.

People ask me why I did not go to work with a physical anthropologist, given that I was self-taught in osteology. I didn't because what Cavalli-Sforza does is fascinating. He brings together several fields of knowledge. At the time I was enrolled in the master's program in Biology, and my adviser here was [Oswaldo] Frota-Pessoa.

Who is also a geneticist.

Yes, a geneticist, but with a very broad view of the human being. If Frota had not existed, I would not have managed to do the master's. He understood my situation and was very generous. When I was finishing my work at Stanford, Cavalli-Sforza found out that I was doing a master's, not a doctorate. He would look at me and say: “How can you be doing a master's when you already have several publications, coordinate two archaeology projects and have seven students? It makes no sense. I am going to write to Frota-Pessoa suggesting that you go straight to the doctorate.” Today that is common. It was what saved me. I defended my doctorate in December 1984 and, months later, I was dismissed. Solange Caldarelli left so disgusted with academia that she never wanted anything to do with a university career again. I wanted to return to academia. Then three possibilities came up. One was a postdoc at Harvard; another was a postdoc at Pennsylvania State University; and the third was something unexpected. When I was dismissed, I told Frota I was going abroad. I knew my position would always be in conflict with Brazilian archaeology. At that time there was CNPq's integrated genetics program, which was important for the development of genetics in Brazil, and Frota coordinated some traveling courses. So Frota said: “Just when we were going to have a specialist in human evolution, you are leaving. I understand, but I am going to invite you, before you go abroad, to give a traveling course around Brazil on human evolution.” I gave the course at the Federal University of Bahia, the Federal University of Rio Grande do Norte, the Museu Goeldi and the University of Brasília. I was very impressed with the Goeldi. On the last day of the course at the Goeldi, the director wanted to meet me. I told him about my career and that I was leaving for the United States. He asked me: “Is there nothing that could talk you out of this idea?” I said: “Look, Guilherme,” his name is Guilherme de La Penha, “the only thing that would make me stay in Brazil would be the chance to create my own research center, one that could be interdisciplinary and tied to neither anthropology nor archaeology.” And he invited me to create there what was then called the human biology and ecology unit. Something also happened on a personal level that led me to choose Belém.

This was in 1985?

Still in 1985. Shortly before I gave that course around Brazil, I fell deeply in love for the first time. I fell in love with Wagner, the best thing that ever happened in my life. If I went to the United States, I would hardly be able to take him with me. In Belém, it would be easier to find him a job and continue the relationship. That is why I accepted the move to the Goeldi. But I had to step away from skeletons. In the Amazon, the last thing in the world you can do is work with skeletons, because they do not preserve.

What did you do?

I began to devote myself to ecological anthropology.

And what is ecological anthropology?

It studies the adaptations of traditional societies to their environment. Until then, it was an approach that Americans pursued a great deal in the Amazon. Because our anthropology here is eminently structuralist and breaks out in hives at anything biological, this approach never advanced in Brazil. So I thought: “Great, I'll pick another fight. I'll train a first generation in ecological anthropology.” Most of the research on ecological anthropology in the Amazon was done with indigenous groups. So I decided to study the traditional caboclo populations.

You published a book, didn't you?

We published the first major synthesis on caboclo adaptation in the Amazon, which came out here and abroad. I placed students who had worked with me in the Amazon in doctoral programs abroad.

Which conclusions from that synthesis would you highlight?

Studying these traditional Amazonian populations, it became clear that everyone who arrives there, NGOs especially, assumes they have a nutrition problem. They do in fact show a growth deficit relative to international standards. But our work showed that they actually have no deficiency in carbohydrate or protein intake. The problem is parasitic disease.

How did you return to USP?

In 1988, shortly after the move to the Amazon, Wagner was diagnosed with AIDS, and we made a pact. When he reached the terminal stage, we would go back to São Paulo. I came to do a postdoc in anthropology. When Wagner died, in 1992, I no longer wanted to go back to the Amazon, and I applied for two faculty positions.

Were you doing a postdoc in anthropology at USP?

Yes, at the School of Philosophy, Letters and Human Sciences. Then I sat two competitive hiring exams. One was at the Federal University of Santa Catarina, in ecological anthropology, but I wanted to stay in São Paulo. Since in 1989 I had already made the first discovery of what became my model of the occupation of the Americas, I thought: “I have to go somewhere I can devote myself to this and return to focusing on human skeletons.” Then a position opened up here in the department, in the area of evolution. I passed in both places, but chose here. I knew I could create a center for human evolutionary studies that would include archaeology, physical anthropology and ecological anthropology.

How did you come up with the idea of creating an alternative model of the colonization of the Americas?

One day Guilherme de La Penha, the director of the Goeldi, called me in and said: “Look, Walter, a week from now I have to go to a conference in Stockholm on salvage archaeology. I need you to stand in for me.” I said: “Just like that, at such short notice?” Then I remembered that Copenhagen is on the way to Stockholm. I negotiated with him permission to spend about five days in Copenhagen to get to know the Lund collection. I made the trip and not only saw but also measured the Lagoa Santa skulls in the Lund collection. When I got back, I spoke with a researcher from Argentina who was spending some time at the Goeldi, Hector Pucciarelli, my greatest research partner and the most important bioanthropologist in South America. I proposed that we do a little paper with this material. At the time, Niède Guidon's work was starting to appear, with conclusions that seemed crazy to me, such as saying that humans had been in the Americas for 30,000 years. My idea for the paper on the Lund skulls was to show that the first Americans were no different from present-day Amerindians. Well, imagine our faces when we saw that the Lagoa Santa skulls were more similar to Australians and Africans than to Asians. We panicked. We realized we needed a model to explain this.

What did you do then?

Some classic authors from the 1940s and 1950s, such as the French anthropologist Paul Rivet, had already recognized a similarity between the Lagoa Santa material and that of Australia. But Rivet proposed a direct migration from Australia to South America to explain the resemblance. Later, with the advance of indigenous genetics studies, mainly through the work of [Francisco] Salzano, it became clear that all the genetic markers here pointed to Asia. There was no similarity with Australians. We then thought of creating a model that would explore this morphological duality. We did not want to fall into disgrace as Rivet had, so we began studying the peopling of Asia. We found that there, at the end of the Pleistocene, there was also a morphological duality. There were pre-Mongoloids and Mongoloids. Our Lagoa Santa populations resembled the pre-Mongoloids. Present-day Amerindians resemble the Mongoloids. That is where the idea came from that the Americas were occupied by two distinct waves: one with a generalized morphology, similar to Africans and Australians, and another similar to Asians. Our first paper was published in the journal Ciência e Cultura in 1989. From 1991 on we began publishing abroad.

So you formulated this model before examining Luzia's skull.

Ten years before. In Brazil several museums held collections from the Lagoa Santa region. But since I was the enfant gâté of Brazilian archaeology, I was not given access to the collections. That is why I went to study the Lund collection. I only gained access to the collections in Brazil from 1995 onward, when some of the people who had put up barriers died. One of the skulls I was most curious to study was Luzia's.

Did it already have that name?

No. I was the one who gave it. We knew it as the Lapa Vermelha IV skeleton, after the site where it was found. The site was excavated by the Franco-Brazilian mission, coordinated by Madame Annette Emperaire. Luzia's skeleton was found during the 1974 and 1975 field seasons. But Madame Emperaire died unexpectedly. Apart from one article she published, nothing else had been written about Lapa Vermelha.

Did she say in the article that the skull was ancient?

Madame Emperaire thought there were two skeletons at Lapa Vermelha: a more recent one and an older one, dated to more than 12,000 years ago, before the Clovis culture, to which Luzia's skull would have belonged. But André Prous (a French archaeologist who took part in the mission and is now a professor at UFMG) reviewed her notes and realized that the skull belonged to the more recent skeleton, which lay about one meter above. Luzia was not buried; she was laid on the floor of the rock shelter, in a crevice. Prous showed that the skull had rolled and fallen into a hole left by a rotted gameleira (fig tree) root. The skull therefore belonged to those remains, which were in the range of 11,000 years old. Madame Emperaire died believing she had found pre-Clovis evidence in South America, the skull I nicknamed Luzia.

Where was Luzia's skull when you examined it?

It was always at the Museu Nacional in Rio de Janeiro, but the information was not. The museum was the partner institution of the French mission.

Was Luzia's people restricted to Lagoa Santa?

Lagoa Santa is an exceptional case. In the article synthesizing my work, which I published in 2005 in the journal PNAS, we used 81 skulls from the region. To give an idea of how rare skeletons more than 7,000 years old are on our continent, the United States and Canada together have five. We have what we call fossil power when it comes to the question of the origin of humans in the Americas. I also studied some material from other parts of Brazil, from Chile, Mexico and Florida and showed that the pre-Mongoloid morphology was not peculiar to Lagoa Santa. I believe the non-Mongoloids must have entered up north around 14,000 years ago and the Mongoloids around 10,000 or 12,000 years ago. In fact, the Mongoloid morphology in Asia is very recent. I imagine that there cannot be more than 2,000 or 3,000 years between one and the other. But that is pure guesswork.

Are 2,000 or 3,000 years enough to change the phenotype?

They were enough for it to change in Asia. Today it is more or less clear that the Mongoloid morphology is the result of the exposure of populations that left Africa, with a typically African morphology, to the extreme cold of Siberia. My model is not fully accepted by some colleagues, including Argentine ones. They think the process of mongolization occurred in Asia and in the Americas in parallel and independently. We are not going to settle the matter, for lack of samples. But in evolution we always opt for the law of parsimony. You choose the model that involves the fewest evolutionary steps to explain what you found. By the rule of parsimony, my model is better than others that depend on two parallel and independent evolutionary events having occurred. But there is opposition to my model.

From whom?

From geneticists. But I do not think my model can be buried with that kind of data. There is no reason for mitochondrial DNA, for example, to behave evolutionarily in the same way as cranial morphology. Where geneticists see a certain homogeneity from the standpoint of DNA, I may find different phenotypes.

There is also the argument that there was a single migratory wave to the Americas, already made up of a population with both Mongoloid types and non-Mongoloid types like Luzia.

That third possibility exists. But there would have to have been an astonishing rate of genetic drift to explain the colonization that way. Why would one phenotype have disappeared and only the other remained? Of the alternatives to my model, I find this one the weakest.

But how do you explain the disappearance of Luzia's morphology?

Actually, we have discovered in recent years that it did not disappear. When we proposed the model, we thought one population had replaced the other. But in 2003 or 2004 an Argentine colleague showed that a Mexican tribe that lived isolated from the rest of the indigenous groups, in a territory that today belongs to California, kept the non-Mongoloid morphology until the 16th century, when Europeans arrived by sea. We are also finding that the Botocudo Indians of Central Brazil kept this morphology until the 19th century. When you study the ethnography of the Botocudo, you see that they remained hunter-gatherers until the end of the 19th century. They were surrounded by other indigenous groups, with whom they had hostile relations. That was the scenario. A little of the non-Mongoloid morphology survived until recently.

What do you think of the work of the archaeologist Niède Guidon at Serra da Capivara National Park? According to her, humans reached Piauí 50,000, perhaps 100,000 years ago.

But where are the publications? She published a note in Nature in the 1990s and we are still waiting for the publications. Niède and I were mortal enemies for 20 years. A few years ago we smoked the peace pipe. I have been to Piauí a few times and we have even published papers on skeletons from there. Both skull morphologies were present in the park. It is very interesting. I had good training in the analysis of flaked stone industries. Niède opened the entire lithic collection to me and to Astolfo Araujo (now at the MAE). I came away 99.9% convinced that there was a human occupation there more than 30,000 years old. But I have that 0.1% of doubt, which is very significant.

What would it take to put the doubt to rest?

Niède should invite the best international specialists in lithic technology to see the material and publish the results of the analyses. If she is right, we will have to throw out everything we know. My work will have been for nothing. But, thank God, not just mine, everyone's.
