Tim Ingold speaking at LSE, 27 April 2010

Part 2

Part 3

Part 4

Part 5

Part 6

By DENNIS OVERBYE

Published: November 15, 2005

Once it was the left who wanted to redefine science.

In the early 1990’s, writers like the Czech playwright and former president Vaclav Havel and the French philosopher Bruno Latour proclaimed “the end of objectivity.” The laws of science were constructed rather than discovered, some academics said; science was just another way of looking at the world, a servant of corporate and military interests. Everybody had a claim on truth.

The right defended the traditional notion of science back then. Now it is the right that is trying to change it.

On Tuesday, fueled by the popular opposition to the Darwinian theory of evolution, the Kansas State Board of Education stepped into this fraught philosophical territory. In the course of revising the state’s science standards to include criticism of evolution, the board promulgated a new definition of science itself.

The changes in the official state definition are subtle and lawyerly, and involve mainly the removal of two words: “natural explanations.” But they are a red flag to scientists, who say the changes obliterate the distinction between the natural and the supernatural that goes back to Galileo and the foundations of science.

The old definition reads in part, “Science is the human activity of seeking natural explanations for what we observe in the world around us.” The new one calls science “a systematic method of continuing investigation that uses observation, hypothesis testing, measurement, experimentation, logical argument and theory building to lead to more adequate explanations of natural phenomena.”

Adrian Melott, a physics professor at the University of Kansas who has long been fighting Darwin’s opponents, said, “The only reason to take out ‘natural explanations’ is if you want to open the door to supernatural explanations.”

Gerald Holton, a professor of the history of science at Harvard, said removing those two words and the framework they set means “anything goes.”

The authors of these changes say that presuming the laws of science can explain all natural phenomena promotes materialism, secular humanism, atheism and leads to the idea that life is accidental. Indeed, they say in material online at kansasscience2005.com, it may even be unconstitutional to promulgate that attitude in a classroom because it is not ideologically “neutral.”

But many scientists say that characterization is an overstatement of the claims of science. The scientist’s job description, said Steven Weinberg, a physicist and Nobel laureate at the University of Texas, is to search for natural explanations, just as a mechanic looks for mechanical reasons why a car won’t run.

“This doesn’t mean that they commit themselves to the view that this is all there is,” Dr. Weinberg wrote in an e-mail message. “Many scientists (including me) think that this is the case, but other scientists are religious, and believe that what is observed in nature is at least in part a result of God’s will.”

The opposition to evolution, of course, is as old as the theory itself. “This is a very long story,” said Dr. Holton, who attributed its recent prominence to politics and the drive by many religious conservatives to tar science with the brush of materialism.

How long the Kansas changes will last is anyone’s guess. The state board tried to abolish the teaching of evolution and the Big Bang in schools six years ago, only to reverse course in 2001.

As it happened, the Kansas vote last week came on the same day that voters in Dover, Pa., ousted the local school board that had been sued for introducing the teaching of intelligent design.

As Dr. Weinberg noted, scientists and philosophers have been trying to define science, mostly unsuccessfully, for centuries.

When pressed for a definition of what they do, many scientists eventually fall back on the notion of falsifiability propounded by the philosopher Karl Popper. A scientific statement, he said, is one that can be proved wrong, like “the sun always rises in the east” or “light in a vacuum travels 186,000 miles a second.” By Popper’s rules, a law of science can never be proved; it can only be used to make a prediction that can be tested, with the possibility of being proved wrong.

But the rules get fuzzy in practice. For example, what is the role of intuition in analyzing a foggy set of data points? James Robert Brown, a philosopher of science at the University of Toronto, said in an e-mail message: “It’s the widespread belief that so-called scientific method is a clear, well-understood thing. Not so.” It is learned by doing, he added, and for that good examples and teachers are needed.

One thing scientists agree on, though, is that the requirement of testability excludes supernatural explanations. The supernatural, by definition, does not have to follow any rules or regularities, so it cannot be tested. “The only claim regularly made by the pro-science side is that supernatural explanations are empty,” Dr. Brown said.

The redefinition by the Kansas board will have nothing to do with how science is performed, in Kansas or anywhere else. But Dr. Holton said that if more states changed their standards, it could complicate the lives of science teachers and students around the nation.

He added that Galileo – who started it all, and paid the price – had “a wonderful way” of separating the supernatural from the natural. There are two equally worthy ways to understand the divine, Galileo said. “One was reverent contemplation of the Bible, God’s word,” Dr. Holton said. “The other was through scientific contemplation of the world, which is his creation.

“That is the view that I hope the Kansas school board would have adopted.”

Boston Review – SEPTEMBER/OCTOBER 2011

A San Francisco mural depicting Archbishop Óscar Romero / Photograph: Franco Folini

Since we often cannot see what is happening before our eyes, it is perhaps not too surprising that what is at a slight distance removed is utterly invisible. We have just witnessed an instructive example: President Obama’s dispatch of 79 commandos into Pakistan on May 1 to carry out what was evidently a planned assassination of the prime suspect in the terrorist atrocities of 9/11, Osama bin Laden. Though the target of the operation, unarmed and with no protection, could easily have been apprehended, he was simply murdered, his body dumped at sea without autopsy. The action was deemed “just and necessary” in the liberal press. There will be no trial, as there was in the case of Nazi criminals—a fact not overlooked by legal authorities abroad who approve of the operation but object to the procedure. As Elaine Scarry reminds us, the prohibition of assassination in international law traces back to a forceful denunciation of the practice by Abraham Lincoln, who condemned the call for assassination as “international outlawry” in 1863, an “outrage,” which “civilized nations” view with “horror” and merits the “sternest retaliation.”

In 1967, writing about the deceit and distortion surrounding the American invasion of Vietnam, I discussed the responsibility of intellectuals, borrowing the phrase from an important essay of Dwight Macdonald’s after World War II. With the tenth anniversary of 9/11 arriving, and widespread approval in the United States of the assassination of the chief suspect, it seems a fitting time to revisit that issue. But before thinking about the responsibility of intellectuals, it is worth clarifying to whom we are referring.

The concept of intellectuals in the modern sense gained prominence with the 1898 “Manifesto of the Intellectuals” produced by the Dreyfusards who, inspired by Emile Zola’s open letter of protest to France’s president, condemned both the framing of French artillery officer Alfred Dreyfus on charges of treason and the subsequent military cover-up. The Dreyfusards’ stance conveys the image of intellectuals as defenders of justice, confronting power with courage and integrity. But they were hardly seen that way at the time. A minority of the educated classes, the Dreyfusards were bitterly condemned in the mainstream of intellectual life, in particular by prominent figures among “the immortals of the strongly anti-Dreyfusard Académie Française,” Steven Lukes writes. To the novelist, politician, and anti-Dreyfusard leader Maurice Barrès, Dreyfusards were “anarchists of the lecture-platform.” To another of these immortals, Ferdinand Brunetière, the very word “intellectual” signified “one of the most ridiculous eccentricities of our time—I mean the pretension of raising writers, scientists, professors and philologists to the rank of supermen,” who dare to “treat our generals as idiots, our social institutions as absurd and our traditions as unhealthy.”

Who then were the intellectuals? The minority inspired by Zola (who was sentenced to jail for libel, and fled the country)? Or the immortals of the academy? The question resonates through the ages, in one or another form, and today offers a framework for determining the “responsibility of intellectuals.” The phrase is ambiguous: does it refer to intellectuals’ moral responsibility as decent human beings in a position to use their privilege and status to advance the causes of freedom, justice, mercy, peace, and other such sentimental concerns? Or does it refer to the role they are expected to play, serving, not derogating, leadership and established institutions?

One answer came during World War I, when prominent intellectuals on all sides lined up enthusiastically in support of their own states.

In their “Manifesto of 93 German Intellectuals,” leading figures in one of the world’s most enlightened states called on the West to “have faith in us! Believe, that we shall carry on this war to the end as a civilized nation, to whom the legacy of a Goethe, a Beethoven, and a Kant, is just as sacred as its own hearths and homes.” Their counterparts on the other side of the intellectual trenches matched them in enthusiasm for the noble cause, but went beyond in self-adulation. In The New Republic they proclaimed, “The effective and decisive work on behalf of the war has been accomplished by . . . a class which must be comprehensively but loosely described as the ‘intellectuals.’” These progressives believed they were ensuring that the United States entered the war “under the influence of a moral verdict reached, after the utmost deliberation by the more thoughtful members of the community.” They were, in fact, the victims of concoctions of the British Ministry of Information, which secretly sought “to direct the thought of most of the world,” but particularly the thought of American progressive intellectuals who might help to whip a pacifist country into war fever.

John Dewey was impressed by the great “psychological and educational lesson” of the war, which proved that human beings—more precisely, “the intelligent men of the community”—can “take hold of human affairs and manage them . . . deliberately and intelligently” to achieve the ends sought, admirable by definition.

Not everyone toed the line so obediently, of course. Notable figures such as Bertrand Russell, Eugene Debs, Rosa Luxemburg, and Karl Liebknecht were, like Zola, sentenced to prison. Debs was punished with particular severity: a ten-year prison term for raising questions about President Wilson’s “war for democracy and human rights.” Wilson refused him amnesty after the war ended, though Harding finally relented. Some, such as Thorstein Veblen, were chastised but treated less harshly; Veblen was fired from his position in the Food Administration after preparing a report showing that the shortage of farm labor could be overcome by ending Wilson’s brutal persecution of labor, specifically the Industrial Workers of the World. Randolph Bourne was dropped by the progressive journals after criticizing the “league of benevolently imperialistic nations” and their exalted endeavors.

The pattern of praise and punishment is a familiar one throughout history: those who line up in the service of the state are typically praised by the general intellectual community, and those who refuse to line up in service of the state are punished. Thus in retrospect Wilson and the progressive intellectuals who offered him their services are greatly honored, but not Debs. Luxemburg and Liebknecht were murdered and have hardly been heroes of the intellectual mainstream. Russell continued to be bitterly condemned until after his death—and in current biographies still is.

In the 1970s prominent scholars distinguished the two categories of intellectuals more explicitly. A 1975 study, The Crisis of Democracy, labeled Brunetière’s ridiculous eccentrics “value-oriented intellectuals” who pose a “challenge to democratic government which is, potentially at least, as serious as those posed in the past by aristocratic cliques, fascist movements, and communist parties.” Among other misdeeds, these dangerous creatures “devote themselves to the derogation of leadership, the challenging of authority,” and they challenge the institutions responsible for “the indoctrination of the young.” Some even sink to the depths of questioning the nobility of war aims, as Bourne had. This castigation of the miscreants who question authority and the established order was delivered by the scholars of the liberal internationalist Trilateral Commission; the Carter administration was largely drawn from their ranks.

Like The New Republic progressives during World War I, the authors of The Crisis of Democracy extend the concept of the “intellectual” beyond Brunetière’s ridiculous eccentrics to include the better sort as well: the “technocratic and policy-oriented intellectuals,” responsible and serious thinkers who devote themselves to the constructive work of shaping policy within established institutions and to ensuring that indoctrination of the young proceeds on course.

It took Dewey only a few years to shift from the responsible technocratic and policy-oriented intellectual of World War I to an anarchist of the lecture-platform, as he denounced the “un-free press” and questioned “how far genuine intellectual freedom and social responsibility are possible on any large scale under the existing economic regime.”

What particularly troubled the Trilateral scholars was the “excess of democracy” during the time of troubles, the 1960s, when normally passive and apathetic parts of the population entered the political arena to advance their concerns: minorities, women, the young, the old, working people . . . in short, the population, sometimes called the “special interests.” They are to be distinguished from those whom Adam Smith called the “masters of mankind,” who are “the principal architects” of government policy and pursue their “vile maxim”: “All for ourselves and nothing for other people.” The role of the masters in the political arena is not deplored, or discussed, in the Trilateral volume, presumably because the masters represent “the national interest,” like those who applauded themselves for leading the country to war “after the utmost deliberation by the more thoughtful members of the community” had reached its “moral verdict.”

To overcome the excessive burden imposed on the state by the special interests, the Trilateralists called for more “moderation in democracy,” a return to passivity on the part of the less deserving, perhaps even a return to the happy days when “Truman had been able to govern the country with the cooperation of a relatively small number of Wall Street lawyers and bankers,” and democracy therefore flourished.

The Trilateralists could well have claimed to be adhering to the original intent of the Constitution, “intrinsically an aristocratic document designed to check the democratic tendencies of the period” by delivering power to a “better sort” of people and barring “those who were not rich, well born, or prominent from exercising political power,” in the accurate words of the historian Gordon Wood. In Madison’s defense, however, we should recognize that his mentality was pre-capitalist. In determining that power should be in the hands of “the wealth of the nation,” “the more capable set of men,” he envisioned those men on the model of the “enlightened Statesmen” and “benevolent philosopher” of the imagined Roman world. They would be “pure and noble,” “men of intelligence, patriotism, property, and independent circumstances” “whose wisdom may best discern the true interest of their country, and whose patriotism and love of justice will be least likely to sacrifice it to temporary or partial considerations.” So endowed, these men would “refine and enlarge the public views,” guarding the public interest against the “mischiefs” of democratic majorities. In a similar vein, the progressive Wilsonian intellectuals might have taken comfort in the discoveries of the behavioral sciences, explained in 1939 by the psychologist and education theorist Edward Thorndike:

It is the great good fortune of mankind that there is a substantial correlation between intelligence and morality including good will toward one’s fellows . . . . Consequently our superiors in ability are on the average our benefactors, and it is often safer to trust our interests to them than to ourselves.

A comforting doctrine, though some might feel that Adam Smith had the sharper eye.

Since power tends to prevail, intellectuals who serve their governments are considered responsible, and value-oriented intellectuals are dismissed or denigrated. At home, that is.

With regard to enemies, the distinction between the two categories of intellectuals is retained, but with values reversed. In the old Soviet Union, the value-oriented intellectuals were the honored dissidents, while we had only contempt for the apparatchiks and commissars, the technocratic and policy-oriented intellectuals. Similarly in Iran we honor the courageous dissidents and condemn those who defend the clerical establishment. And elsewhere generally.

The honorable term “dissident” is used selectively. It does not, of course, apply, with its favorable connotations, to value-oriented intellectuals at home or to those who combat U.S.-supported tyranny abroad. Take the interesting case of Nelson Mandela, who was removed from the official terrorist list in 2008, and can now travel to the United States without special authorization.

Father Ignacio Ellacuría / Photograph: Gervasio Sánchez

Twenty years earlier, he was the criminal leader of one of the world’s “more notorious terrorist groups,” according to a Pentagon report. That is why President Reagan had to support the apartheid regime, increasing trade with South Africa in violation of congressional sanctions and supporting South Africa’s depredations in neighboring countries, which led, according to a UN study, to 1.5 million deaths. That was only one episode in the war on terrorism that Reagan declared to combat “the plague of the modern age,” or, as Secretary of State George Shultz had it, “a return to barbarism in the modern age.” We may add hundreds of thousands of corpses in Central America and tens of thousands more in the Middle East, among other achievements. Small wonder that the Great Communicator is worshipped by Hoover Institution scholars as a colossus whose “spirit seems to stride the country, watching us like a warm and friendly ghost,” recently honored further by a statue that defaces the American Embassy in London.

The Latin American case is revealing. Those who called for freedom and justice in Latin America are not admitted to the pantheon of honored dissidents. For example, a week after the fall of the Berlin Wall, six leading Latin American intellectuals, all Jesuit priests, had their heads blown off on the direct orders of the Salvadoran high command. The perpetrators were from an elite battalion armed and trained by Washington that had already left a gruesome trail of blood and terror, and had just returned from renewed training at the John F. Kennedy Special Warfare Center and School at Fort Bragg, North Carolina. The murdered priests are not commemorated as honored dissidents, nor are others like them throughout the hemisphere. Honored dissidents are those who called for freedom in enemy domains in Eastern Europe, who certainly suffered, but not remotely like their counterparts in Latin America.

The distinction is worth examination, and tells us a lot about the two senses of the phrase “responsibility of intellectuals,” and about ourselves. It is not seriously in question, as John Coatsworth writes in the recently published Cambridge University History of the Cold War, that from 1960 to “the Soviet collapse in 1990, the numbers of political prisoners, torture victims, and executions of nonviolent political dissenters in Latin America vastly exceeded those in the Soviet Union and its East European satellites.” Among the executed were many religious martyrs, and there were mass slaughters as well, consistently supported or initiated by Washington.

Why then the distinction? It might be argued that what happened in Eastern Europe is far more momentous than the fate of the South at our hands. It would be interesting to see the argument spelled out. And also to see the argument explaining why we should disregard elementary moral principles, among them that if we are serious about suffering and atrocities, about justice and rights, we will focus our efforts on where we can do the most good—typically, where we share responsibility for what is being done. We have no difficulty demanding that our enemies follow such principles.

Few of us care, or should, what Andrei Sakharov or Shirin Ebadi say about U.S. or Israeli crimes; we admire them for what they say and do about those of their own states, and the conclusion holds far more strongly for those who live in more free and democratic societies, and therefore have far greater opportunities to act effectively. It is of some interest that in the most respected circles, practice is virtually the opposite of what elementary moral values dictate.

But let us conform and keep only to the matter of historical import.

The U.S. wars in Latin America from 1960 to 1990, quite apart from their horrors, have long-term historical significance. To consider just one important aspect, in no small measure they were wars against the Church, undertaken to crush a terrible heresy proclaimed at Vatican II in 1962, which, under the leadership of Pope John XXIII, “ushered in a new era in the history of the Catholic Church,” in the words of the distinguished theologian Hans Küng, restoring the teachings of the gospels that had been put to rest in the fourth century when the Emperor Constantine established Christianity as the religion of the Roman Empire, instituting “a revolution” that converted “the persecuted church” to a “persecuting church.” The heresy of Vatican II was taken up by Latin American bishops who adopted the “preferential option for the poor.” Priests, nuns, and laypersons then brought the radical pacifist message of the gospels to the poor, helping them organize to ameliorate their bitter fate in the domains of U.S. power.

That same year, 1962, President Kennedy made several critical decisions. One was to shift the mission of the militaries of Latin America from “hemispheric defense”—an anachronism from World War II—to “internal security,” in effect, war against the domestic population, if they raise their heads. Charles Maechling, who led U.S. counterinsurgency and internal defense planning from 1961 to 1966, describes the unsurprising consequences of the 1962 decision as a shift from toleration “of the rapacity and cruelty of the Latin American military” to “direct complicity” in their crimes to U.S. support for “the methods of Heinrich Himmler’s extermination squads.” One major initiative was a military coup in Brazil, planned in Washington and implemented shortly after Kennedy’s assassination, instituting a murderous and brutal national security state. The plague of repression then spread through the hemisphere, including the 1973 coup installing the Pinochet dictatorship, and later the most vicious of all, the Argentine dictatorship, Reagan’s favorite. Central America’s turn—not for the first time—came in the 1980s under the leadership of the “warm and friendly ghost” who is now revered for his achievements.

The murder of the Jesuit intellectuals as the Berlin wall fell was a final blow in defeating the heresy, culminating a decade of horror in El Salvador that opened with the assassination, by much the same hands, of Archbishop Óscar Romero, the “voice for the voiceless.” The victors in the war against the Church declare their responsibility with pride. The School of the Americas (since renamed), famous for its training of Latin American killers, announces as one of its “talking points” that the liberation theology that was initiated at Vatican II was “defeated with the assistance of the US army.”

Actually, the November 1989 assassinations were almost a final blow. More was needed.

A year later Haiti had its first free election, and to the surprise and shock of Washington, which like others had anticipated the easy victory of its own candidate from the privileged elite, the organized public in the slums and hills elected Jean-Bertrand Aristide, a popular priest committed to liberation theology. The United States at once moved to undermine the elected government, and after the military coup that overthrew it a few months later, lent substantial support to the vicious military junta and its elite supporters. Trade was increased in violation of international sanctions and increased further under Clinton, who also authorized the Texaco oil company to supply the murderous rulers, in defiance of his own directives.

I will skip the disgraceful aftermath, amply reviewed elsewhere, except to point out that in 2004, the two traditional torturers of Haiti, France and the United States, joined by Canada, forcefully intervened, kidnapped President Aristide (who had been elected again), and shipped him off to central Africa. He and his party were effectively barred from the farcical 2010–11 elections, the most recent episode in a horrendous history that goes back hundreds of years and is barely known among the perpetrators of the crimes, who prefer tales of dedicated efforts to save the suffering people from their grim fate.

Another fateful Kennedy decision in 1962 was to send a special forces mission to Colombia, led by General William Yarborough, who advised the Colombian security forces to undertake “paramilitary, sabotage and/or terrorist activities against known communist proponents,” activities that “should be backed by the United States.” The meaning of the phrase “communist proponents” was spelled out by the respected president of the Colombian Permanent Committee for Human Rights, former Minister of Foreign Affairs Alfredo Vázquez Carrizosa, who wrote that the Kennedy administration “took great pains to transform our regular armies into counterinsurgency brigades, accepting the new strategy of the death squads,” ushering in

what is known in Latin America as the National Security Doctrine. . . . [not] defense against an external enemy, but a way to make the military establishment the masters of the game . . . [with] the right to combat the internal enemy, as set forth in the Brazilian doctrine, the Argentine doctrine, the Uruguayan doctrine, and the Colombian doctrine: it is the right to fight and to exterminate social workers, trade unionists, men and women who are not supportive of the establishment, and who are assumed to be communist extremists. And this could mean anyone, including human rights activists such as myself.

In a 1980 study, Lars Schoultz, the leading U.S. academic specialist on human rights in Latin America, found that U.S. aid “has tended to flow disproportionately to Latin American governments which torture their citizens . . . to the hemisphere’s relatively egregious violators of fundamental human rights.” That included military aid, was independent of need, and continued through the Carter years. Ever since the Reagan administration, it has been superfluous to carry out such a study. In the 1980s one of the most notorious violators was El Salvador, which accordingly became the leading recipient of U.S. military aid, to be replaced by Colombia when it took the lead as the worst violator of human rights in the hemisphere. Vázquez Carrizosa himself was living under heavy guard in his Bogotá residence when I visited him there in 2002 as part of a mission of Amnesty International, which was opening its year-long campaign to protect human rights defenders in Colombia because of the country’s horrifying record of attacks against human rights and labor activists, and mostly the usual victims of state terror: the poor and defenseless. Terror and torture in Colombia were supplemented by chemical warfare (“fumigation”), under the pretext of the war on drugs, leading to huge flight to urban slums and misery for the survivors. Colombia’s attorney general’s office now estimates that more than 140,000 people have been killed by paramilitaries, often acting in close collaboration with the U.S.-funded military.

Signs of the slaughter are everywhere. On a nearly impassable dirt road to a remote village in southern Colombia a year ago, my companions and I passed a small clearing with many simple crosses marking the graves of victims of a paramilitary attack on a local bus. Reports of the killings are graphic enough; spending a little time with the survivors, who are among the kindest and most compassionate people I have ever had the privilege of meeting, makes the picture more vivid, and only more painful.

This is the briefest sketch of terrible crimes for which Americans bear substantial culpability, and that we could easily ameliorate, at the very least.

But it is more gratifying to bask in praise for courageously protesting the abuses of official enemies, a fine activity, but not the priority of a value-oriented intellectual who takes the responsibilities of that stance seriously.

The victims within our domains, unlike those in enemy states, are not merely ignored and quickly forgotten, but are also cynically insulted. One striking illustration came a few weeks after the murder of the Latin American intellectuals in El Salvador. Vaclav Havel visited Washington and addressed a joint session of Congress. Before his enraptured audience, Havel lauded the “defenders of freedom” in Washington who “understood the responsibility that flowed from” being “the most powerful nation on earth”—crucially, their responsibility for the brutal assassination of his Salvadoran counterparts shortly before.

The liberal intellectual class was enthralled by his presentation. Havel reminds us that “we live in a romantic age,” Anthony Lewis gushed. Other prominent liberal commentators reveled in Havel’s “idealism, his irony, his humanity,” as he “preached a difficult doctrine of individual responsibility” while Congress “obviously ached with respect” for his genius and integrity; and asked why America lacks intellectuals so profound, who “elevate morality over self-interest” in this way, praising us for the tortured and mutilated corpses that litter the countries that we have left in misery. We need not tarry on what the reaction would have been had Father Ellacuría, the most prominent of the murdered Jesuit intellectuals, spoken such words at the Duma after elite forces armed and trained by the Soviet Union assassinated Havel and half a dozen of his associates—a performance that is inconceivable.

John Dewey / Photograph: New York Public Library / Photoresearchers, Inc.

The assassination of bin Laden, too, directs our attention to our insulted victims. There is much more to say about the operation—including Washington’s willingness to face a serious risk of major war and even leakage of fissile materials to jihadis, as I have discussed elsewhere—but let us keep to the choice of name: Operation Geronimo. The name caused outrage in Mexico and was protested by indigenous groups in the United States, but there seems to have been no further notice of the fact that Obama was identifying bin Laden with the Apache Indian chief. Geronimo led the courageous resistance to invaders who sought to consign his people to the fate of “that hapless race of native Americans, which we are exterminating with such merciless and perfidious cruelty, among the heinous sins of this nation, for which I believe God will one day bring [it] to judgement,” in the words of the grand strategist John Quincy Adams, the intellectual architect of manifest destiny, uttered long after his own contributions to these sins. The casual choice of the name is reminiscent of the ease with which we name our murder weapons after victims of our crimes: Apache, Blackhawk, Cheyenne . . . We might react differently if the Luftwaffe were to call its fighter planes “Jew” and “Gypsy.”

Denial of these “heinous sins” is sometimes explicit. To mention a few recent cases, two years ago in one of the world’s leading left-liberal intellectual journals, The New York Review of Books, Russell Baker outlined what he learned from the work of the “heroic historian” Edmund Morgan: namely, that when Columbus and the early explorers arrived they “found a continental vastness sparsely populated by farming and hunting people . . . . In the limitless and unspoiled world stretching from tropical jungle to the frozen north, there may have been scarcely more than a million inhabitants.” The calculation is off by many tens of millions, and the “vastness” included advanced civilizations throughout the continent. No reactions appeared, though four months later the editors issued a correction, noting that in North America there may have been as many as 18 million people—and, unmentioned, tens of millions more “from tropical jungle to the frozen north.” This was all well known decades ago—including the advanced civilizations and the “merciless and perfidious cruelty” of the “extermination”—but not important enough even for a casual phrase. In the London Review of Books a year later, the noted historian Mark Mazower mentioned American “mistreatment of the Native Americans,” again eliciting no comment. Would we accept the word “mistreatment” for comparable crimes committed by enemies?

If the responsibility of intellectuals refers to their moral responsibility as decent human beings in a position to use their privilege and status to advance the cause of freedom, justice, mercy, and peace—and to speak out not simply about the abuses of our enemies, but, far more significantly, about the crimes in which we are implicated and can ameliorate or terminate if we choose—how should we think of 9/11?

The notion that 9/11 “changed the world” is widely held, understandably. The events of that day certainly had major consequences, domestic and international. One was to lead President Bush to re-declare Ronald Reagan’s war on terrorism—the first one has been effectively “disappeared,” to borrow the phrase of our favorite Latin American killers and torturers, presumably because the consequences do not fit well with preferred self-images. Another consequence was the invasion of Afghanistan, then Iraq, and more recently military interventions in several other countries in the region and regular threats of an attack on Iran (“all options are open,” in the standard phrase). The costs, in every dimension, have been enormous. That suggests a rather obvious question, not asked for the first time: was there an alternative?

A number of analysts have observed that bin Laden won major successes in his war against the United States. “He repeatedly asserted that the only way to drive the U.S. from the Muslim world and defeat its satraps was by drawing Americans into a series of small but expensive wars that would ultimately bankrupt them,” the journalist Eric Margolis writes.

The United States, first under George W. Bush and then Barack Obama, rushed right into bin Laden’s trap. . . . Grotesquely overblown military outlays and debt addiction . . . . may be the most pernicious legacy of the man who thought he could defeat the United States.

A report from the Costs of War project at Brown University’s Watson Institute for International Studies estimates that the final bill will be $3.2–4 trillion. Quite an impressive achievement by bin Laden.

That Washington was intent on rushing into bin Laden’s trap was evident at once. Michael Scheuer, the senior CIA analyst responsible for tracking bin Laden from 1996 to 1999, writes, “Bin Laden has been precise in telling America the reasons he is waging war on us.” The al Qaeda leader, Scheuer continues, “is out to drastically alter U.S. and Western policies toward the Islamic world.”

And, as Scheuer explains, bin Laden largely succeeded: “U.S. forces and policies are completing the radicalization of the Islamic world, something Osama bin Laden has been trying to do with substantial but incomplete success since the early 1990s. As a result, I think it is fair to conclude that the United States of America remains bin Laden’s only indispensable ally.” And arguably remains so, even after his death.

There is good reason to believe that the jihadi movement could have been split and undermined after the 9/11 attack, which was criticized harshly within the movement. Furthermore, the “crime against humanity,” as it was rightly called, could have been approached as a crime, with an international operation to apprehend the likely suspects. That was recognized in the immediate aftermath of the attack, but no such idea was even considered by decision-makers in government. It seems no thought was given to the Taliban’s tentative offer—how serious an offer, we cannot know—to present the al Qaeda leaders for a judicial proceeding.

At the time, I quoted Robert Fisk’s conclusion that the horrendous crime of 9/11 was committed with “wickedness and awesome cruelty”—an accurate judgment. The crimes could have been even worse. Suppose that Flight 93, downed by courageous passengers in Pennsylvania, had bombed the White House, killing the president. Suppose that the perpetrators of the crime planned to, and did, impose a military dictatorship that killed thousands and tortured tens of thousands. Suppose the new dictatorship established, with the support of the criminals, an international terror center that helped impose similar torture-and-terror states elsewhere, and, as icing on the cake, brought in a team of economists—call them “the Kandahar boys”—who quickly drove the economy into one of the worst depressions in its history. That, plainly, would have been a lot worse than 9/11.

As we all should know, this is not a thought experiment. It happened. I am, of course, referring to what in Latin America is often called “the first 9/11”: September 11, 1973, when the United States succeeded in its intensive efforts to overthrow the democratic government of Salvador Allende in Chile with a military coup that placed General Pinochet’s ghastly regime in office. The dictatorship then installed the Chicago Boys—economists trained at the University of Chicago—to reshape Chile’s economy. Consider the economic destruction, the torture and kidnappings, and multiply the numbers killed by 25 to yield per capita equivalents, and you will see just how much more devastating the first 9/11 was.

The goal of the overthrow, in the words of the Nixon administration, was to kill the “virus” that might encourage all those “foreigners [who] are out to screw us”—screw us by trying to take over their own resources and more generally to pursue a policy of independent development along lines disliked by Washington. In the background was the conclusion of Nixon’s National Security Council that if the United States could not control Latin America, it could not expect “to achieve a successful order elsewhere in the world.” Washington’s “credibility” would be undermined, as Henry Kissinger put it.

The first 9/11, unlike the second, did not change the world. It was “nothing of very great consequence,” Kissinger assured his boss a few days later. And judging by how it figures in conventional history, his words can hardly be faulted, though the survivors may see the matter differently.

These events of little consequence were not limited to the military coup that destroyed Chilean democracy and set in motion the horror story that followed. As already discussed, the first 9/11 was just one act in the drama that began in 1962 when Kennedy shifted the mission of the Latin American militaries to “internal security.” The shattering aftermath is also of little consequence, the familiar pattern when history is guarded by responsible intellectuals.

It seems to be close to a historical universal that conformist intellectuals, the ones who support official aims and ignore or rationalize official crimes, are honored and privileged in their own societies, and the value-oriented punished in one or another way. The pattern goes back to the earliest records. It was the man accused of corrupting the youth of Athens who drank the hemlock, much as Dreyfusards were accused of “corrupting souls, and, in due course, society as a whole” and the value-oriented intellectuals of the 1960s were charged with interference with “indoctrination of the young.”

In the Hebrew scriptures there are figures who by contemporary standards are dissident intellectuals, called “prophets” in the English translation. They bitterly angered the establishment with their critical geopolitical analysis, their condemnation of the crimes of the powerful, their calls for justice and concern for the poor and suffering. King Ahab, the most evil of the kings, denounced the Prophet Elijah as a hater of Israel, the first “self-hating Jew” or “anti-American” in the modern counterparts. The prophets were treated harshly, unlike the flatterers at the court, who were later condemned as false prophets. The pattern is understandable. It would be surprising if it were otherwise.

As for the responsibility of intellectuals, there does not seem to me to be much to say beyond some simple truths. Intellectuals are typically privileged—merely an observation about usage of the term. Privilege yields opportunity, and opportunity confers responsibilities. An individual then has choices.

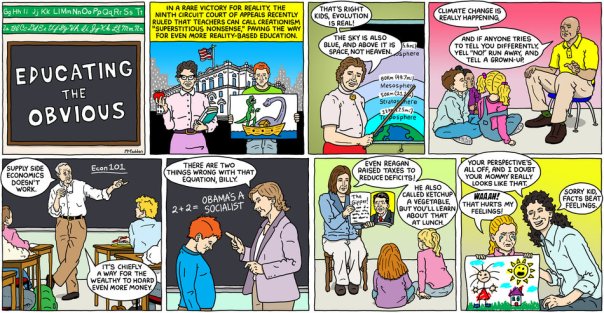

By BRIAN McFADDEN. Published: August 27, 2011

JC e-mail 4331, August 26, 2011.

The Prova ABC assessed the performance of newly literate students; results were better in reading, where 56.1% showed command of the language.

Results of a test given to six thousand students in all state capitals and the Federal District show that 57.2% of students in the 3rd year of elementary school (the former 2nd grade) cannot solve basic math problems such as addition or subtraction. The first of its kind in the country, the Prova ABC also assessed learning in reading and writing. “The difficulty comes when they have to ‘carry the one,’” Professor Rubem Klein of Fundação Cesgranrio explained yesterday in São Paulo at the release of the results, referring to the addition of numbers greater than ten.

The Prova ABC, or Avaliação Brasileira do Final do Ciclo de Alfabetização (Brazilian Assessment of the End of the Literacy Cycle), was carried out by the Todos Pela Educação movement in partnership with the Instituto Nacional de Estudos e Pesquisas Educacionais Anísio Teixeira (Inep), Fundação Cesgranrio, and Instituto Paulo Montenegro/Ibope. The test was given early this year to students at 250 schools, in proportion to each school network (private, state, and municipal).

In math, the national share of third-year (2nd grade) students who learned what was expected was 42.8%, which means that 57.2% do not know the minimum appropriate for this stage of learning. Private schools averaged 74.3% and public schools only 32.6%, a difference of 41.7 percentage points.

“We have to take into account that teachers in these early grades are trained in Pedagogy, a degree program that attracts people from lower classes who did not have a good grounding in mathematics. It is a vicious cycle that needs to be broken,” said Professor Paulo Horta of Inep.

Reading test: 43.9% did not learn enough. The national average on the reading test was 56.1%. That means the remainder, 43.9% of students, did not learn enough. The share who learned what was expected reached 79% in private schools. In public schools it was 48.6%.

In writing, the national share of students who learned what was expected fell to 53.4%, meaning that 46.6% did not achieve adequate learning. In private schools the rate was 82.4%; in public schools, 43.9%. “Even with a better rate in private schools, which are what this new middle class aspires to for its children, they did not reach 100%. And 100% means only what children are expected to have learned. Overall, the Prova ABC showed that children attending the first three years of school are not being guaranteed their basic right to learning,” said Priscila Cruz, executive director of Todos Pela Educação.

As with other indicators of Brazilian education, students in the South and Southeast outperformed children in the North and Northeast on the Prova ABC, which follows the model of the Sistema de Avaliação da Educação Básica (Saeb), the Basic Education Assessment System. The national average score in math was 171.07 (the target was 175), but it reached 185.64 in the South and 179.06 in the Southeast. It was much lower in the North (152.62) and the Northeast (158.19).

The regional pattern was no different in the reading test, measured on the Saeb scale with its 175-point benchmark. In the South the average was 197.93, while in the Northeast it reached 167.37, a difference of about 30 points. In the Center-West the score was 196.57, in the Southeast 193.57, and in the North 172.78.

On the writing test, where the average score for a level of learning considered successful is 75 on a scale of 0 to 100, the Southeast reached an average of 77.2, a difference of 27 points from the Northeast’s 50.2.

Average in private schools was 211; in public schools, 158. The Prova ABC methodology uses the same Saeb scale that feeds into the Índice de Desenvolvimento da Educação Básica (Ideb), the Basic Education Development Index, which is the country’s main indicator of education quality. On this test, as in Saeb, students needed a score of 175 points for their learning to be considered sufficient for the third year (or second grade). On the writing test, however, which departs from the Saeb standard, the average score considered good performance was 75.

By score, the national average in math was 171.1 (211.2 for private schools and 158 for public schools). On the reading test, the national average was 185.8 (216.7 for private schools and 175.8 for public schools). On the writing test, which uses a different scale, the average score was 68.1 (86.2 in the private network and 62.3 in the public one).

In math, to reach 175 points, children had to demonstrate command of addition and subtraction by solving problems involving, for example, bills and coins. On the reading test, students had to identify the themes of a narrative, identify the traits of characters in texts such as legends, fables, and comic strips, and recognize cause-and-effect relationships in narratives. The writing test required three competencies: fit to theme and genre, cohesion and coherence, and written conventions (spelling, grammar, punctuation, and word segmentation).

Science 5 August 2011: Vol. 333 no. 6043 pp. 688-689 DOI: 10.1126/science.333.6043.688

SCIENCE EDUCATION

Sara Reardon

The U.S. political debate over climate change is seeping into K-12 science classrooms, and teachers are feeling the heat.

Growth potential. Students gather acorns for a middle school science project. CREDIT: JEFF CASALE/AP IMAGES

The topic, the board decided, is a “controversial issue.” Its next step was a new policy requiring teachers to explain to the school board how they are handling such topics in class in a “balanced” fashion. And the new environmental science course, which starts this fall, will be the first affected.

Local teachers immediately deplored the board’s actions. “It’s very difficult when we, as science teachers, are just trying to present scientific facts,” says Kathryn Currie, head of the high school’s science department. And science educators around the country say such attacks are becoming all too familiar. They see climate science now joining evolution as an inviting target for those who accuse “liberal” teachers of forcing their “beliefs” upon a captive audience of impressionable children.

“Evolution is still the big one, but climate change is catching up,” says Roberta Johnson, executive director of the National Earth Science Teachers Association (NESTA) in Boulder, Colorado. An informal survey this spring of 800 NESTA members (see word cloud) found that climate change was second only to evolution in triggering protests from parents and school administrators. One teacher reported being told by school administrators not to teach climate change after a parent threatened to come to class and make a scene. Online message boards for science teachers tell similar tales.

Hot topic. Teachers can bone up on climate science in workshops and classes. CREDIT: SOURCE: ROBERTA KILLEEN JOHNSON, NATIONAL EARTH SCIENCE TEACHERS ASSOCIATION

Most science teachers don’t relish having to engage this latest threat to their profession. “They want to teach the science,” says Susan Buhr, education director at the Cooperative Institute for Research in Environmental Sciences (CIRES) in Boulder. “They’re struggling to be on top of the science in the first place.”

CIRES and NESTA offer workshops and online resources for educators seeking more information on climate change. But teachers also say that they resent devoting any of their precious classroom time to a discussion of an alleged “controversy.” And they believe that politics has no place in a science classroom.

Even so, some are being dragged against their will into a conflict they fear could turn ugly. “There seems to be a lynch-mob hate against any teacher trying to teach climate change,” says Andrew Milbauer, an environmental sciences teacher at Conserve School, a private boarding school in Land O’Lakes, Wisconsin.

Milbauer felt that wrath after receiving an invitation to participate in a public debate about climate change. The event, put on last year by Tea Party activists, proposed to pit high school teachers against professors and climate change deniers David Legates and Willie Soon in front of students from 200 high schools. Organizers said the format was designed “to expand knowledge of the global warming debate to the youth of our state.” When Milbauer and his colleagues declined to participate, organizer Kim Simac complained to the local papers about their “suspicious” behavior. Milbauer corresponded for a time on the organization’s blog until Simac wrote that Milbauer, “in his role as science teacher, is passing on to our youth this monstrous hoax as being the gospel truth.”

Milbauer regards the episode as an unfortunate but telling example of misguided science and uses it in class discussions. “I explain this is the trap the [other side] is building,” he says.

Some teachers would disagree, however. In comments in the NESTA survey, a handful of teachers called climate change “just a theory like evolution” or said they firmly believed that opposing views should be presented with equal weight.

Given the ongoing and noisy national debate over climate change, it’s not surprising that those disagreements are seeping into K-12 schools, too. Science educators are scrambling to figure out how to deliver top-quality instruction without being sucked into the maelstrom. The issue is acute in Louisiana, which enacted a law in 2008 that lists climate change along with evolution as “controversial” subjects that teachers and students alike can challenge in the classroom without fear of reprisal.

A hotter climate? The phrase “climate change” came up often when NESTA asked its teacher members what classroom concepts trigger outside concerns. SOURCE: ROBERTA KILLEEN JOHNSON, NATIONAL EARTH SCIENCE TEACHERS ASSOCIATION

In Los Alamitos, the course will follow the curriculum laid out by the nonprofit College Board for its Advanced Placement (AP) course in environmental science, which presents the scientific evidence for climate change. This curriculum, which prepares students to take an end-of-year test for college credit, is what irritated Jeffrey Barke, a Los Alamitos school board member and physician who led the push to revise the district’s policies after learning about the course. Barke has spoken publicly about his concern that “liberal faculty” members would use the course to present global warming as “dogma.”

Science department head Currie criticizes the board’s new policy and feels that it may confuse students when they answer multiple-choice questions relating to climate change on the final AP exam. “When a kid comes across that on the AP test, what are they supposed to bubble?” she asks. “The fact, or [Barke’s] belief that it’s not a fact?” The school board, however, has said that the new policy is simply a way to prevent political bias from entering the classroom.

Currie and her colleagues are spending the summer working up a lesson plan for the new course, but she isn’t sure what will satisfy the board. “I’m going to fight for scientific facts being presented in the classroom,” she says. “I want to keep politics out.”

The extent to which politics is affecting geoscience courses around the country is hard to measure, Rosenau says: “Just like with evolution, it’s difficult to know what a given teacher in a given classroom is teaching.”

To improve the quality of that instruction, both CIRES and NESTA are trying to put up-to-date, data-rich climate science materials into the hands of teachers and students to supplement textbooks. They’re not the only ones; even government agencies such as the National Oceanic and Atmospheric Administration, spurred by language in the 2007 America COMPETES Act about their role in improving science education, have beefed up their teacher training programs.

But it’s not enough to say that “you just need to teach people more,” Rosenau says. Teachers also have to learn how to defend themselves against parents or administrators wearing “ideological blinders,” he says. CIRES has analyzed the strategies that teachers used in the creationism debates and repurposed them for discussions about climate change. That includes citing state science standards—30 states include climate science in their description of what should be taught—and enlisting the support of administrators before tackling the subject in class.

Those who have taught geoscience or environmental science may feel more confident than colleagues who teach general physical science in managing a classroom discussion. Parents and students trying to poke holes in what they are being taught often “can’t articulate what the opposing view even is,” says Karen Lionberger, director of curriculum and content development for AP Environmental Science in Duluth, Georgia.

Of course, some attacks on climate change come from well-heeled sources. In 2009, the Heartland Institute, which has received significant funding from Exxon-Mobil, expanded its audience beyond teachers and students with a pamphlet, called The Skeptic’s Handbook, mailed to the presidents of the country’s 14,000 public school boards.

Heartland Institute senior fellow James Taylor, who sent out the pamphlet, says the underlying message is that educators need “to understand that there is quite a bit that remains to be learned” about climate change. Taylor also applauds the actions of the Los Alamitos school board, saying that “if the science is unsettled on any topic, of course you should present all points of view.”

The AP course itself doesn’t take a position on the issue, Lionberger says. The handful of multiple-choice questions on the final exam relating to climate change are not “slanted in any way,” she says, and none explicitly asks whether climate change is occurring. But because AP courses can be taken for college credit, she says, “we’re going to follow what colleges and universities are doing” by teaching students about the factors that contribute to climate change and its effects on the planet. Although researchers are always adding to that pool of knowledge, she says “for now, we will fall on the side of consensus science.”

July 31, 2011

By BRUCE E. LEVINE

Bruce E. Levine is a clinical psychologist and author of Get Up, Stand Up: Uniting Populists, Energizing the Defeated, and Battling the Corporate Elite (Chelsea Green, 2011).

Traditionally, young people have energized democratic movements. So it is a major coup for the ruling elite to have created societal institutions that have subdued young Americans and broken their spirit of resistance to domination.

Young Americans, even more so than older Americans, appear to have acquiesced to the idea that the corporatocracy can completely screw them and that they are helpless to do anything about it. A 2010 Gallup poll asked Americans, “Do you think the Social Security system will be able to pay you a benefit when you retire?” Among 18- to 34-year-olds, 76 percent said no. Yet despite their lack of confidence in the availability of Social Security for them, few have demanded it be shored up by more fairly payroll-taxing the wealthy; most appear resigned to having more money deducted from their paychecks for Social Security, even though they don’t believe it will be around to benefit them.

How exactly has American society subdued young Americans?

1. Student-Loan Debt. Large debt, and the fear it creates, is a pacifying force. There was no tuition at the City University of New York when I attended one of its colleges in the 1970s, a time when tuition at many U.S. public universities was so affordable that it was easy to get a B.A. and even a graduate degree without accruing any student-loan debt. While those days are gone in the United States, public universities continue to be free in the Arab world and are either free or with very low fees in many countries throughout the world. The millions of young Iranians who risked getting shot to protest their disputed 2009 presidential election, the millions of young Egyptians who risked their lives earlier this year to eliminate Mubarak, and the millions of young Americans who demonstrated against the Vietnam War all had in common the absence of pacifying huge student-loan debt.

Today in the United States, two-thirds of graduating seniors at four-year colleges have student-loan debt, including over 62 percent of public university graduates. While average undergraduate debt is close to $25,000, I increasingly talk to college graduates with closer to $100,000 in student-loan debt. During the time in one’s life when it should be easiest to resist authority because one does not yet have family responsibilities, many young people worry about the cost of bucking authority, losing their job, and being unable to pay an ever-increasing debt. In a vicious cycle, student debt has a subduing effect on activism, and political passivity makes it more likely that students will accept such debt as a natural part of life.

2. Psychopathologizing and Medicating Noncompliance. In 1955, Erich Fromm, the then widely respected anti-authoritarian leftist psychoanalyst, wrote, “Today the function of psychiatry, psychology and psychoanalysis threatens to become the tool in the manipulation of man.” Fromm died in 1980, the same year that an increasingly authoritarian America elected Ronald Reagan president, and an increasingly authoritarian American Psychiatric Association added to their diagnostic bible (then the DSM-III) disruptive mental disorders for children and teenagers such as the increasingly popular “oppositional defiant disorder” (ODD). The official symptoms of ODD include “often actively defies or refuses to comply with adult requests or rules,” “often argues with adults,” and “often deliberately does things to annoy other people.”

Many of America’s greatest activists, including Saul Alinsky (1909–1972), the legendary organizer and author of Reveille for Radicals and Rules for Radicals, would today certainly be diagnosed with ODD and other disruptive disorders. Recalling his childhood, Alinsky said, “I never thought of walking on the grass until I saw a sign saying ‘Keep off the grass.’ Then I would stomp all over it.” Heavily tranquilizing antipsychotic drugs (e.g. Zyprexa and Risperdal) are now the highest grossing class of medication in the United States ($16 billion in 2010); a major reason for this, according to the Journal of the American Medical Association in 2010, is that many children receiving antipsychotic drugs have nonpsychotic diagnoses such as ODD or some other disruptive disorder (this is especially true of Medicaid-covered pediatric patients).

3. Schools That Educate for Compliance and Not for Democracy. Upon accepting the New York City Teacher of the Year Award on January 31, 1990, John Taylor Gatto upset many in attendance by stating: “The truth is that schools don’t really teach anything except how to obey orders. This is a great mystery to me because thousands of humane, caring people work in schools as teachers and aides and administrators, but the abstract logic of the institution overwhelms their individual contributions.” A generation ago, the problem of compulsory schooling as a vehicle for an authoritarian society was widely discussed, but as this problem has gotten worse, it is seldom discussed.

The nature of most classrooms, regardless of the subject matter, socializes students to be passive and directed by others, to follow orders, to take seriously the rewards and punishments of authorities, to pretend to care about things they don’t care about, and to believe that they are impotent to affect their situation. A teacher can lecture about democracy, but schools are essentially undemocratic places, and so democracy is not what is instilled in students. Jonathan Kozol in The Night Is Dark and I Am Far from Home focused on how school breaks us from courageous actions. Kozol explains how our schools teach us a kind of “inert concern” in which “caring,” in and of itself and without risking the consequences of actual action, is considered “ethical.” School teaches us that we are “moral and mature” if we politely assert our concerns, but the essence of school, its demand for compliance, teaches us not to act in a friction-causing manner.

4. “No Child Left Behind” and “Race to the Top.” The corporatocracy has figured out a way to make our already authoritarian schools even more authoritarian. Democrat-Republican bipartisanship has resulted in wars in Afghanistan and Iraq, NAFTA, the PATRIOT Act, the War on Drugs, the Wall Street bailout, and educational policies such as “No Child Left Behind” and “Race to the Top.” These policies are essentially standardized-testing tyranny that creates fear, which is antithetical to education for a democratic society. Fear forces students and teachers to constantly focus on the demands of test creators; it crushes curiosity, critical thinking, questioning authority, and challenging and resisting illegitimate authority. In a more democratic and less authoritarian society, one would evaluate the effectiveness of a teacher not by corporatocracy-sanctioned standardized tests but by asking students, parents, and a community if a teacher is inspiring students to be more curious, to read more, to learn independently, to enjoy thinking critically, to question authorities, and to challenge illegitimate authorities.

5. Shaming Young People Who Take Education, But Not Their Schooling, Seriously. In a 2006 survey in the United States, it was found that 40 percent of children between first and third grade read every day, but by fourth grade, that rate declined to 29 percent. Despite the anti-educational impact of standard schools, children and their parents are increasingly propagandized to believe that disliking school means disliking learning. That was not always the case in the United States. Mark Twain famously said, “I never let my schooling get in the way of my education.” In 1900, toward the end of Twain’s life, only 6 percent of Americans graduated high school. Today, approximately 85 percent of Americans graduate high school, but this is not good enough for Barack Obama, who told us in 2009, “And dropping out of high school is no longer an option. It’s not just quitting on yourself, it’s quitting on your country.”

The more schooling Americans get, however, the more politically ignorant they are of America’s ongoing class war, and the more incapable they are of challenging the ruling class. In the 1880s and 1890s, American farmers with little or no schooling created a Populist movement that organized America’s largest-scale working people’s cooperative, formed a People’s Party that received 8 percent of the vote in the 1892 presidential election, designed a “subtreasury” plan (that, had it been implemented, would have allowed easier credit for farmers and broken the power of large banks) and sent 40,000 lecturers across America to articulate it, and evidenced all kinds of sophisticated political ideas, strategies and tactics absent today from America’s well-schooled population. Today, Americans who lack college degrees are increasingly shamed as “losers”; however, Gore Vidal and George Carlin, two of America’s most astute and articulate critics of the corporatocracy, never went to college, and Carlin dropped out of school in the ninth grade.

6. The Normalization of Surveillance. The fear of being surveilled makes a population easier to control. While the National Security Agency (NSA) has received publicity for monitoring American citizens’ email and phone conversations, and while employer surveillance has become increasingly common in the United States, young Americans have become increasingly acquiescent to corporatocracy surveillance because, beginning at a young age, surveillance is routine in their lives. Parents routinely check Web sites for their kids’ latest test grades and completed assignments, and just like employers, are monitoring their children’s computers and Facebook pages. Some parents use the GPS in their children’s cell phones to track their whereabouts, and other parents have video cameras in their homes. Increasingly, I talk with young people who lack the confidence that they can even pull off a party when their parents are out of town, and so how much confidence are they going to have about pulling off a democratic movement below the radar of authorities?

7. Television. In 2009, the Nielsen Company reported that TV viewing in the United States is at an all-time high if one includes the following “three screens”: a television set, a laptop/personal computer, and a cell phone. American children average eight hours a day on TV, video games, movies, the Internet, cell phones, iPods, and other technologies (not including school-related use). Many progressives are concerned about the concentrated control of content by the corporate media, but the mere act of watching TV, regardless of the programming, is the primary pacifying agent (private-enterprise prisons have recognized that providing inmates with cable television can be a more economical method to keep them quiet and subdued than it would be to hire more guards).

Television is a dream come true for an authoritarian society: those with the most money own most of what people see; fear-based television programming makes people more afraid and distrustful of one another, which is good for the ruling elite who depend on a “divide and conquer” strategy; TV isolates people so they are not joining together to create resistance to authorities; and regardless of the programming, TV viewers’ brainwaves slow down, transforming them closer to a hypnotic state that makes it difficult to think critically. While playing video games is not as zombifying as passively viewing TV, such games have become for many boys and young men their only experience of potency, and this “virtual potency” is certainly no threat to the ruling elite.

8. Fundamentalist Religion and Fundamentalist Consumerism. American culture offers young Americans the “choices” of fundamentalist religion and fundamentalist consumerism. All varieties of fundamentalism narrow one’s focus and inhibit critical thinking. While some progressives are fond of calling fundamentalist religion the “opiate of the masses,” they too often neglect the pacifying nature of America’s other major fundamentalism. Fundamentalist consumerism pacifies young Americans in a variety of ways. Fundamentalist consumerism destroys self-reliance, creating people who feel completely dependent on others and who are thus more likely to turn over decision-making power to authorities, the precise mind-set that the ruling elite loves to see. A fundamentalist consumer culture legitimizes advertising, propaganda, and all kinds of manipulations, including lies; and when a society gives legitimacy to lies and manipulativeness, it destroys the capacity of people to trust one another and form democratic movements. Fundamentalist consumerism also promotes self-absorption, which makes it difficult to build the solidarity necessary for democratic movements.

These are not the only aspects of our culture that are subduing young Americans and crushing their resistance to domination. The food-industrial complex has helped create an epidemic of childhood obesity, depression, and passivity. The prison-industrial complex keeps young anti-authoritarians “in line” (now by the fear that they may come before judges such as the two Pennsylvania ones who took $2.6 million from private-industry prisons to ensure that juveniles were incarcerated). As Ralph Waldo Emerson observed: “All our things are right and wrong together. The wave of evil washes all our institutions alike.”

24/07/2011 13:38

Jucyllene Castilho, with information from UCDB

There are currently more than 600 indigenous students at universities in Mato Grosso do Sul, according to an estimate by the Rede de Saberes project. That number has been growing along with the number of indigenous postgraduates, who are pursuing master’s and even doctoral degrees inside or outside the state. On Friday (22), they meet at UCDB (Universidade Católica Dom Bosco) to consider creating a forum or a network of indigenous researchers.

The professors realized that the university needs to be prepared to receive these students and to create ways of recognizing the knowledge they already bring from their communities, that is, traditional knowledge, which the academy underestimated for a long time. The professors’ idea is to show that these peoples’ knowledge is as important as the university’s, so the academy should not try to “straitjacket” them in scientific methods, but should listen to them and engage in dialogue with them.

Since 1995, UCDB professors have worked on projects with indigenous populations in MS. They coordinate with professors from other universities in the state to expand these efforts and to better understand the needs of these communities.

Undergraduate studies

UCDB has 40 indigenous undergraduates and six indigenous students pursuing a master’s degree in Education or Local Development. The number is higher at universities in the interior of the state, which are closer to the indigenous reserves. These students belong to the Guarani, Terena, Kadiwéu, Ofaié and Kinikinau ethnic groups.

The project that works specifically with indigenous university students in MS is Rede de Saberes. The other research and outreach work with indigenous people is linked to the Programa Kaiowá Guarani, coordinated by Antonio Brand and developed by Neppi (Núcleo de Estudos e Pesquisas das Populações Indígenas), which is coordinated by Father George Lachnitt.

Rede de Saberes is a partnership among UCDB, UFMS (Universidade Federal de Mato Grosso do Sul), UFGD (Universidade Federal da Grande Dourados) and UEMS (Universidade Estadual do Mato Grosso do Sul). On the 17th, in Dourados, the partners held the III Encontro de Acadêmicos Índios e Política Partidária (Third Meeting of Indigenous Students and Party Politics), which brought together indigenous people, professors and representatives of invited institutions, as well as eight indigenous city councilors.

During the event, the legislators, students and community leaders discussed strategies to strengthen the presence of indigenous people in the state with the second-largest indigenous population in the country. Alongside the III Encontro, Rede de Saberes is also holding, over these two days, the 1° Encontro Temático Saberes Tradicionais e Científicos – Direito (First Thematic Meeting on Traditional and Scientific Knowledge: Law).

The event, attended by UCDB’s Neppi and by students from the Católica’s Law program, seeks to open a discussion among students about traditional and scientific knowledge, with the aim of bringing the two kinds of knowledge together. More information about Rede de Saberes is available by telephone at 3312-3351.

ScienceDaily (May 26, 2011) — All human beings may have the ability to understand elementary geometry, independently of their culture or their level of education.

A Mundurucu participant measuring an angle using a goniometer laid on a table. (Credit: © Pierre Pica / CNRS)

This is the conclusion of a study carried out by CNRS, Inserm, CEA, the Collège de France, Harvard University and Paris Descartes, Paris-Sud 11 and Paris 8 universities (1). It was conducted with Amazonian Indians living in an isolated area, who had not studied geometry at school and whose language contains little geometric vocabulary. Their intuitive understanding of elementary geometric concepts was compared with that of populations who, on the contrary, had been taught geometry at school. The researchers were able to demonstrate that all human beings may be capable of geometric intuition. This ability may, however, emerge only from the age of 6-7 years. It could be innate or instead acquired at an early age when children become aware of the space that surrounds them. This work is published in PNAS.

Euclidean geometry makes it possible to describe space using planes, spheres, straight lines, points, etc. Can geometric intuitions emerge in all human beings, even in the absence of geometric training?

To answer this question, the team of cognitive science researchers devised two experiments aimed at evaluating geometric performance, whatever the level of education. The first test consisted of answering questions on the abstract properties of straight lines, in particular their infinite character and their parallelism properties. The second test involved completing a triangle by indicating the position of its apex as well as the angle at this apex.

To carry out this study correctly, it was necessary to have participants that had never studied geometry at school, the objective being to compare their ability in these tests with others who had received training in this discipline. The researchers focused their study on Mundurucu Indians, living in an isolated part of the Amazon Basin: 22 adults and 8 children aged between 7 and 13. Some of the participants had never attended school, while others had been to school for several years, but none had received any training in geometry. In order to introduce geometry to the Mundurucu participants, the scientists asked them to imagine two worlds, one flat (plane) and the second round (sphere), on which were dotted villages (corresponding to the points in Euclidean geometry) and paths (straight lines). They then asked them a series of questions illustrated by geometric figures displayed on a computer screen.

Around thirty adults and children from France and the United States, who, unlike the Mundurucu, had studied geometry at school, were also subjected to the same tests.

The result was that the Mundurucu Indians proved to be fully capable of solving geometric problems, particularly in terms of planar geometry. For example, to the question “Can two paths never cross?”, a very large majority answered “Yes.” Their responses to the second test, that of the triangle, highlight the intuitive character of an essential property in planar geometry, namely the fact that the sum of the angles of a triangle is constant (equal to 180°).
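
The planar property behind the triangle test can be illustrated with a short sketch. It is not the researchers' procedure: the function names and the coordinate convention (a base lying on the x-axis from (0, 0) to (base_length, 0)) are assumptions made here for illustration only.

```python
import math

def apex_angle(angle_a_deg, angle_b_deg):
    """Third angle of a planar triangle, using the 180-degree angle sum."""
    if angle_a_deg <= 0 or angle_b_deg <= 0 or angle_a_deg + angle_b_deg >= 180:
        raise ValueError("base angles must be positive and sum to less than 180")
    return 180.0 - angle_a_deg - angle_b_deg

def apex_position(base_length, angle_a_deg, angle_b_deg):
    """Apex coordinates for a base from (0, 0) to (base_length, 0).

    angle_a_deg is the interior angle at (0, 0) and angle_b_deg the
    interior angle at (base_length, 0). Hypothetical helper for illustration.
    """
    apex = math.radians(apex_angle(angle_a_deg, angle_b_deg))
    a = math.radians(angle_a_deg)
    b = math.radians(angle_b_deg)
    # Law of sines: the side from (0, 0) to the apex is opposite angle b.
    side = base_length * math.sin(b) / math.sin(apex)
    return side * math.cos(a), side * math.sin(a)

if __name__ == "__main__":
    print(apex_angle(60, 60))
    print(apex_position(2.0, 60, 60))
```

Given the two base angles, the apex angle and the apex position are fully determined on a plane; on a sphere the angle sum exceeds 180°, so this calculation would not apply there.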

In a spherical universe, moreover, the Amazonian Indians gave better answers than the French or North American participants, who, by virtue of learning geometry at school, had acquired greater familiarity with planar geometry than with spherical geometry. Another interesting finding was that young North American children between 5 and 6 years old (who had not yet been taught geometry at school) had mixed test results, which could signify that a grasp of geometric notions is acquired from the age of 6-7 years.